Quantitative Testing with Concept Activation Vectors (TCAV): Been Kim, Google, 2018

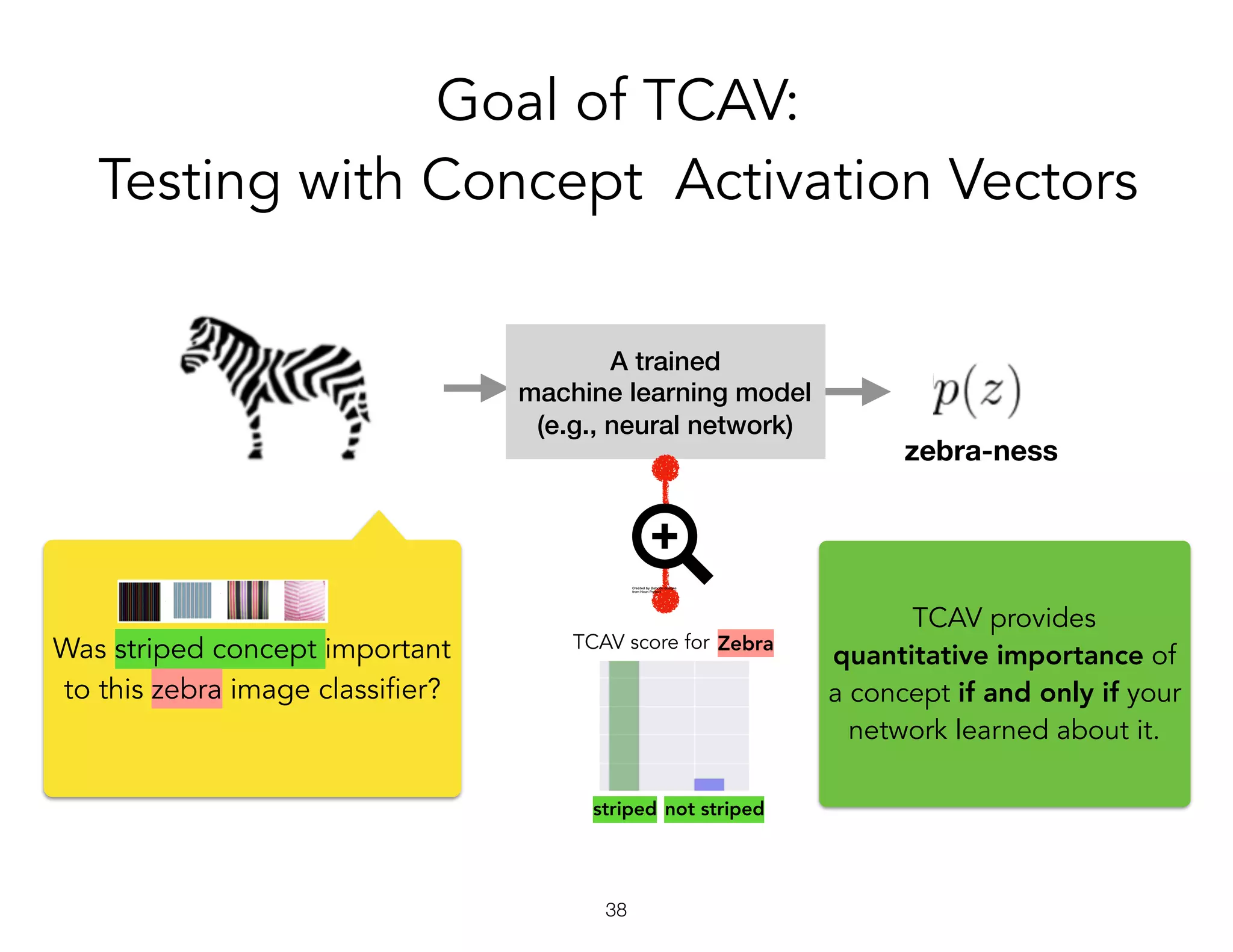

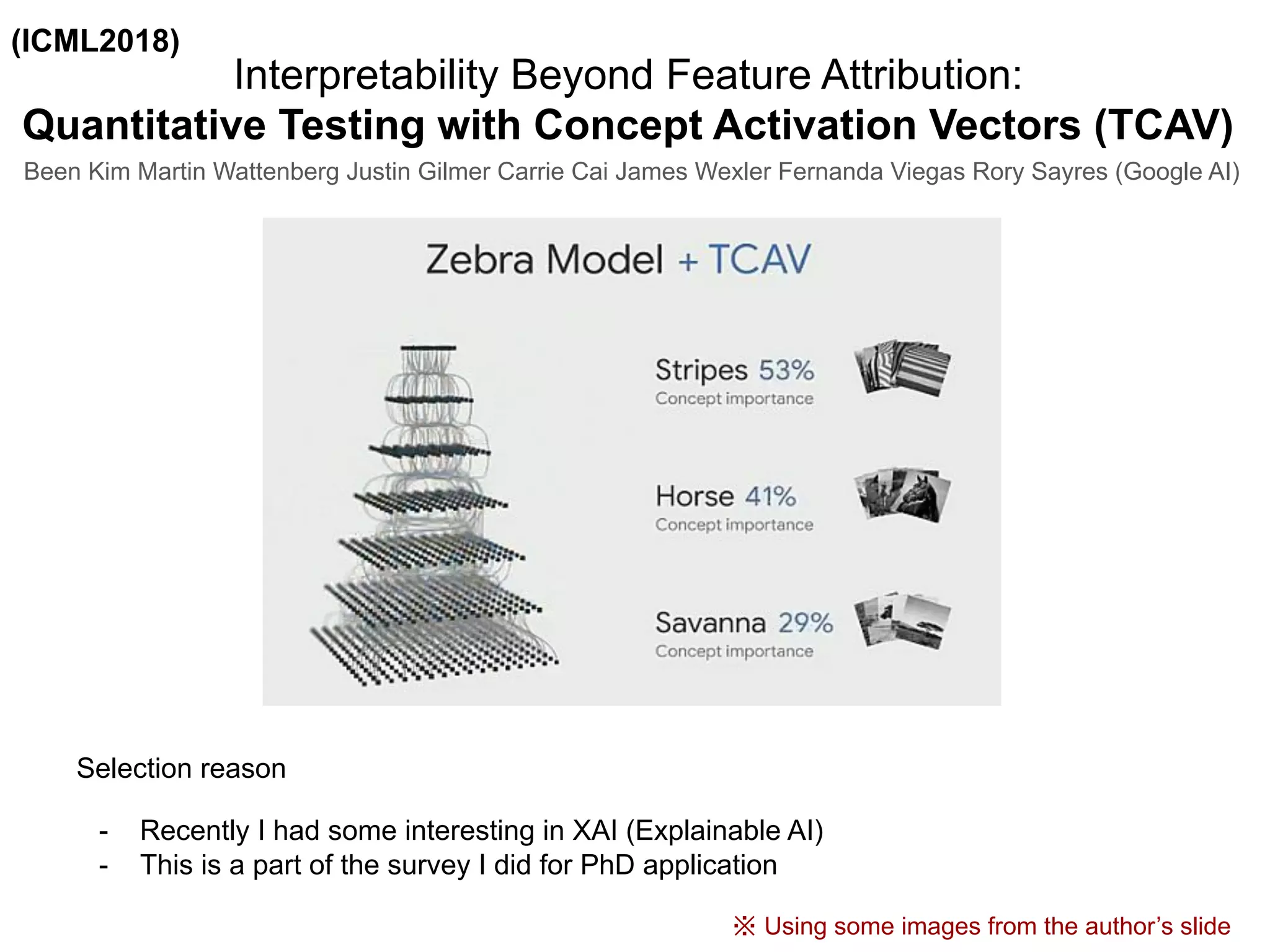

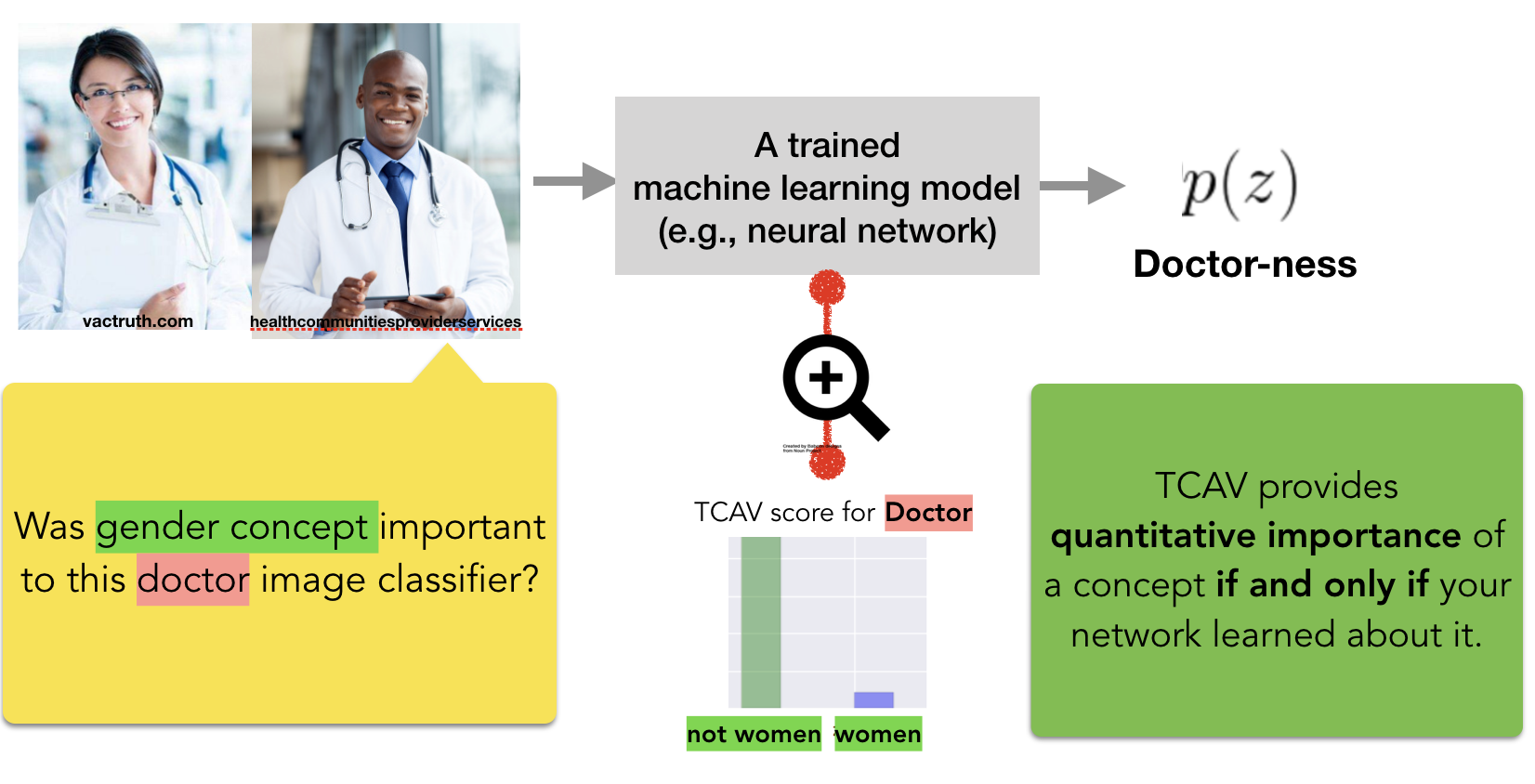

Interpretability Beyond Feature Attribution: Quantitative Testing with Concept Activation Vectors (TCAV)

The paper introduces Concept Activation Vectors (CAVs), which provide an interpretation of a neural net's internal state in terms of human-friendly concepts. The key idea is to view the high-dimensional internal state of a neural net as an aid, not an obstacle. The authors show how to use CAVs as part of a technique, Testing with CAVs (TCAV), that uses directional derivatives to quantify the degree to which a user-defined concept is important to a classification result: for example, how sensitive a prediction of "zebra" is to the presence of stripes.
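As a rough illustration of the directional-derivative idea described above, the following Python sketch computes a concept direction and a TCAV-style score on a toy model. Everything in it is an assumption made for illustration: the function names (`compute_cav`, `logit_gradient`, `tcav_score`), the two-layer toy logit, and the synthetic activations are hypothetical. The concept direction is taken as a simple difference of means, a stand-in for the normal of a trained linear classifier used in the paper. The score is the fraction of class examples whose logit increases when their activations move along the concept direction.

```python
import numpy as np

def compute_cav(concept_acts, random_acts):
    """Unit vector pointing from random activations toward concept activations.

    Simplification (assumption): difference of means, not a trained
    linear classifier.
    """
    direction = concept_acts.mean(axis=0) - random_acts.mean(axis=0)
    return direction / np.linalg.norm(direction)

def logit_gradient(acts, W1, w2):
    """Gradient of the toy logit h(a) = w2 . tanh(W1 a) with respect to a.

    acts has shape (n, d); the result has shape (n, d), one gradient per row.
    """
    hidden = np.tanh(acts @ W1.T)              # (n, hidden_units)
    return ((1.0 - hidden ** 2) * w2) @ W1     # chain rule through tanh

def tcav_score(class_acts, cav, W1, w2):
    """Fraction of class examples with a positive directional derivative."""
    sensitivities = logit_gradient(class_acts, W1, w2) @ cav
    return float(np.mean(sensitivities > 0))

# Synthetic stand-ins for layer activations (hypothetical data).
rng = np.random.default_rng(0)
d, hidden_units = 8, 16
random_acts = rng.normal(size=(50, d))
concept_acts = rng.normal(size=(50, d)) + 0.8   # e.g. "striped" images
class_acts = rng.normal(size=(100, d))          # e.g. "zebra" images
W1 = rng.normal(size=(hidden_units, d))
w2 = rng.normal(size=hidden_units)

cav = compute_cav(concept_acts, random_acts)
score = tcav_score(class_acts, cav, W1, w2)
print(f"TCAV-style score: {score:.2f}")
```

On a real model, the activations and gradients would come from a chosen layer of the network rather than from this toy function.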

Using the domain of image classification as a testing ground, the authors describe how CAVs may be used to explore hypotheses and generate insights for a standard image classification network as well as a medical application.

Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems … How do humans understand explanations from machine learning systems? An evaluation of the human interpretability of.

Introduction to TCAV (ICML 2018, PDF)

What is TCAV? Testing with Concept Activation Vectors (TCAV) is a new interpretability method for understanding what signals a neural network model uses for prediction. Methods to detect concepts in the internal representations of deep neural networks (DNNs), such as network dissection, feature visualization, and testing with concept activation vectors, are argued to provide evidence that DNNs are able to learn non-trivial relations between concepts. A 56-minute talk by Been Kim of Google, presented at the Center for Language & Speech Processing (CLSP) at JHU in 2018, explores quantitative testing with concept activation vectors (TCAV).

