Interpretable Paper 3 Interpretability Beyond Feature Attribution

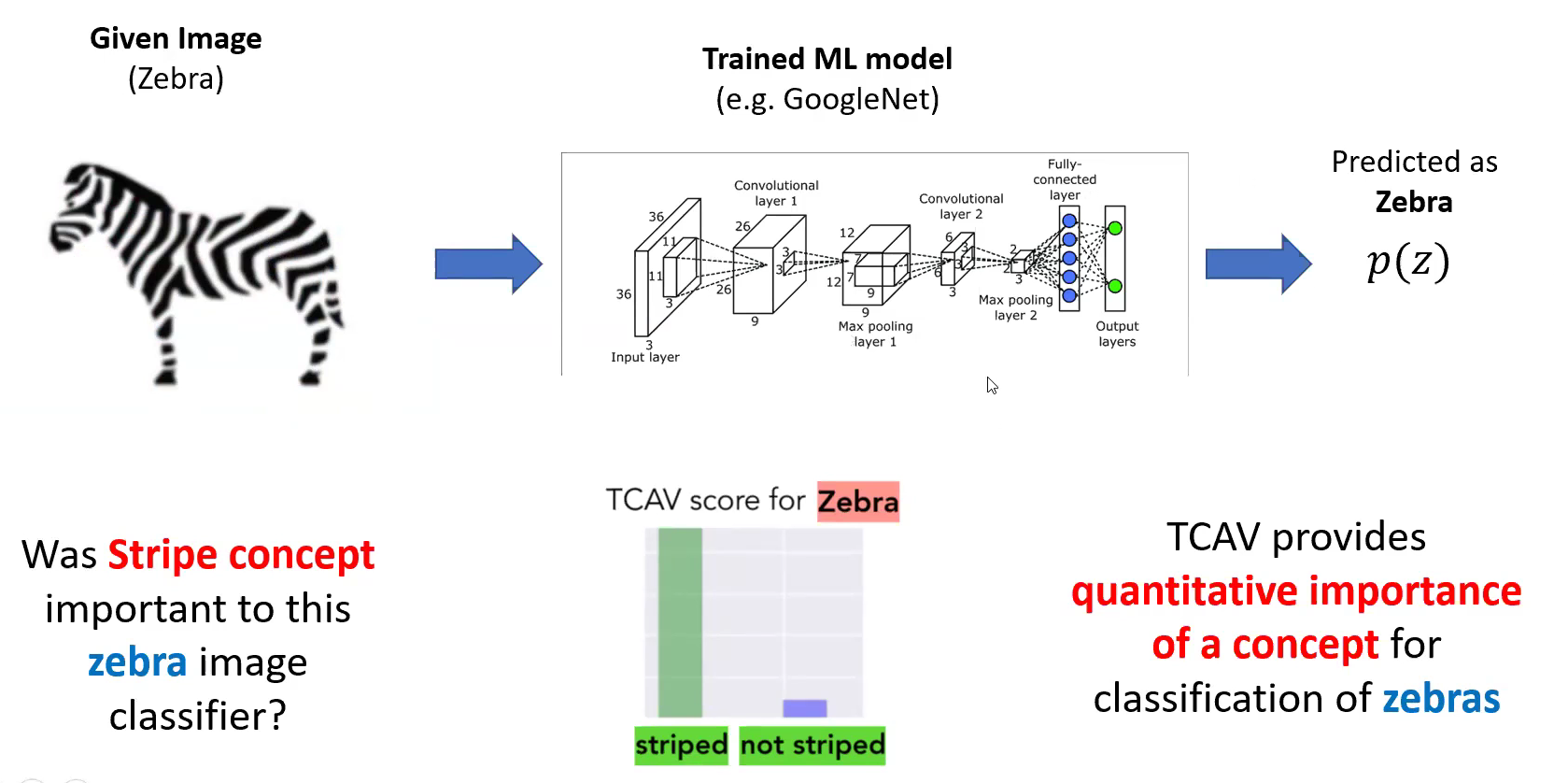

The paper is titled "Interpretability Beyond Feature Attribution: Quantitative Testing with Concept Activation Vectors (TCAV)", by Been Kim and 6 other authors. Its main result is a new linear interpretability method, Quantitative Testing with CAVs (TCAV), outlined in Figure 1. TCAV uses directional derivatives to quantify how sensitive a model's prediction is to an underlying high-level concept, which is learned as a concept activation vector (CAV).
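To make the description above concrete, here is a minimal sketch in Python of the two steps it names: learning a CAV and scoring sensitivity with directional derivatives. It is not the authors' implementation. The function names (`train_cav`, `logit_gradient`, `tcav_score`), the synthetic activations, and the toy class logit `v . tanh(W a)` are all assumptions made for illustration. In a real network, the activations would come from a chosen layer and the gradient from automatic differentiation. The sketch also omits the statistical testing over multiple random sets that the paper's title refers to.

```python
import numpy as np

def train_cav(concept_acts, random_acts, steps=500, lr=0.1):
    """Fit a logistic-regression separator between concept and random
    activations; the CAV is its unit-norm weight vector, pointing
    toward the concept examples."""
    X = np.vstack([concept_acts, random_acts])
    y = np.concatenate([np.ones(len(concept_acts)), np.zeros(len(random_acts))])
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))   # predicted P(concept)
        w -= lr * (X.T @ (p - y)) / len(y)        # gradient step on weights
        b -= lr * np.mean(p - y)                  # gradient step on bias
    return w / np.linalg.norm(w)

def logit_gradient(acts, W, v):
    """Gradient of the toy class logit v . tanh(W a) with respect to
    each activation vector a (hypothetical stand-in for a real model head)."""
    hidden = np.tanh(acts @ W.T)                  # shape (n, h)
    return ((1.0 - hidden ** 2) * v) @ W          # shape (n, d)

def tcav_score(acts, cav, W, v):
    """Fraction of inputs whose directional derivative along the CAV is positive."""
    sensitivity = logit_gradient(acts, W, v) @ cav
    return float(np.mean(sensitivity > 0))

# Synthetic data (assumption): the concept shifts activations along axis 0.
rng = np.random.default_rng(0)
dim = 8
direction = np.zeros(dim)
direction[0] = 1.0
concept_acts = rng.normal(size=(100, dim)) + 3.0 * direction
random_acts = rng.normal(size=(100, dim))

cav = train_cav(concept_acts, random_acts)

# Toy head with random weights, and activations for inputs of the class of interest.
W = rng.normal(size=(16, dim))
v = rng.normal(size=16)
class_acts = rng.normal(size=(50, dim))

score = tcav_score(class_acts, cav, W, v)
print(f"TCAV score: {score:.2f}")
```

A score near 1 would mean that moving activations toward the concept raises the class logit for almost every input, and a score near 0 would mean it lowers it. Logistic regression trained by plain gradient descent is used here only to keep the sketch dependency-free; any linear classifier would fill the same role.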

To address these challenges, the authors introduce concept activation vectors (CAVs), which provide an interpretation of a neural net's internal state in terms of human-friendly concepts. The key idea is to view the high-dimensional internal state of a neural net as an aid, not an obstacle. A separate paper contrasts and compares several techniques applied to stacked denoising autoencoders and deep belief networks trained on several vision datasets, and shows that good qualitative interpretations of the high-level features represented by such models are possible at the unit level. Another study proposes an interpretable deep learning framework that integrates a fascia line-based partition attention mechanism with anatomical train priors, termed the Fascia Line Attention and Interpretable Representation Network (FLAIRNet); its authors advocate for a deep integration of modern artificial intelligence with classical movement anatomy theory.

Awesome Explainable AI is an up-to-date library of explainable and interpretable deep learning papers with code. A hands-on tutorial introduces two powerful and complementary approaches that go beyond traditional feature attribution: concept-based explainable AI (C-XAI) and mechanistic interpretability. The TCAV paper presents evidence that CAVs do correspond to their intended concepts, and that they can be used both in standard image classification and in specialized medical applications. It offers a new way to explain which concepts a model's prediction is based on. I think it is very well written: it develops its new concepts with sound logic and makes otherwise ambiguous concepts quantitatively measurable.

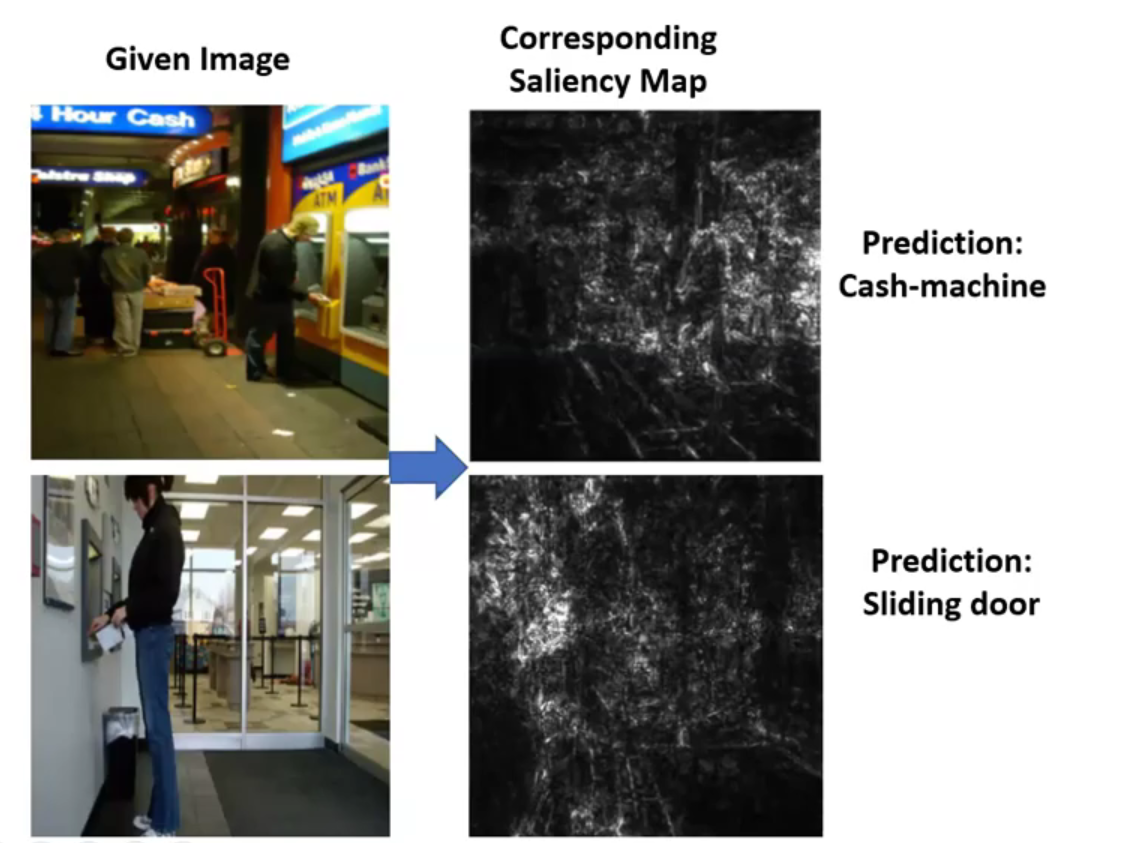

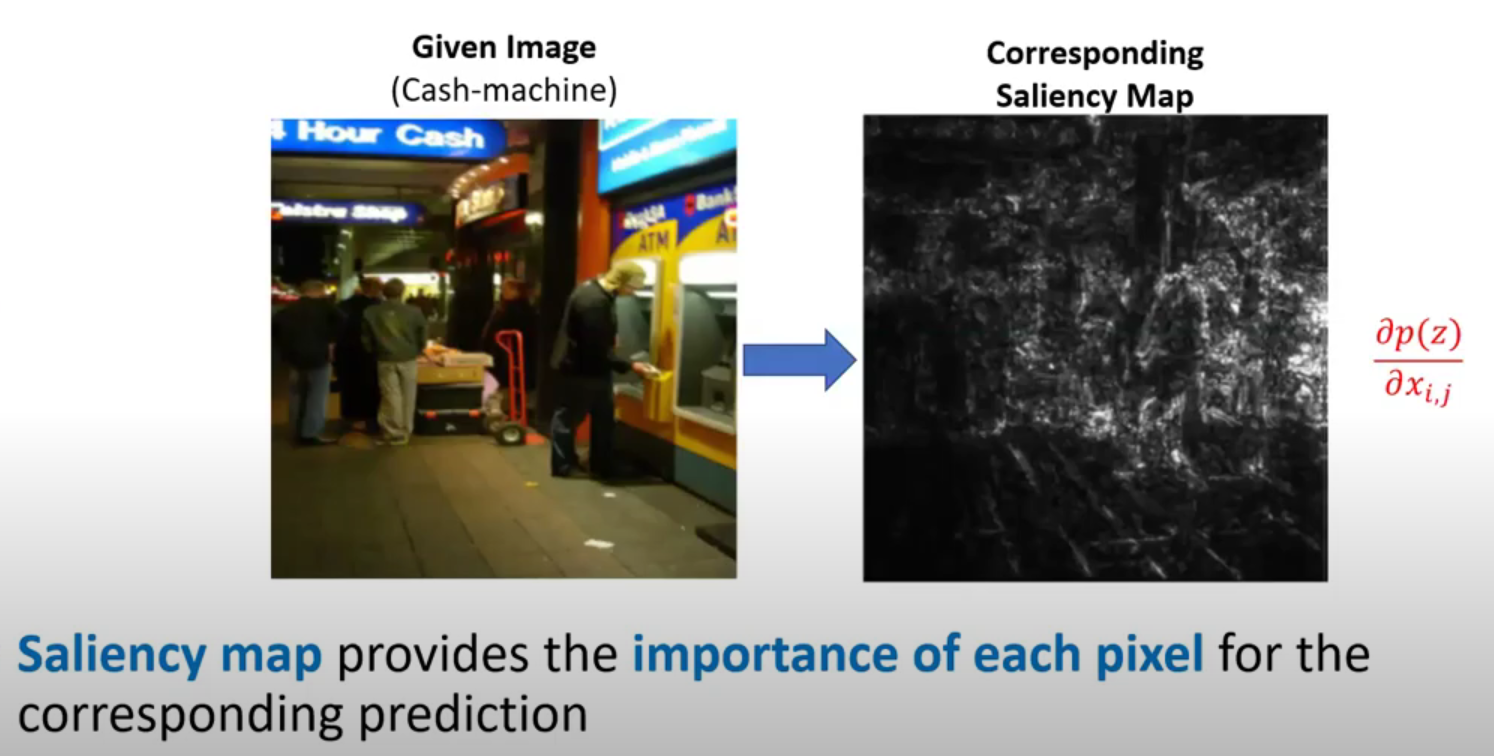

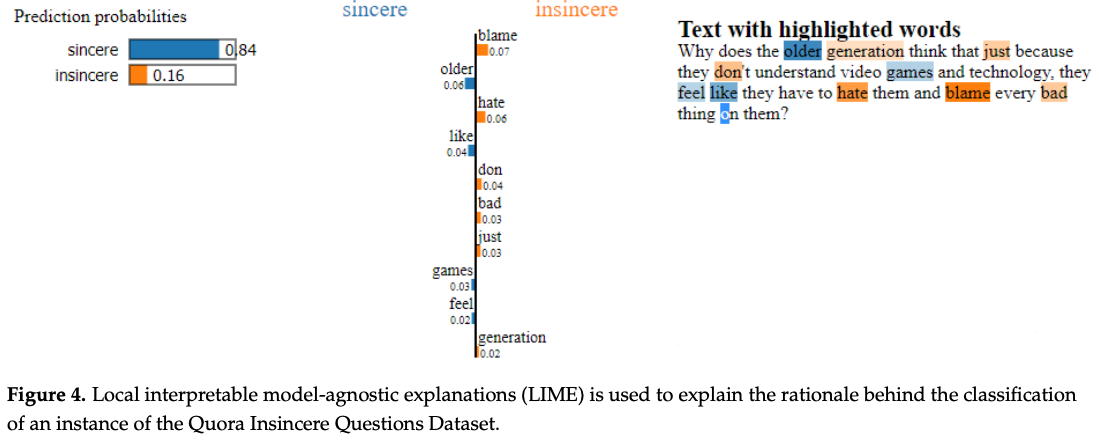

