Scale and Deploy LLMs in Production Environments

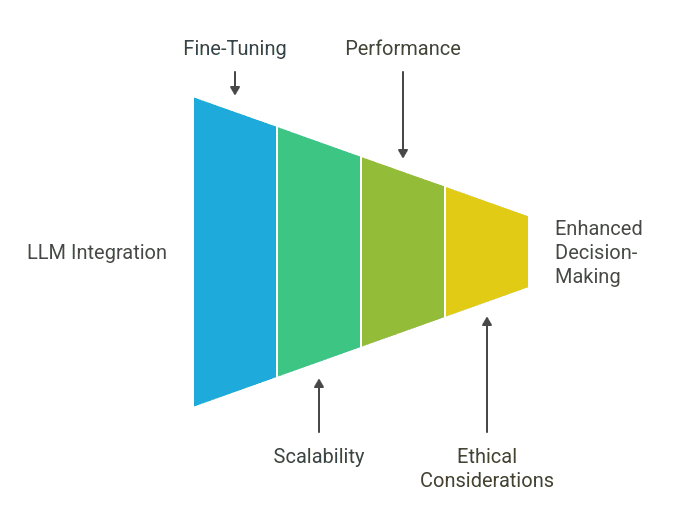

A comprehensive guide to deploying large language models (LLMs) in production environments, covering architectures, optimization techniques, monitoring, and …

Deploying an LLM in production requires robust, scalable infrastructure to handle the model's computational demands. LLMs are resource-intensive, so setting up the right environment is critical for efficient operation and a seamless user experience.
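
To put a rough number on those computational demands, here is a back-of-the-envelope sketch of GPU memory needs. It assumes a decoder-only transformer served in 16-bit precision and counts only the model weights and the key/value (KV) cache; activations, framework overhead, and memory fragmentation are ignored. The `ModelShape` class, the helper functions, and the model shape in the example are hypothetical and illustrative, not a published specification.

```python
from dataclasses import dataclass


@dataclass
class ModelShape:
    """Hypothetical shape of a decoder-only transformer (illustrative values only)."""
    n_params: float   # total parameter count
    n_layers: int     # number of transformer layers
    n_kv_heads: int   # key/value heads (fewer than query heads with grouped-query attention)
    head_dim: int     # dimension of each attention head


def weight_bytes(model: ModelShape, bytes_per_param: float = 2.0) -> float:
    """Memory for the weights alone; 2 bytes/param corresponds to FP16/BF16."""
    return model.n_params * bytes_per_param


def kv_cache_bytes(model: ModelShape, seq_len: int, batch_size: int,
                   bytes_per_value: float = 2.0) -> float:
    """KV cache: one key and one value vector per layer, per KV head, per token."""
    return (2 * model.n_layers * model.n_kv_heads * model.head_dim
            * seq_len * batch_size * bytes_per_value)


def estimate_gib(model: ModelShape, seq_len: int, batch_size: int,
                 bytes_per_value: float = 2.0) -> float:
    """Weights + KV cache in GiB; ignores activations and framework overhead."""
    total = (weight_bytes(model, bytes_per_value)
             + kv_cache_bytes(model, seq_len, batch_size, bytes_per_value))
    return total / 2**30


if __name__ == "__main__":
    # Assumed shape: an 8B-parameter model with grouped-query attention.
    model = ModelShape(n_params=8e9, n_layers=32, n_kv_heads=8, head_dim=128)
    # 4 concurrent sequences of 8,192 tokens each.
    print(f"weights:  {weight_bytes(model) / 2**30:.1f} GiB")
    print(f"KV cache: {kv_cache_bytes(model, 8192, 4) / 2**30:.1f} GiB")
    print(f"total:    {estimate_gib(model, 8192, 4):.1f} GiB")
```

With these assumed numbers the script prints about 14.9 GiB for the weights, 4.0 GiB for the KV cache, and 18.9 GiB in total, before any runtime overhead. Note how the KV cache grows linearly with both context length and the number of concurrent sequences.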

How to Deploy LLMs in Production: Strategies, Pitfalls, and Best Practices

Learn how to deploy LLMs in production with a clear plan for infrastructure, automation, testing, and compliance. Discover practical strategies for scaling LLMs in production, including efficient compute optimization, cost-effective deployment techniques, and best practices for high-performance AI workloads. The course covers the architecture, infrastructure, operational practices, and economic realities of running LLMs in production at enterprise scale across thirteen chapters, each addressing a specific domain of production readiness. This course will teach you to create and customize blueprints for deploying LLMs in a scalable, cost-effective, and secure way that aligns with your enterprise goals.
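
As a rough illustration of what cost-effective deployment means in numbers, the sketch below converts a GPU-hour price and a measured throughput into a cost per million generated tokens. It assumes GPUs are billed per hour whether busy or idle, so low utilization directly raises the per-token cost. `cost_per_million_tokens` is a hypothetical helper, not part of any library, and the prices and throughput figures are placeholders rather than benchmarks.

```python
def cost_per_million_tokens(gpu_hourly_usd: float, num_gpus: int,
                            tokens_per_second: float,
                            utilization: float = 1.0) -> float:
    """Hypothetical helper: serving cost per 1M generated tokens.

    Assumes GPUs are billed per hour whether busy or idle. `tokens_per_second`
    is the aggregate throughput while busy; `utilization` is the fraction of
    wall-clock time the deployment is actually serving traffic.
    """
    if gpu_hourly_usd < 0 or num_gpus <= 0:
        raise ValueError("price must be non-negative and num_gpus positive")
    if tokens_per_second <= 0 or not 0 < utilization <= 1:
        raise ValueError("throughput must be positive and utilization in (0, 1]")
    hourly_cost = gpu_hourly_usd * num_gpus
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_cost / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Placeholder figures: one GPU at $2.00/hour serving 1,000 tokens/s while busy.
    busy = cost_per_million_tokens(2.00, 1, 1000)
    half_idle = cost_per_million_tokens(2.00, 1, 1000, utilization=0.5)
    print(f"fully utilized: ${busy:.2f} per 1M tokens")
    print(f"50% utilized:   ${half_idle:.2f} per 1M tokens")
```

With these placeholder numbers the script prints $0.56 and $1.11 per million tokens: sitting idle half the time doubles the effective cost, which is why batching requests and right-sizing capacity matter.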

How to Deploy and Manage LLMs – Meta AI Labs

In this hands-on guide, we'll take a closer look at the avenues for scaling your AI workloads from local proofs of concept to production-ready deployments, and walk you through the process of deploying models like Gemma 3 or Llama 3.1 at scale.

This course, Scale and Deploy LLMs in Production Environments, equips you with the skills to overcome the challenges of production deployment. Learn to build and tailor deployment blueprints for LLMs, ensuring they are scalable, cost-effective, secure, and aligned with your organization's objectives.

LLMOps (large language model operations) is the discipline of managing large language models in production. It encompasses the people, processes, tools, and governance needed to move LLMs from experiments into reliable, cost-effective, and auditable systems that deliver business value at scale. It can be thought of as the operational backbone that keeps AI applications running safely and…

Everything you need to deploy LLMs in production: inference frameworks, serving layers, scaling strategies, monitoring, and cost management.
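
For the scaling side, one common back-of-the-envelope approach (a swapped-in heuristic, not something this guide prescribes) applies Little's law: the average number of requests in flight equals the arrival rate times the mean time each request spends in the system. Dividing that by the number of concurrent requests one replica can serve, with some headroom, gives a starting replica count. `replicas_needed` and its parameters are hypothetical, and the sketch assumes latency stays roughly constant as load grows, which real LLM servers only approximate, so measure per-replica concurrency under your own latency target.

```python
import math


def replicas_needed(requests_per_second: float, mean_latency_s: float,
                    max_concurrency_per_replica: int,
                    target_utilization: float = 0.7) -> int:
    """Hypothetical sizing helper based on Little's law (L = lambda * W).

    - requests_per_second: expected arrival rate (lambda)
    - mean_latency_s: mean end-to-end time per request (W)
    - max_concurrency_per_replica: concurrent requests one replica can serve
      within your latency target (measure this; it is not a universal constant)
    - target_utilization: fraction of that capacity to plan for, leaving
      headroom for bursts
    """
    if requests_per_second < 0 or mean_latency_s <= 0:
        raise ValueError("rate must be non-negative and latency positive")
    if max_concurrency_per_replica <= 0 or not 0 < target_utilization <= 1:
        raise ValueError("concurrency must be positive, utilization in (0, 1]")
    in_flight = requests_per_second * mean_latency_s  # Little's law
    usable_per_replica = max_concurrency_per_replica * target_utilization
    # Always keep at least one replica running.
    return max(1, math.ceil(in_flight / usable_per_replica))


if __name__ == "__main__":
    # Placeholder load: 20 requests/s, 4 s mean latency, 16 concurrent slots per replica.
    print(replicas_needed(20, 4.0, 16))       # 80 in flight / 11.2 usable per replica
    print(replicas_needed(20, 4.0, 16, 1.0))  # same load with no headroom
```

With these placeholder figures the script prints 8, then 5: planning for 70% utilization (an arbitrary illustrative choice) costs three extra replicas in exchange for burst headroom. In practice, autoscalers such as the Kubernetes Horizontal Pod Autoscaler adjust replica counts from observed metrics rather than from a one-off formula like this.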

