Deploying LLMs For Production Advanced LLMOps Deploying And Managing

Gain practical skills in MLOps with MLflow for effective model management and deployment. Conduct cost-benefit analyses and apply strategic planning for economical AI project management. Implement the latest LLM optimization and scaling techniques to enhance model performance. Deploying large language models in production is a complex process, but with the right planning and tools, it can unlock powerful AI capabilities for your business.

Advanced LLMOps Deploying And Managing LLMs In Production Softarchive

In this course, learn the advanced techniques and best practices for deploying and monitoring LLMs in production environments. Explore LLM deployment options, handling API limitations, performance monitoring techniques, prompt management, addressing hallucinations, and more. Implement and manage the operational lifecycle of large language models (LLMs) in production environments. This course covers advanced techniques for infrastructure management, model deployment, performance optimization, and monitoring specific to the scale and complexity of LLMs. This chapter will guide you through the key GCP services for LLMOps and provide a step-by-step example of deploying a large language model on GCP, along with sample code and templates.
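Handling API limitations, one of the topics listed above, often comes down to retrying rate-limited calls with exponential backoff. The following is a minimal sketch using only the Python standard library, not any particular provider's client: `RateLimitError` and `call_with_backoff` are hypothetical names introduced here for illustration, and the delay values are arbitrary assumptions.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error standing in for a provider's rate-limit response."""


def call_with_backoff(fn, max_attempts=5, base_delay=0.5, max_delay=8.0,
                      sleep=time.sleep):
    """Call fn(), retrying on RateLimitError with exponential backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The delay doubles on each attempt and is capped at max_delay.
            delay = min(max_delay, base_delay * (2 ** attempt))
            # Jitter spreads retries out so clients do not retry in lockstep.
            sleep(delay * random.uniform(0.5, 1.0))
```

In practice `fn` would wrap the actual request to the model endpoint. The `sleep` parameter is injectable so the retry schedule can be exercised in tests without real waiting.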

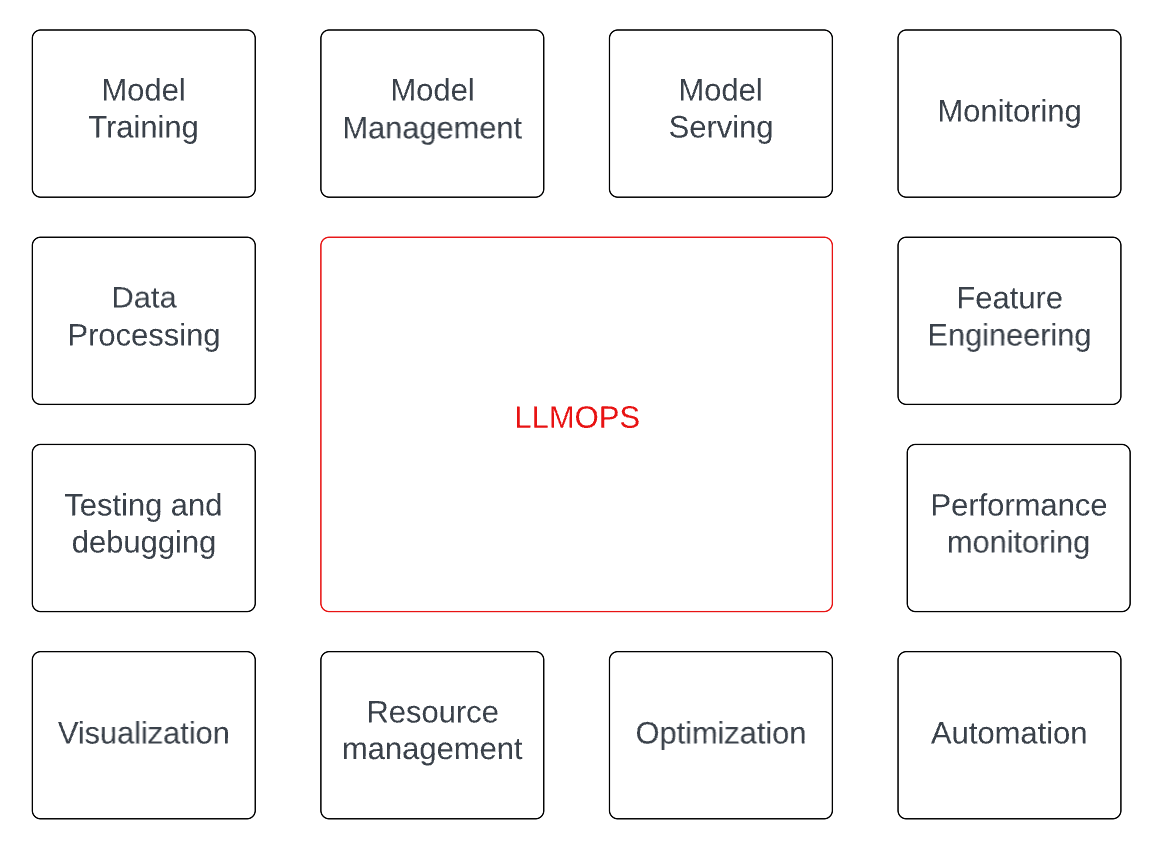

Day 8 LLMOps Managing LLMs In Production By Nikhil Kulkarni Gopenai

Deploying and managing large language models in production environments presents unique challenges that traditional machine learning operations (MLOps) methodologies often cannot fully address. Learn LLMOps best practices for deploying, managing, and scaling large language models in production environments securely. LLMOps is a multifaceted orchestration that navigates the complexities of developing and deploying LLMs and other solution components. In summary, LLMOps provides a cohesive workflow that facilitates the entire lifecycle of LLM applications, from initiation to production. As the field continues to evolve, the principles and practices outlined in this guide provide a foundation for organizations seeking to leverage the full potential of LLMs in production environments while managing resources effectively and maintaining high security standards.

Best Practices For Deploying LLMs In Production Qwak

Deploying LLMs With Octopus Octopus Blog

LLMOps DevOps Strategies For Deploying Large Language Models In
