Residual Vector Quantization (RVQ) From Scratch

Residual vector quantization (RVQ) is a data compression technique found in state-of-the-art neural audio codecs such as Google's SoundStream and Meta AI's (Facebook's) EnCodec. These codecs in turn form the backbone of generative audio models such as AudioLM (Google) and MusicGen (Meta/Facebook).

In practice, the first quantization layer tends to capture coarse (low-frequency) patterns, while later layers capture fine-grained (higher-frequency) patterns. The figure below shows how the quantizer stage is expanded in a residual quantizer; it is from this blog post (about halfway down the page).

A residual vector quantizer (RVQ) is a hierarchical, additive vector quantization framework: it represents a target vector as the sum of multiple codeword vectors, each selected from a stage-specific codebook, by recursively quantizing the residual error left at each stage.

Revqom is an end-to-end method that compresses feature dimensions via a simple bottleneck network followed by multi-stage residual vector quantization (RVQ). This allows only per-pixel code indices to be transmitted, reducing payloads from 8192 bits per pixel (bpp) of uncompressed 32-bit float features (i.e. 256 channels of 32 bits each) to 6 to 30 bpp per agent with minimal …
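A quick worked calculation shows where payload figures like these come from. It assumes C float channels per pixel and K RVQ stages, where stage k transmits one index into a codebook of N_k entries; these symbols and the example values K = 3, N_k = 1024 are hypothetical illustrations, not Revqom's published settings.

```latex
\begin{aligned}
\text{raw payload} &= C \times 32 \ \text{bits per pixel}, \qquad 8192 = 256 \times 32 \;\Rightarrow\; C = 256 \\
\text{RVQ payload} &= \sum_{k=1}^{K} \lceil \log_2 N_k \rceil \ \text{bits per pixel} \\
K = 3,\ N_1 = N_2 = N_3 = 1024 \;&\Rightarrow\; 3 \times 10 = 30 \ \text{bits per pixel}
\end{aligned}
```

Under those hypothetical settings the payload drops from 8192 to 30 bits per pixel, roughly a 273-fold reduction. The transmitted cost depends only on the number of stages and the codebook sizes, not on the feature dimension.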

Residual Vector Quantization Overview

Instead of trying to capture all information in a single quantization step, RVQ quantizes the original input with the first quantizer, then captures the remaining details by quantizing the residual (the difference between the input and the quantized output) with subsequent quantizers.

This coarse-to-fine structure also appears outside audio. In motion modeling, for example, residual vector-quantized variational autoencoders (RVQ-VAEs) have been employed to learn a coarse-to-fine representation of motion, with disentanglement further enhanced by integrating contrastive learning and a novel information leakage loss with codebook learning, so that content and style are organized across different codebooks.

Because each stage stores only an index into a codebook, and the approximation is refined iteratively, RVQ can enable large memory savings and faster inference in AI systems. In audio, RVQ encodes a signal into discrete tokens called codes; in effect, it acts as a tokenizer for audio.
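The residual procedure described above can be sketched in plain Python. This is a minimal sketch under stated assumptions: the codebooks are learned greedily, stage by stage, with ordinary k-means on the residuals left by earlier stages. That is one common way to build an RVQ, not necessarily how neural codecs such as SoundStream or EnCodec train their quantizers. The helper names `train_rvq`, `rvq_encode` and `rvq_decode` are hypothetical.

```python
import random

Vector = list[float]


def sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(v: Vector, codebook: list[Vector]) -> int:
    """Index of the codeword closest to v (the first one wins on ties)."""
    return min(range(len(codebook)), key=lambda i: sq_dist(v, codebook[i]))


def subtract(a: Vector, b: Vector) -> Vector:
    """Element-wise a - b, used to form residuals."""
    return [x - y for x, y in zip(a, b)]


def kmeans(data: list[Vector], k: int, iters: int, rng: random.Random) -> list[Vector]:
    """Plain Lloyd's k-means, used here (an assumption) to fit each stage's codebook."""
    centroids = [list(p) for p in rng.sample(data, k)]
    for _ in range(iters):
        clusters: list[list[Vector]] = [[] for _ in range(k)]
        for p in data:
            clusters[nearest(p, centroids)].append(p)
        for i, members in enumerate(clusters):
            if members:  # an empty cluster keeps its previous centroid
                centroids[i] = [sum(col) / len(members) for col in zip(*members)]
    return centroids


def train_rvq(data: list[Vector], num_stages: int, codebook_size: int,
              iters: int = 10, seed: int = 0) -> list[list[Vector]]:
    """Greedy training: each stage fits a codebook to the residuals of the earlier stages."""
    rng = random.Random(seed)
    residuals = [list(p) for p in data]
    codebooks = []
    for _ in range(num_stages):
        codebook = kmeans(residuals, codebook_size, iters, rng)
        codebooks.append(codebook)
        # Remove what this stage explains; the next stage sees only the leftover error.
        residuals = [subtract(r, codebook[nearest(r, codebook)]) for r in residuals]
    return codebooks


def rvq_encode(v: Vector, codebooks: list[list[Vector]]) -> list[int]:
    """Quantize v with the first codebook, then quantize each residual with the next one."""
    residual = list(v)
    codes = []
    for codebook in codebooks:
        idx = nearest(residual, codebook)
        codes.append(idx)
        residual = subtract(residual, codebook[idx])
    return codes


def rvq_decode(codes: list[int], codebooks: list[list[Vector]]) -> Vector:
    """The reconstruction is the sum of one selected codeword per stage."""
    out = [0.0] * len(codebooks[0][0])
    for idx, codebook in zip(codes, codebooks):
        out = [o + c for o, c in zip(out, codebook[idx])]
    return out


if __name__ == "__main__":
    rng = random.Random(42)
    data = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in range(200)]
    codebooks = train_rvq(data, num_stages=3, codebook_size=8)
    print("codes for first vector:", rvq_encode(data[0], codebooks))
    for n in range(1, len(codebooks) + 1):
        stages = codebooks[:n]
        mse = sum(sq_dist(p, rvq_decode(rvq_encode(p, stages), stages)) for p in data) / len(data)
        print(f"{n} stage(s): mean squared error {mse:.4f}")
```

Each entry of the list returned by `rvq_encode` is one stage's code index, i.e. one of the discrete tokens mentioned above, and decoding simply sums the selected codewords. Printing the error for a growing number of stages illustrates the coarse-to-fine behavior: under this greedy k-means scheme, each added stage can only lower (or keep) the training error.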

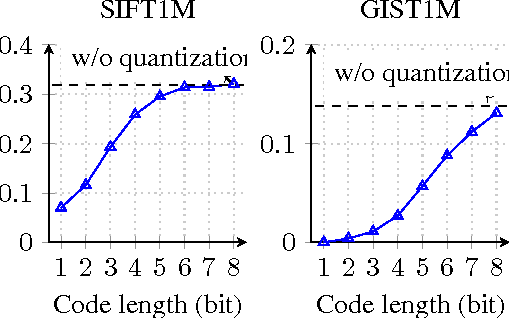
