Residual Vector Quantization Overview

Residual Vector Quantization: What's a Residual VQ?

An RVQ has layers of quantizers: the second layer tries to capture the residual (unexplained) variance left by the first layer, the third layer tries to capture what remains after the second, and so on. Residual vector quantization (RVQ) is an additive quantization technique in which a high-dimensional vector is encoded as the sum of codewords from multiple codebooks, each sequentially approximating the residual error left by the previous stages.
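The layered idea can be illustrated with a minimal NumPy sketch. It is a toy under stated assumptions, not any particular codec's implementation: the function names `rvq_encode` and `rvq_decode` are hypothetical, and the codebooks are random rather than learned (real systems train them, for example with k-means or jointly with a neural network).

```python
import numpy as np


def rvq_encode(x, codebooks):
    """Greedy RVQ: pick one codeword index per stage for the vector x."""
    residual = np.asarray(x, dtype=np.float64).copy()
    codes = []
    for codebook in codebooks:  # each codebook has shape (num_codewords, dim)
        # Nearest codeword to the current residual (squared Euclidean distance).
        distances = np.sum((codebook - residual) ** 2, axis=1)
        index = int(np.argmin(distances))
        codes.append(index)
        # The next stage only sees what this stage failed to explain.
        residual = residual - codebook[index]
    return codes, residual


def rvq_decode(codes, codebooks):
    """Reconstruct the vector as the sum of the selected codewords."""
    return np.sum([cb[i] for cb, i in zip(codebooks, codes)], axis=0)


rng = np.random.default_rng(0)
dim, num_stages, num_codewords = 8, 4, 16

# Assumption: random codebooks whose scale shrinks per stage, standing in
# for learned codebooks purely for illustration.
codebooks = [
    rng.normal(scale=0.5 ** stage, size=(num_codewords, dim))
    for stage in range(num_stages)
]

x = rng.normal(size=dim)
codes, residual = rvq_encode(x, codebooks)
x_hat = rvq_decode(codes, codebooks)

print("codes:", codes)
print("reconstruction error:", np.linalg.norm(x - x_hat))
```

The vector is stored as one small integer per stage (`codes`), and the error left after decoding is exactly the residual that remains after the last stage.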

Residual Vector Quantization (Towards Data Science)

Residual vector quantization (RVQ) is a data compression technique found in state-of-the-art neural audio codecs such as Google's SoundStream and Meta AI's EnCodec, which in turn form the backbone of generative audio models such as AudioLM (Google) and MusicGen (Meta). Put another way, RVQ is a technique for encoding audio into discrete tokens called codes; it works like a tokenizer for audio. In such a codec, an encoder first maps the audio to a vector. The quantizer then compresses this encoded vector through residual vector quantization, a concept originating in digital signal processing. Finally, the decoder takes this compressed signal and reconstructs it into an audio stream. A related method, residual vector product quantization (RVPQ), has been proposed to strike a better balance between accuracy and efficiency.

RVQ also appears outside audio coding. One line of work advances generative modeling by introducing a memory-efficient approach for high-fidelity sample generation using RVQ. In that setting, RVQ is described as a hierarchical quantization technique in which high-dimensional data vectors are approximated by a sum of codewords drawn sequentially from multiple codebooks, each operating on the residual error left by the preceding quantization stage. Residual vector product quantization (RVPQ) builds on this by constructing a residual hierarchy consisting of several ordered residual codebooks for each subspace. A survey of advances in RVQ discusses definitions of joint encoder optimality and joint decoder optimality, and elaborates and extends design techniques for RVQs with large numbers of stages and generally different encoder and decoder codebooks.
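The stage-by-stage description can be written compactly. The notation here is assumed for illustration and is not taken from any of the works mentioned: $x$ is the input vector, $C_1, \dots, C_M$ are the $M$ codebooks with $K$ codewords each, $q_m$ is the codeword chosen at stage $m$, and $r_m$ is the residual after stage $m$.

$$
\begin{aligned}
r_0 &= x, \\
q_m &= \operatorname*{arg\,min}_{c \in C_m} \lVert r_{m-1} - c \rVert^2, \qquad m = 1, \dots, M, \\
r_m &= r_{m-1} - q_m, \\
\hat{x} &= \sum_{m=1}^{M} q_m, \qquad x - \hat{x} = r_M .
\end{aligned}
$$

Under this greedy scheme, each vector is stored as $M$ indices, which costs $M \log_2 K$ bits, while the sum of codewords can take up to $K^M$ distinct values.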

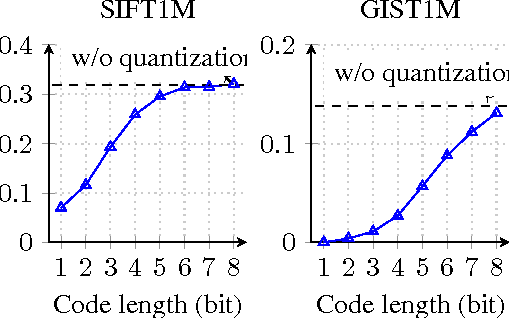

