Production Deep Learning with NVIDIA GPU Inference Engine

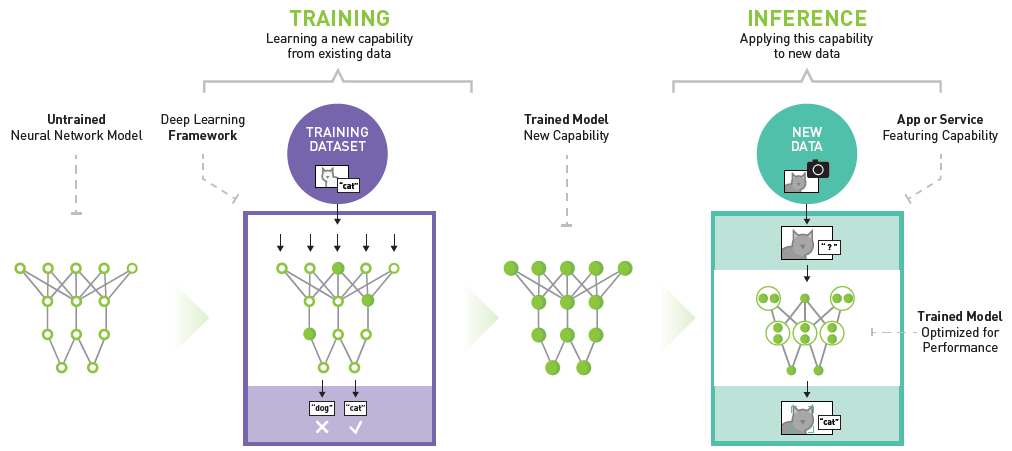

In this post, we discuss how you can use GIE to get the best efficiency and performance out of your trained deep neural network on a GPU-based deployment platform. Solving a supervised machine learning problem with deep neural networks involves a two-step process: training a network on labeled data, then deploying the trained network to run inference on new data. NVIDIA GPU Inference Engine (GIE) is a high-performance deep learning inference solution for production environments that maximizes performance and power efficiency for deploying deep neural networks.

NVIDIA Merlin is an open-source library providing end-to-end GPU-accelerated recommender systems, from feature engineering and preprocessing to training deep learning models and running inference in production. Power efficiency and speed of response are two key metrics for deployed deep learning applications, because they directly affect the user experience and the cost of the service provided. TensorRT is an SDK from NVIDIA that can optimize, quantize, and accelerate inference on NVIDIA GPUs. In this article, we'll walk through how to convert a PyTorch model into a TensorRT-optimized engine and benchmark its performance. In this paper, we will begin with a view of the end-to-end deep learning workflow and move into the details of taking AI-enabled applications from prototype to production deployments.
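Since speed of response is named above as a key metric, and benchmarking an optimized engine is part of the workflow, a small timing harness may help make the idea concrete. The following is only a sketch using the Python standard library: `benchmark` and `dummy_infer` are hypothetical names introduced for illustration, and `dummy_infer` stands in for whatever callable wraps a real model or engine. It is not taken from TensorRT or any NVIDIA tool.

```python
import statistics
import time
from typing import Callable, Dict, Sequence


def benchmark(infer: Callable[[Sequence[float]], object],
              batch: Sequence[float],
              warmup: int = 10,
              iters: int = 100) -> Dict[str, float]:
    """Time repeated calls to `infer` and summarize latency and throughput."""
    # Warm-up calls are not timed, so one-time costs (lazy initialization,
    # cache fills) do not skew the measured latencies.
    for _ in range(warmup):
        infer(batch)

    latencies_ms = []
    for _ in range(iters):
        start = time.perf_counter()
        infer(batch)
        # Assumption: `infer` returns only when the result is ready. With
        # asynchronous GPU execution, the callable would need to synchronize
        # (for example via torch.cuda.synchronize()) before returning.
        latencies_ms.append((time.perf_counter() - start) * 1000.0)

    latencies_ms.sort()
    total_s = sum(latencies_ms) / 1000.0
    p99_index = min(iters - 1, int(0.99 * iters))
    return {
        "mean_ms": statistics.fmean(latencies_ms),
        "p50_ms": statistics.median(latencies_ms),
        "p99_ms": latencies_ms[p99_index],
        # Items processed per second across all timed iterations.
        "throughput_per_s": (len(batch) * iters / total_s
                             if total_s > 0 else float("inf")),
    }


def dummy_infer(batch: Sequence[float]) -> list:
    # Hypothetical stand-in for a real inference call.
    return [x * 2.0 for x in batch]


if __name__ == "__main__":
    print(benchmark(dummy_infer, [0.0] * 32))
```

Reporting a tail percentile alongside the mean is a deliberate choice here: a deployed service is often judged by its slowest responses, not its average ones.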

Maximize GPU efficiency for deep learning with NVIDIA cuDNN's advanced techniques. Accelerate training and inference while mastering graph API fusion. We provide a hands-on walkthrough, which uses the NVIDIA Dynamo blueprint in the AI on EKS GitHub repo by AWS Labs to provision the infrastructure, configure monitoring, and install the NVIDIA Dynamo operator. A deep dive into NVIDIA's H100 architecture and the monitoring techniques required for production-grade LLM inference optimization. While GPUs have been instrumental in training LLMs, efficient inference is equally crucial for deploying these models in production environments. NVIDIA TensorRT, a high-performance deep learning inference optimizer and runtime, plays a vital role in accelerating LLM inference on CUDA-enabled GPUs.
