LLMOps: How to Use the NVIDIA TensorRT SDK for GPU Inference (Data Science, Machine Learning)

NVIDIA has announced the public release of TensorRT-LLM to accelerate and optimize inference performance for the latest LLMs on NVIDIA GPUs. TensorRT-LLM provides an easy-to-use Python API to define large language models (LLMs) and supports state-of-the-art optimizations to perform inference efficiently on NVIDIA GPUs.

Learn how to compare throughput and inference time by varying batch size and data precision, using both native PyTorch inference and the TensorRT runtime. Gain practical insights into optimizing GPU inference for machine learning models, with a focus on LLMOps techniques.

In this blog post, my goal was to demonstrate how state-of-the-art inference can be achieved using TensorRT-LLM. We covered everything from compiling an LLM to deploying the model in production.

The LLM API streamlines the process by managing model loading, optimization, and inference, all through a single `LLM` instance. A small model such as TinyLlama is enough to try the LLM API. In this how-to guide, we'll go end to end, from install to engine build to serving, so you can confidently deploy faster, cheaper inference on NVIDIA GPUs. This tutorial is written in a practical, solution-oriented style.
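As a rough illustration of the single-instance workflow described above, the following sketch uses the `LLM` and `SamplingParams` classes from the `tensorrt_llm` Python package. The Hugging Face model ID, the prompts, the sampling values, and the helper names `build_prompts` and `run_tinyllama` are assumptions chosen for this example, not details taken from the original post. Running it requires a supported NVIDIA GPU with TensorRT-LLM installed, so the import is kept inside the function.

```python
def build_prompts():
    """Return a few illustrative prompts (hypothetical examples)."""
    return [
        "Hello, my name is",
        "The capital of France is",
        "The future of AI is",
    ]


def run_tinyllama(prompts, max_tokens=32):
    """Generate completions through a single TensorRT-LLM LLM instance.

    The import lives inside the function because tensorrt_llm needs an
    NVIDIA GPU and a working TensorRT-LLM install to load.
    """
    from tensorrt_llm import LLM, SamplingParams

    # Assumed model ID: a TinyLlama chat checkpoint on the Hugging Face Hub.
    llm = LLM(model="TinyLlama/TinyLlama-1.1B-Chat-v1.0")

    # Assumed sampling settings, picked only for illustration.
    sampling = SamplingParams(temperature=0.8, top_p=0.95, max_tokens=max_tokens)

    # generate() accepts a list of prompts and returns one result per prompt.
    outputs = llm.generate(prompts, sampling)
    return [(out.prompt, out.outputs[0].text) for out in outputs]
```

On a suitable machine, `run_tinyllama(build_prompts())` should return a list of `(prompt, generated_text)` pairs. The first call is typically slow because the model is downloaded and an engine is prepared before any tokens are generated.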

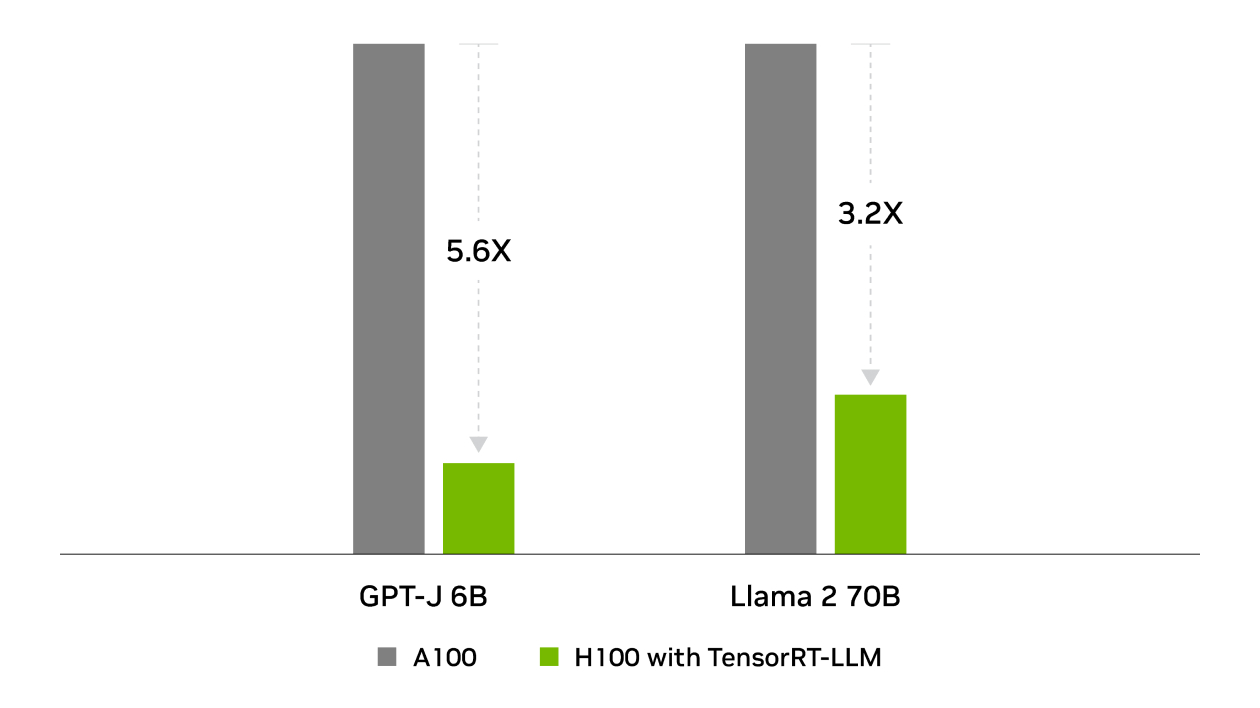

NVIDIA's TensorRT-LLM is a dedicated inference optimization toolkit designed to maximize LLM performance through hardware acceleration and fine-tuned software configurations. This blog provides a comprehensive guide to tuning TensorRT-LLM for optimal model serving.

Ship faster LLM apps on NVIDIA: a step-by-step TensorRT-LLM guide with real code, quantization tips, and comparisons with vLLM and TGI for AI builders. Whether you're an AI engineer, software developer, or researcher, this guide will give you the knowledge to leverage TensorRT-LLM for optimizing LLM inference on NVIDIA GPUs.

TensorRT-LLM optimization is claimed to improve inference speed by up to 300% while maintaining model accuracy. This guide provides step-by-step instructions to optimize your LLM deployments with proven techniques and real benchmarks.
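Performance claims like these are easiest to judge by measuring on your own hardware. The sketch below is a minimal, framework-agnostic timing harness for comparing latency and throughput across batch sizes. The `run_batch` callable is a hypothetical stand-in for whatever runs one batch (native PyTorch or a TensorRT engine), and the function names are assumptions made for this example.

```python
import time
from statistics import mean


def benchmark(run_batch, batch_sizes, repeats=5, warmup=1):
    """Time run_batch(batch_size) and report mean latency and throughput.

    run_batch is a hypothetical callable that processes one batch.
    GPU work is launched asynchronously, so a real run_batch should block
    until results are ready (with PyTorch, for example, by calling
    torch.cuda.synchronize() before returning).
    """
    results = {}
    for bs in batch_sizes:
        # Warm-up runs are excluded from timing (lazy init, caches, autotuning).
        for _ in range(warmup):
            run_batch(bs)
        timings = []
        for _ in range(repeats):
            start = time.perf_counter()
            run_batch(bs)
            timings.append(time.perf_counter() - start)
        latency = mean(timings)
        results[bs] = {
            "latency_s": latency,            # seconds per batch
            "throughput": bs / latency,      # items per second
        }
    return results


def speedup(baseline_latency_s, optimized_latency_s):
    """How many times faster the optimized run is than the baseline."""
    return baseline_latency_s / optimized_latency_s


def latency_reduction_pct(baseline_latency_s, optimized_latency_s):
    """Percentage by which latency dropped relative to the baseline."""
    return 100.0 * (1.0 - optimized_latency_s / baseline_latency_s)
```

For example, if a batch takes 4.0 s natively and 1.0 s after optimization, `speedup(4.0, 1.0)` is 4.0 while `latency_reduction_pct(4.0, 1.0)` is 75.0. A latency reduction can never exceed 100%, so larger percentage figures are best read as speedups. Running `benchmark` once per precision setting (for example FP32 and FP16) gives the batch-size and precision comparison in a single table.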

