Parameter-Efficient Fine-Tuning Methods for Pretrained Language Models

In this paper, we present a comprehensive and systematic review of parameter-efficient fine-tuning (PEFT) methods for pretrained language models (PLMs). We summarize these PEFT methods, discuss their applications, and outline future directions. In this analysis, different methods of fine-tuning with only a small number of parameters are compared on a large set of natural language processing tasks.

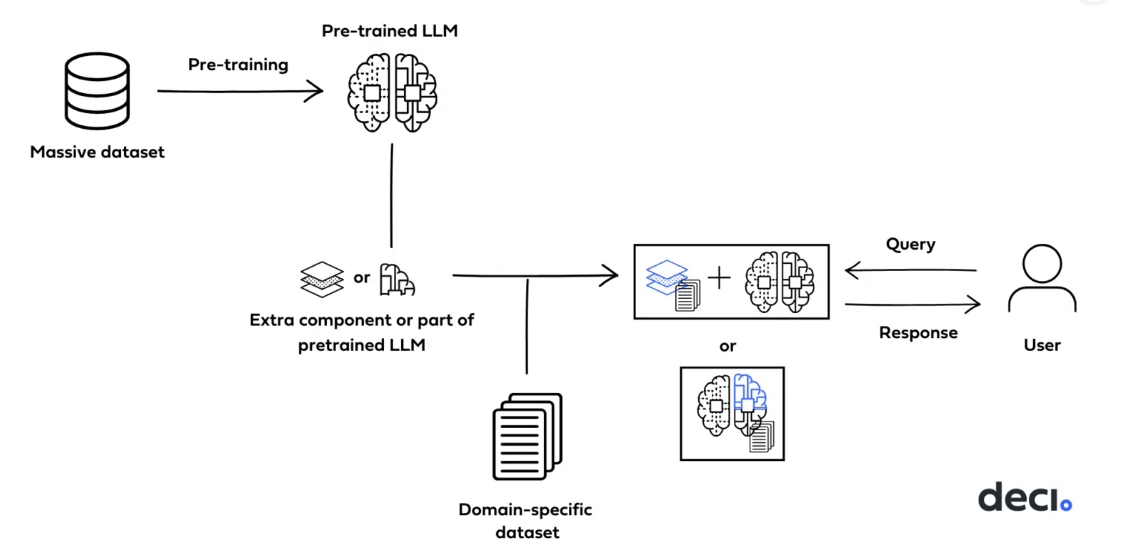

Fine-Tuning Methods of Large Language Models

Parameter-efficient fine-tuning (PEFT) methods are transformative for adapting large-scale PLMs to specific tasks without the exorbitant costs of full fine-tuning. PEFT offers a practical solution by efficiently adjusting the parameters of large pretrained models to suit various downstream tasks. We also conduct experiments using several representative PEFT methods to better understand their effectiveness in parameter efficiency and memory efficiency. In this survey, we delve into reparameterization-based PEFT methods, which aim to fine-tune LLMs with reduced computational costs while preserving their knowledge. We systematically analyze their design principles and divide these methods into six categories.
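To make the idea of reparameterization concrete, the sketch below uses a low-rank factorization, which is one common instance of the approach and is an assumption here rather than something the text specifies. The pretrained weight `W` stays frozen and only two small factors `B` and `A` would be trained, so the effective weight is `W + B·A`. The matrix sizes, the rank `r`, and all function names are hypothetical and chosen only for illustration.

```python
# Illustrative sketch only: a low-rank reparameterization of one weight matrix.
# Assumption: the update to a frozen weight W is expressed as B @ A, where
# B is (d_out x r) and A is (r x d_in). Sizes and names are hypothetical.

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def add(a, b):
    """Element-wise sum of two matrices of the same shape."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def param_counts(d_out, d_in, r):
    """Return (parameters in the full matrix, parameters in the two factors)."""
    return d_out * d_in, r * (d_out + d_in)

# Frozen pretrained weight (2 x 3); it is never modified.
W = [[1.0, 0.0, 2.0],
     [0.0, 1.0, 0.0]]

# Trainable low-rank factors with rank r = 1.
B = [[0.5], [-1.0]]        # 2 x 1
A = [[1.0, 2.0, 0.0]]      # 1 x 3

# Effective weight used in the forward pass: W + B @ A.
W_eff = add(W, matmul(B, A))

# Parameter accounting for a hypothetical 4096 x 4096 layer at rank 8.
full, low_rank = param_counts(4096, 4096, 8)
trainable_fraction = low_rank / full
```

For the hypothetical 4096 x 4096 layer, the two factors hold 65,536 values against 16,777,216 in the full matrix, which is where the parameter savings of this family of methods come from.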

Parameter-Efficient Tuning Large Language Models for Graph

Parameter-efficient fine-tuning (PEFT) is a technique that fine-tunes large pretrained language models (LLMs) for specific tasks by updating only a small subset of their parameters while keeping most of the model unchanged. This paper reframes state-of-the-art parameter-efficient transfer learning methods as modifications to specific hidden states in pretrained models and defines a set of design dimensions along which different methods vary, achieving results comparable to fine-tuning all parameters on all four tasks.
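The definition above, updating a small subset of parameters while the rest stay fixed, can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not any particular method from the surveyed papers: the model is a single linear unit `y = w·x + b`, the weights `w` are treated as frozen "pretrained" parameters, and only the bias `b` is trained. The data, learning rate, and function names are all hypothetical.

```python
# Toy sketch: fine-tune only a small subset of parameters (here, the bias)
# while the remaining parameters (the weights) stay frozen.
# Assumption: a one-unit linear model stands in for a pretrained network.

def predict(w, b, x):
    """Linear model: dot(w, x) + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def train_bias_only(w, b, data, lr=0.1, steps=200):
    """Gradient descent on mean squared error that updates b and never w."""
    for _ in range(steps):
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in data) / len(data)
        b -= lr * grad_b
    return b

# Frozen "pretrained" weights and an untrained bias.
w_frozen = [2.0, -1.0]
b_init = 0.0

# Hypothetical downstream data generated by y = dot(w_frozen, x) + 3.
data = [([1.0, 0.0], 5.0), ([0.0, 1.0], 2.0), ([1.0, 1.0], 4.0)]

b_tuned = train_bias_only(w_frozen, b_init, data)

# One of the three parameters was trainable.
trainable_fraction = 1 / (len(w_frozen) + 1)
```

Because the downstream targets differ from the frozen model only by a constant offset, adjusting the single bias term is enough to fit them. Real tasks are rarely this convenient, which is why the methods surveyed differ in which parameters or hidden states they choose to modify.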
