LLM Parameter-Efficient Fine-Tuning Explained

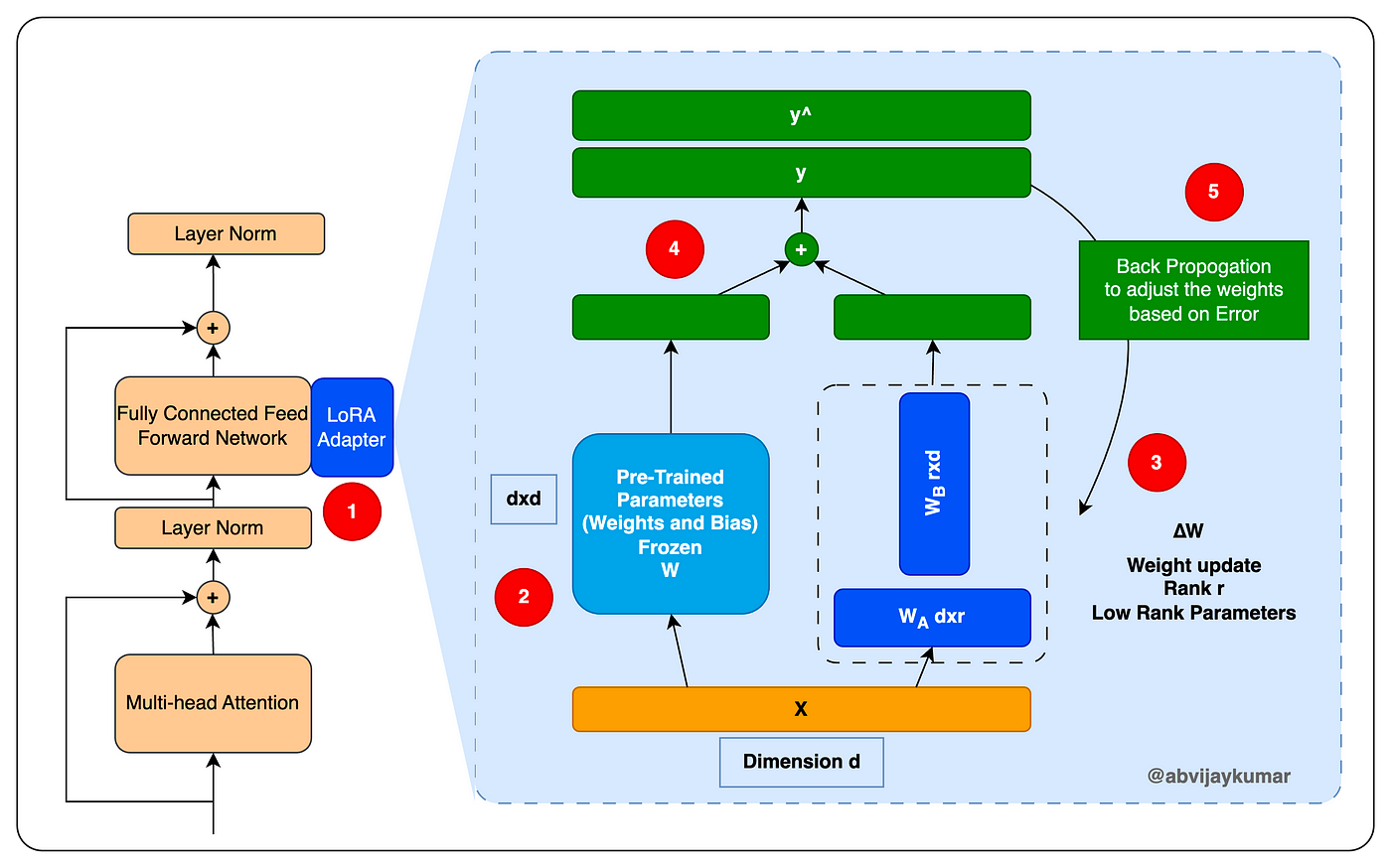

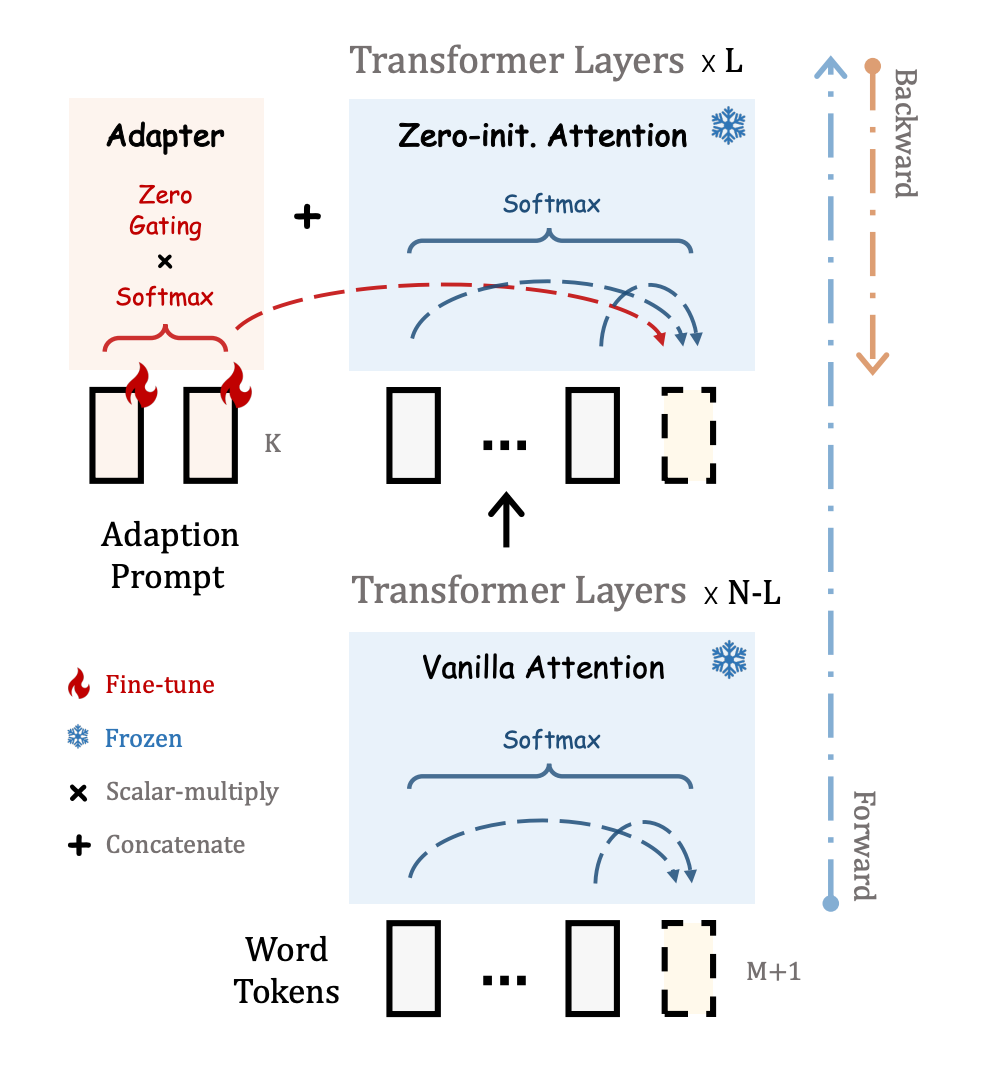

Parameter-efficient fine-tuning (PEFT) adapts a large pretrained language model (LLM) to a specific task by updating only a small subset of its parameters while keeping most of the model unchanged. Techniques such as LoRA, QLoRA, and adapters take this approach, which lowers the computational cost of fine-tuning.
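To make "a small subset of parameters" concrete, here is a rough sketch of the arithmetic for LoRA, which trains two low-rank matrices alongside a frozen weight matrix instead of the matrix itself. The layer size (4096 x 4096) and rank (8) are illustrative assumptions, not figures from this article.

```python
def lora_param_counts(d_in, d_out, rank):
    """Compare trainable parameters for one weight matrix.

    Full fine-tuning updates every entry of the d_out x d_in matrix.
    LoRA trains a rank x d_in matrix A and a d_out x rank matrix B,
    leaving the original matrix frozen.
    """
    full = d_in * d_out
    lora = rank * (d_in + d_out)
    return full, lora


# Hypothetical layer dimensions and rank, chosen only for illustration.
full, lora = lora_param_counts(d_in=4096, d_out=4096, rank=8)
print(f"full: {full:,}  LoRA: {lora:,}  fraction: {lora / full:.4%}")
```

Under these assumptions the adapter trains about 0.39% of the parameters that full fine-tuning would update for that layer. The fraction grows with the rank, so rank is the main knob trading adapter capacity against cost.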

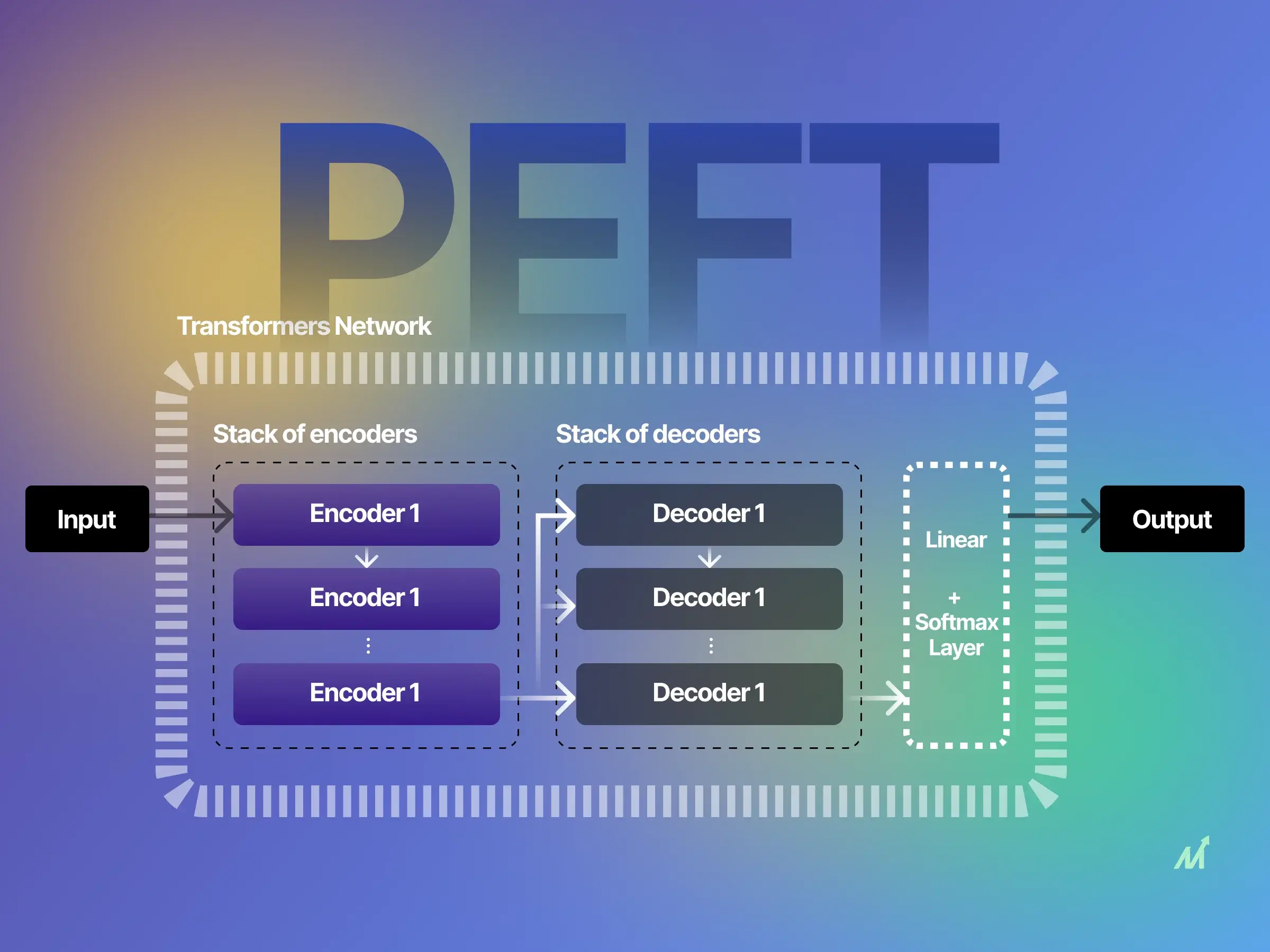

PEFT methods freeze most pretrained weights and train only a small subset of parameters, which dramatically reduces memory requirements while often matching the performance of full fine-tuning. This article surveys PEFT techniques, a set of approaches for adapting LLMs more efficiently in terms of memory and compute.

LLM fine-tuning is the process of adapting a pretrained model on a task-specific dataset to improve accuracy, reduce hallucinations, and produce outputs that reflect domain-specific knowledge not present in the base model. PEFT methods such as LoRA and QLoRA let organizations fine-tune LLMs at a fraction of the compute cost of full fine-tuning.
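The idea of freezing the pretrained weights and training only a small add-on can be sketched with a toy LoRA-style linear layer. This is a minimal pure-Python illustration, not a library implementation: the class name `LoRALinear`, the helper `matvec`, and the initialization constants are all assumptions made for the example, and no training loop is shown.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Toy linear layer: frozen weight W plus a trainable low-rank update B @ A."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # pretrained weight, treated as frozen
        # A starts with small random values, B starts at zero, so the
        # layer initially behaves exactly like the frozen base layer.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.weight, x)              # frozen path: W x
        update = matvec(self.B, matvec(self.A, x))  # trainable path: B (A x)
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_parameters(self):
        """Count only the adapter entries; the base weight is excluded."""
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


# A hypothetical 4x4 "pretrained" weight with a rank-1 adapter.
base_weight = [[float(i == j) for j in range(4)] for i in range(4)]
layer = LoRALinear(base_weight, rank=1)
print(layer.forward([1.0, 2.0, 3.0, 4.0]))
print(layer.trainable_parameters(), "trainable vs", 4 * 4, "frozen")
```

Because `B` starts at zero, the adapted layer reproduces the base model's output before any training, and only `A` and `B` would receive gradient updates. That is why optimizer state and gradients stay small compared with full fine-tuning.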

In short, PEFT improves the performance of pretrained LLMs, and of neural networks more generally, on specific downstream tasks or datasets while minimizing the number of trainable parameters. Models fine-tuned this way can be specialized for use cases such as medical dataset prediction, language translation, and user support.
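QLoRA pushes the savings further by storing the frozen base weights in 4-bit precision while the adapters are trained at higher precision. The back-of-the-envelope estimate below covers weight storage only. The 7-billion-parameter model size is a hypothetical example, and the calculation deliberately ignores quantization constants, activations, gradients, optimizer state, and the adapter weights themselves.

```python
def weight_memory_gib(num_params, bits_per_param):
    """Approximate memory needed to store model weights, in GiB."""
    return num_params * bits_per_param / 8 / 1024**3


# Hypothetical model size, used only for illustration.
num_params = 7_000_000_000

fp16_gib = weight_memory_gib(num_params, bits_per_param=16)
int4_gib = weight_memory_gib(num_params, bits_per_param=4)
print(f"16-bit weights: {fp16_gib:.1f} GiB")
print(f" 4-bit weights: {int4_gib:.1f} GiB")
```

Under these simplifying assumptions, 4-bit storage needs a quarter of the memory of 16-bit storage for the frozen weights. Real memory use during training will be higher than either figure.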

