Full-Parameter LLM Fine-Tuning Guide

This report aims to serve as a comprehensive guide for researchers and practitioners, offering actionable insights into fine-tuning LLMs while navigating the challenges and opportunities of this rapidly evolving field. It covers the mechanics of full fine-tuning: managing resources, configuring hyperparameters, and monitoring the training process.

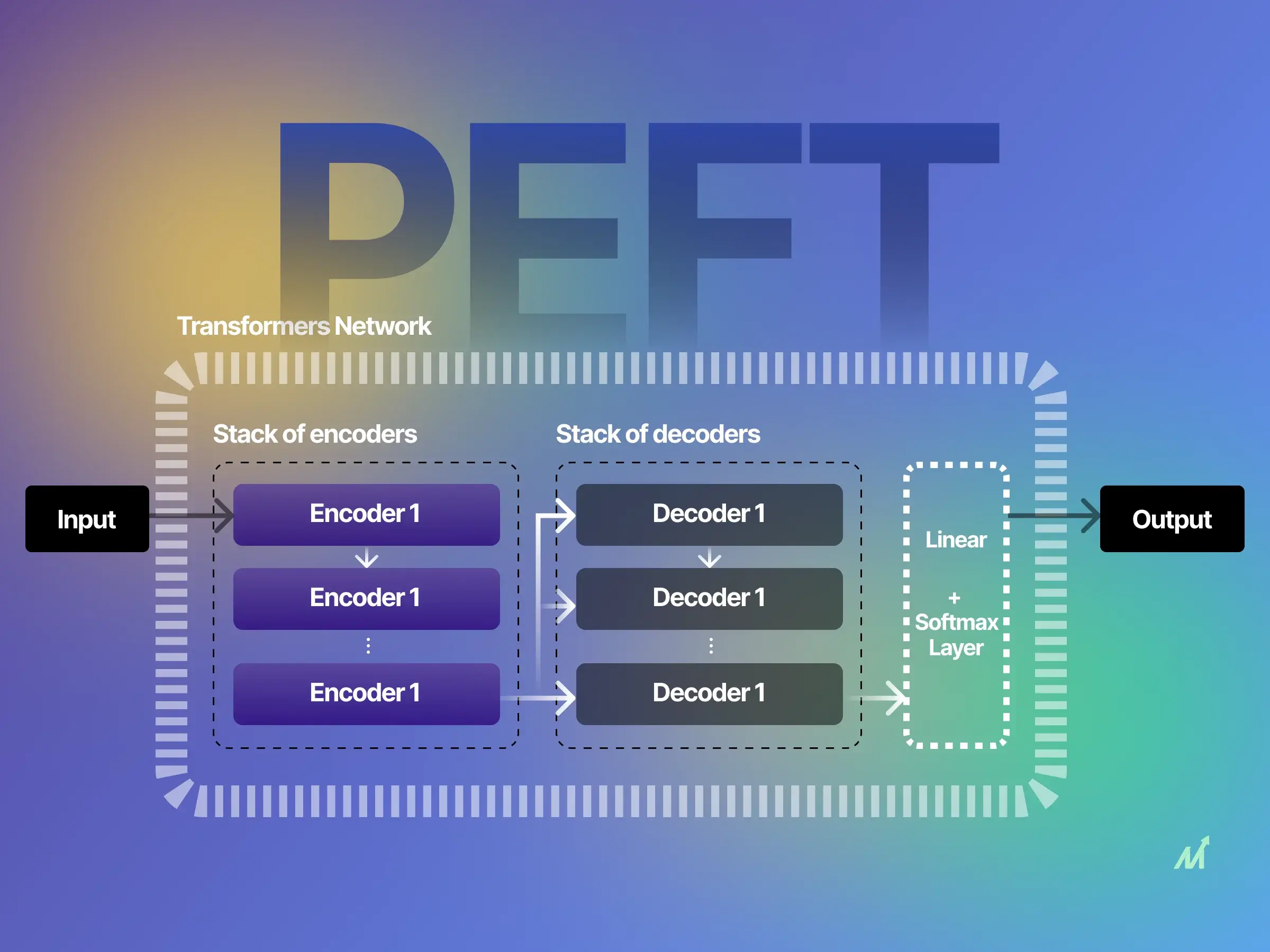

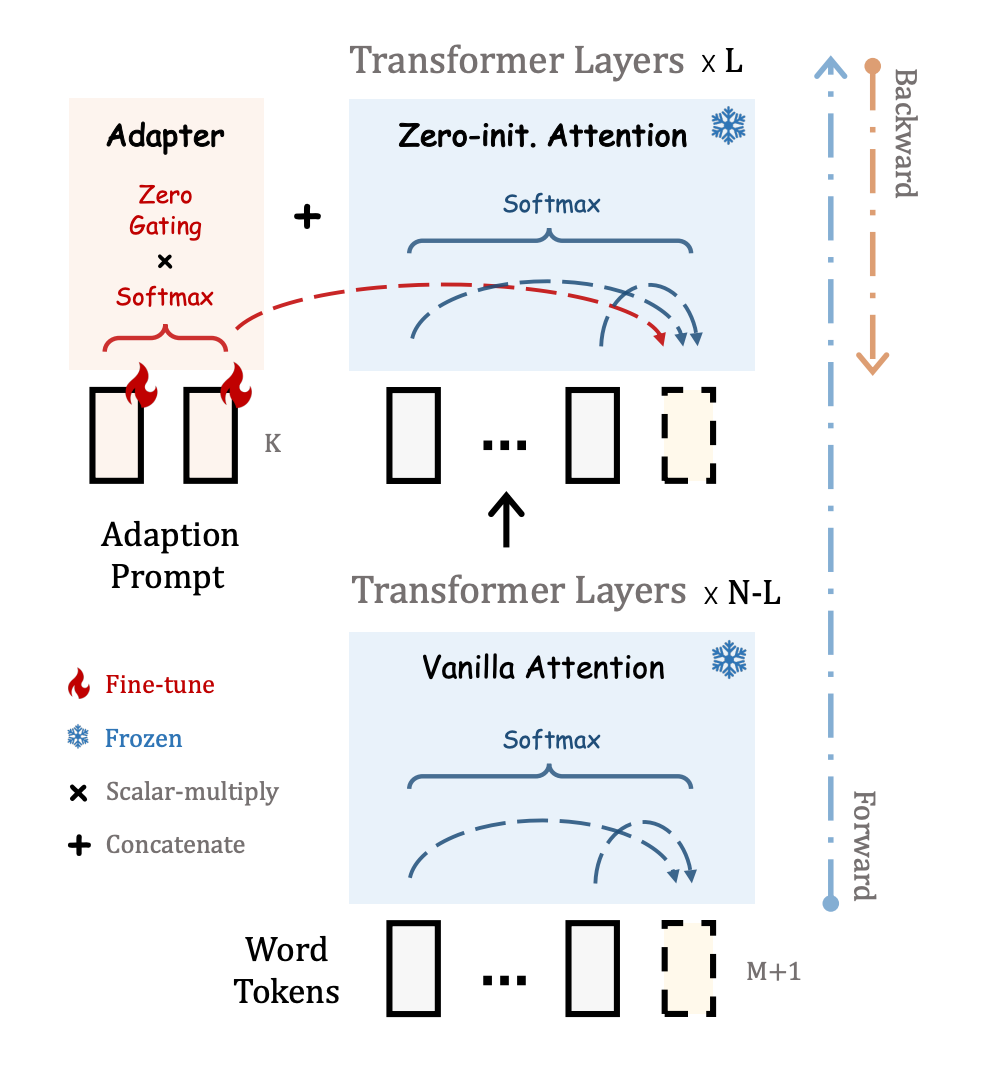

This is a comprehensive guide to fine-tuning large language models (LLMs) from scratch. It covers full fine-tuning, instruction tuning, and parameter-efficient techniques such as LoRA and QLoRA. The process involves fine-tuning the model on datasets that contain instructions and the desired outputs; this also includes RLHF.

Fine-tuning involves adjusting LLM parameters, and the scale of this adjustment depends on the specific task you want to accomplish. Broadly, there are two fundamental approaches to fine-tuning LLMs: feature extraction and full fine-tuning. Before LoRA, the most straightforward way to adapt a pre-trained LLM to a new task or domain was "full fine-tuning" (also known as full-parameter fine-tuning or full weight…
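To make the difference between the two approaches concrete, here is a minimal sketch in plain Python. It assumes the usual reading of feature extraction, in which the pre-trained layers are frozen and only a small task head is trained, whereas full fine-tuning updates every parameter. The `Layer` class, the layer names, and the parameter counts are hypothetical and chosen only for illustration; they do not describe any real model or library.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    """Hypothetical stand-in for one group of weights in a network."""
    name: str
    num_params: int
    trainable: bool = True


def build_toy_model() -> list[Layer]:
    # Sizes are illustrative only, not taken from any real model.
    return [
        Layer("embeddings", 50_000),
        Layer("transformer_blocks", 900_000),
        Layer("task_head", 2_000),
    ]


def apply_feature_extraction(layers: list[Layer]) -> list[Layer]:
    """Freeze the pre-trained layers; only the task head stays trainable."""
    for layer in layers:
        layer.trainable = layer.name == "task_head"
    return layers


def apply_full_fine_tuning(layers: list[Layer]) -> list[Layer]:
    """Mark every layer as trainable, so all weights are updated."""
    for layer in layers:
        layer.trainable = True
    return layers


def trainable_params(layers: list[Layer]) -> int:
    """Count the parameters that would receive gradient updates."""
    return sum(layer.num_params for layer in layers if layer.trainable)


feature_extraction = trainable_params(apply_feature_extraction(build_toy_model()))
full = trainable_params(apply_full_fine_tuning(build_toy_model()))
print(f"feature extraction: {feature_extraction:,} trainable parameters")
print(f"full fine-tuning:   {full:,} trainable parameters")
```

In a real framework the same idea is usually expressed by switching gradient tracking off for the frozen weights. The counting logic above only illustrates why full fine-tuning is the more expensive of the two.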

In this guide, we'll cover the complete fine-tuning process, from defining goals to deployment. We'll also highlight why dataset creation is the most crucial step and how using a larger LLM for filtering can make your smaller model much smarter.

Parameter-efficient fine-tuning (PEFT) techniques, particularly low-rank adaptation (LoRA), have changed how LLM customization is approached. These methods can achieve strong results while using a fraction of the computational resources required by full fine-tuning.

Full fine-tuning, by contrast, requires storing full gradients and optimizer states for every weight matrix in the model, so memory consumption grows by a fixed number of bytes per parameter (weights + gradients + Adam first and second moments, in mixed precision).
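The memory argument above can be sketched as simple arithmetic. The example below assumes 16 bytes per parameter, a commonly cited breakdown for mixed-precision training with Adam (2 bytes for half-precision weights, 2 for gradients, 4 for a full-precision copy of the weights, and 4 each for the two Adam moments). That figure is an assumption rather than a number taken from the text, and it ignores activations and framework overhead. The 7-billion-parameter model, the 4096 x 4096 matrix, and rank 8 are likewise hypothetical. For comparison, the sketch also counts the trainable parameters LoRA introduces for a single weight matrix, using the standard low-rank factorization into a `d x r` and an `r x k` matrix.

```python
def full_finetune_memory_gb(n_params: int, bytes_per_param: int = 16) -> float:
    """Rough memory for weights, gradients and Adam states (no activations).

    bytes_per_param defaults to 16, an assumed mixed-precision Adam breakdown:
    2 (fp16 weights) + 2 (fp16 grads) + 4 (fp32 weights) + 4 + 4 (Adam moments).
    """
    return n_params * bytes_per_param / 1e9


def lora_trainable_params(d: int, k: int, r: int) -> int:
    """Trainable parameters LoRA adds for one d x k weight matrix.

    The update is factored as B (d x r) times A (r x k), so r * (d + k) values.
    """
    return r * (d + k)


# Hypothetical 7B-parameter model, fully fine-tuned.
n_params = 7_000_000_000
memory_gb = full_finetune_memory_gb(n_params)
print(f"full fine-tuning state: about {memory_gb:.0f} GB")

# Hypothetical 4096 x 4096 projection matrix adapted with rank-8 LoRA.
d, k, r = 4096, 4096, 8
full_matrix_params = d * k
lora_params = lora_trainable_params(d, k, r)
fraction = lora_params / full_matrix_params
print(f"LoRA trains {lora_params:,} of {full_matrix_params:,} values ({fraction:.2%})")
```

Under these assumptions the optimizer-related state alone exceeds the memory of a single typical accelerator, which is the practical reason full fine-tuning is usually sharded across devices or replaced by a PEFT method.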
