Openfree LLM Quantization At Main

This is a curated list of resources related to quantization techniques for large language models (LLMs). Quantization is a crucial step in deploying LLMs on resource-constrained devices, such as mobile phones or edge devices. Storing weights (and often activations) at lower precision, for example 8-bit or 4-bit integers instead of 16-bit floats, reduces the model's size and computational requirements.
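To make the size reduction concrete: raw weight storage is roughly parameter count × bits per weight ÷ 8 bytes. The sketch below assumes a hypothetical 7-billion-parameter model and deliberately ignores scales/zero-points, activations, and the KV cache, so real quantized files and runtime memory are somewhat larger.

```python
def weight_memory_gb(num_params: int, bits_per_weight: float) -> float:
    """Approximate raw weight storage in gigabytes (1 GB = 10**9 bytes).

    Only the weights themselves are counted; quantization metadata
    (scales, zero-points), activations, and the KV cache are ignored.
    """
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    params = 7_000_000_000  # hypothetical 7B-parameter model
    for bits in (32, 16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gb(params, bits):6.1f} GB")
```

Halving the bit width halves the weight footprint: for the assumed 7B model, 16-bit weights need about 14 GB and 4-bit weights about 3.5 GB before overhead.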

LLM Quantization

This paper presents a data-free quantization method specifically designed to address outliers in large language models (LLMs). By quantizing the non-outlier portion of the weights separately, the method aims to mitigate the performance drop caused by outliers.

How can a quantization method for LLMs guarantee its generalization performance? In this work, we propose EasyQuant, a training-free and data-free weight-only quantization algorithm for LLMs. Our observation indicates that two factors, outliers in the weights and quant…

We systematically explore various methodologies designed to tackle the resource-intensive nature of LLMs, including post-training quantization (PTQ), quantization-aware fine-tuning (QAF), and quantization-aware training (QAT). This paper provides a comprehensive overview of LLM quantization, examining various quantization methods, their impact on model performance, and their practical applications across diverse domains.
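The outlier-isolation idea described above can be illustrated with a minimal pure-Python sketch. This is not the EasyQuant algorithm: the `n_sigma` outlier threshold, the single symmetric per-tensor scale, and the function names (`quantize_rtn`, `quantize_with_outliers`) are assumptions chosen for illustration. Weights farther than `n_sigma` standard deviations from the mean stay in full precision, and only the remaining weights determine the quantization scale.

```python
import math
from typing import List, Tuple


def quantize_rtn(values: List[float], bits: int) -> Tuple[List[int], float]:
    """Symmetric round-to-nearest quantization with one scale for the whole list."""
    qmax = 2 ** (bits - 1) - 1  # e.g. 7 for signed 4-bit; range is [-qmax, qmax]
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Python's round() rounds halves to even; clamp to the symmetric range.
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    return [x * scale for x in q]


def quantize_with_outliers(weights: List[float], bits: int = 4,
                           n_sigma: float = 3.0) -> List[float]:
    """Keep outliers (|w - mean| > n_sigma * std) in full precision and quantize
    only the inliers, so a few large weights cannot inflate the shared scale."""
    if not weights:
        return []
    mean = sum(weights) / len(weights)
    std = math.sqrt(sum((w - mean) ** 2 for w in weights) / len(weights))
    is_outlier = [abs(w - mean) > n_sigma * std for w in weights]
    inliers = [w for w, o in zip(weights, is_outlier) if not o]
    q, scale = quantize_rtn(inliers, bits)
    deq = iter(dequantize(q, scale))
    # Reassemble in the original order: outliers unchanged, inliers dequantized.
    return [w if o else next(deq) for w, o in zip(weights, is_outlier)]


def mse(a: List[float], b: List[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    # 20 small weights in [-0.03, 0.03] plus one large outlier.
    weights = [0.01 * ((i % 7) - 3) for i in range(20)] + [1.0]
    q, s = quantize_rtn(weights, bits=4)
    plain = dequantize(q, s)
    aware = quantize_with_outliers(weights, bits=4)
    print(f"plain RTN MSE:     {mse(weights, plain):.2e}")
    print(f"outlier-aware MSE: {mse(weights, aware):.2e}")
```

In this demo tensor, plain round-to-nearest lets the 1.0 outlier set the scale, so every small weight rounds to 0; the outlier-aware version keeps them distinguishable. The trade-off is that outlier values and their positions must be stored separately, which a real implementation has to account for.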

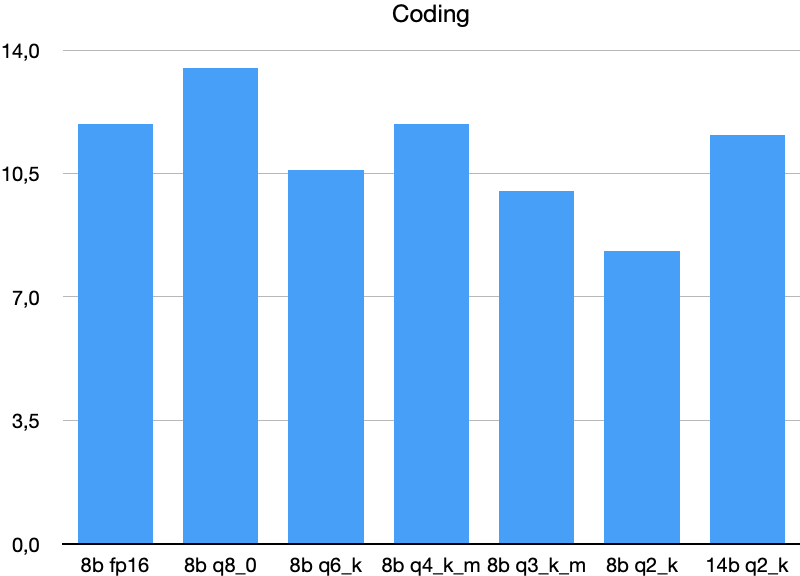

GitHub Zhitengli Awesome LLM Quantization: Collect LLM Quantization

The Streamlit code fragments on this page split the workflow into three functions. The first converts a Hugging Face model to GGUF format and reports ``st.write(f"🔄 Converting `{model_dir}` to GGUF format")``. The second quantizes a GGUF model and reports ``st.write(f"⚡ Quantizing `{model_path}` with `{quant_type}` precision")``. The third orchestrates the entire quantization process and finishes with ``st.success(f"🎉 All steps completed! Quantized model available at: `{quantized_file}`")``.

Our contribution: in this work, we propose a novel data-free model quantization algorithm, namely EasyQuant, that potentially improves the performance of low-bit quantized LLMs. The generalization ability of LLMs is inherently guaranteed since EasyQuant does not need any input data.

A complete guide to running LLMs locally covers GPU requirements, RAM needs, Ollama setup, quantization levels, and performance benchmarks for 2026.

The LLM Compressor examples are organized primarily by quantization scheme. Each folder contains model-specific examples showing how to apply that scheme to a particular model. Some examples are additionally grouped by model type, such as multimodal audio, multimodal vision, and quantizing MoE; other examples are grouped by algorithm.
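The three-step convert, quantize, and orchestrate flow described above can be sketched without Streamlit. This sketch assumes the app shells out to llama.cpp's `convert_hf_to_gguf.py` script and `llama-quantize` tool, which the fragments do not confirm. The function names, the output file naming scheme, and the `llama.cpp/` paths are hypothetical, and `print` stands in for `st.write`/`st.success`. With `dry_run=True` (the default) the functions only build and return the commands, so nothing is executed.

```python
import subprocess
import sys
from pathlib import Path
from typing import List

# Assumption: a local llama.cpp checkout with a CMake build. Paths are hypothetical.
LLAMA_CPP_DIR = Path("llama.cpp")
CONVERT_SCRIPT = LLAMA_CPP_DIR / "convert_hf_to_gguf.py"
QUANTIZE_BIN = LLAMA_CPP_DIR / "build" / "bin" / "llama-quantize"


def run(cmd: List[str], dry_run: bool) -> List[str]:
    """Execute the command unless dry_run is True; always return it."""
    if not dry_run:
        subprocess.run(cmd, check=True)
    return cmd


def convert_to_gguf(model_dir: str, out_file: str, dry_run: bool = True) -> List[str]:
    """Converts a Hugging Face model directory to a 16-bit GGUF file."""
    print(f"🔄 Converting `{model_dir}` to GGUF format")  # st.write in the app
    cmd = [sys.executable, str(CONVERT_SCRIPT), model_dir,
           "--outfile", out_file, "--outtype", "f16"]
    return run(cmd, dry_run)


def quantize_gguf(model_path: str, out_file: str, quant_type: str,
                  dry_run: bool = True) -> List[str]:
    """Quantizes a GGUF model to the requested type, e.g. Q4_K_M."""
    print(f"⚡ Quantizing `{model_path}` with `{quant_type}` precision")
    cmd = [str(QUANTIZE_BIN), model_path, out_file, quant_type]
    return run(cmd, dry_run)


def quantize_pipeline(model_dir: str, quant_type: str = "Q4_K_M",
                      dry_run: bool = True) -> str:
    """Orchestrates conversion then quantization; returns the quantized file path."""
    f16_file = f"{model_dir}-f16.gguf"
    quantized_file = f"{model_dir}-{quant_type}.gguf"
    convert_to_gguf(model_dir, f16_file, dry_run)
    quantize_gguf(f16_file, quantized_file, quant_type, dry_run)
    print(f"🎉 All steps completed! Quantized model available at: `{quantized_file}`")
    return quantized_file
```

Calling `quantize_pipeline("models/demo", dry_run=False)` would actually run both commands, which requires a working llama.cpp build at the assumed paths. Converting to an unquantized GGUF first and quantizing second keeps the two stages separate, so one converted file can be quantized to several types.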
