LLM Quantization Comparison

We evaluate Qwen2.5, DeepSeek, Mistral, and Llama 3.3 across five key tasks and multiple quantization formats. The goal is to find out which formats, such as GPTQ-INT8 and Q5_K_M, offer the best accuracy, efficiency, and stability for real-world use cases like agents, finance tools, and coding assistants. This guide also compares Q4, Q8, and FP16, and covers how quantization works and how the quality trade-offs vary by task type.

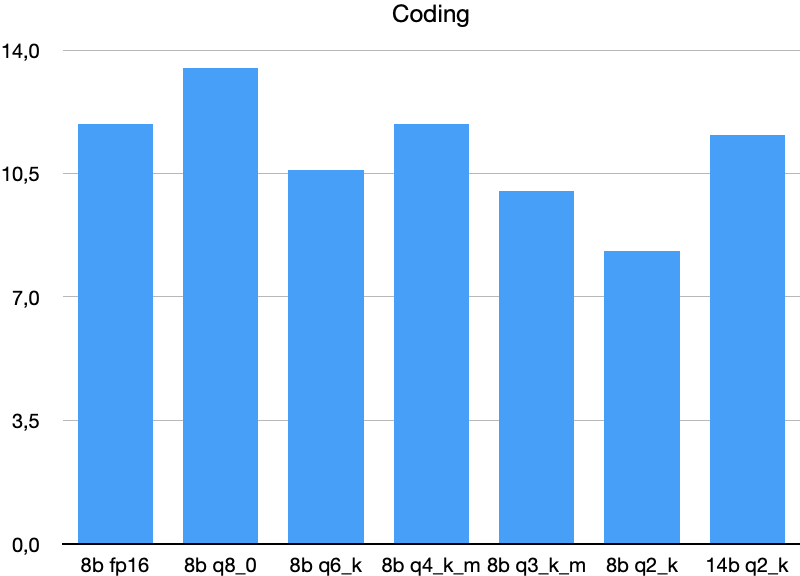

In this article, we compare various degrees of quantization, analyzing their impact on both speed and output quality. The table below presents a performance comparison of different quantization levels applied to the DeepSeek R1 abliterated model. The models were evaluated across various tasks.

Quantization compresses weights from 16-bit floats to 4-bit integers, shrinking models by 75% with surprisingly little quality loss. A Llama 3 70B model that normally requires multiple A100s needs only about 35 GB for its weights at 4 bits, which puts it within reach of consumer hardware; a single 24 GB RTX 4090 still needs partial CPU offloading or a lower bit width to run it. But the method matters.

This article aims to provide a comprehensive review of quantization techniques in the context of LLMs. We begin by detailing the underlying mechanisms of quantization, followed by a comparison of various approaches, with a specific focus on their application at the LLM level. We also explore the results of our LLM quantization benchmark, in which we compared 4 precision formats of Qwen3 32B on a single H100 GPU.
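The 16-bit to 4-bit compression described above can be sketched in a few lines. This is a minimal illustration rather than any particular library's method: it assumes simple round-to-nearest symmetric quantization with a single scale per tensor, and the function names (`weight_memory_gb`, `quantize_symmetric`, `dequantize`) and the sample weights are hypothetical. Formats such as GPTQ, AWQ, or Q5_K_M use more elaborate per-group schemes.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes).

    Ignores quantization scales, activations, and the KV cache.
    """
    return n_params * bits_per_weight / 8 / 1e9


def quantize_symmetric(weights, bits=4):
    """Round-to-nearest symmetric quantization with one scale per tensor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit signed integers
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Map each weight to the nearest integer level and clamp to the valid range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floating-point weights from integer levels."""
    return [v * scale for v in q]


# Memory arithmetic for a 70B-parameter model.
fp16_gb = weight_memory_gb(70e9, 16)  # 140 GB
int4_gb = weight_memory_gb(70e9, 4)   # 35 GB
reduction = 1 - int4_gb / fp16_gb     # 0.75, i.e. 75% smaller

# Quantize a toy weight vector and measure the round-trip error.
weights = [0.12, -0.53, 0.98, -0.05, 0.31]
q, scale = quantize_symmetric(weights, bits=4)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(f"FP16: {fp16_gb:.0f} GB, 4-bit: {int4_gb:.0f} GB, reduction: {reduction:.0%}")
print(f"levels: {q}, scale: {scale:.4f}, max round-trip error: {max_error:.4f}")
```

With round-to-nearest, the per-weight error is bounded by half the scale, which is why a single outlier weight (which inflates the scale) hurts accuracy and why practical formats quantize in small groups.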

Twelve LLM quantization strategies are compared, covering when to choose 8-bit, 4-bit, GPTQ, AWQ, NF4, KV cache quantization, and more to balance speed, cost, and quality. This page also serves as a curated list of resources related to quantization techniques for large language models (LLMs). Quantization is a crucial step in deploying LLMs on resource-constrained devices, such as mobile phones or edge devices, because it reduces the model's size and computational requirements.

We identify links between the multilingual performance of widely adopted LLM quantization methods and factors such as a language's prevalence in the training set and its similarity to the model's dominant language.

Dynamic quantization offers more flexibility and usually results in higher-quality output. Static quantization provides faster inference, but usually loses more accuracy than dynamic methods.
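The accuracy side of the dynamic versus static trade-off can be illustrated with a small sketch. It assumes 8-bit symmetric quantization of activations, where the static scale is fixed from a calibration set ahead of time and the dynamic scale is recomputed from each incoming tensor. The helper names and the sample values are hypothetical and chosen only to make the effect visible.

```python
def scale_for(values, bits=8):
    """Symmetric scale that maps the largest magnitude to the top integer level."""
    qmax = 2 ** (bits - 1) - 1
    max_abs = max(abs(v) for v in values)
    return max_abs / qmax if max_abs > 0 else 1.0


def fake_quantize(values, scale, bits=8):
    """Quantize then dequantize, clamping values outside the representable range."""
    qmax = 2 ** (bits - 1) - 1
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]


def max_abs_error(original, approx):
    return max(abs(a - b) for a, b in zip(original, approx))


# Static: the scale is computed once from calibration data, before deployment.
calibration = [0.5, -1.0, 0.8, -0.3]
static_scale = scale_for(calibration)

# At inference time the activations have a wider range than the calibration set.
activations = [0.4, -2.5, 1.1, 0.05]

# Static reuses the precomputed scale, so out-of-range values are clipped.
static_out = fake_quantize(activations, static_scale)

# Dynamic recomputes the scale from the actual input, at extra runtime cost.
dynamic_scale = scale_for(activations)
dynamic_out = fake_quantize(activations, dynamic_scale)

static_err = max_abs_error(activations, static_out)
dynamic_err = max_abs_error(activations, dynamic_out)

print(f"static max error:  {static_err:.4f}")
print(f"dynamic max error: {dynamic_err:.4f}")
```

Here the static path clips the value -2.5 to the calibrated range, while the dynamic path adapts its scale to the input. The speed advantage of static quantization comes from skipping that per-input scale computation, which this sketch does not measure.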
