Ollama Integration With GitHub Copilot And Visual Studio

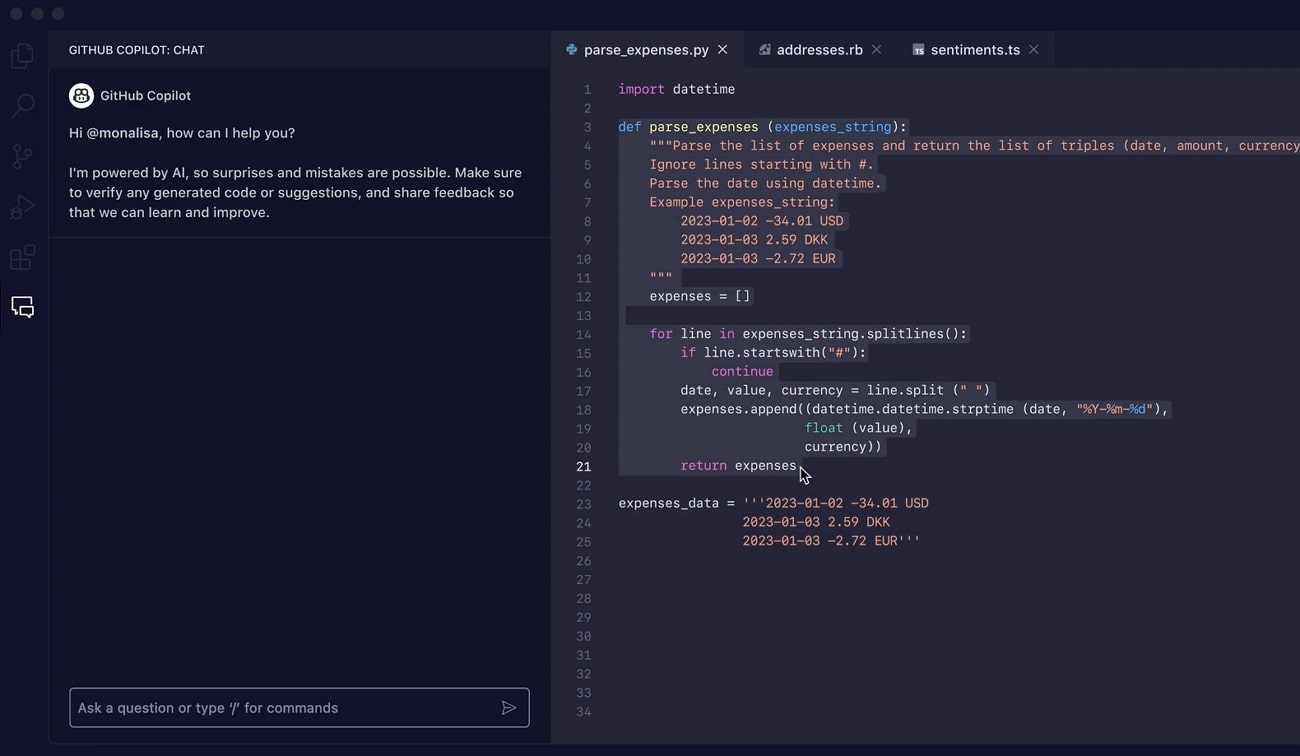

Ollama Copilot is a VS Code extension that brings GitHub Copilot-like AI assistance directly to your editor, running entirely on your local machine using Ollama. With Ollama and VS Code's language model customization feature, you can now pipe any locally running model straight into GitHub Copilot Chat and use it just as you would use a hosted model such as GPT-4o.

Opilot integrates the full Ollama ecosystem (local models, cloud models, and the Ollama model library) directly into VS Code's Copilot Chat interface. Your conversations never leave your machine when using local models, and you can switch between models without leaving the editor. VS Code includes built-in AI chat through GitHub Copilot Chat, and Ollama models can be used directly in the Copilot Chat model picker.

Steps to integrate Ollama with GitHub Copilot and VS Code:

1) Ollama should be installed.
2) VS Code should be installed.
3) Make sure a suitable model is available, such as qwen3:8b or something compatible.

GitHub shipped Ollama integration for Copilot in March 2026. Every code suggestion, chat prompt, and agentic workflow can now route to local models running on your machine.
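The prerequisites in the steps above (Ollama installed and a suitable model available) can be checked with a short script before opening VS Code. The following is a minimal sketch, assuming Ollama is listening on its default local address `http://localhost:11434` and that its `GET /api/tags` endpoint returns the locally available models. The helper names (`fetch_tags`, `installed_models`, `has_model`) are hypothetical and only serve the illustration.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434"


def fetch_tags(base_url: str = OLLAMA_URL, timeout: float = 5.0) -> dict:
    """Return the JSON body of GET /api/tags (the locally available models)."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
        return json.load(resp)


def installed_models(tags: dict) -> list[str]:
    """Extract model names such as 'qwen3:8b' from an /api/tags response."""
    return [m["name"] for m in tags.get("models", [])]


def has_model(tags: dict, wanted: str) -> bool:
    """Match exactly when a tag is given ('qwen3:8b'), else by family ('qwen3')."""
    names = installed_models(tags)
    if ":" in wanted:
        return wanted in names
    return any(name.split(":", 1)[0] == wanted for name in names)


if __name__ == "__main__":
    wanted = "qwen3:8b"  # the example model named in the steps
    try:
        tags = fetch_tags()
    except OSError as exc:  # covers connection refused and timeouts
        print(f"Ollama does not appear to be reachable: {exc}")
    else:
        if has_model(tags, wanted):
            print(f"{wanted} is available locally.")
        else:
            print(f"{wanted} not found; try `ollama pull {wanted}`.")
```

If the script reports that the model is missing, pulling it with the Ollama CLI and rerunning the check is usually enough before selecting it in the Copilot Chat model picker.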

On March 26, 2026, Ollama said that Visual Studio Code now integrates with Ollama through GitHub Copilot: if you have Ollama installed, any local or cloud model available in Ollama can be selected directly inside VS Code. That matters because the integration is not framed as a side extension or a separate chat panel.

In this guide, I'll show you how to set up Ollama, DeepSeek Coder, and Continue inside VS Code to create your own "Copilot-like" experience. Ollama is a lightweight runtime that lets you download and run large language models (LLMs) locally. Think of it like "Docker for AI models."
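Because Ollama serves local models over an HTTP API, it can help to confirm that a model answers prompts before relying on it inside the editor. The sketch below sends a single non-streaming request to Ollama's `POST /api/chat` endpoint. It assumes the default address `http://localhost:11434` and a model named `deepseek-coder` that has already been pulled; the function names are hypothetical, and this is not how VS Code or Copilot talk to Ollama internally, only a standalone smoke test.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434"


def build_chat_request(model: str, prompt: str) -> dict:
    """Build the JSON payload for a single-turn, non-streaming chat call."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON response
    }


def extract_reply(response: dict) -> str:
    """Pull the assistant text out of an /api/chat response, or '' if absent."""
    return response.get("message", {}).get("content", "")


def chat(model: str, prompt: str, base_url: str = OLLAMA_URL,
         timeout: float = 120.0) -> str:
    """Send the prompt to the local model and return its reply text."""
    body = json.dumps(build_chat_request(model, prompt)).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/api/chat",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return extract_reply(json.load(resp))


if __name__ == "__main__":
    # Assumption: `deepseek-coder` has been pulled with the Ollama CLI.
    print(chat("deepseek-coder", "Write a Python function that reverses a string."))
```

Setting `stream` to `False` keeps the example short; a streaming client would instead read one JSON object per line as tokens arrive.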

