Using Authenticated Ollama Endpoint With GitHub Copilot Chat Issue

GitHub Copilot Chat (GitHub Docs)

I have searched through the documentation but could not find how to configure GitHub Copilot Chat to provide the API token when invoking the Ollama API endpoint.

In the VS Code settings, scroll down to GitHub › Copilot › Chat › Byok: Ollama Endpoint. This field allows you to provide your own server endpoint. Paste this URL (or the address of your own Ollama instance) into the setting. After saving the setting, restart VS Code to ensure GitHub Copilot picks up the configuration.
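Before pointing the setting at a remote instance, it can help to check from outside VS Code that the endpoint answers and whether it expects a token. The sketch below is only an illustration under stated assumptions: it calls Ollama's model-listing route (`/api/tags`), and it assumes any token is enforced by something in front of Ollama, such as a reverse proxy that checks a standard `Authorization: Bearer` header. The function names, the example address, and the token value are hypothetical.

```python
import json
import urllib.request
from typing import List, Optional


def build_tags_request(endpoint: str, token: Optional[str] = None) -> urllib.request.Request:
    """Build a GET request for Ollama's model-listing route (/api/tags)."""
    url = endpoint.rstrip("/") + "/api/tags"
    request = urllib.request.Request(url, method="GET")
    if token:
        # Assumption: a proxy in front of Ollama validates this bearer token.
        request.add_header("Authorization", f"Bearer {token}")
    return request


def list_models(endpoint: str, token: Optional[str] = None) -> List[str]:
    """Return the model names reported by the endpoint (performs a network call)."""
    request = build_tags_request(endpoint, token)
    with urllib.request.urlopen(request, timeout=5) as response:
        payload = json.load(response)
    return [model["name"] for model in payload.get("models", [])]
```

Calling `list_models("http://localhost:11434")` against a running local instance should list the same models that VS Code detects. If the call only succeeds when a token is supplied, the endpoint is authenticated, and Copilot Chat would need some way to send that header too.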

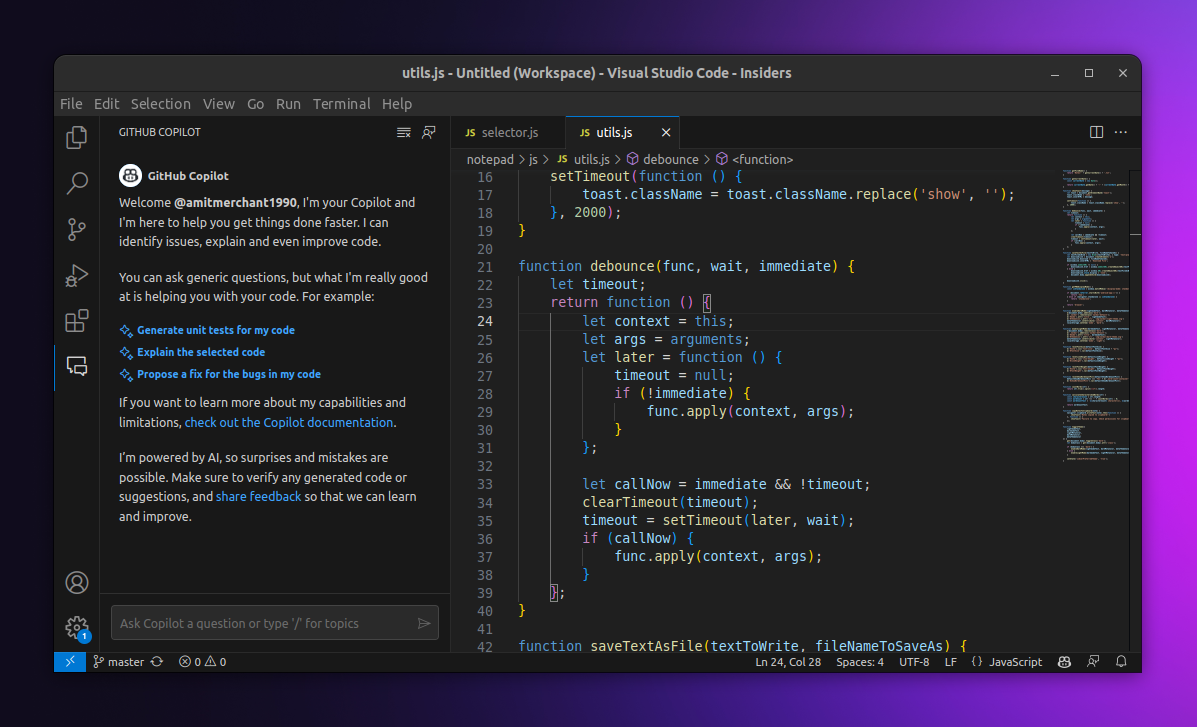

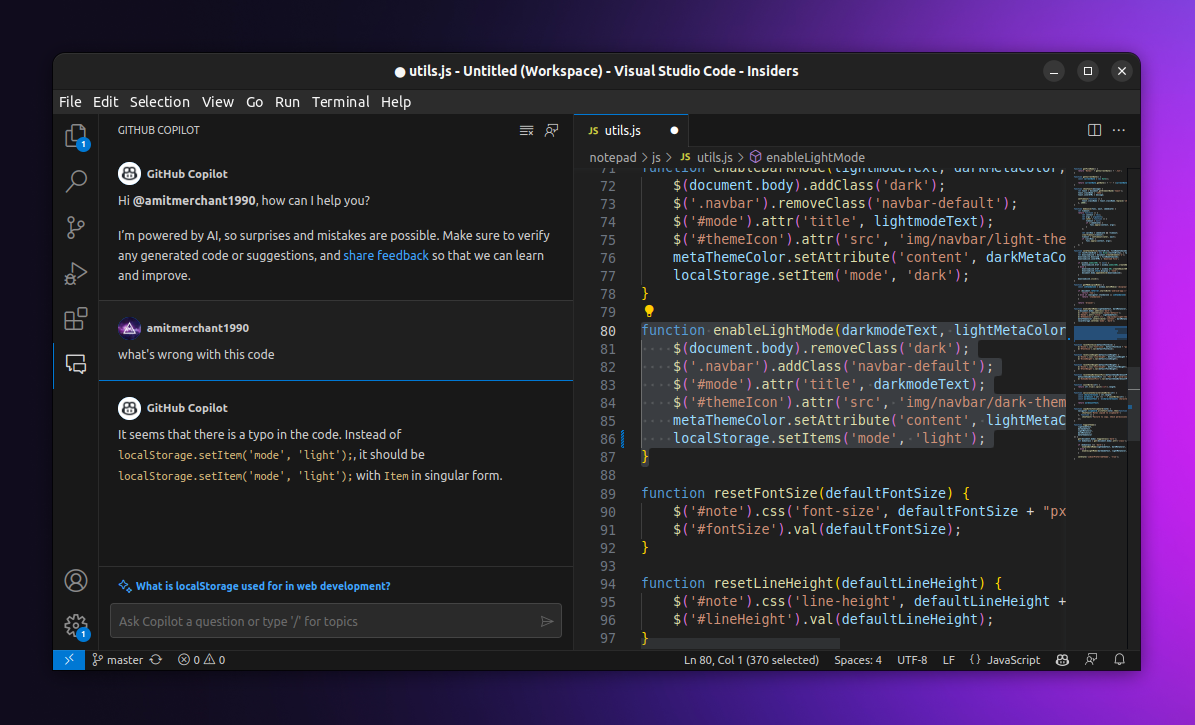

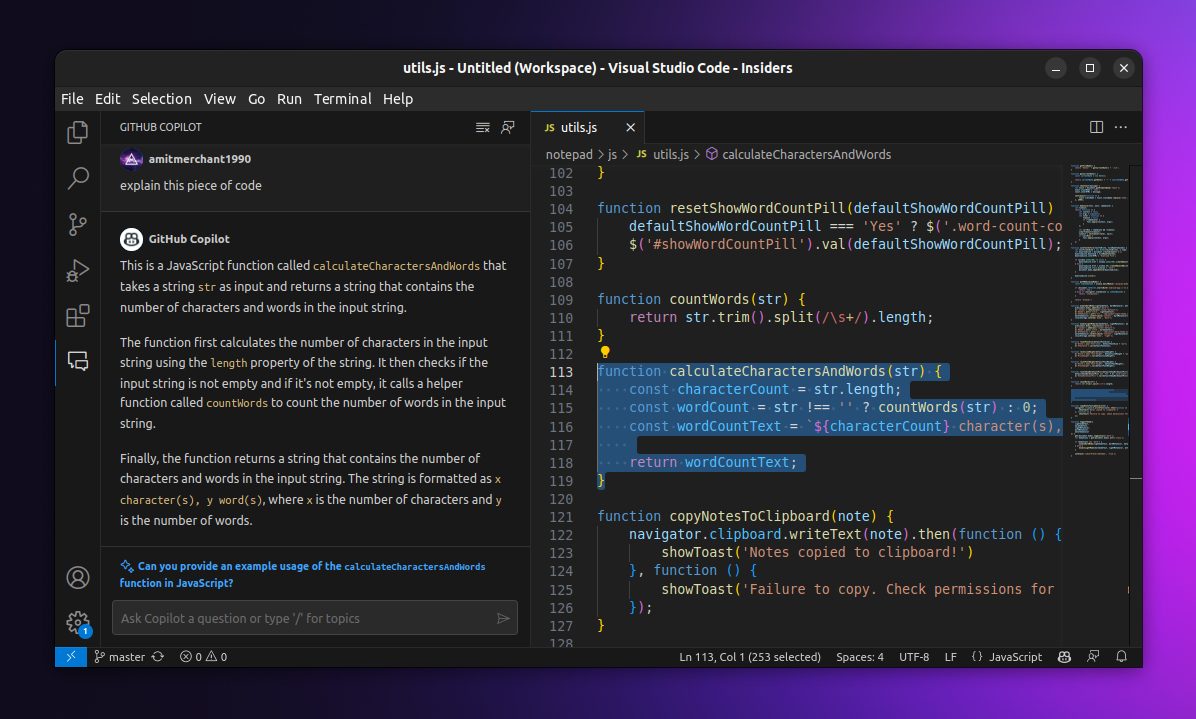

I Got Access To GitHub Copilot Chat (Amit Merchant, A Blog On PHP)

I'm experiencing a frustrating issue with the integration between GitHub Copilot and Ollama in Visual Studio Code. The problem: my local Ollama models are correctly detected by VS Code and appear in the "Language Models" management tab.

Given the current API incompatibility, direct integration between Copilot Chat and local Ollama is unsupported. However, there are viable workarounds. Use the VS Code AI Toolkit playground: this remains the most straightforward way to interact with local Ollama models within VS Code.

VS Code includes built-in AI chat through GitHub Copilot Chat. Ollama models can be used directly in the Copilot Chat model picker.

I already knew Ollama was supported; in fact, hooking it up to Copilot was the first thing I did after running my first model. Wiring it up to Ollama running on a different computer proved to be a little less obvious, and fortunately I stumbled across a relevant GitHub discussion.

We want to use custom OpenAI-compatible API LLMs with GitHub Copilot Chat in VS Code without API keys. We will use LiteLLM as a proxy for authentication and use the Azure AI model support in chat as a hack.

This document covers the Ollama API compatibility layer that enables Ollama clients to interact with GitHub Copilot through the proxy server. The implementation is located in ollama.ts and provides bidirectional translation between the Ollama API format and the GitHub Copilot API format.

I wanted to configure the extension so that when I chat, it uses the Ollama models on the server. The issue is that GitHub Copilot only allows for local Ollama.

Issue description: when using GitHub Copilot Chat with Ollama Cloud, all models work correctly except gm 5.1:cloud, which consistently returns a 403 authentication error on every request.
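To make the idea of a bidirectional translation layer more concrete, here is a minimal Python sketch. It is not the code from ollama.ts, which is not shown here. It assumes the upstream side accepts an OpenAI-style chat completions body, and it handles only non-streaming responses. The function names are hypothetical; the field names follow the public Ollama `/api/chat` and OpenAI chat completions formats.

```python
from typing import Any, Dict


def ollama_to_openai_request(body: Dict[str, Any]) -> Dict[str, Any]:
    """Translate an Ollama /api/chat request body into an OpenAI-style one."""
    options = body.get("options", {})
    translated: Dict[str, Any] = {
        "model": body["model"],
        "messages": [
            {"role": message["role"], "content": message["content"]}
            for message in body.get("messages", [])
        ],
        # Ollama streams by default when "stream" is omitted.
        "stream": body.get("stream", True),
    }
    if "temperature" in options:
        translated["temperature"] = options["temperature"]
    if "num_predict" in options:
        # Ollama's num_predict plays the role of OpenAI's max_tokens.
        translated["max_tokens"] = options["num_predict"]
    return translated


def openai_to_ollama_response(body: Dict[str, Any]) -> Dict[str, Any]:
    """Translate a non-streaming OpenAI-style response into Ollama's chat shape."""
    choice = body["choices"][0]
    message = choice.get("message", {})
    return {
        "model": body.get("model", ""),
        "message": {
            "role": message.get("role", "assistant"),
            "content": message.get("content") or "",
        },
        "done": True,
        "done_reason": choice.get("finish_reason") or "stop",
    }
```

A proxy built along these lines would apply the first function to each incoming client request and the second to each upstream reply. Streaming, tool calls, and error mapping (such as the 403 case mentioned above) are left out of this sketch.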

