Neural Network Tutorial 2 Perceptron Learning Algorithm

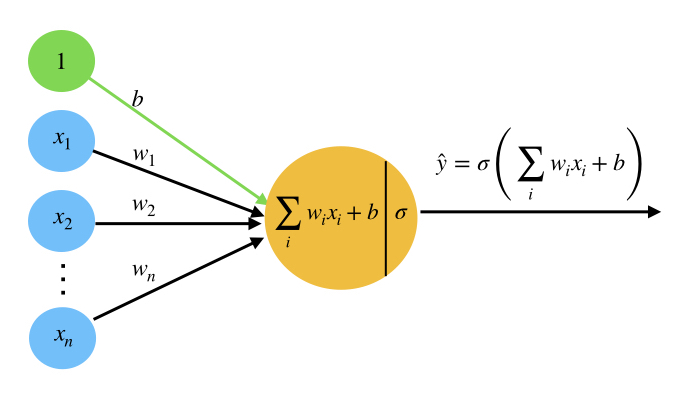

A perceptron is the simplest form of a neural network: it makes decisions by combining its inputs with weights and applying an activation function. It is mainly used for binary classification problems. An artificial neural network is composed of many artificial neurons that are linked together according to a specific network architecture. The objective of the neural network is to ...
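To make "combining inputs with weights and applying an activation function" concrete, here is a minimal sketch in plain Python. The function names (`step`, `perceptron_output`), the bias term, and the hand-picked AND weights are illustrative assumptions, not something taken from the original tutorial.

```python
def step(z):
    """Heaviside step activation: 1 if z >= 0, else 0 (an assumed choice)."""
    return 1 if z >= 0 else 0


def perceptron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through the step activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return step(z)


# Hand-picked (not learned) parameters that make the unit act like logical AND.
and_weights = [1.0, 1.0]
and_bias = -1.5

for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, perceptron_output(x, and_weights, and_bias))
```

With these parameters only the input `(1, 1)` produces a positive weighted sum, so it is the only input classified as 1. That is the binary decision the paragraph describes.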

What we are going to do in this post is delve into the concept of the perceptron and its learning algorithm. The perceptron is a fundamental building block of neural networks and is pivotal in machine learning and deep learning. If you're just getting into machine learning (as I am), you've invariably heard about the perceptron, a simple algorithm that laid the foundation for neural networks. Learn the perceptron learning algorithm step by step. Understand its models, key features, limitations, and how it can help advance your machine learning career. This tutorial provides a comprehensive understanding of perceptrons, covering their architecture, functionality, and Python implementation.

For deeper insight, this tutorial provides Python code (Python is a widely used language in machine learning) to implement these algorithms, along with informative visualisations.

Training is typically done using a learning algorithm such as the perceptron learning rule or backpropagation. The learning process presents the perceptron with labeled examples, for which the desired output is known.

Connectionism: explain intellectual abilities using connections between neurons (i.e., artificial neural networks). Examples: the perceptron and larger-scale neural networks.

The proof uses the fact that the number of mistakes the algorithm makes during training is bounded, irrespective of the number of observations. That means we can add more observations to the training dataset (in particular, the test observation) without changing much.
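The perceptron learning rule and the mistake count mentioned above can be sketched as follows. This is a minimal illustration under stated assumptions, not the tutorial's own implementation: the names `predict` and `train_perceptron`, the zero initialisation, the learning rate of 1.0, the step activation, and the AND dataset are all choices made here for the example.

```python
def predict(x, weights, bias):
    """Step-activated weighted sum: returns 1 if w.x + b >= 0, else 0."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if z >= 0 else 0


def train_perceptron(samples, labels, learning_rate=1.0, max_epochs=100):
    """Perceptron learning rule: update the weights only on misclassified examples.

    Returns the learned weights, the bias, and the total number of mistakes
    (updates) made during training.
    """
    weights = [0.0] * len(samples[0])  # assumed zero initialisation
    bias = 0.0
    mistakes = 0
    for _ in range(max_epochs):
        errors_this_epoch = 0
        for x, target in zip(samples, labels):
            error = target - predict(x, weights, bias)  # -1, 0, or +1
            if error != 0:
                # Move the decision boundary towards the misclassified example.
                weights = [w + learning_rate * error * xi
                           for w, xi in zip(weights, x)]
                bias += learning_rate * error
                mistakes += 1
                errors_this_epoch += 1
        if errors_this_epoch == 0:
            break  # every labeled example is classified correctly
    return weights, bias, mistakes


# Labeled examples for logical AND (a linearly separable toy dataset).
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [0, 0, 0, 1]

weights, bias, mistakes = train_perceptron(samples, labels)
print("weights:", weights, "bias:", bias, "mistakes:", mistakes)
print("predictions:", [predict(x, weights, bias) for x in samples])
```

The `mistakes` counter is the quantity the bound refers to: on linearly separable data such as this one, the number of updates is finite, so the loop stops once a full pass produces no errors. On data that is not linearly separable the rule does not converge, which is why the sketch also caps training at `max_epochs`.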
