Perceptron Learning Algorithm: Understanding the 1958 Neural Network

In 1958, psychologist Frank Rosenblatt at the Cornell Aeronautical Laboratory introduced the perceptron and went on to build the Mark I Perceptron, an actual physical machine that learned to recognize simple patterns in 20×20-pixel images. It implemented the algorithm in hardware, using photocells for input, potentiometers to hold the weights, and electric motors to adjust them during learning. Rosenblatt's perceptron showed that machines could learn from experience, a radical claim in 1958 that now feels obvious. Its early shortcomings spurred deeper theory, eventually leading to deep networks with many layers that classify images, translate languages, and generate code.

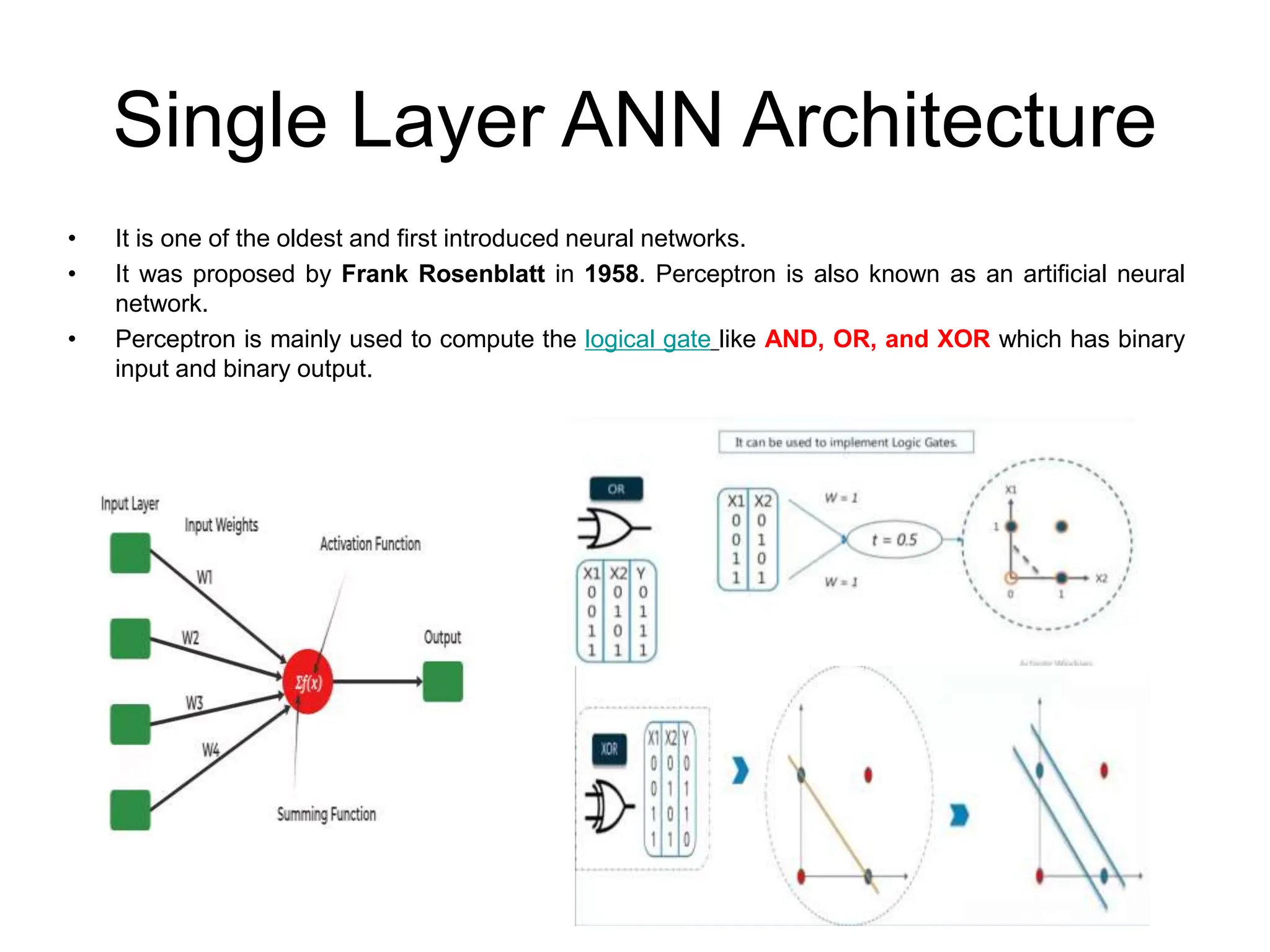

The perceptron algorithm is also called the single-layer perceptron, to distinguish it from the multilayer perceptron, which is something of a misnomer for a more complicated neural network. A perceptron is the simplest form of neural network: it makes a decision by computing a weighted sum of its inputs plus a bias and passing the result through an activation function, classically a step (threshold) function. It is mainly used for binary classification problems. Understanding perceptrons is key to grasping more complex neural network architectures. Let's explore what perceptrons are all about, including their history, how they work, and a practical code example. The perceptron concept was introduced by Frank Rosenblatt in 1958. This article is part of my series on the history of machine learning and signal processing, where I revisit the pioneers who laid the foundations of today's AI.
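To make the "weighted sum plus activation" description concrete, here is a minimal sketch of a perceptron's forward pass in plain Python. It assumes 0/1 outputs and a step function that returns 1 when the weighted sum is exactly zero (some texts use a strict inequality or ±1 labels instead). The weights and bias below are hand-picked, hypothetical values chosen so that the perceptron computes logical AND; nothing here is learned yet.

```python
from typing import Sequence


def step(z: float) -> int:
    """Heaviside step activation (assumed convention): 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0


def perceptron_predict(weights: Sequence[float], bias: float, x: Sequence[float]) -> int:
    """Combine the inputs with the weights, add the bias, then threshold."""
    if len(weights) != len(x):
        raise ValueError("weights and x must have the same length")
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return step(z)


# Hypothetical hand-picked parameters: only (1, 1) pushes the sum above zero.
and_weights = [1.0, 1.0]
and_bias = -1.5

for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, perceptron_predict(and_weights, and_bias, x))
```

Changing only the bias to -0.5 turns the same unit into logical OR, which illustrates that the weights and bias, not the structure, determine what a perceptron computes.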

The perceptron learning algorithm is a supervised method for binary classification: it updates its weights based on prediction errors on labeled examples, and it is guaranteed to converge to a separating boundary when the dataset is linearly separable. That requirement is also its main limitation. A single perceptron cannot solve problems that are not linearly separable, the classic example being XOR.

Rosenblatt's perceptron was one of the first artificial neural networks that could actually learn to classify patterns. This groundbreaking algorithm showed that machines could learn from examples rather than just follow rigid rules.

Understanding the perceptron model and its theory provides a good basis for understanding many of the key concepts in neural networks in general. A biological neural network, such as the one in our brain, is composed of a large number of nerve cells called neurons.
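The error-driven weight update and the XOR limitation mentioned above can both be seen in a short sketch. This is a minimal, textbook-style version of the perceptron learning rule, not Rosenblatt's original hardware procedure: for each misclassified example it applies w ← w + η(y − ŷ)x and b ← b + η(y − ŷ). The learning rate η = 1.0, zero initial weights, the 100-epoch cap, and the function names are all assumptions made for illustration.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[float], int]  # (input vector, label in {0, 1})


def predict(weights: List[float], bias: float, x: Sequence[float]) -> int:
    """Step activation on the weighted sum (assumed convention: z >= 0 -> 1)."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if z >= 0 else 0


def train_perceptron(
    data: List[Sample], lr: float = 1.0, max_epochs: int = 100
) -> Tuple[List[float], float, bool]:
    """Perceptron learning rule; returns (weights, bias, converged).

    converged is True once a full pass over the data makes no errors.
    """
    weights = [0.0] * len(data[0][0])  # assumed zero initialization
    bias = 0.0
    for _ in range(max_epochs):
        errors = 0
        for x, y in data:
            error = y - predict(weights, bias, x)  # -1, 0, or +1
            if error != 0:
                errors += 1
                # Nudge the boundary toward correctly classifying this sample.
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if errors == 0:
            return weights, bias, True
    return weights, bias, False


def accuracy(weights: List[float], bias: float, data: List[Sample]) -> float:
    correct = sum(predict(weights, bias, x) == y for x, y in data)
    return correct / len(data)


inputs = [(0, 0), (0, 1), (1, 0), (1, 1)]
and_data = list(zip(inputs, [0, 0, 0, 1]))  # linearly separable
xor_data = list(zip(inputs, [0, 1, 1, 0]))  # not linearly separable

for name, data in [("AND", and_data), ("XOR", xor_data)]:
    w, b, converged = train_perceptron(data)
    print(name, "converged:", converged, "accuracy:", accuracy(w, b, data))
```

On AND the loop reaches an error-free epoch, as the perceptron convergence theorem guarantees for linearly separable data. On XOR no straight line separates the two classes, so training runs out of epochs and accuracy stays below 100% no matter how long it runs.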
