Master Evaluation Techniques for LLM Apps

Evaluating LLM applications is crucial for AI teams to ensure the effectiveness and reliability of AI systems. Mastering evaluation techniques helps AI teams increase development speed, drive better business outcomes, and maintain a competitive edge. In this post, we explore robust evaluation techniques and best practices for assessing the accuracy and reliability of LLMs and RAG systems across use cases such as chatbots and AI agents.

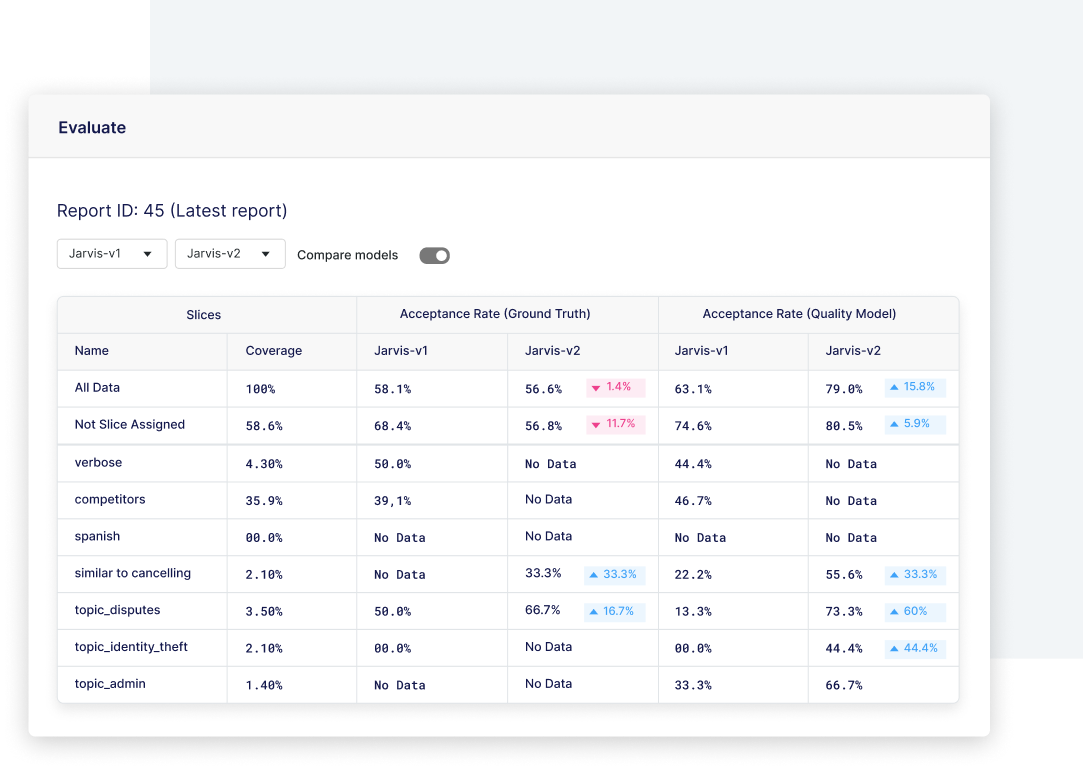

LLM Evaluation for Enterprise AI Applications (Snorkel AI)

Explore practical evaluation techniques, such as automated tools, LLM judges, and human assessments tailored for domain-specific use cases. Understand the best practices for LLM evaluation, as well as future directions such as advanced and multi-agent LLM systems. The following diagram includes many of the metrics used to evaluate LLM-generated content and shows how they can be categorized.

Figure 1: Evaluation metrics for LLM content, and how they can be categorized.

Understanding the main evaluation methods for LLMs

Let’s discuss the four main LLM evaluation methods along with their from-scratch code implementations to better understand their advantages and weaknesses. Whether you’re integrating a commercial LLM into your product or building a custom RAG system, this guide will help you understand how to develop and implement the LLM evaluation strategy that works best for your application.
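As a rough illustration of what an automated, from-scratch check can look like, the sketch below scores a model answer against a reference answer with exact match and token-level F1. This is not taken from any of the guides quoted above, and it is not claimed to be one of their four methods. The function names (`normalize`, `exact_match`, `token_f1`) and the normalization rules are assumptions chosen for the example.

```python
import re
from collections import Counter


def normalize(text: str) -> list[str]:
    """Lowercase, replace punctuation with spaces, and split on whitespace.

    Assumption: this simple normalization is good enough for short answers.
    """
    return re.sub(r"[^a-z0-9\s]", " ", text.lower()).split()


def exact_match(prediction: str, reference: str) -> bool:
    """True when the normalized token sequences are identical."""
    return normalize(prediction) == normalize(reference)


def token_f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall against the reference."""
    pred, ref = normalize(prediction), normalize(reference)
    if not pred or not ref:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred == ref)
    # Multiset intersection counts each shared token at most as often
    # as it appears in both answers.
    overlap = sum((Counter(pred) & Counter(ref)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred)
    recall = overlap / len(ref)
    return 2 * precision * recall / (precision + recall)
```

Overlap metrics like these are cheap and deterministic, but they only reward surface similarity, which is one reason guides like the ones quoted here also cover LLM judges and human assessment.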

LLM Evaluation Solutions (Deepchecks)

Unlock effective LLM evaluation. Explore key metrics, techniques, tools, and benchmarks to build reliable, accurate, and high-performing large language models. Whether you’re just starting with LLM evaluation or looking to refine your existing process, these insights and best practices can help you build a more effective and efficient evaluation system. In this guide, we’ll walk you through the principles and practices of LLM evaluation, shedding light on why traditional methods are falling short and how to do it right. By defining scope and objectives up front, teams can tailor their evaluation suites to operational goals and requirements, and through continuous evaluation and iterative refinement, those suites can mature alongside the generative AI systems they assess.
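The idea of fixing scope and objectives up front and then re-running the suite as the system changes can be sketched in a few lines. Everything here is hypothetical: `EvalCase`, `run_suite`, the keyword-based pass rule, and the 0.8 default threshold are illustrative assumptions, not part of any tool named above. `generate` stands in for whatever function calls your LLM application.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One test case: a prompt plus keywords the answer must contain."""
    prompt: str
    expected_keywords: list[str]


def run_suite(
    generate: Callable[[str], str],
    cases: list[EvalCase],
    pass_threshold: float = 0.8,  # assumed objective, set before running
) -> dict:
    """Run every case and compare the pass rate against the threshold."""
    if not cases:
        raise ValueError("The suite needs at least one case.")
    failures = []
    for case in cases:
        answer = generate(case.prompt).lower()
        # A case passes only if every expected keyword appears in the answer.
        if not all(kw.lower() in answer for kw in case.expected_keywords):
            failures.append(case.prompt)
    pass_rate = 1 - len(failures) / len(cases)
    return {
        "pass_rate": pass_rate,
        "passed": pass_rate >= pass_threshold,
        "failures": failures,
    }
```

Running the same suite after each prompt or model change gives a simple regression signal, and the list of failing prompts points at cases worth adding or refining in the next iteration.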
