Evaluating LLM Applications

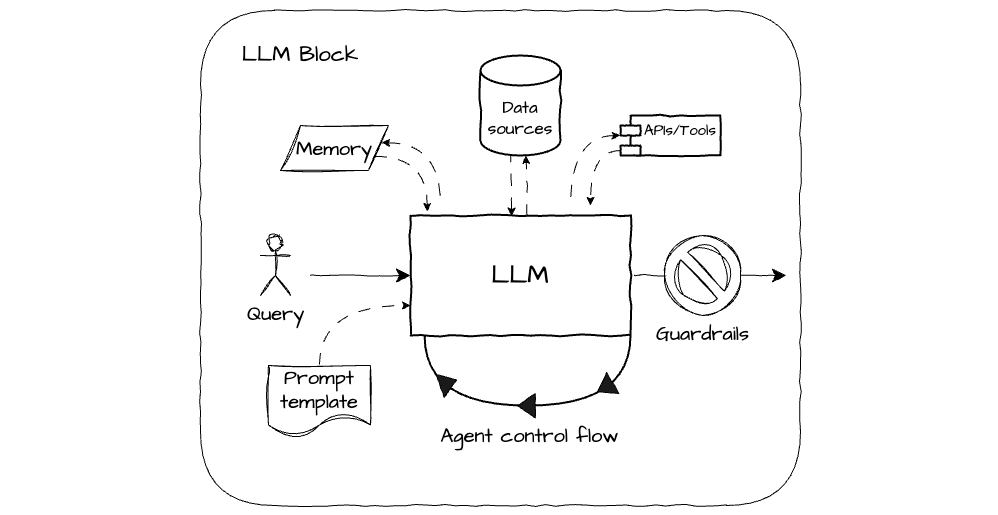

Guest post by Ksenia Se.

Explore practical evaluation techniques, such as automated tools, LLM judges, and human assessments tailored for domain-specific use cases. Understand the best practices for LLM evaluation, as well as future directions such as advanced and multi-agent LLM systems.

Evaluating large language models (LLMs) is important for ensuring they work well in real-world applications. Whether you are fine-tuning a model or enhancing a retrieval-augmented generation (RAG) system, understanding how to evaluate an LLM’s performance is key.


Recent advances in generative AI have led to remarkable interest in using systems that rely on large language models (LLMs) for practical applications. This guide provides a comprehensive overview of LLM evaluation, covering essential metrics, methodologies, and best practices to help you make informed decisions about which models best suit your needs.

Let’s discuss the four main LLM evaluation methods, along with their from-scratch code implementations, to better understand their advantages and weaknesses. There are four common ways of evaluating trained LLMs in practice: multiple choice, verifiers, leaderboards, and LLM judges, as shown in figure 1 below.
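To make two of these methods concrete, here is a minimal Python sketch of multiple-choice scoring and a simple numeric verifier. It is an illustration under stated assumptions, not the implementation from any particular benchmark: the function names (`extract_choice`, `multiple_choice_accuracy`, `verify_numeric_answer`), the answer-extraction patterns, and the tolerance are all hypothetical choices, and real harnesses tend to use stricter prompts and parsing.

```python
import re
from typing import Optional, Sequence


def extract_choice(response: str, letters: str = "ABCD") -> Optional[str]:
    """Pull an answer letter out of free-form model output (heuristic, assumed format)."""
    text = response.strip()
    # Prefer an explicit statement such as "answer is B" or "Answer: (c)".
    stated = re.search(
        rf"answer\s*(?:is|:)?\s*\(?([{letters}])\)?\b", text, flags=re.IGNORECASE
    )
    if stated:
        return stated.group(1).upper()
    # Otherwise accept a response that starts with a bare letter, e.g. "C) Paris".
    leading = re.match(rf"\(?([{letters}])\)?(?:[\s.):]|$)", text)
    return leading.group(1) if leading else None


def multiple_choice_accuracy(responses: Sequence[str], gold: Sequence[str]) -> float:
    """Fraction of responses whose extracted letter equals the gold letter."""
    if not gold or len(responses) != len(gold):
        raise ValueError("responses and gold must be non-empty and the same length")
    correct = sum(extract_choice(r) == g for r, g in zip(responses, gold))
    return correct / len(gold)


def verify_numeric_answer(response: str, expected: float, tol: float = 1e-6) -> bool:
    """Verifier: check that the last number in the response matches the expected value."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", response.replace(",", ""))
    return bool(numbers) and abs(float(numbers[-1]) - expected) <= tol
```

Unparseable responses count as wrong here, which is a deliberate design choice: a model that does not follow the answer format is penalized rather than silently skipped. Leaderboards and LLM judges are not sketched because they depend on external services and model calls.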

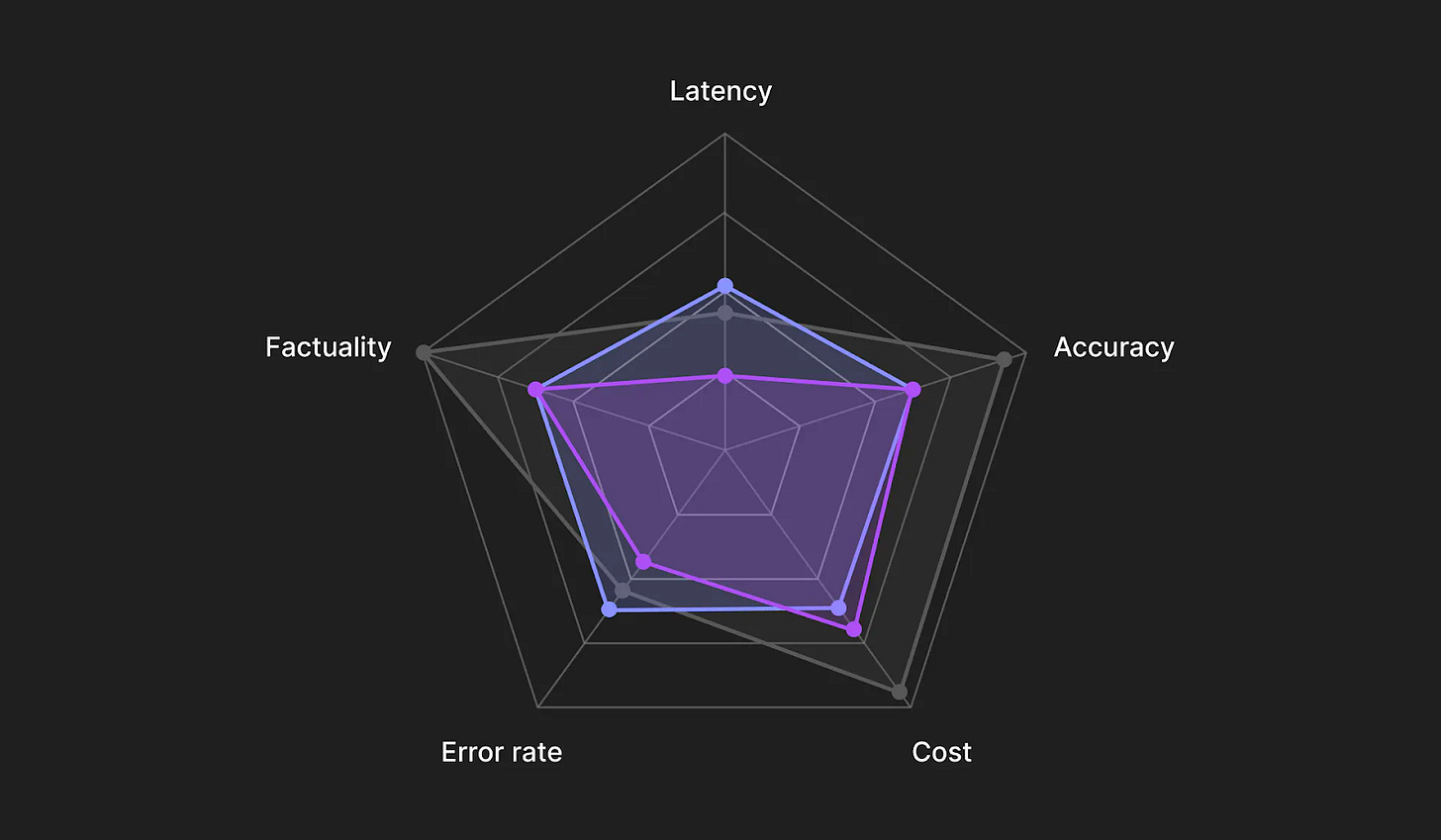

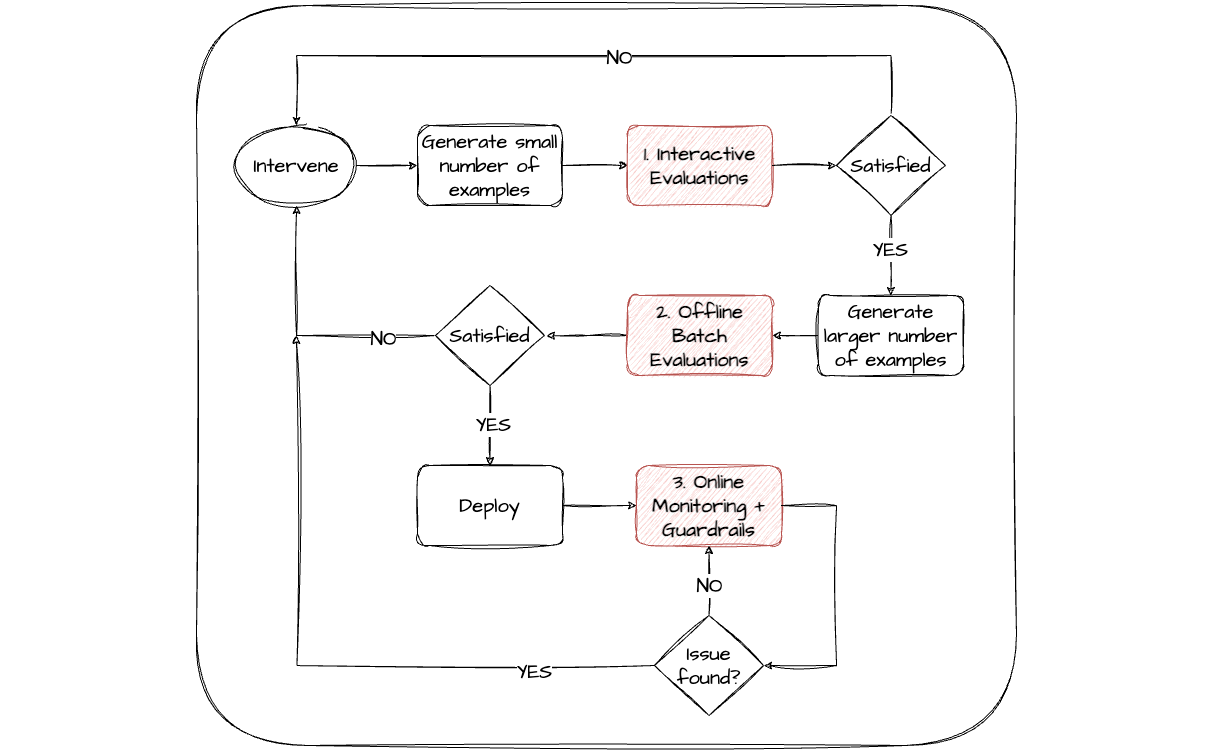

When evaluating LLM applications, the primary focus is on three key areas: the task, historical performance, and golden datasets. Task-level evaluation checks that the application performs well on specific use cases, while historical traces provide insight into how the application has evolved over time. Golden datasets are curated inputs paired with expected outputs, against which each version of the application can be scored.

In summary, evaluating LLM performance involves using benchmarks and metrics to measure accuracy, relevance, and reliability. By combining automatic metrics like BLEU and F1 score with human evaluation, developers can catch quality problems that either approach alone would miss. Proper evaluation helps improve model performance, reduce errors, and build trustworthy AI applications in real-world scenarios.

This whitepaper details the principles, approaches, and applications of evaluating LLMs, focusing on how to move from a minimum viable product (MVP) to production-ready systems. Whether you’re integrating a commercial LLM into your product or building a custom RAG system, this guide will help you understand how to develop and implement the LLM evaluation strategy that works best for your application.
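As a rough illustration of how a golden dataset, an automatic metric, and historical performance fit together, the Python sketch below scores an application with token-level F1 and compares the result against a previous run. Everything here is assumed for the example: the names `token_f1`, `evaluate_on_golden_set`, and `has_regressed`, the `input`/`expected` keys, whitespace tokenization, and the 0.02 tolerance are hypothetical, and token-level F1 is only one of several ways an "F1 score" can be defined for text.

```python
from collections import Counter
from typing import Callable, Dict, Sequence


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a prediction and a reference (whitespace tokens, lowercased)."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        # Two empty strings count as a match; one empty side counts as a miss.
        return float(pred_tokens == ref_tokens)
    # Multiset intersection counts each shared token at most as often as it appears in both.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


def evaluate_on_golden_set(
    app: Callable[[str], str], golden_set: Sequence[Dict[str, str]]
) -> float:
    """Mean token F1 of the application's outputs over a golden dataset."""
    if not golden_set:
        raise ValueError("golden_set must not be empty")
    scores = [token_f1(app(ex["input"]), ex["expected"]) for ex in golden_set]
    return sum(scores) / len(scores)


def has_regressed(current: float, previous: float, tolerance: float = 0.02) -> bool:
    """Flag a drop larger than the tolerance relative to a previous run's score."""
    return current < previous - tolerance
```

In use, `app` would wrap whatever calls the model, and the score from each release would be stored so that `has_regressed` can compare the newest run with the last one. Automatic overlap metrics like this reward surface similarity, so a human review of low-scoring and borderline examples remains useful.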
