How to Debug vLLM Source Code on RunPod with VS Code Remote

LLMOps: RunPod Usage Summary (2024 Version), VS Code Integration

Set up remote development on your pod using VS Code or Cursor. This guide explains how to connect directly to your pod through VS Code or Cursor using the Remote - SSH extension, so you can work within your pod's volume directories as if the files were stored on your local machine.

The vLLM worker is compatible with OpenAI's API for the routes it supports, and you can use it with any OpenAI codebase by changing only three lines in total. The supported routes are chat completions and models, with both streaming and non-streaming responses.
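A minimal sketch of what "changing three lines" can look like with the official `openai` Python client: the API key, the base URL, and the model name. The base URL pattern below and the environment variable names are assumptions for illustration, not taken from the text above, so check them against your own endpoint before relying on them.

```python
import os


def runpod_openai_settings(endpoint_id: str, api_key: str, model: str) -> dict:
    """Collect the three values that differ from a stock OpenAI setup."""
    return {
        "api_key": api_key,
        # Assumed URL pattern for a RunPod serverless vLLM endpoint.
        "base_url": f"https://api.runpod.ai/v2/{endpoint_id}/openai/v1",
        "model": model,
    }


def ask(settings: dict, prompt: str) -> str:
    """Send one non-streaming chat completion through the OpenAI client."""
    # Imported lazily so the settings helper works without the package installed.
    from openai import OpenAI

    client = OpenAI(api_key=settings["api_key"], base_url=settings["base_url"])
    response = client.chat.completions.create(
        model=settings["model"],
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


def main() -> None:
    # Hypothetical environment variable names; use whatever your setup defines.
    settings = runpod_openai_settings(
        endpoint_id=os.environ["RUNPOD_ENDPOINT_ID"],
        api_key=os.environ["RUNPOD_API_KEY"],
        model=os.environ["VLLM_MODEL_NAME"],
    )
    print(ask(settings, "Say hello in one sentence."))
```

Calling `main()` with the three variables set sends a single request. Everything else in an existing OpenAI codebase (message format, response parsing) should stay unchanged if the endpoint behaves as described.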

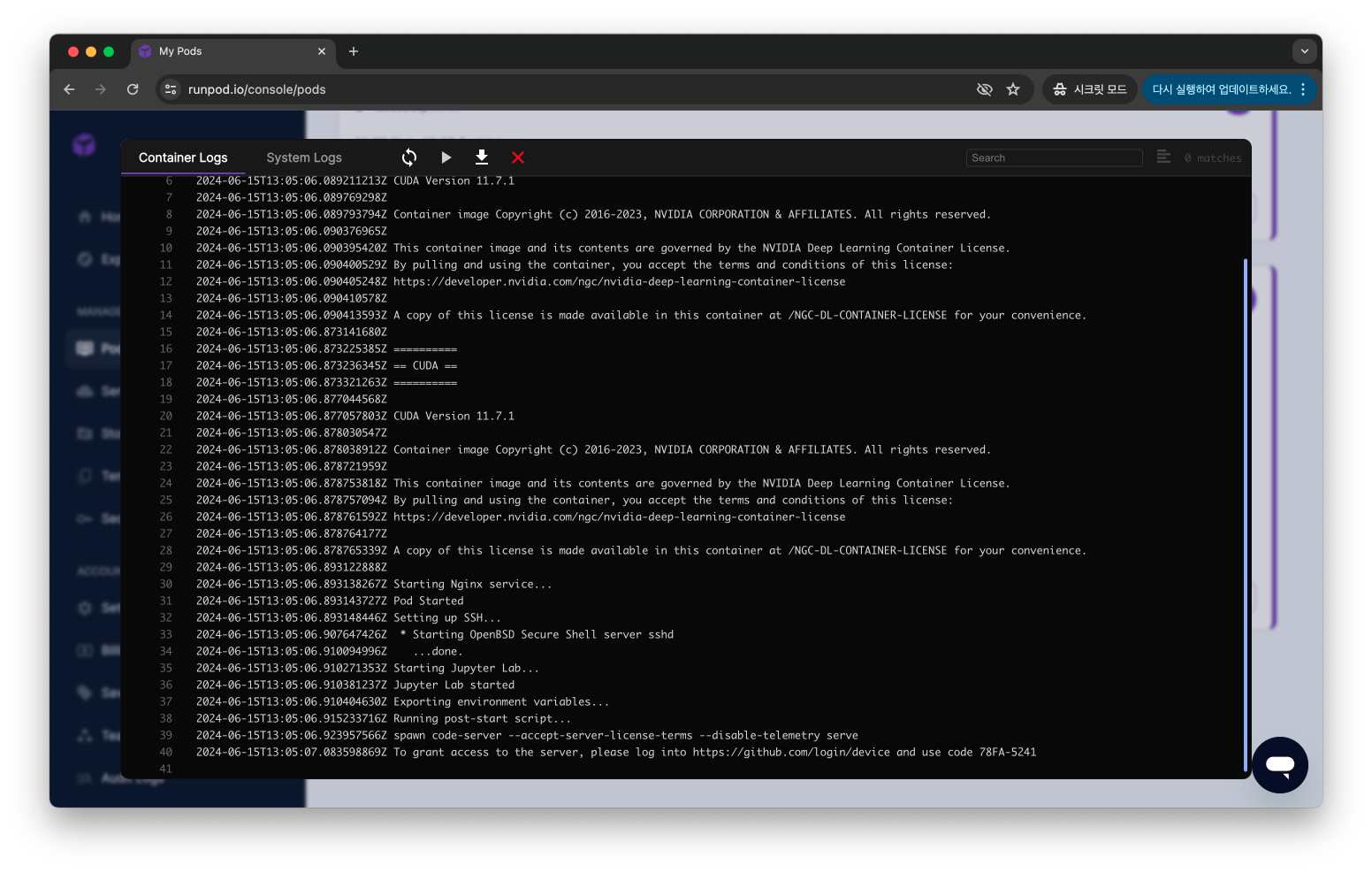

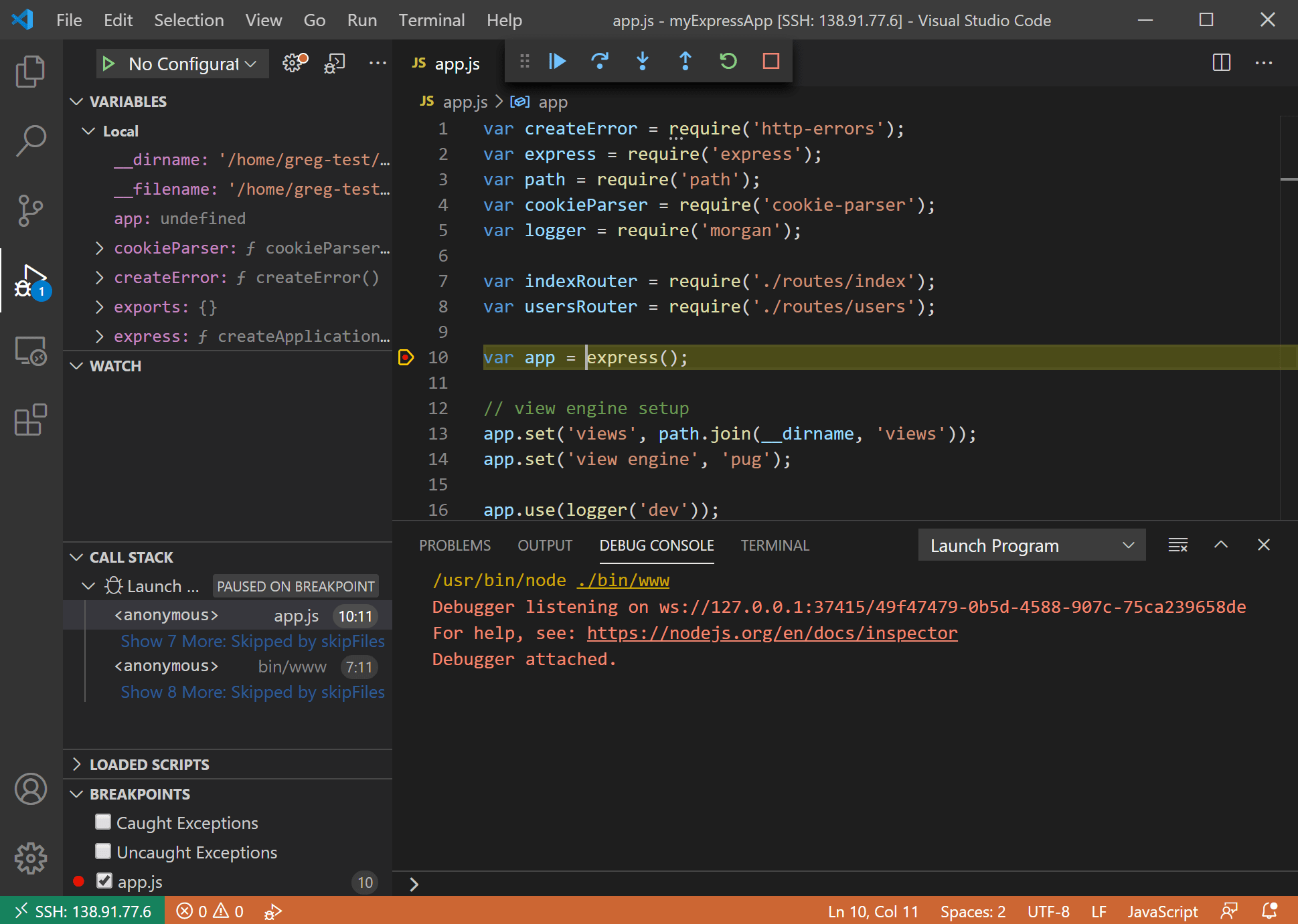

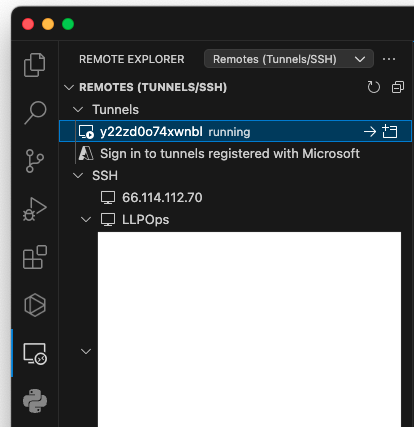

Remote Development Over SSH

This container allows users to develop projects remotely on RunPod's GPU infrastructure while using the familiar VS Code interface locally. This page documents the configuration and usage of the VS Code Server container specifically designed for the RunPod platform.

Many engineers want to join AI infra, but they struggle with complex environments or don't know how to interact with top-tier projects like vLLM. In my cours...

Many tutorials show you how to pip install vllm and run a quick inference test, then leave you to figure out Docker packaging, GPU selection, network volume caching, and the server flags that actually determine whether your endpoint handles 5 concurrent requests or 500.

It seems that vLLM's execution is managed through a compiled engine, which makes it challenging to debug directly in an IDE using breakpoints. When debugging custom functions added to vLLM, is there any way to disable compilation and set breakpoints as in normal Python scripts?
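One possible approach to the breakpoint question, sketched under assumptions: run vLLM offline from a small script, ask for eager execution with `enforce_eager=True`, keep the engine in the calling process, and wait for a debugger with `debugpy`. The `VLLM_ENABLE_V1_MULTIPROCESSING` variable is assumed to exist in the installed vLLM version, and whether `enforce_eager` also switches off `torch.compile` (rather than only CUDA graph capture) depends on the version. The model name and port are placeholders.

```python
import os


def debug_environment() -> dict:
    """Environment overrides intended to keep vLLM in one debuggable process."""
    return {
        # Assumption: recent vLLM versions read this variable and, when it is
        # "0", run the engine core in the calling process, not a subprocess.
        "VLLM_ENABLE_V1_MULTIPROCESSING": "0",
    }


def debug_llm_kwargs(model: str) -> dict:
    """Constructor arguments for vllm.LLM aimed at plain eager execution."""
    return {
        "model": model,
        # Requests eager PyTorch execution and skips CUDA graph capture.
        "enforce_eager": True,
    }


def main(model: str = "facebook/opt-125m", port: int = 5678) -> None:
    # Set the overrides before vllm is imported so it sees them at import time.
    os.environ.update(debug_environment())

    import debugpy

    # Listen locally on the pod; VS Code connected over SSH can attach here.
    debugpy.listen(("127.0.0.1", port))
    print(f"Waiting for debugger on port {port}...")
    debugpy.wait_for_client()

    from vllm import LLM, SamplingParams

    llm = LLM(**debug_llm_kwargs(model))
    outputs = llm.generate(["Hello, my name is"], SamplingParams(max_tokens=16))
    print(outputs[0].outputs[0].text)
```

To use it, call `main()` on the pod, then attach from VS Code with a Python "attach" debug configuration pointing at `localhost` and the same port. Breakpoints set in an editable install of vLLM (`pip install -e .` from the source tree) should then be reachable, provided the code path really runs in this process.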

This guide will walk you through using the VS Code Server template on RunPod, enabling you to leverage GPU instances for your development needs. By the end of this tutorial, you will be able to interact with your code directly from your locally installed VS Code.

Shows how to create a seamless cloud development environment for AI by using VS Code Remote with RunPod. Explains how to connect VS Code to RunPod's GPU instances so you can write and run machine learning code in the cloud with a local-like experience.

Fortunately, you can connect your VS Code IDE to your RunPod instance via SSH. This will allow you to edit files and code on your remote instance directly from VS Code.
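The SSH connection usually comes down to one `Host` entry in `~/.ssh/config`, which the Remote - SSH extension then lists as a target. The helper below is a small sketch that renders such an entry. The alias, IP address, port, user, and key path are all hypothetical placeholders; take the real values from your pod's connection details.

```python
def render_ssh_host(
    alias: str,
    hostname: str,
    port: int,
    user: str = "root",
    identity_file: str = "~/.ssh/id_ed25519",
) -> str:
    """Render one Host block in the format used by ~/.ssh/config."""
    lines = [
        f"Host {alias}",
        f"    HostName {hostname}",
        f"    User {user}",
        f"    Port {port}",
        f"    IdentityFile {identity_file}",
    ]
    return "\n".join(lines) + "\n"


# Placeholder values: a documentation-range IP and an arbitrary port.
entry = render_ssh_host("runpod-vllm", "203.0.113.10", 22022)
print(entry)
```

After appending the printed block to `~/.ssh/config`, the alias (`runpod-vllm` here) should appear when you run "Remote-SSH: Connect to Host..." in VS Code, and `ssh runpod-vllm` in a terminal is a quick way to confirm the entry works first.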
