LLMOps: RunPod Usage Guide (2024 Edition) and VSCode Integration

When selecting a GPU on RunPod to host an LLM, balance VRAM capacity, tensor processing capability, cost, and the inference performance you need. RunPod provides AI infrastructure with on-demand GPUs and serverless compute, so you can run training, inference, and batch workloads in the cloud.
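To make the VRAM side of that trade-off concrete, here is a rough sizing sketch. It uses the common rule of thumb that weight memory is about parameter count times bytes per parameter (2 bytes for FP16/BF16, 1 for 8-bit, 0.5 for 4-bit). The 20% overhead for KV cache and activations, the helper names, and the short GPU list are illustrative assumptions, not RunPod figures; check RunPod's current GPU catalogue before choosing.

```python
"""Rough VRAM sizing for hosting an LLM (rule-of-thumb sketch, not a guarantee)."""
from typing import Optional

# Bytes per parameter for common weight precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}

# Illustrative GPU memory sizes in GB (assumption: verify against RunPod's GPU list).
GPU_VRAM_GB = {
    "RTX 4090": 24,
    "RTX A6000": 48,
    "A100 80GB": 80,
    "H100 80GB": 80,
}


def estimate_vram_gb(params_billion: float, precision: str = "fp16",
                     overhead: float = 1.2) -> float:
    """Estimate GB needed: weight size times an assumed overhead factor
    (default 20%) for KV cache and activations."""
    weights_gb = params_billion * BYTES_PER_PARAM[precision]
    return weights_gb * overhead


def smallest_fitting_gpu(required_gb: float) -> Optional[str]:
    """Return the GPU with the least VRAM that still fits, or None if none fit."""
    fitting = [(vram, name) for name, vram in GPU_VRAM_GB.items() if vram >= required_gb]
    return min(fitting)[1] if fitting else None


if __name__ == "__main__":
    for size, prec in [(7, "fp16"), (70, "fp16"), (70, "int4")]:
        need = estimate_vram_gb(size, prec)
        print(f"{size}B {prec}: ~{need:.1f} GB -> {smallest_fitting_gpu(need)}")
```

Treat the overhead factor as a tunable assumption: long contexts and large batch sizes grow the KV cache well beyond 20%, and a model that does not fit on one card needs quantization or multiple GPUs.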

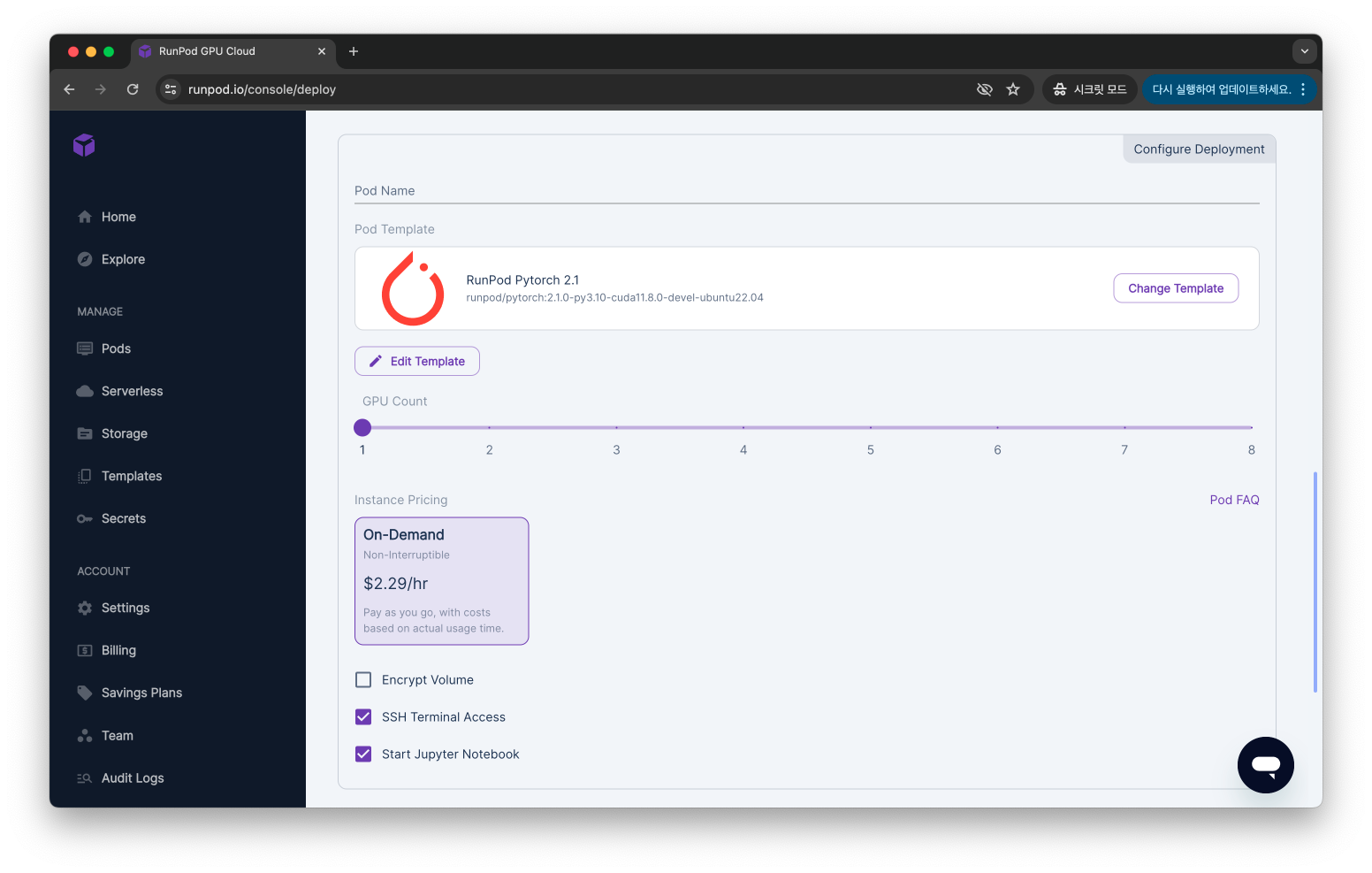

RunPod is a great tool for exploring the capabilities of LLMs without access to the latest GPUs. In this post, I document how to run LLMs on RunPod, share some tips for overcoming common issues, and walk through deploying and using any open-source large language model (LLM) on RunPod's GPU services. For those pursuing AI projects without enterprise cloud budgets, RunPod is not a theoretical solution but a practical, hands-on shift in how compute power is accessed. This post also provides technical instructions for setting up a RunPod GPU cloud environment for LLM fine-tuning experiments, covering RunPod account configuration, GPU instance selection, environment preparation, and integration with the fine-tuning workflow.
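For environment preparation with VSCode, one common approach is to connect through the Remote-SSH extension, which reads host entries from your OpenSSH client config. The sketch below builds such an entry. It assumes your pod exposes SSH over TCP and shows a public IP and port in its connection details, and that you log in as `root` with a local key at `~/.ssh/id_ed25519`; the alias `runpod-llm`, the IP (a documentation-range address), and the port are hypothetical placeholders.

```python
"""Generate an OpenSSH config entry so VSCode Remote-SSH can open a RunPod pod."""
from pathlib import Path


def ssh_host_block(alias: str, host: str, port: int,
                   user: str = "root",
                   identity_file: str = "~/.ssh/id_ed25519") -> str:
    """Build a Host block in standard OpenSSH client config syntax.
    The default user and key path are assumptions; adjust to your pod."""
    lines = [
        f"Host {alias}",
        f"    HostName {host}",
        f"    Port {port}",
        f"    User {user}",
        f"    IdentityFile {identity_file}",
    ]
    return "\n".join(lines) + "\n"


def append_to_ssh_config(block: str,
                         config_path: Path = Path.home() / ".ssh" / "config") -> None:
    """Append the block to the SSH config file, creating ~/.ssh if needed."""
    config_path.parent.mkdir(mode=0o700, parents=True, exist_ok=True)
    with config_path.open("a", encoding="utf-8") as f:
        f.write("\n" + block)


if __name__ == "__main__":
    # Placeholder values: copy the real IP and port from your pod's connection details.
    block = ssh_host_block("runpod-llm", "203.0.113.10", 22022)
    print(block)  # review it first, then call append_to_ssh_config(block)
```

After the entry is in place, run "Remote-SSH: Connect to Host..." from the VSCode command palette and pick the alias. Pod IPs and ports can change when a pod is restarted, so regenerate the entry if the connection fails.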

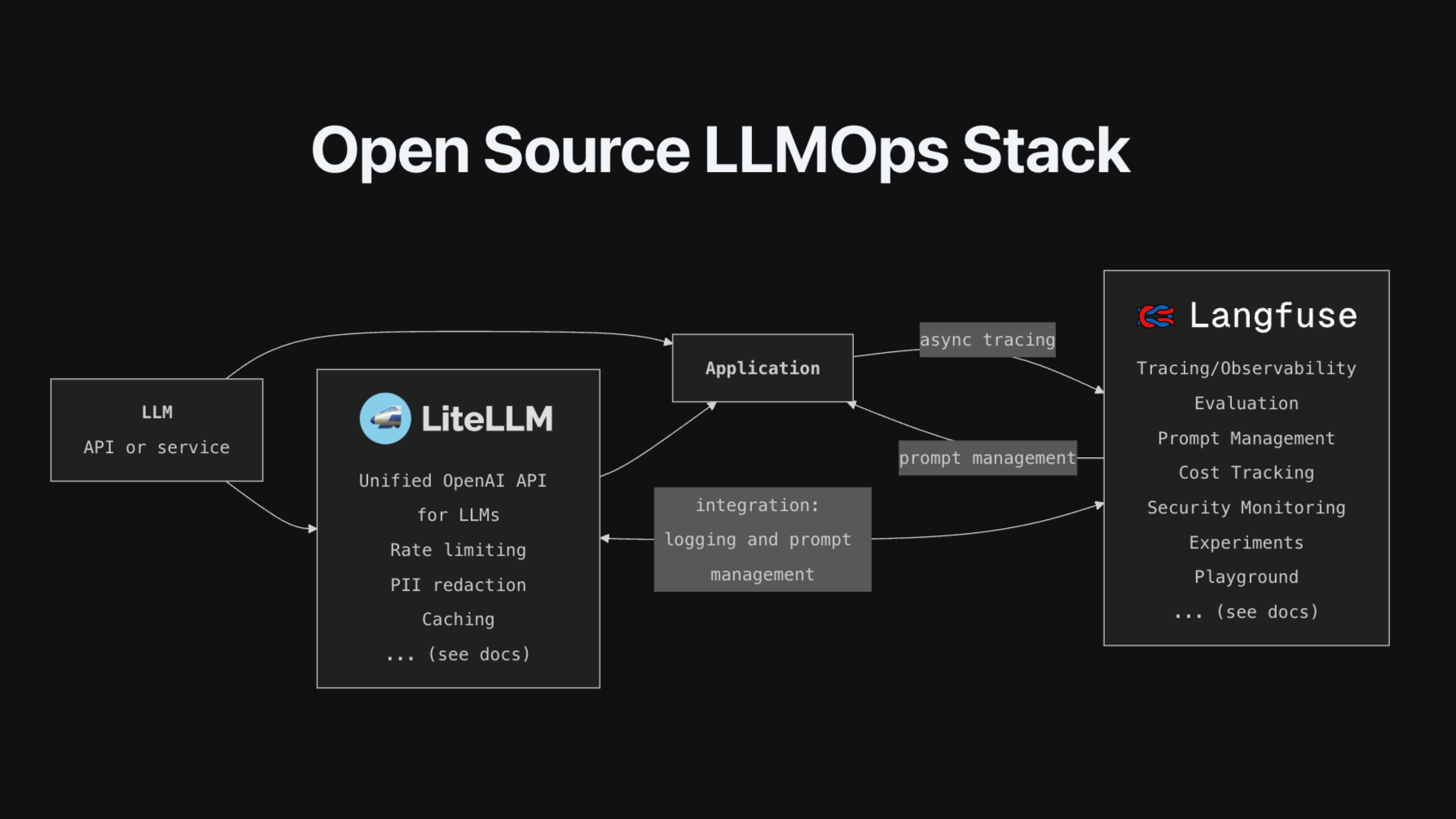

RunPod provides an easy way to deploy and manage your own LLM with minimal setup, giving you control over your data and costs. This guide covers the essentials of setting up an LLM on RunPod, from installation to optimization, so you can get started quickly. RunPod is well suited to resource-intensive large language models because of its affordable pricing and wide range of GPU options. The easiest way to deploy your own OpenAI-compatible LLM in about two minutes is to use RunPod as the infrastructure and vLLM for inference. RunPod is a cloud platform that provides affordable, on-demand GPU and CPU compute for AI, machine learning, and development workflows.

In conclusion, LLMOps plays a crucial role in streamlining the deployment and management of large language models for real-world applications. Azure Machine Learning offers a comprehensive platform for implementing LLMOps and addressing the risks and challenges associated with LLMs.
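Because vLLM exposes an OpenAI-compatible API, any client that speaks the `/chat/completions` route can call the deployed model. The stdlib-only sketch below assumes a RunPod serverless vLLM endpoint whose base URL follows the pattern `https://api.runpod.ai/v2/<endpoint_id>/openai/v1` and accepts your RunPod API key as a Bearer token; treat that URL pattern as an assumption to verify in your endpoint's dashboard. The environment variable names are hypothetical.

```python
"""Call an OpenAI-compatible chat endpoint served by vLLM (stdlib only)."""
import json
import os
import urllib.request

# Assumption: base URL format of a RunPod serverless vLLM endpoint. For a vLLM
# server running directly on a pod, use something like http://<pod-host>:8000/v1.
BASE_URL_TEMPLATE = "https://api.runpod.ai/v2/{endpoint_id}/openai/v1"


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str,
                       max_tokens: int = 256) -> urllib.request.Request:
    """Build a POST request for the OpenAI-style /chat/completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def chat(req: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # Hypothetical environment variable names; set all three before running.
    endpoint_id = os.environ.get("RUNPOD_ENDPOINT_ID")
    api_key = os.environ.get("RUNPOD_API_KEY")
    model = os.environ.get("MODEL_NAME")
    if endpoint_id and api_key and model:
        base = BASE_URL_TEMPLATE.format(endpoint_id=endpoint_id)
        print(chat(build_chat_request(base, api_key, model, "Hello!")))
```

Building the request separately from sending it keeps the payload easy to inspect and test offline. If you prefer the official `openai` Python package, pointing its client at the same base URL via `base_url` should work the same way, since that is the point of vLLM's OpenAI-compatible server.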

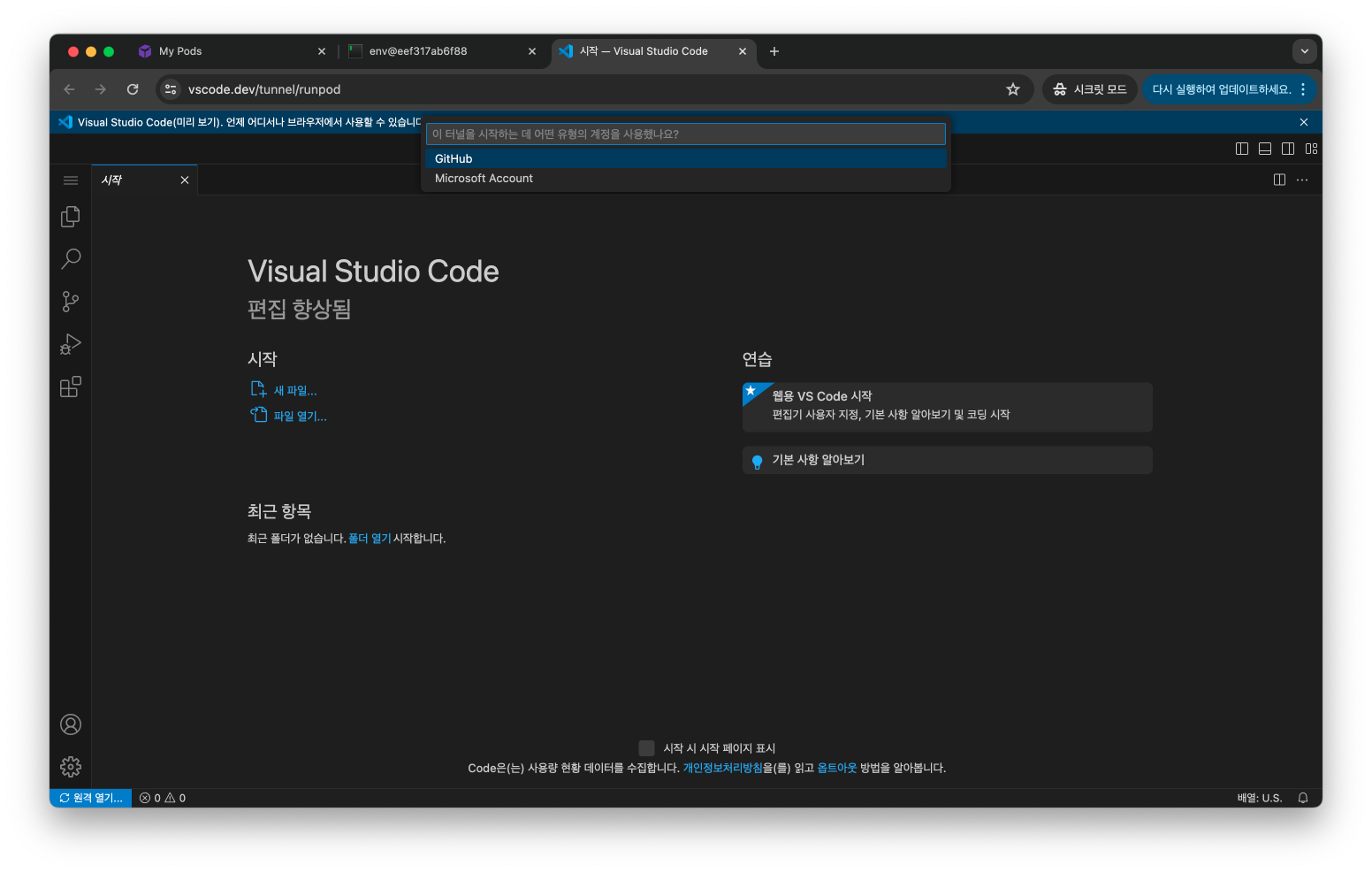
