GitHub allenai/molmo2: Code for the Molmo2 Vision Language Model

This repository is for training and using Ai2's open vision language models, Molmo2 and MolmoPoint. Molmo2 is state of the art among open-source models and demonstrates exceptional new capabilities in point-driven grounding in single-image, multi-image, and video tasks.

Open, state-of-the-art models for image and video understanding are critical for building systems that anyone can reuse, customize, and improve. We invite you to download the Molmo2 models and datasets, explore our cookbooks and examples, and read the technical report.

Molmo2-8B is based on Qwen3-8B and uses SigLIP 2 as its vision backbone. Among models with open weights and open data, it outperforms the others on short videos, counting, and captioning, and is competitive on long videos. Ai2 is committed to open science, and the Molmo2 datasets are publicly available.

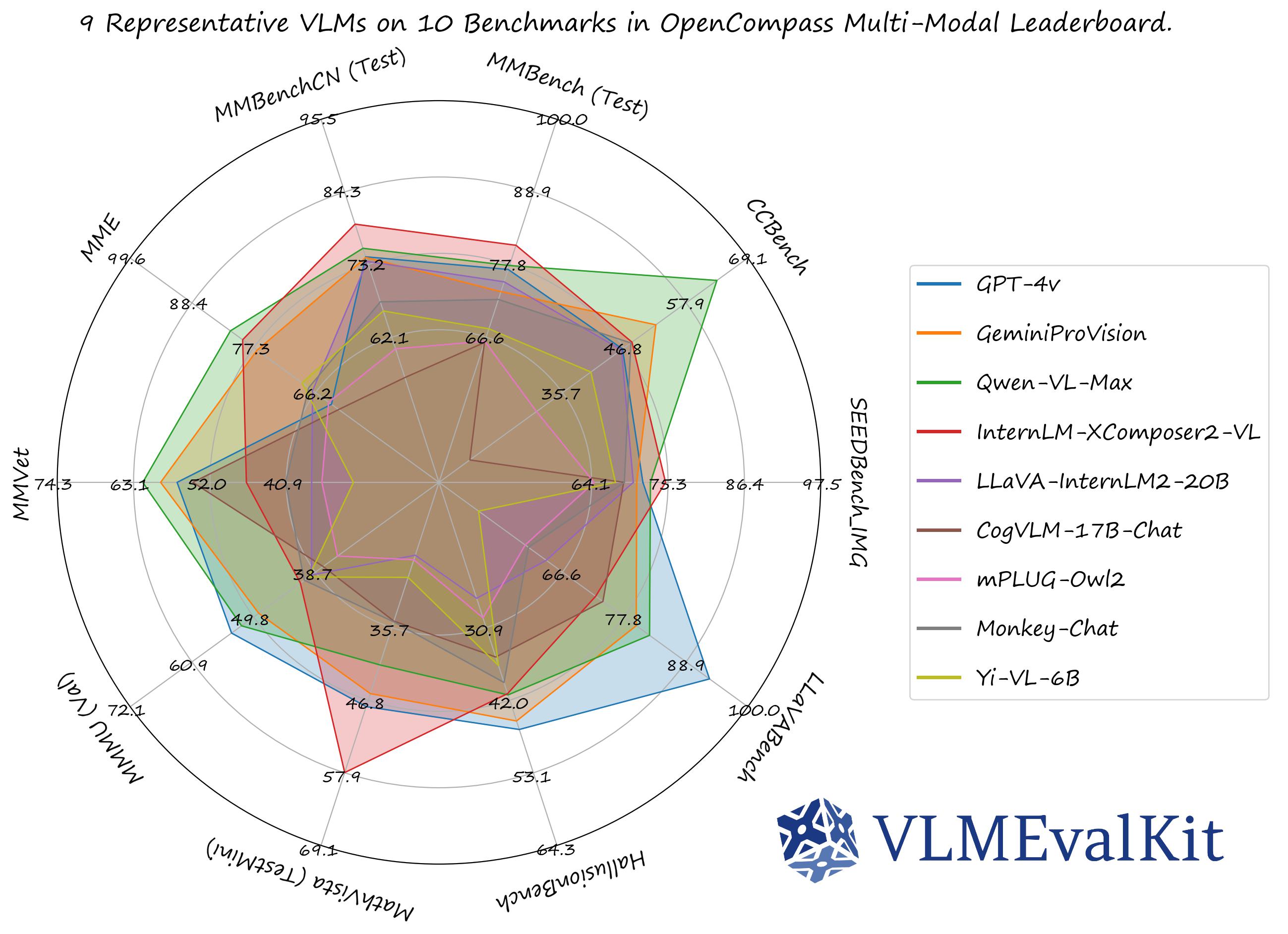

Molmo2 is a multimodal model that processes images and videos alongside text to perform visual question answering and reasoning tasks. Molmo2-8B is an open vision language model developed by the Allen Institute for AI (Ai2) as part of the Molmo2 family, supporting image, video, and multi-image understanding and grounding. It has also been integrated into the SAGE framework.
