Issue In Using Given Code For Pretraining The Model Issue 19

First of all, thanks for pointing out the problem with the validation output of the pretraindataset. I revised the code for training and testing after I pretrained the model, so there might be some incompatibilities with the pretraining process.

One of the base datasets, the source dataset, is used to train a machine learning model. The trained model then forecasts a time series (TS) in a target dataset. The source and target datasets are distinct: they do not contain time series whose values are linear transformations of each other.
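The distinctness condition above can be checked mechanically. The following is a minimal sketch, not the method used by the original authors: it assumes the series are aligned and of equal length, and it treats two series as linear transformations of each other when their Pearson correlation has absolute value 1 within a tolerance. The function names `is_linear_transform` and `datasets_are_distinct` and the `tol` parameter are hypothetical, introduced only for illustration.

```python
from math import sqrt


def is_linear_transform(x, y, tol=1e-9):
    """Return True if y is approximately a*x + b for some a != 0.

    Assumption: x and y are aligned, equal-length numeric sequences.
    Series of different length, or constant series, are reported as
    not linearly related.
    """
    n = len(x)
    if n != len(y) or n < 2:
        return False
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return False
    r = sxy / sqrt(sxx * syy)  # Pearson correlation coefficient
    return abs(abs(r) - 1.0) <= tol


def datasets_are_distinct(source, target, tol=1e-9):
    """Return True if no (source, target) pair is linearly related."""
    return not any(
        is_linear_transform(s, t, tol) for s in source for t in target
    )


# Hypothetical toy data for illustration only.
base = [1.0, 2.0, 4.0, 7.0]
scaled = [3 * v + 5 for v in base]   # a linear transformation of base
other = [1.0, 4.0, 9.0, 2.0]         # unrelated values
```

With these toy values, `datasets_are_distinct([base], [scaled])` is false and `datasets_are_distinct([base], [other])` is true. Real datasets with noisy or differently sampled series would need a looser tolerance and an alignment step, which this sketch leaves out.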

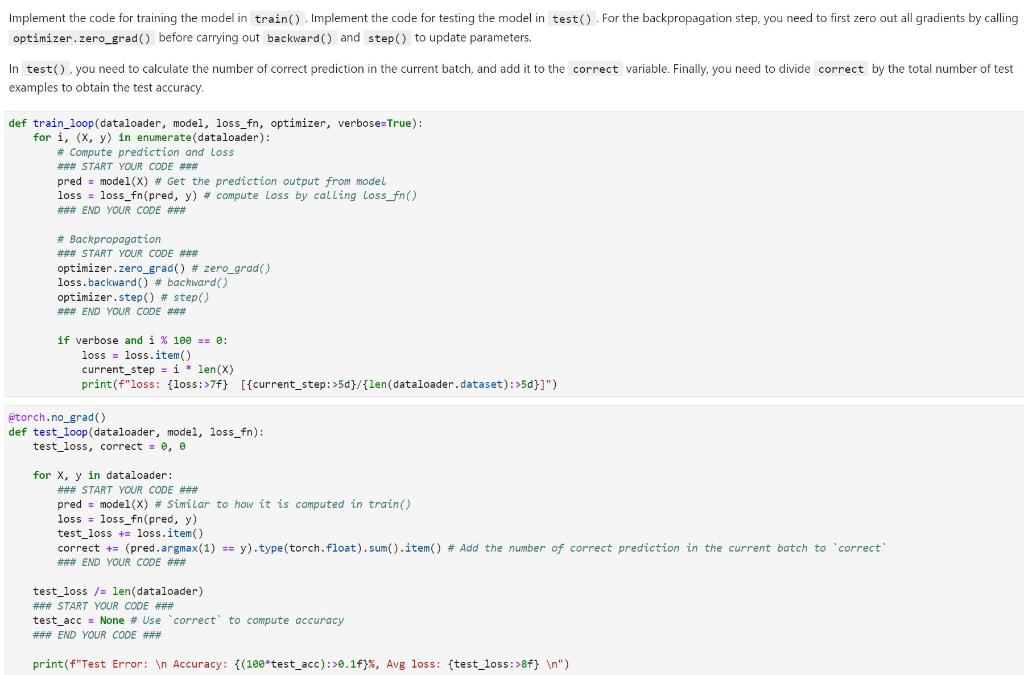

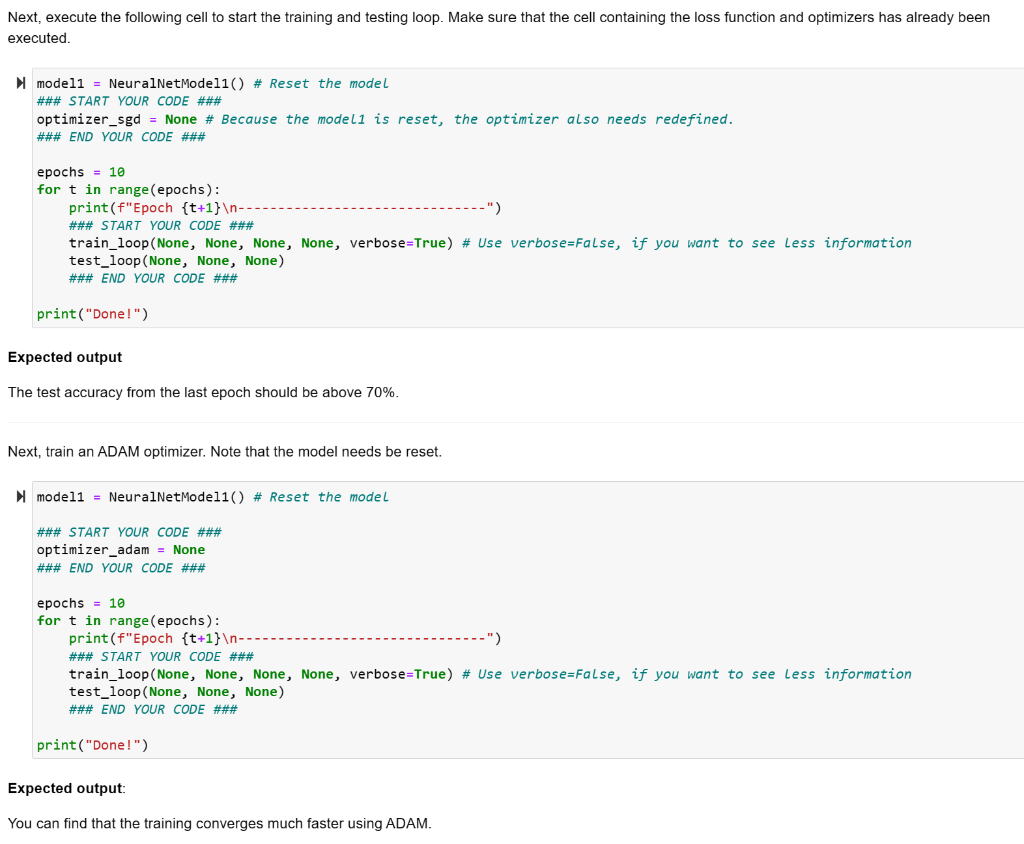

In this section, we will show you what you can do to debug these kinds of issues. The problem when you encounter an error in `trainer.train()` is that it could come from multiple sources, as the `Trainer` usually puts together lots of things.

My main goal in using AutoTrain was to overcome the issue of limited computational resources. Unfortunately, it seems that I cannot escape renting a cloud-based GPU.

If the model isn't retrained regularly, its performance will degrade. Solution: implement continuous monitoring and periodic retraining to adapt to changing data distributions.

This article is here to help by walking you through the steps to debug machine learning models written in Python using the PyTorch library.
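The advice above about continuous monitoring and periodic retraining can be made concrete with a simple drift check. This is a minimal sketch under stated assumptions, not a prescribed procedure: it monitors a single numeric feature, compares the mean of a recent window against a reference window from training time, and flags retraining when the shift exceeds a threshold measured in standard errors. The names `mean_shift_score` and `should_retrain` and the default threshold of 3.0 are hypothetical choices for illustration.

```python
from statistics import mean, stdev


def mean_shift_score(reference, recent):
    """Shift of the recent mean, in units of the reference standard error."""
    if len(reference) < 2 or not recent:
        raise ValueError("need at least 2 reference values and 1 recent value")
    sd = stdev(reference)
    if sd == 0:
        # Constant reference: any change in the mean counts as unbounded drift.
        return 0.0 if mean(recent) == mean(reference) else float("inf")
    standard_error = sd / len(recent) ** 0.5
    return abs(mean(recent) - mean(reference)) / standard_error


def should_retrain(reference, recent, threshold=3.0):
    """Flag retraining when the recent window drifts past the threshold."""
    return mean_shift_score(reference, recent) > threshold


# Hypothetical feature values for illustration only.
reference_window = [10.0, 11.0, 9.0, 10.5, 9.5, 10.0]
stable_window = [10.2, 9.8, 10.1, 9.9]
drifted_window = [13.0, 12.5, 13.5, 13.0]
```

Here `should_retrain(reference_window, stable_window)` is false, while `should_retrain(reference_window, drifted_window)` is true. A production monitor would typically track several features and the model's error metrics as well; a mean-shift test on one feature is only the simplest starting point.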

But as far as I know, if I compile a model, all of the previous training will be lost, and the model will be trained from scratch. I don't want this to happen because I want to use this model for further retraining.

In this work, we investigate how different repository processing strategies affect in-context learning in OpenCoder, a 1.5B-parameter model. We extend its context window from 4,096 to 16,384 tokens by training on an additional 1B tokens of curated repository-level data.

So, after being fascinated by ChatGPT, I decided to take a deep exploration into how it works, which eventually led me to develop a transformer model from scratch.

To isolate the model downloading and loading issue, you can use the `--load-format dummy` argument to skip loading the model weights. This way, you can check whether model downloading and loading is the bottleneck.
