GitHub allenai/molmo: Code for the Molmo Vision Language Model

Paper summary: Molmo and PixMo: Open Weights and Open Data for State of the

Code for the Molmo vision language model. Contribute to allenai/molmo development by creating an account on GitHub.

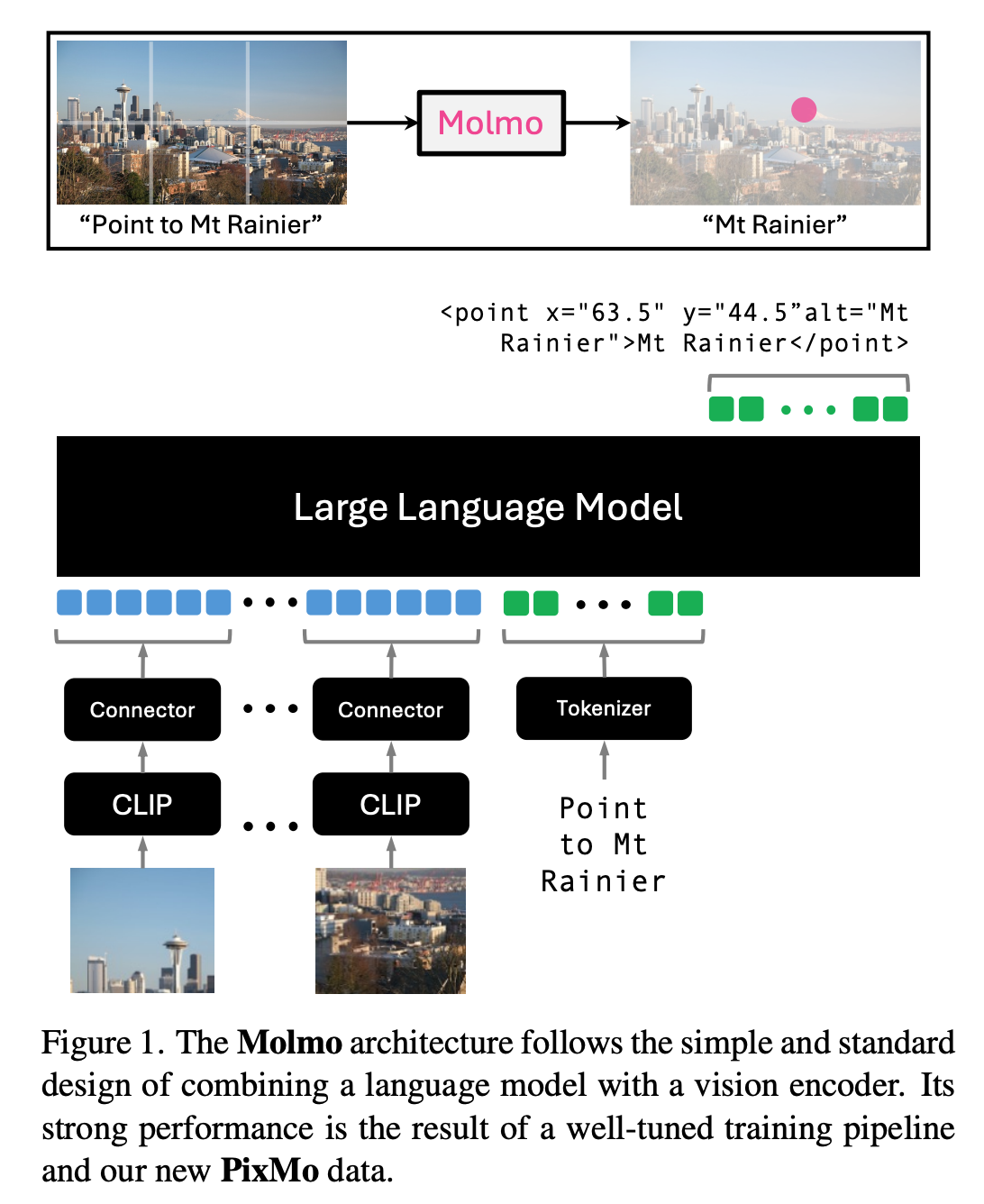

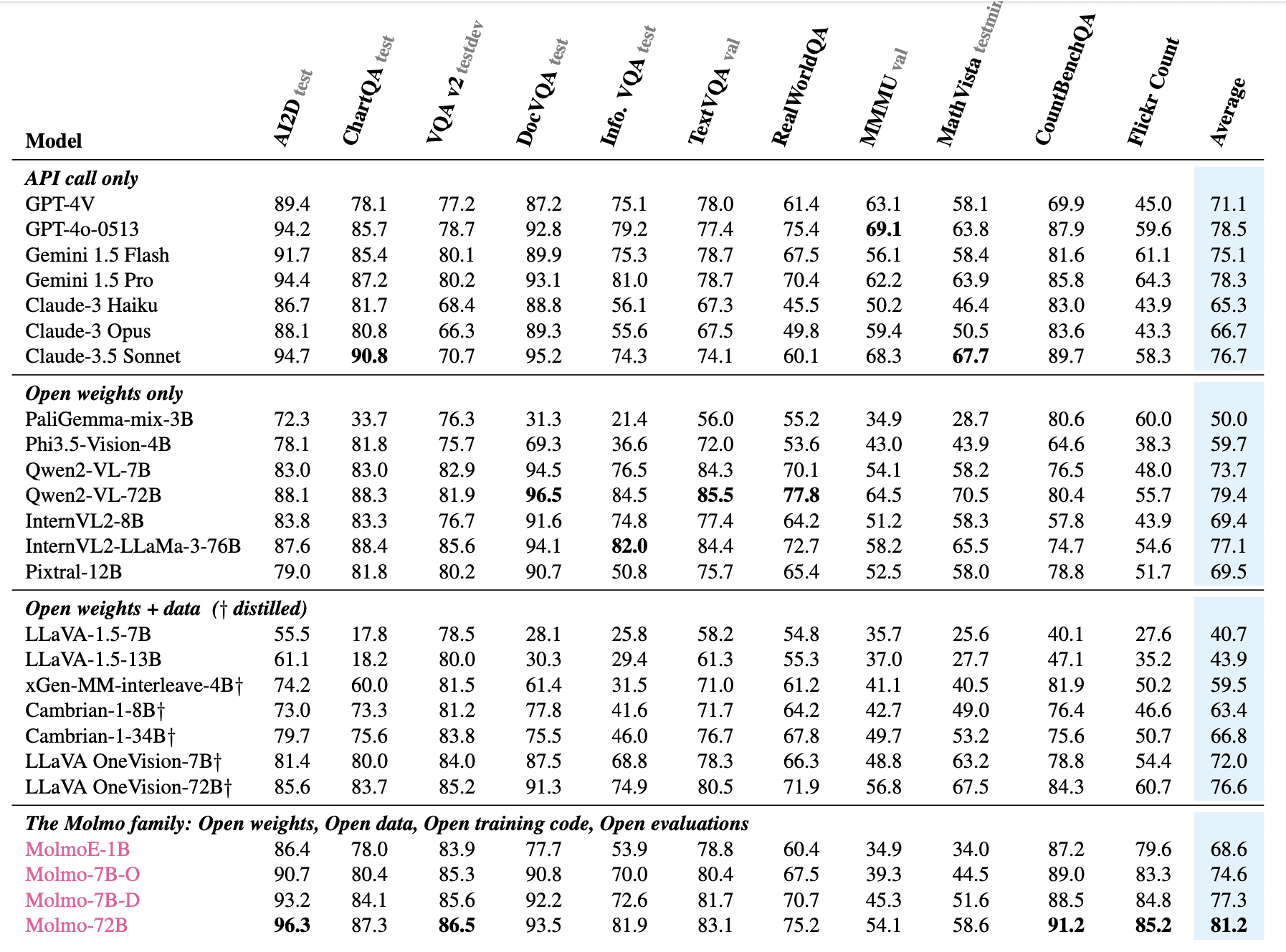

GitHub allenai/molmo2: Code for the Molmo2 Vision Language Model

Molmo 2-O (7B) pairs Molmo 2's vision and video grounding with OLMo, Ai2's fully open LLM, so every component, from language backbone to vision encoder to training checkpoints, can be inspected, modified, and adapted.

Try Molmo using the public demo, which showcases the Molmo 7B-D model. The codebase is based on the OLMo codebase, with the addition of vision encoding and integrated generative evaluations.

Molmo 7B-O is based on OLMo-7B-1024 (a preview of the next generation of OLMo models) and uses OpenAI CLIP as its vision backbone. It performs comfortably between GPT-4V and GPT-4o on both academic benchmarks and human evaluation.

Molmo provides the codebase for training and deploying state-of-the-art multimodal open language models (VLMs). It targets researchers and developers working on vision-language tasks, offering a foundation for building and evaluating models that understand and generate content based on both images and text.

Discover Molmo AI, a state-of-the-art open-source multimodal AI model: powerful, free, and easy to use. Learn how Molmo compares to other AI models.

This page documents the Molmo2 vision language model architecture and its integration into the Sage framework. Molmo2 is a multimodal model that processes images and videos alongside text to perform visual question answering and reasoning tasks.

Molmo: Open-Source Multimodal Vision Language Models Outperform Gemini

Developers, researchers, and AI enthusiasts can now access Molmo AI's source code, training data, and model weights, empowering them to contribute to and build upon its capabilities.

This work presents Molmo2, a series of open-source vision language models (VLMs) designed to achieve state-of-the-art performance among open-source models. Molmo2 demonstrates exceptional point-driven grounding capabilities across single-image, multi-image, and video tasks.
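
To make the idea of point-driven grounding concrete, here is a minimal Python sketch of how a client might turn a pointing answer into pixel coordinates. It assumes, purely for illustration, that the model replies with tags of the form `<point x="50.0" y="25.0" alt="dog">dog</point>`, with `x` and `y` given as percentages of image width and height. The tag format and the names `Point`, `POINT_TAG`, and `parse_points` are assumptions, not part of the Molmo or Molmo2 codebase; the actual output format of a given checkpoint should be checked against its model card.

```python
import re
from typing import List, NamedTuple


class Point(NamedTuple):
    """A grounded point in pixel coordinates (hypothetical helper type)."""
    label: str
    x_px: float
    y_px: float


# Assumed tag format, for illustration only:
#   <point x="50.0" y="25.0" alt="dog">dog</point>
# x and y are taken to be percentages (0-100) of image width and height.
POINT_TAG = re.compile(
    r'<point\s+x="(?P<x>\d+(?:\.\d+)?)"\s+y="(?P<y>\d+(?:\.\d+)?)"'
    r'\s+alt="(?P<alt>[^"]*)"\s*>'
)


def parse_points(text: str, width: int, height: int) -> List[Point]:
    """Extract point tags from model output and scale them to pixels."""
    points = []
    for match in POINT_TAG.finditer(text):
        x_pct = float(match.group("x"))
        y_pct = float(match.group("y"))
        points.append(
            Point(match.group("alt"), x_pct / 100 * width, y_pct / 100 * height)
        )
    return points


if __name__ == "__main__":
    reply = 'Here it is: <point x="50.0" y="25.0" alt="dog">dog</point>'
    print(parse_points(reply, width=640, height=480))
```

Keeping coordinates as percentages until the last step means the same model output can be mapped onto any resized copy of the image.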
