FIND: A Function Description Benchmark for Evaluating Interpretability Methods

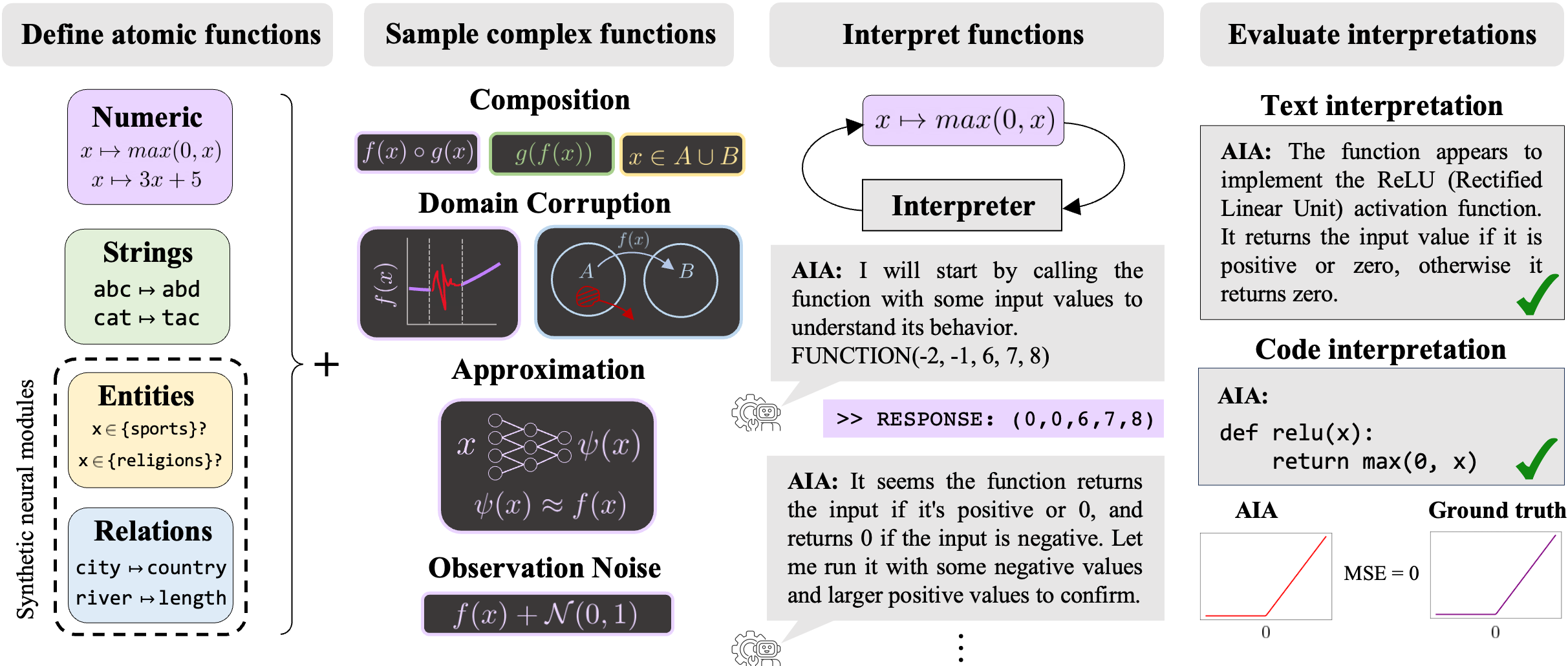

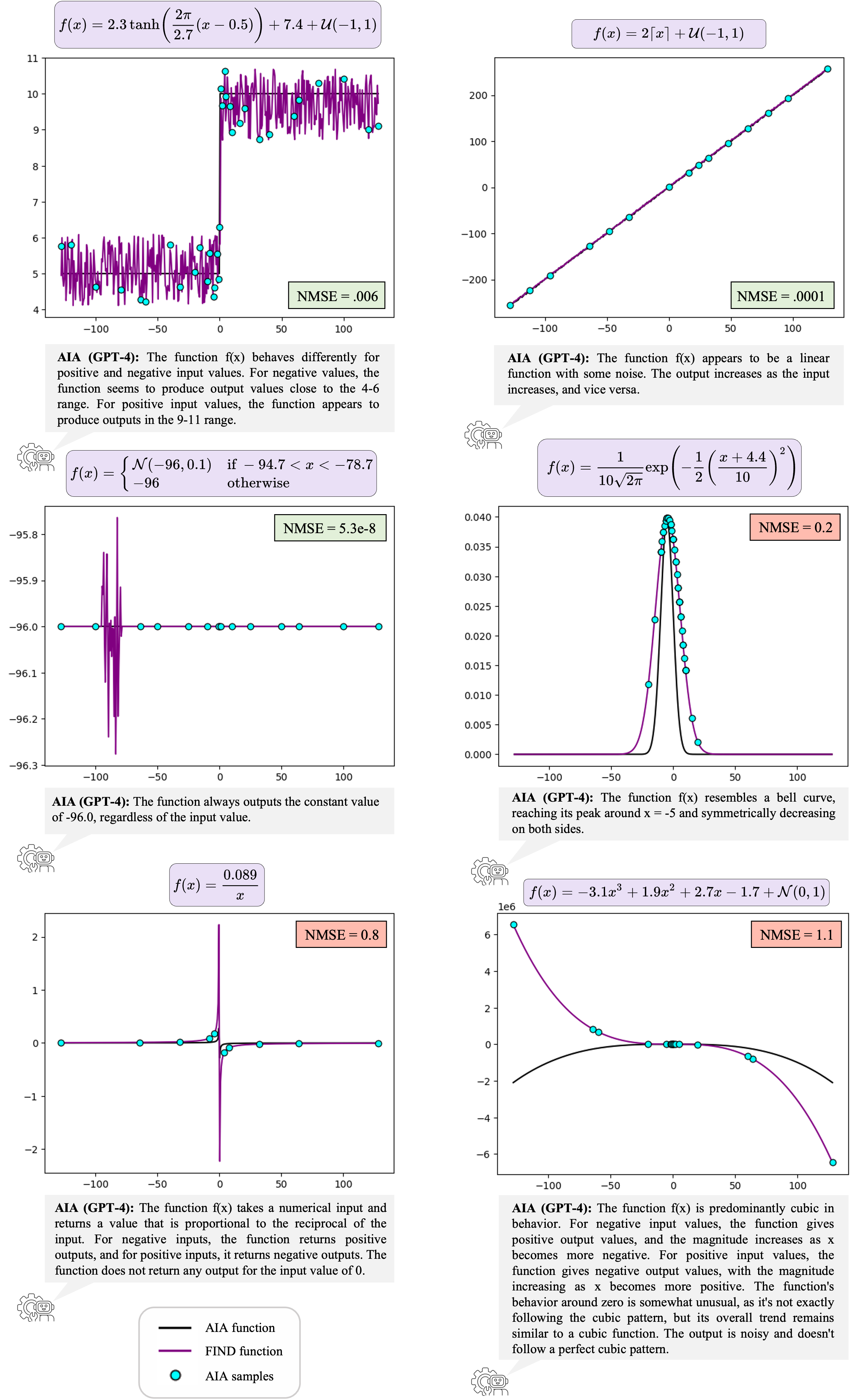

This paper introduces FIND (Function INterpretation and Description), a benchmark suite for evaluating the building blocks of automated interpretability methods.

FIND is a scalable benchmark that leverages LLMs to generate function descriptions and evaluate interpretability across numeric, string, and synthetic neural functions. The paper, "FIND: A Function Description Benchmark for Evaluating Interpretability Methods", is hosted by the Neural Information Processing Systems Foundation, Inc. (NeurIPS).
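As a toy illustration of what describing a numeric function could involve, the sketch below probes a hidden function and checks how often a proposed description, written as code, reproduces its outputs. This is not the FIND API: `black_box`, `candidate_description`, and `agreement` are hypothetical names, and scoring by input-output agreement is an assumption made here for illustration only.

```python
from typing import Callable, Sequence


def black_box(x: float) -> float:
    """Hypothetical hidden function that an interpreter must describe."""
    return 3.0 * x + 2.0


def candidate_description(x: float) -> float:
    """Code form of a proposed description: 'multiplies by 3 and adds 2'."""
    return 3.0 * x + 2.0


def agreement(
    f: Callable[[float], float],
    g: Callable[[float], float],
    inputs: Sequence[float],
    tol: float = 1e-6,
) -> float:
    """Return the fraction of probe inputs on which f and g agree within tol."""
    matches = sum(abs(f(x) - g(x)) <= tol for x in inputs)
    return matches / len(inputs)


# Probe the hidden function on a small grid of inputs (an arbitrary choice).
probes = [float(x) for x in range(-5, 6)]
score = agreement(black_box, candidate_description, probes)
print(f"agreement: {score:.2f}")
```

A description that misses part of the behavior, such as "multiplies by 3", would agree on none of these probes, which is the kind of gap a description benchmark is meant to expose.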

An official implementation of FIND (NeurIPS '23) is available, covering the function interpretation benchmark and automated interpretability agents. The benchmark is designed to evaluate automated interpretability methods for neural networks. A separate, related work proposes trojan rediscovery as a benchmarking task to evaluate how useful interpretability tools are for generating engineering-relevant insights, and designs two such approaches for benchmarking: one for feature attribution methods and one for feature synthesis methods.

