Figure 4 From A Function Interpretation Benchmark For Evaluating

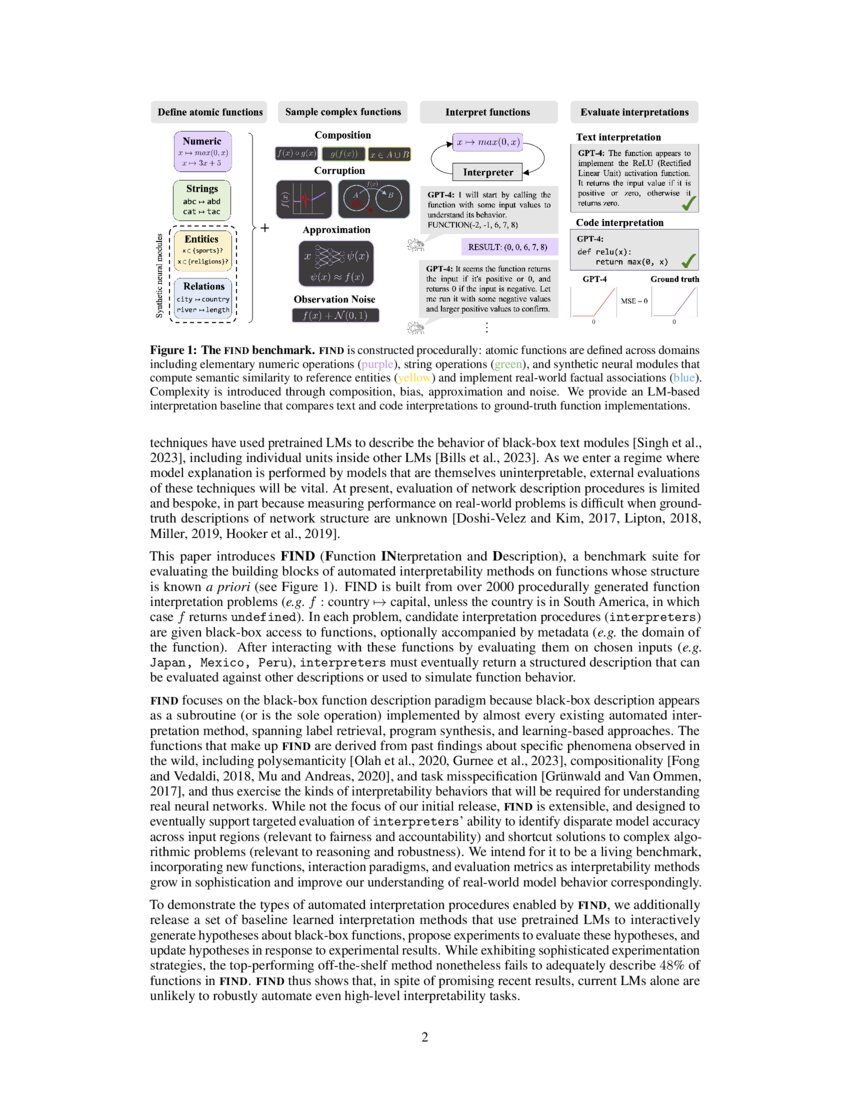

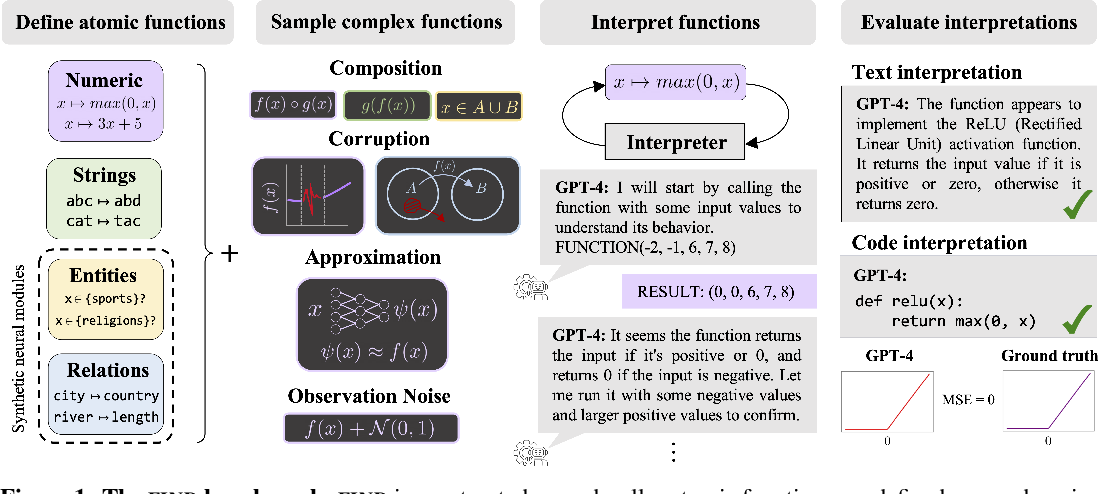

A Function Interpretation Benchmark For Evaluating Interpretability

Complexity is introduced into the benchmark functions through composition, bias, approximation, and noise. We provide an LM-based interpretation baseline that compares text and code interpretations to ground-truth function implementations. The FIND repository contains the utilities needed to reproduce the benchmark results for the LM baselines reported in the paper, and to run and evaluate interpretations of the FIND functions with other, user-defined interpreters.
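As a rough illustration of the idea above, the sketch below builds a ground-truth function by composition, adds noise to it, and scores a candidate code interpretation by how closely its outputs match the ground truth on sampled inputs. The helpers `compose`, `with_noise`, and `agreement_score` are hypothetical names chosen for this example, and the normalized mean squared error is an assumed metric, not necessarily the one used in the paper.

```python
import random
from typing import Callable, List

Func = Callable[[float], float]


def compose(f: Func, g: Func) -> Func:
    """Return the composition x -> g(f(x)). Hypothetical helper."""
    return lambda x: g(f(x))


def with_noise(f: Func, sigma: float, rng: random.Random) -> Func:
    """Wrap f so that each call adds Gaussian noise. Hypothetical helper."""
    return lambda x: f(x) + rng.gauss(0.0, sigma)


def agreement_score(candidate: Func, truth: Func, inputs: List[float]) -> float:
    """Mean squared error of candidate vs. truth, normalized by the
    variance of the truth outputs (assumed metric; lower is better)."""
    truth_out = [truth(x) for x in inputs]
    cand_out = [candidate(x) for x in inputs]
    n = len(truth_out)
    mean = sum(truth_out) / n
    var = sum((y - mean) ** 2 for y in truth_out) / n
    mse = sum((c - t) ** 2 for c, t in zip(cand_out, truth_out)) / n
    return mse / var if var > 0 else mse


rng = random.Random(0)
double: Func = lambda x: 2.0 * x
shift: Func = lambda x: x + 3.0

ground_truth = compose(double, shift)             # computes 2x + 3
noisy_truth = with_noise(ground_truth, 0.1, rng)  # same function plus noise

inputs = [float(i) for i in range(-10, 11)]

good_guess: Func = lambda x: 2.0 * x + 3.0  # a correct code interpretation
bad_guess: Func = lambda x: x               # an incorrect one

score_good = agreement_score(good_guess, ground_truth, inputs)
score_bad = agreement_score(bad_guess, ground_truth, inputs)
score_good_noisy = agreement_score(good_guess, noisy_truth, inputs)
```

A correct interpretation scores zero against the clean function and stays close to zero against the noisy one, while the incorrect interpretation scores noticeably higher.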

This paper introduces FIND (Function Interpretation and Description), a benchmark suite for evaluating the building blocks of automated interpretability methods for neural networks. We evaluate new and existing methods that use language models (LMs) to produce code-based and natural-language descriptions of function behavior. To run the interpretation, run `cd ./src/run_interpretations` and follow the instructions in the README file. The code also allows you to add your own interpreter model.

The paper shows that FIND will be useful for characterizing the performance of more sophisticated interpretability methods before they are applied to real-world models. FIND is also distributed as an interactive dataset for evaluating AI interpretability methods on black-box functions. The dataset contains all function files for the FIND benchmark, along with JSON files of associated metadata.
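To make the black-box setting concrete, here is a minimal sketch of an interpreter that can only call a function and observe its outputs. The `BlackBox` wrapper and the `describe_linear` interpreter are hypothetical and written for this example only; they do not reflect the FIND repository's actual interface, and a real interpreter would choose its probes adaptively rather than assume a linear form.

```python
from typing import Callable, List, Tuple


class BlackBox:
    """Hypothetical wrapper: hides an implementation and logs every query."""

    def __init__(self, fn: Callable[[float], float]) -> None:
        self._fn = fn
        self.log: List[Tuple[float, float]] = []

    def __call__(self, x: float) -> float:
        y = self._fn(x)
        self.log.append((x, y))
        return y


def describe_linear(box: BlackBox, probes: List[float]) -> Tuple[str, float, float]:
    """Toy interpreter: query the box on the probes, fit y = a*x + b by
    ordinary least squares, and return a text description with a and b."""
    xs = list(probes)
    ys = [box(x) for x in xs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return f"f(x) = {a:.2f} * x + {b:.2f}", a, b


# The hidden implementation is known only to the benchmark, not the interpreter.
box = BlackBox(lambda x: -1.5 * x + 4.0)
description, slope, intercept = describe_linear(box, [-2.0, -1.0, 0.0, 1.0, 2.0])
```

The returned description can then be compared against the hidden implementation, and the query log shows how many function calls the interpreter needed.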

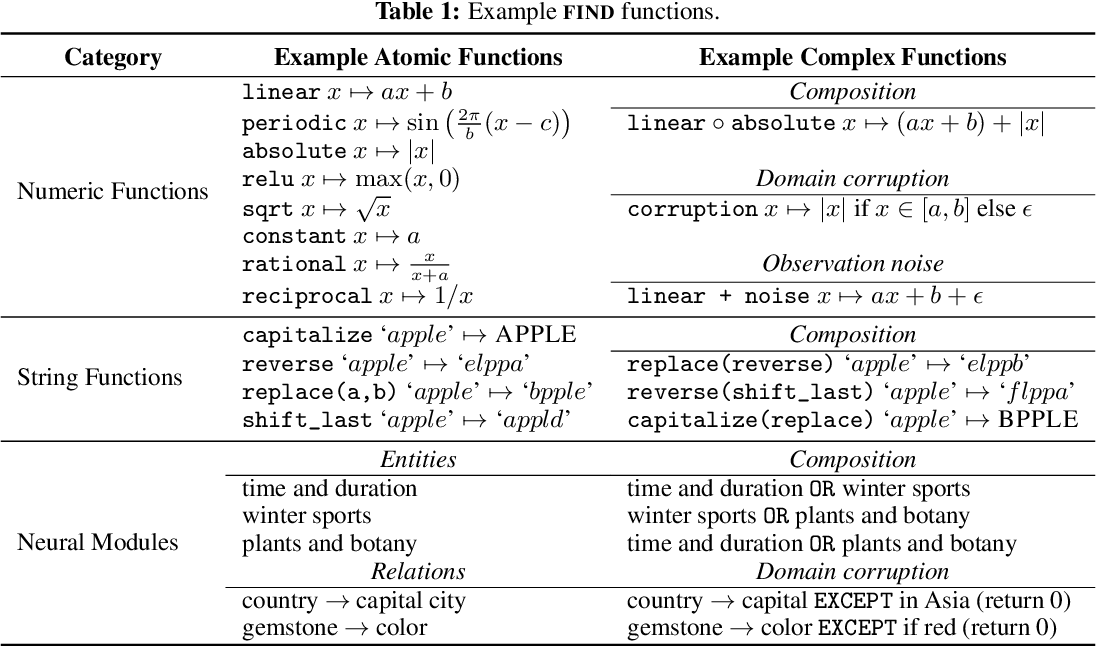

