Figure 2 From A Function Interpretation Benchmark For Evaluating

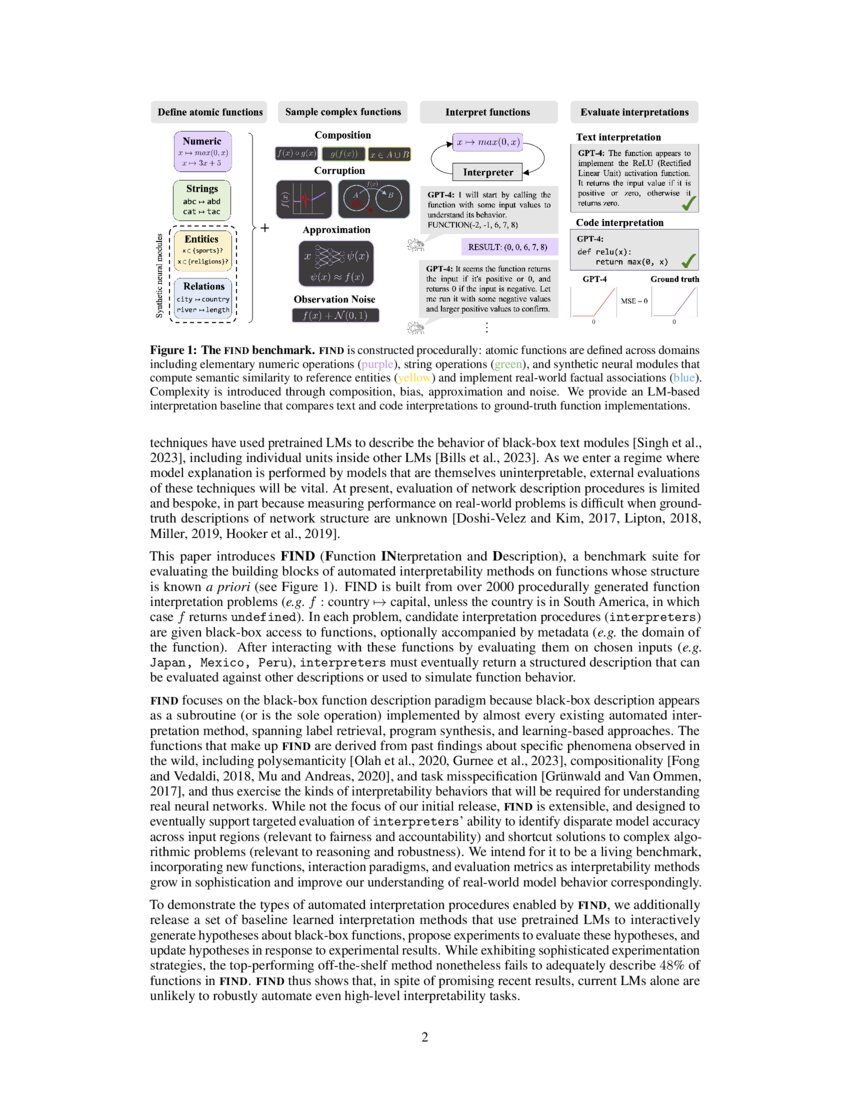

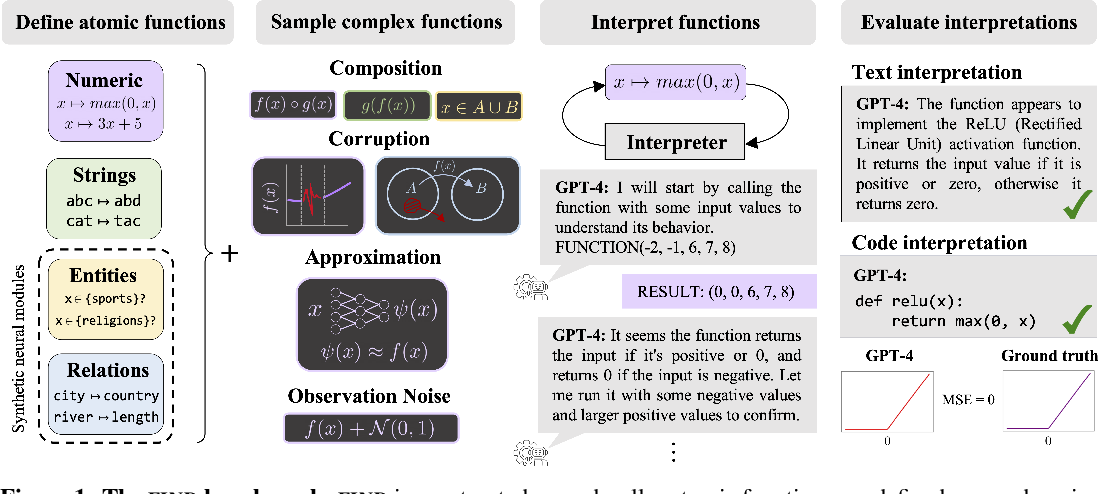

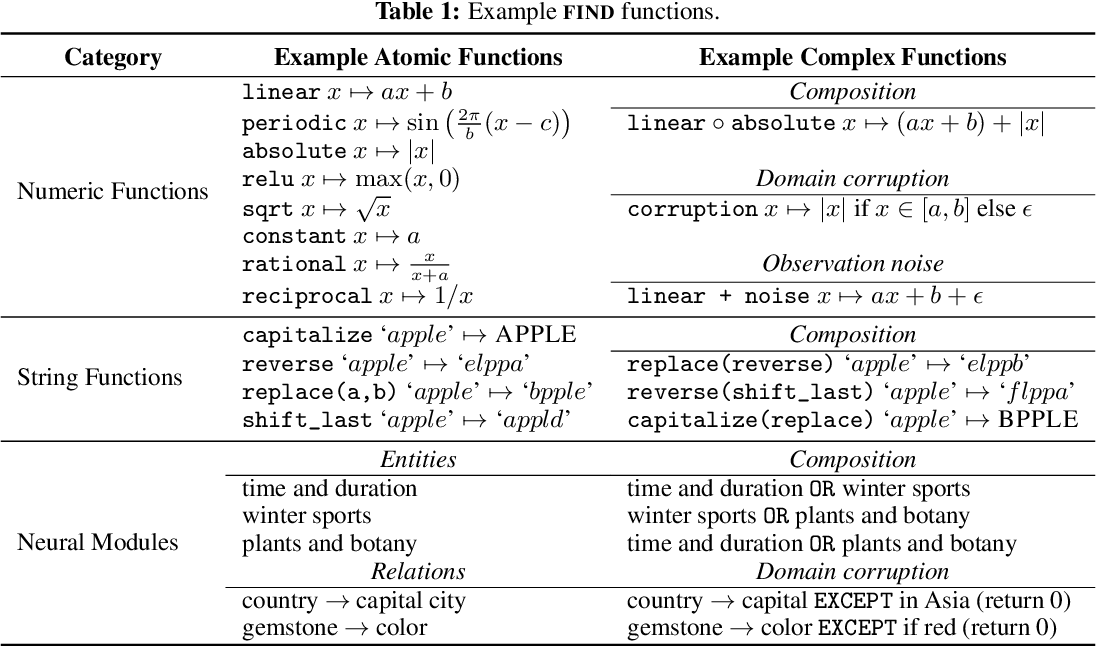

A Function Interpretation Benchmark For Evaluating Interpretability

The benchmark's functions are procedurally constructed across textual and numeric domains and involve a range of real-world complexities, including noise, composition, approximation, and bias. We evaluate methods that use pretrained language models (LMs) to produce code-based and natural language descriptions of function behavior. The FIND repository contains the utilities needed to reproduce the benchmark results for the LM baselines reported in the paper, and to run and evaluate interpretation of the FIND functions with other, user-defined interpreters.
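To make the idea of procedural construction concrete, here is a minimal sketch of how a numeric black-box function might be assembled from simple parts using composition and additive noise. It is not the FIND generator: the helper names (`compose`, `with_noise`, `make_black_box`), the choice of Gaussian noise, and the specific primitives are all assumptions made for illustration.

```python
import random
from typing import Callable

NumericFn = Callable[[float], float]


def compose(outer: NumericFn, inner: NumericFn) -> NumericFn:
    """Return the composition x -> outer(inner(x))."""
    return lambda x: outer(inner(x))


def with_noise(fn: NumericFn, sigma: float, rng: random.Random) -> NumericFn:
    """Wrap fn so each call adds zero-mean Gaussian noise (illustrative choice)."""
    return lambda x: fn(x) + rng.gauss(0.0, sigma)


def make_black_box(seed: int = 0, sigma: float = 0.1) -> NumericFn:
    """Build a hypothetical noisy function: scale by 3, then shift by 2."""
    rng = random.Random(seed)
    scale: NumericFn = lambda x: 3.0 * x
    shift: NumericFn = lambda x: x + 2.0
    clean = compose(shift, scale)  # clean behavior is 3x + 2
    return with_noise(clean, sigma, rng)


# An interpreter would only see input/output pairs like these.
black_box = make_black_box(seed=0, sigma=0.1)
samples = [(x, black_box(x)) for x in (-1.0, 0.0, 1.0)]
```

An interpreter that receives only `samples` has to recover a description such as "roughly 3x + 2 with small noise" without seeing the construction itself.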

This paper introduces FIND (Function Interpretation and Description), a benchmark suite for evaluating the building blocks of automated interpretability methods for neural networks. A separate work proposes trojan rediscovery as a benchmarking task for evaluating how useful interpretability tools are for generating engineering-relevant insights, and designs two such benchmarking approaches: one for feature attribution methods and one for feature synthesis methods.

To run the interpretation, run `cd ./src/run_interpretations` and follow the instructions in the README file. The code also allows you to add your own interpreter model. FIND is an interactive dataset for evaluating AI interpretability methods on black-box functions. The dataset contains all function files for the FIND benchmark and JSON files with associated metadata.
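As a rough illustration of what a user-defined interpreter does, the sketch below queries a black-box numeric function, produces a code-like description of it (the coefficients of a fitted line), and scores that description against the function on held-out inputs. This does not use the repository's actual interface: `hidden_function`, `linear_interpreter`, and `mean_squared_error` are hypothetical names, and scoring by mean squared error is an assumption made for the example.

```python
from typing import Callable, List, Tuple


def hidden_function(x: float) -> float:
    """Stand-in black box; the interpreter only observes inputs and outputs."""
    return 3.0 * x + 2.0


def linear_interpreter(
    fn: Callable[[float], float], probes: List[float]
) -> Tuple[float, float]:
    """Query fn on the probes and fit y = a*x + b by ordinary least squares."""
    ys = [fn(x) for x in probes]
    n = len(probes)
    mean_x = sum(probes) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in probes)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(probes, ys))
    a = cov_xy / var_x
    b = mean_y - a * mean_x
    return a, b


def mean_squared_error(
    fn: Callable[[float], float], a: float, b: float, test_inputs: List[float]
) -> float:
    """Compare the fitted description a*x + b with fn on held-out inputs."""
    return sum((fn(x) - (a * x + b)) ** 2 for x in test_inputs) / len(test_inputs)


a, b = linear_interpreter(hidden_function, [-2.0, -1.0, 0.0, 1.0, 2.0])
error = mean_squared_error(hidden_function, a, b, [0.5, 1.5, 2.5])
```

A language-model interpreter would replace `linear_interpreter` with a loop that chooses its own probes and writes a free-form description, but the query-then-describe-then-score structure stays the same.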
