Factuality Evaluation Metrics

Evaluating the factual accuracy of LLMs requires a set of tailored metrics that help identify factual errors, measure the reliability of outputs, and guide improvements in accuracy. Factuality-based metrics such as SRLScore (built on semantic role labeling) and QAFactEval are commonly used to check whether generated text contains information that is not supported by the source text.

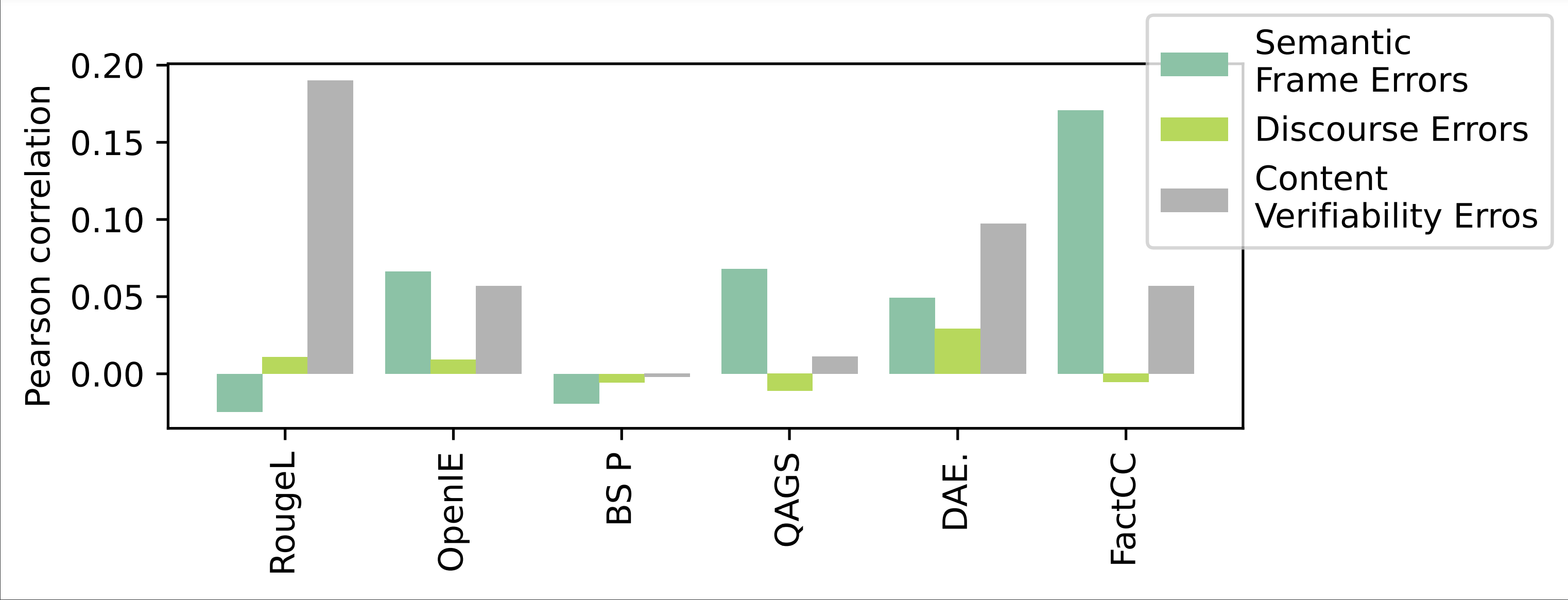

We first propose a linguistically grounded and operational typology of factual errors which can be used in human evaluation of summarization. We find that decomposing the concept of factuality into (relatively) well-defined and grounded categories makes the final binary decision more objective.

Learn how to evaluate large language models (LLMs) effectively. This guide covers automatic and human-aligned metrics (BLEU, ROUGE, factuality, toxicity), RAG, code generation, and W&B guardrail examples.

In this work, we stress-test a range of automatic factuality metrics, including specialized models and LLM-based prompting methods, to probe what they actually capture.

Factuality is the measure of how accurately an LLM's response aligns with established facts or reference information. Simply put, it answers the question: "Is what the AI is saying actually true?"
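To make the definition of factuality concrete, here is a minimal sketch of a claim-level score: the share of claims in a response that were judged to be supported. It assumes the response has already been split into individual claims and that each claim carries a support verdict from a human annotator or an automatic checker. The names `Claim` and `factual_precision` are hypothetical and chosen for illustration; this is not the implementation of any metric or framework named on this page.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    supported: bool  # assumed verdict from an annotator or automatic checker


def factual_precision(claims: list[Claim]) -> float:
    """Return the fraction of claims judged supported (0.0 for an empty list)."""
    if not claims:
        return 0.0
    return sum(c.supported for c in claims) / len(claims)


# Illustrative, hand-labeled claims extracted from one hypothetical response.
claims = [
    Claim("Paris is the capital of France.", True),
    Claim("The Eiffel Tower was completed in 1889.", True),
    Claim("The Eiffel Tower is 500 metres tall.", False),
]

score = factual_precision(claims)
print(f"{score:.2f}")  # prints 0.67
```

In practice the hard parts are the two steps this sketch takes as given: splitting a response into claims and deciding whether each claim is supported. A score like this is only as reliable as those verdicts.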

This article explores scalable methodologies, metrics, and benchmarks designed to rigorously assess the factual accuracy of neural-generated outputs across diverse domains.

In this paper, we challenge this optimism with regard to factuality evaluation. We re-evaluate five state-of-the-art factuality metrics on a collection of 11 datasets for summarization, retrieval-augmented generation, and question answering.

Today, we’re introducing FACTS Grounding, a comprehensive benchmark for evaluating the ability of LLMs to generate responses that are not only factually accurate with respect to given inputs, but also sufficiently detailed to provide satisfactory answers to user queries.

Learn how to measure factuality and faithfulness in RAG systems. Compare the RAGAS, FActScore, and SAFE frameworks to reduce LLM hallucinations and improve accuracy.

