Evaluation: Factuality and Hallucination

Factuality Evaluation Metrics

This review systematically analyzes how LLM-generated content is evaluated for factual accuracy, exploring key challenges such as hallucinations, dataset limitations, and the reliability of evaluation metrics. Recent studies have shown that large language models (LLMs) can be misled by false premise questions (FPQs), which leads to errors in factual knowledge known as factuality hallucination.

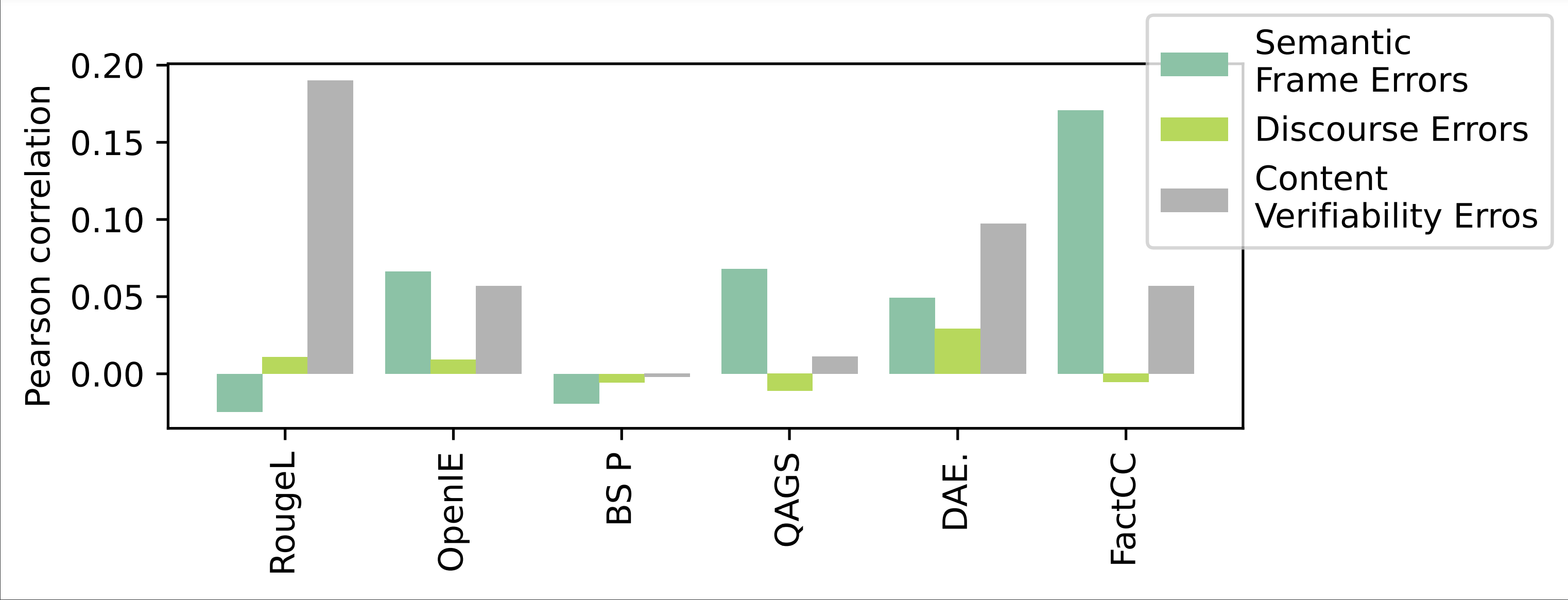

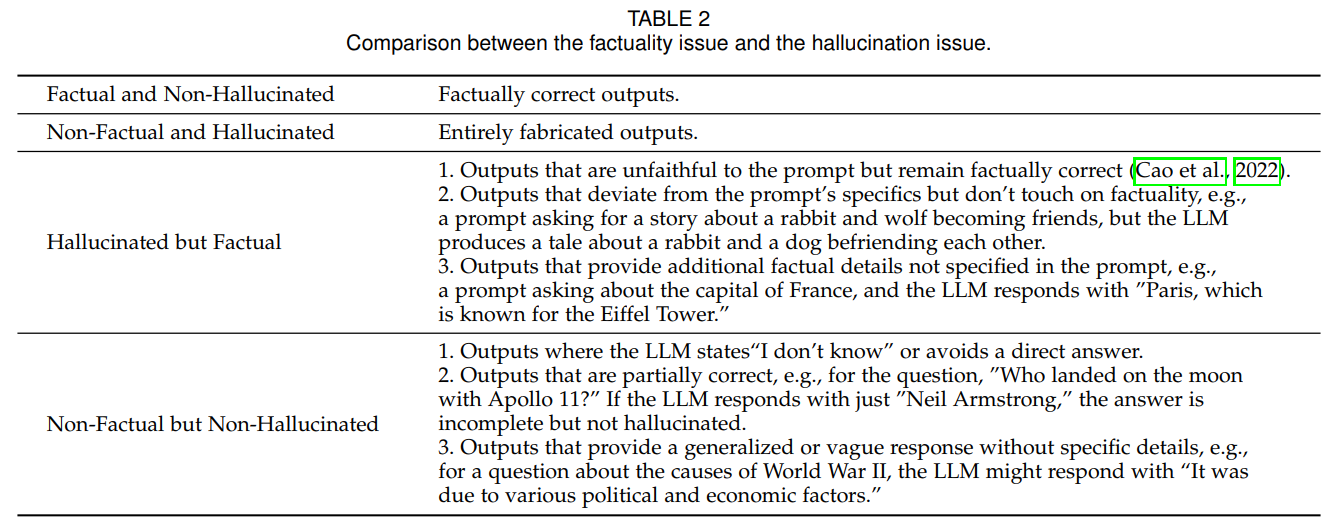

Questions About Factuality and Hallucination Issue 5 Wangcunxiang Llm

At its core, HaluCheck integrates AutoFactNLI, an automated hallucination detection pipeline that decomposes responses into atomic facts, evaluates their factuality against external knowledge sources, and visualizes areas of potential inaccuracy.

In this survey, we propose a redefined taxonomy of hallucination tailored specifically to applications involving LLMs. We categorize hallucination into two primary types: factuality hallucination and faithfulness hallucination.

To mitigate this issue, we introduce a novel decoding method that incorporates both factual and hallucination prompts (DFHP). It applies contrastive decoding to highlight the disparity in output probabilities between factual prompts and hallucination prompts.

5.4 Factuality Scoring with NLI Models

Natural language inference (NLI) models, such as DeBERTa fine-tuned on NLI benchmarks, can evaluate whether a hypothesis (the model's answer) is entailed by a premise (the source document). Libraries like TruLens, Ragas, and DeepEval provide ready-to-use hallucination metrics built on this approach.
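The contrastive decoding step described for DFHP can be illustrated with a small sketch. It assumes the generic contrastive decoding formulation (amplify the log-probabilities obtained under the factual prompt and subtract those obtained under the hallucination prompt, after filtering implausible tokens); the source does not give DFHP's exact scoring rule, so the function names, the `alpha` weight, and the `plausibility` cutoff below are illustrative assumptions rather than the authors' method.

```python
import math


def log_softmax(logits):
    """Convert raw logits to log-probabilities in a numerically stable way."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]


def contrastive_scores(factual_logits, hallucination_logits,
                       alpha=1.0, plausibility=0.1):
    """Score each candidate token by contrasting two prompt conditions.

    Assumed rule: (1 + alpha) * log p_factual - alpha * log p_hallucination.
    Tokens whose factual probability is below `plausibility` times the best
    factual probability are masked out with -inf.
    """
    lp_fact = log_softmax(factual_logits)
    lp_hall = log_softmax(hallucination_logits)
    cutoff = math.log(plausibility) + max(lp_fact)
    scores = []
    for f, h in zip(lp_fact, lp_hall):
        if f < cutoff:
            scores.append(float("-inf"))  # implausible under the factual prompt
        else:
            scores.append((1 + alpha) * f - alpha * h)
    return scores


def pick_token(vocab, factual_logits, hallucination_logits, alpha=1.0):
    """Greedy choice of the next token under the contrastive score."""
    scores = contrastive_scores(factual_logits, hallucination_logits, alpha)
    best = max(range(len(scores)), key=scores.__getitem__)
    return vocab[best]


# Hypothetical next-token logits for "The capital of France is ..."
vocab = ["Paris", "Lyon", "Rome"]
factual = [1.9, 2.0, 0.1]        # logits when conditioned on the factual prompt
hallucination = [0.5, 2.5, 0.3]  # logits when conditioned on the hallucination prompt
print(pick_token(vocab, factual, hallucination))
```

In this toy example, greedy decoding on the factual logits alone would pick "Lyon", while the contrastive score penalizes it because the hallucination prompt favors it strongly. In practice the two logit vectors would come from two forward passes of the same model with different prompts.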

Factuality in LLMs: Key Metrics and Improvement Strategies

To evaluate factual hallucination induced by false premise questions in LLMs, we develop an automated and scalable pipeline that constructs FPQs by editing the triplets in a knowledge graph (KG) and using GPTs to generate data.

To bridge this gap, we introduce WildHallucinations, a benchmark that evaluates factuality. It does so by prompting LLMs to generate information about entities mined from user chatbot.

The review proposes five research questions that guide the analysis of the recent literature from 2020 to 2025, focusing on evaluation methods and mitigation techniques. Instruction tuning, multi-agent reasoning, and RAG frameworks for external knowledge access are also reviewed.
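The FPQ construction described above (editing KG triplets, then generating questions) can be sketched roughly as follows. This is a minimal illustration under stated assumptions: the toy knowledge graph, the `corrupt_triplet` and `build_fpq` helpers, and the fixed question template are all hypothetical, and the template stands in for the GPT-based generation step mentioned in the source.

```python
import random
from typing import List, Optional, Tuple

Triplet = Tuple[str, str, str]  # (subject, relation, object)


def corrupt_triplet(triplet: Triplet, kg: List[Triplet],
                    rng: random.Random) -> Optional[Triplet]:
    """Replace the object with another object of the same relation.

    Objects that are true for this subject and relation are excluded, so the
    edited triplet is false with respect to the given KG.
    """
    subj, rel, _ = triplet
    true_objects = {o for s, r, o in kg if s == subj and r == rel}
    candidates = sorted({o for _, r, o in kg if r == rel} - true_objects)
    if not candidates:
        return None  # no alternative object available for this relation
    return (subj, rel, rng.choice(candidates))


# Hypothetical template; the source uses GPTs for this step instead.
TEMPLATES = {
    "born_in": ("Which schools did {subj} attend while growing up in {obj}, "
                "the city where {subj} was born?"),
}


def build_fpq(false_triplet: Triplet) -> str:
    """Turn an edited (false) triplet into a question that presupposes it."""
    subj, rel, obj = false_triplet
    return TEMPLATES[rel].format(subj=subj, obj=obj)


# Toy knowledge graph, for illustration only.
kg = [
    ("Marie Curie", "born_in", "Warsaw"),
    ("Albert Einstein", "born_in", "Ulm"),
]
false_triplet = corrupt_triplet(kg[0], kg, random.Random(0))
if false_triplet is not None:
    print(false_triplet)
    print(build_fpq(false_triplet))
```

A model that answers the generated question without challenging the false birthplace would count as having been misled by the false premise.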

