Exploring Simple Siamese Representation Learning

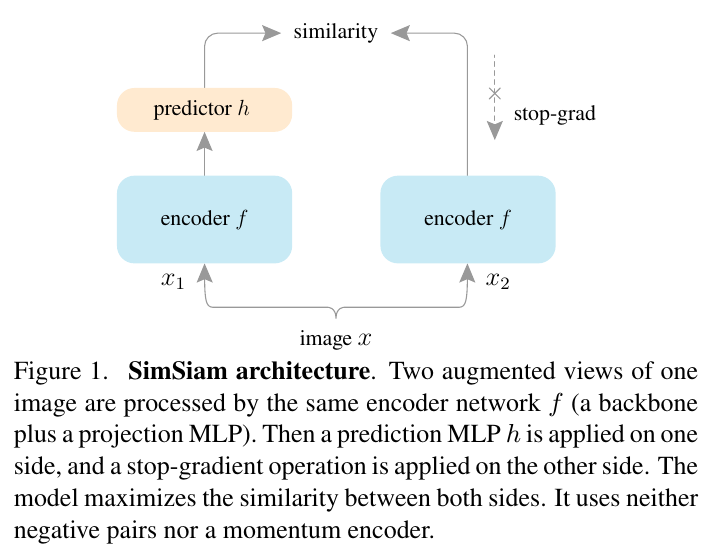

The authors report empirical results showing that simple Siamese networks can learn meaningful representations without negative samples, large batches, or momentum encoders. They propose a method called SimSiam and explain the role of the stop-gradient operation in preventing collapsing solutions. Siamese networks have become a common structure in various recent models for unsupervised visual representation learning. These models maximize the similarity between two augmentations of one image.
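
The stop-gradient idea is easiest to see in the objective itself. The following is a sketch in the notation commonly used for SimSiam. The symbols $f$ (encoder), $h$ (predictor), and $x_1, x_2$ (two augmented views of one image) are introduced here for illustration and are not defined in the text above. Each view is encoded and then predicted. The loss compares one view's prediction with the other view's encoding, and that encoding is held fixed by the stop-gradient:

```latex
\begin{aligned}
z_i &= f(x_i), \qquad p_i = h(z_i), \qquad i \in \{1, 2\},\\
\mathcal{D}(p_1, z_2) &= -\frac{p_1}{\lVert p_1 \rVert_2} \cdot \frac{z_2}{\lVert z_2 \rVert_2},\\
\mathcal{L} &= \tfrac{1}{2}\,\mathcal{D}\big(p_1, \operatorname{stopgrad}(z_2)\big)
            + \tfrac{1}{2}\,\mathcal{D}\big(p_2, \operatorname{stopgrad}(z_1)\big).
\end{aligned}
```

Here $\operatorname{stopgrad}$ treats its argument as a constant. In each term, therefore, gradients flow only through the prediction $p_i$, not through the target $z_j$.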

A related paper presents Dense Siamese Network (DenseSiam), a simple unsupervised learning framework for dense prediction tasks. It learns visual representations by maximizing the similarity between two… A PyTorch implementation of the SimSiam paper (SimSiam: Exploring Simple Siamese Representation Learning) is also available. SimSiam directly maximizes the similarity of two augmentations of one image, without using negative pairs, large batches, or momentum encoders. It achieves competitive results on ImageNet and downstream tasks, and suggests that Siamese networks can be an essential inductive bias for unsupervised representation learning. Another work focuses on four representative pairwise learning methods, MoCo [13,14,34], BYOL [24], SimSiam [12], and DINO [8], and gives a detailed explanation and comparison of them.
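
"Directly maximizing the similarity of two augmentations" can be made concrete with a minimal pure-Python sketch of the symmetrized SimSiam objective, 0.5 * D(p1, z2) + 0.5 * D(p2, z1). Here D is the negative cosine similarity, and p1, p2 (predictions) and z1, z2 (encodings) are assumed to be already computed as plain lists of floats. The function names are hypothetical and do not come from the paper's code. Stop-gradient changes only backpropagation, so this forward value is the same with or without it.

```python
import math
from typing import Sequence


def negative_cosine(p: Sequence[float], z: Sequence[float]) -> float:
    """Return -(p/||p||) . (z/||z||): -1 when aligned, +1 when opposite."""
    dot = sum(a * b for a, b in zip(p, z))
    norm_p = math.sqrt(sum(a * a for a in p))
    norm_z = math.sqrt(sum(b * b for b in z))
    return -dot / (norm_p * norm_z)


def simsiam_loss(p1: Sequence[float], z1: Sequence[float],
                 p2: Sequence[float], z2: Sequence[float]) -> float:
    """Symmetrized loss: each view's prediction vs. the other view's encoding.

    In a real training loop z1 and z2 would pass through a stop-gradient
    (e.g. detached tensors); that affects gradients only, not this value.
    """
    return 0.5 * negative_cosine(p1, z2) + 0.5 * negative_cosine(p2, z1)


# A "collapsed" network that maps every view to the same vector reaches the
# minimum possible loss, because nothing in the loss pushes outputs apart
# (there are no negative pairs).
c = [3.0, 4.0]
print(simsiam_loss(c, c, c, c))  # -1.0
```

The collapsed case shows why the paper needs some other mechanism to avoid trivial solutions when negative pairs are dropped. The paper attributes that role to the stop-gradient operation.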

SimSiam Loss

The paper proposes a simple baseline for unsupervised representation learning using Siamese networks. Rather than a contrastive loss, SimSiam minimizes a negative cosine similarity between one branch's prediction and the other branch's encoding, so no negative pairs are required. The paper compares SimSiam with related methods and analyzes the role of the stop-gradient operation in preventing collapsing solutions. It reports the surprising empirical result that simple Siamese networks can learn meaningful representations even when using none of the following: (i) negative sample pairs, (ii) large batches, or (iii) momentum encoders. With the stop-gradient operation in place, SimSiam avoids collapse and achieves competitive results on ImageNet and downstream tasks.
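
The following pure-Python sketch shows what the stop-gradient changes. It computes the gradient of one loss term, D(p, stopgrad(z)), with respect to the prediction p only. The target z is treated as a constant, so no gradient is produced for the target branch. The closed form, -(1/||p||) * (z_hat - (p_hat . z_hat) * p_hat), uses p_hat and z_hat for the unit vectors and is derived here for illustration. The helper names are hypothetical.

```python
import math
from typing import List, Sequence


def _norm(v: Sequence[float]) -> float:
    return math.sqrt(sum(x * x for x in v))


def negative_cosine(p: Sequence[float], z: Sequence[float]) -> float:
    """D(p, z) = -(p/||p||) . (z/||z||)."""
    return -sum(a * b for a, b in zip(p, z)) / (_norm(p) * _norm(z))


def grad_wrt_prediction(p: Sequence[float], z: Sequence[float]) -> List[float]:
    """Gradient of D(p, stopgrad(z)) with respect to p.

    z is a constant here (the stop-gradient), so only p receives a gradient:
        dD/dp = -(1/||p||) * (z_hat - (p_hat . z_hat) * p_hat)
    """
    norm_p = _norm(p)
    norm_z = _norm(z)
    p_hat = [a / norm_p for a in p]
    z_hat = [b / norm_z for b in z]
    cos = sum(a * b for a, b in zip(p_hat, z_hat))
    return [-(zh - cos * ph) / norm_p for ph, zh in zip(p_hat, z_hat)]


# One gradient-descent step on p moves it toward the fixed target z.
p, z = [1.0, 0.0], [0.0, 1.0]
g = grad_wrt_prediction(p, z)
p_next = [a - 0.1 * b for a, b in zip(p, g)]
print(negative_cosine(p, z), negative_cosine(p_next, z))  # second value is lower
```

Because cosine similarity ignores scale, the gradient is always orthogonal to p. In an autodiff framework such as PyTorch, the stop-gradient is typically expressed by detaching the target tensor (`Tensor.detach()`) rather than by writing gradients by hand.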
