Exploring Simple Siamese Representation Learning And Beyond

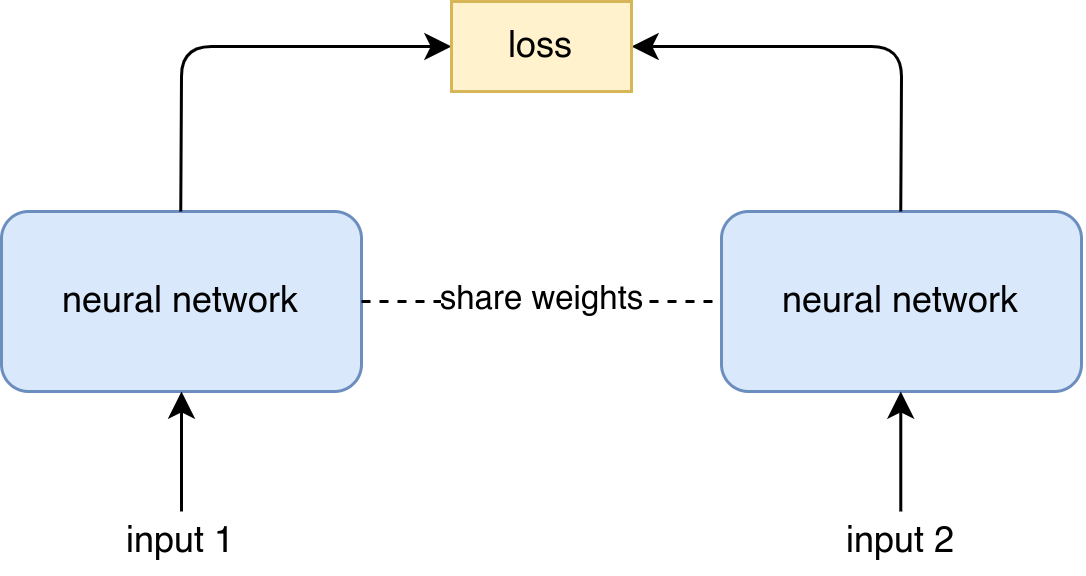

In this paper, we report surprising empirical results that simple Siamese networks can learn meaningful representations even using none of the following: (i) negative sample pairs, (ii) large batches, (iii) momentum encoders.

Siamese networks have become a common structure in various recent models for unsupervised visual representation learning. These models maximize the similarity between two augmentations of one image, subject to certain conditions for avoiding collapsing solutions. While a simple invariance such as translation can be built into convolutions as an inductive bias, counterparts for more complex transformations (e.g., color, scale, rotation) are harder to design. BYOL, SimSiam, NNCLR, and SwAV share the general notion of contrastive learning using a Siamese architecture; however, the specific details of implementation and theoretical motivation differ.
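To make the objective concrete, here is a minimal sketch of a symmetric similarity loss between two augmented views of one image. It assumes each view has already been passed through an encoder (giving `z1`, `z2`) and a small prediction head (giving `p1`, `p2`); these names and the plain-list representation are illustrative, not taken from any particular codebase. The SimSiam paper additionally treats the targets `z` as constants (a stop-gradient), which pure Python cannot express; in PyTorch that would typically be done with `z.detach()`.

```python
import math


def l2_normalize(v, eps=1e-12):
    """Scale a vector to unit length (eps guards against a zero vector)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / max(norm, eps) for x in v]


def negative_cosine(p, z):
    """Negative cosine similarity between a prediction p and a target z.

    In a real training loop, z would be excluded from the gradient
    computation (stop-gradient); here it is just a list of floats.
    """
    p_hat = l2_normalize(p)
    z_hat = l2_normalize(z)
    return -sum(a * b for a, b in zip(p_hat, z_hat))


def symmetric_similarity_loss(p1, z1, p2, z2):
    """Average the loss over both directions: p1 vs z2 and p2 vs z1."""
    return 0.5 * negative_cosine(p1, z2) + 0.5 * negative_cosine(p2, z1)
```

The loss ranges from -1 (the two views agree perfectly) to 1 (they point in opposite directions), so minimizing it maximizes the similarity between the views.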

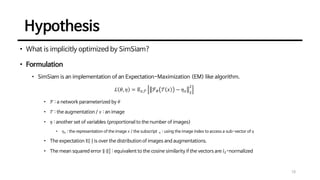

SimSiam is a simple but effective contrastive learning method. Its contribution is to identify the key to avoiding model collapse and to simplify the design. Kaiming's philosophy: only simple designs can capture the essence and transfer well. The script uses all the default hyperparameters described in the paper and the default augmentation recipe from MoCo v2. With these defaults, the pre-training command runs with a non-decaying predictor learning rate for 100 epochs, corresponding to the last row of Table 1 in the paper.
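The "non-decaying predictor learning rate" setting can be illustrated with a small schedule sketch. It assumes the encoder follows a cosine decay over training while the predictor keeps the base rate; the function names, the `fix_predictor_lr` flag, and the value 0.05 used in the example are illustrative assumptions, not the script's actual interface.

```python
import math


def cosine_lr(base_lr, epoch, total_epochs):
    """Cosine decay from base_lr at epoch 0 down to 0 at total_epochs."""
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * epoch / total_epochs))


def learning_rates(base_lr, epoch, total_epochs, fix_predictor_lr=True):
    """Return per-module learning rates for one epoch.

    The encoder always follows the cosine schedule. The predictor keeps
    base_lr when fix_predictor_lr is True, otherwise it decays as well.
    """
    encoder_lr = cosine_lr(base_lr, epoch, total_epochs)
    predictor_lr = base_lr if fix_predictor_lr else encoder_lr
    return {"encoder": encoder_lr, "predictor": predictor_lr}


if __name__ == "__main__":
    # Hypothetical 100-epoch run with an assumed base rate of 0.05.
    for epoch in (0, 50, 99):
        print(epoch, learning_rates(0.05, epoch, 100))
```

In a framework with optimizer parameter groups, the same idea would amount to updating only the encoder group's rate each epoch and leaving the predictor group untouched.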
