Edge AI Distributed Inference Orchestration

Our proposed orchestration model thus aligns with emerging objectives in the development of 6G networks by enabling intelligent, distributed AI processing at the edge. Learn how AWS IoT Greengrass and Lambda@Edge support edge AI and enable low-latency inference and location-aware AI deployments.

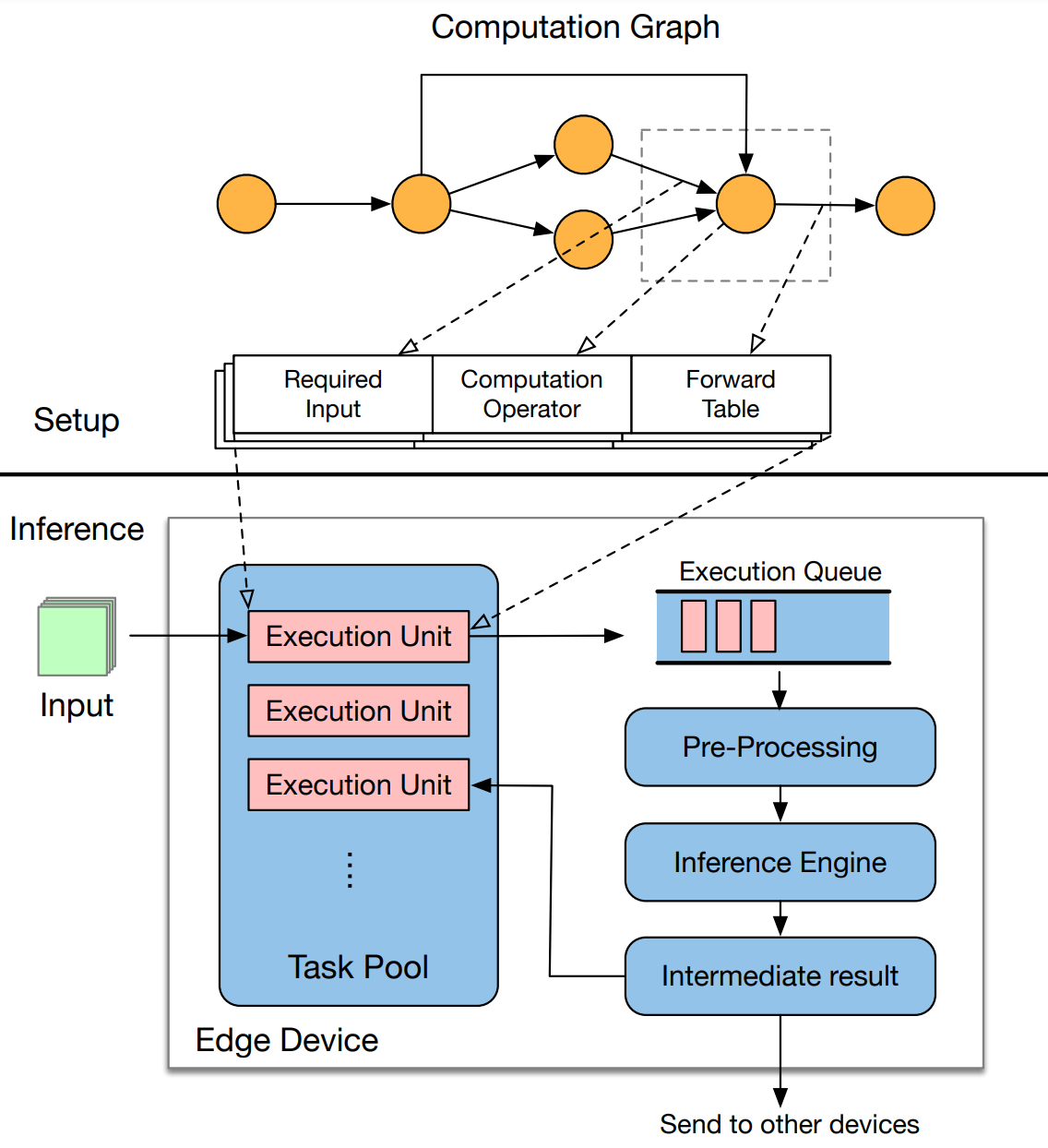

Operating this continuum reliably depends on effective orchestration across heterogeneous edge resources. Recent technological advances now provide multiple approaches to orchestrating models, data, and compute across this distributed edge fabric. In this context, effective orchestration of edge artificial intelligence (AI) is essential to address challenges such as distributed data sources and dependencies between inference and training processes. One paper explores cloud-edge AI orchestration as a transformative framework for enabling distributed intelligence and accelerating real-time decision-making in enterprise settings. Another proposes a distributed inference optimization framework for transformer decoder-only LLMs (DIO-LLMs) in edge-cloud collaborative networks.

Powering the intelligent edge with open innovation: how open-source AI tools are redefining scalability, orchestration, and inference in next-gen edge data centers.

Addressing this fundamental physics problem, Akamai Technologies recently announced its global-scale implementation of the NVIDIA AI Grid reference architecture via its Akamai Inference Cloud. By overlaying an intelligent orchestration control plane onto geographically distributed nodes, Akamai's platform can dynamically route inference workloads based on latency, cost, and resource availability.

The work presents a detailed investigation and analysis of the schemes centered around the above-listed layers of the proposed edge AI framework.

In this guide, we'll cover the benefits of edge AI solutions and the challenges with implementing them. We'll also cover ways AHEAD can help build, orchestrate, and manage large-scale edge fleets.
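To make the idea of routing inference workloads by latency, cost, and resource availability more concrete, here is a minimal Python sketch. It is not Akamai's algorithm, which the text above does not describe. The `EdgeNode` fields, the `route` function, the weights, and the sample node values are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EdgeNode:
    """Hypothetical view of one inference node as seen by a control plane."""
    name: str
    latency_ms: float   # estimated round-trip latency to the client
    cost_per_1k: float  # assumed cost per 1,000 requests (arbitrary units)
    free_slots: int     # remaining concurrent inference slots


def route(nodes, w_latency=1.0, w_cost=10.0):
    """Pick the node with free capacity that has the lowest weighted score.

    The score is a simple weighted sum of latency and cost; nodes without
    free slots are skipped entirely (resource availability).
    """
    candidates = [n for n in nodes if n.free_slots > 0]
    if not candidates:
        raise RuntimeError("no node has free capacity")
    return min(
        candidates,
        key=lambda n: w_latency * n.latency_ms + w_cost * n.cost_per_1k,
    )


# Illustrative values only.
nodes = [
    EdgeNode("edge-a", latency_ms=12.0, cost_per_1k=0.9, free_slots=0),
    EdgeNode("edge-b", latency_ms=18.0, cost_per_1k=0.4, free_slots=3),
    EdgeNode("cloud-c", latency_ms=70.0, cost_per_1k=0.2, free_slots=50),
]

print(route(nodes).name)                 # latency-weighted choice
print(route(nodes, w_latency=0.0).name)  # cost-only choice
```

In this sketch the nearest node is skipped because it has no free slots, and changing the weights shifts the choice between a nearby edge node and a cheaper, more distant one. A production control plane would presumably rely on live telemetry and richer policies than a single weighted sum.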
