Dynamic Resource Allocation: Spark Performance Tuning Tutorial

Dynamic Resource Allocation (Spark Interview Series, by Nitesh)

Understand how Spark's dynamic resource allocation works and how it is used to optimize Spark jobs. We define dynamic allocation, detail its configuration in Scala, and walk through a practical example, a sales data analysis with variable workload phases, to illustrate its impact on resource efficiency.
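As a rough illustration of what configuring dynamic allocation involves, the sketch below assembles the relevant Spark properties in plain Python. The property names are standard Spark configuration keys. The helper names (`dynamic_allocation_conf`, `to_submit_args`) and the chosen executor bounds are assumptions made for this example, and Python is used here only for brevity; the same keys apply in a Scala application.

```python
def dynamic_allocation_conf(min_executors, max_executors, initial_executors=None,
                            idle_timeout="60s", backlog_timeout="1s",
                            use_shuffle_tracking=True):
    """Build a dict of Spark properties that turn on dynamic allocation.

    Hypothetical helper for illustration; only the property keys come from Spark.
    """
    if initial_executors is None:
        initial_executors = min_executors
    if not min_executors <= initial_executors <= max_executors:
        raise ValueError("expected min_executors <= initial_executors <= max_executors")

    conf = {
        "spark.dynamicAllocation.enabled": "true",
        "spark.dynamicAllocation.minExecutors": str(min_executors),
        "spark.dynamicAllocation.maxExecutors": str(max_executors),
        "spark.dynamicAllocation.initialExecutors": str(initial_executors),
        # Executors idle for longer than this become candidates for removal.
        "spark.dynamicAllocation.executorIdleTimeout": idle_timeout,
        # Tasks pending for longer than this trigger a request for more executors.
        "spark.dynamicAllocation.schedulerBacklogTimeout": backlog_timeout,
    }
    # Shuffle data must stay reachable after an executor is removed: either
    # track shuffle files (Spark 3.0+) or rely on an external shuffle service.
    if use_shuffle_tracking:
        conf["spark.dynamicAllocation.shuffleTracking.enabled"] = "true"
    else:
        conf["spark.shuffle.service.enabled"] = "true"
    return conf


def to_submit_args(conf):
    """Render the properties as `--conf key=value` arguments for spark-submit."""
    args = []
    for key in sorted(conf):
        args += ["--conf", f"{key}={conf[key]}"]
    return args


conf = dynamic_allocation_conf(min_executors=2, max_executors=20)
submit_args = to_submit_args(conf)
```

In a PySpark application, the same key/value pairs could instead be applied one by one with `SparkSession.builder.config(key, value)` before the session is created.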

Spark Performance Tuning Best Practices (Spark By Examples)

Enabling dynamic allocation can improve cluster efficiency, reduce cost, and improve application performance, especially in multi-tenant environments where idle executors would otherwise hold resources that other jobs could use. Understanding how executors, memory, and cores interact, and setting them deliberately through spark-submit, improves both the performance and the stability of Spark applications. Learn how to configure dynamic resource allocation in Spark using PySpark, and how static and dynamic resource allocation differ. A key part of optimization is tuning executors: the number of executors, the cores per executor, and the memory per executor. This guide breaks the process down step by step.
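To make the interaction between executor count, cores, and memory concrete, here is a minimal sizing sketch for static allocation. It follows a commonly cited rule of thumb rather than anything prescribed by the text above: reserve one core and 1 GB per node for the operating system, use about five cores per executor, leave one executor slot for the driver or application master, and keep room for the default memory overhead of roughly 10% of executor memory. The function name and the cluster dimensions are hypothetical.

```python
def size_executors(nodes, cores_per_node, mem_gb_per_node, cores_per_executor=5):
    """Estimate static executor settings with a common rule of thumb.

    This is a heuristic starting point, not a guarantee of good performance.
    """
    usable_cores = cores_per_node - 1        # leave one core for the OS and daemons
    usable_mem_gb = mem_gb_per_node - 1      # leave 1 GB for the OS and daemons
    executors_per_node = usable_cores // cores_per_executor
    if executors_per_node < 1:
        raise ValueError("node is too small for the requested cores per executor")

    # Reserve one executor-sized slot for the driver / application master.
    num_executors = executors_per_node * nodes - 1

    # Each executor container holds the heap plus about 10% memory overhead,
    # so the heap is the container size divided by 1.10, rounded down.
    container_gb = usable_mem_gb / executors_per_node
    executor_memory_gb = int(container_gb / 1.10)

    return {
        "num_executors": num_executors,
        "executor_cores": cores_per_executor,
        "executor_memory_gb": executor_memory_gb,
    }


# Hypothetical cluster: 10 worker nodes, each with 16 cores and 64 GB of RAM.
sizing = size_executors(nodes=10, cores_per_node=16, mem_gb_per_node=64)
submit_flags = [
    "--num-executors", str(sizing["num_executors"]),
    "--executor-cores", str(sizing["executor_cores"]),
    "--executor-memory", f"{sizing['executor_memory_gb']}g",
]
```

The resulting values map onto the `--num-executors`, `--executor-cores`, and `--executor-memory` options of spark-submit. With dynamic allocation enabled, the executor count becomes a range rather than a fixed number, while the per-executor cores and memory are still chosen this way.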

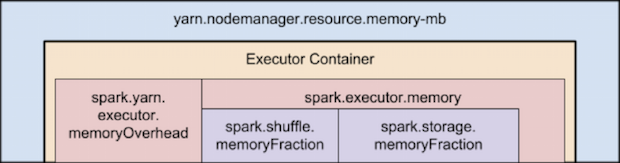

Tuning Resource Allocation

This guide provides a structured learning path for Spark and PySpark, focusing on two core areas: Spark architecture and Spark tuning. It is intended for data engineers and practitioners who want a deeper understanding of how Spark works and how to optimize its performance.

Apache Spark's dynamic allocation feature automatically adjusts the number of executors an application uses based on its workload. Sizing executors well optimizes memory usage and performance while ensuring system resources are not exhausted. From understanding key configurations to identifying common pitfalls and applying advanced tuning strategies, this guide aims to help you get the most out of Spark.
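The idea of adjusting executors to the workload can be sketched with a deliberately simplified model: the number of executors wanted is the number of outstanding tasks divided by the task slots per executor, clamped to the configured minimum and maximum. This is not Spark's actual implementation, which also ramps requests up gradually and removes executors only after an idle timeout. The function name, the phase names, and the task counts are all assumptions made for illustration.

```python
import math


def target_executors(pending_tasks, running_tasks, executor_cores,
                     task_cpus=1, min_executors=0, max_executors=10):
    """Simplified model of how many executors a workload would ask for."""
    # Each executor can run this many tasks at the same time.
    tasks_per_executor = max(1, executor_cores // task_cpus)
    needed = math.ceil((pending_tasks + running_tasks) / tasks_per_executor)
    # Never go below the configured floor or above the configured ceiling.
    return max(min_executors, min(max_executors, needed))


# Hypothetical job with three phases of very different size.
phases = [("load", 40), ("join", 400), ("report", 8)]
plan = {
    name: target_executors(tasks, 0, executor_cores=4,
                           min_executors=2, max_executors=20)
    for name, tasks in phases
}
```

Under these assumed numbers the heavy phase is capped by the maximum, while the light phases fall back toward the minimum, which is the resource saving that static allocation cannot provide.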
