Spark Memory Allocation Spark Performance Tuning

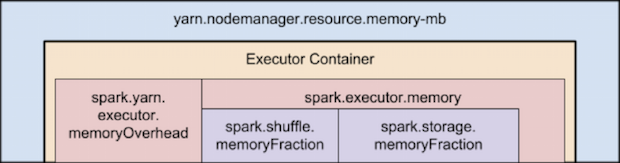

Spark Performance Tuning Best Practices (Spark By Examples)

This section starts with an overview of memory management in Spark, then discusses specific strategies you can use to make more efficient use of memory in your application. In this guide, we break down Spark's memory model on YARN into simple concepts, complete with step-by-step calculations and real-world tuning strategies.
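As one example of the kind of step-by-step calculation mentioned above, the sketch below estimates how much memory YARN has to grant for a single executor. It assumes the documented defaults for `spark.executor.memoryOverhead` (10% of executor memory, with a 384 MiB floor). The function name `yarn_container_memory_mib` is hypothetical, and the sketch deliberately ignores off-heap and PySpark memory settings as well as YARN's rounding to its minimum allocation size.

```python
def yarn_container_memory_mib(executor_memory_mib,
                              overhead_factor=0.10,
                              min_overhead_mib=384):
    """Estimate the container size (MiB) requested for one executor.

    Assumes default overhead settings: overhead is the larger of
    (executor memory * overhead_factor) and min_overhead_mib.
    """
    # The overhead covers non-heap usage such as thread stacks and native buffers.
    overhead = max(int(executor_memory_mib * overhead_factor), min_overhead_mib)
    return executor_memory_mib + overhead


if __name__ == "__main__":
    for heap in (2048, 4096, 8192):
        print(f"--executor-memory {heap}m -> container ~{yarn_container_memory_mib(heap)} MiB")
```

For small executors the 384 MiB floor dominates, so the relative overhead is larger than 10%; this is one reason very small executors tend to waste cluster memory.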

Tuning Resource Allocation

With practical examples in Scala and PySpark, you'll learn how to fine-tune memory settings to build robust, high-performance Spark applications. Spark operates by distributing data across a cluster's executors, where it is processed in partitions: logical chunks of data handled in parallel. From understanding key configurations to identifying common pitfalls and applying advanced tuning strategies, this guide will help you unlock Spark's full potential. In this post, we'll walk through best practices for optimizing Spark resource allocation, focusing on how to use the spark-submit command effectively to configure executors and memory. Discover why your Spark cluster is losing money with a deep dive into Spark memory management, covering the complexities of memory allocation, off-heap memory, and task management for optimal performance.
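To make the spark-submit discussion concrete, here is a minimal sketch that assembles a `spark-submit` command line from a few resource settings. The flags `--master`, `--num-executors`, `--executor-cores`, `--executor-memory`, and `--driver-memory` are standard spark-submit options; the helper `build_spark_submit`, the script name `my_job.py`, and the specific values are hypothetical placeholders, not recommendations.

```python
import shlex


def build_spark_submit(app, num_executors, executor_cores,
                       executor_memory, driver_memory, master="yarn"):
    """Return a spark-submit argument list for the given resource settings."""
    return [
        "spark-submit",
        "--master", master,
        "--num-executors", str(num_executors),    # executors requested from the cluster manager
        "--executor-cores", str(executor_cores),  # concurrent tasks per executor
        "--executor-memory", executor_memory,     # JVM heap per executor, e.g. "8g"
        "--driver-memory", driver_memory,         # JVM heap for the driver
        app,
    ]


if __name__ == "__main__":
    # Hypothetical job and sizes, for illustration only.
    cmd = build_spark_submit("my_job.py", 10, 4, "8g", "4g")
    print(shlex.join(cmd))
```

Building the command as a list keeps each flag paired with its value and makes it safe to pass to `subprocess.run` without shell quoting issues.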

Spark Out Of Memory Issue: Memory Tuning And Management In PySpark

Since Spark 1.6.0, a new memory manager has replaced the static memory manager and provides Spark with dynamic memory allocation. It allocates a region of memory as a unified memory container that is shared by storage and execution. This is a practical guide to tuning Spark executor memory, cores, and driver settings on Dataproc clusters for optimal job performance. Spark performance won't be fully optimized without proper tuning settings, including estimates of memory consumption, partition size, and number of cores. Optimizing Spark for performance is crucial in real-world applications. Tuning Spark involves understanding memory management, task parallelism, and reducing shuffling and overhead. This section focuses on the techniques and configurations that can enhance Spark's performance.
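The unified memory container described above can be sized with simple arithmetic. The sketch below assumes the defaults used by recent Spark releases: 300 MiB of reserved memory, `spark.memory.fraction` = 0.6, and `spark.memory.storageFraction` = 0.5. The function name `unified_memory_regions` is hypothetical, and the heap the JVM actually reports is usually a little smaller than the configured executor memory, so treat the results as approximations.

```python
RESERVED_MIB = 300  # memory Spark sets aside before applying spark.memory.fraction


def unified_memory_regions(heap_mib, memory_fraction=0.6, storage_fraction=0.5):
    """Approximate the memory regions (MiB) inside one executor heap."""
    usable = max(heap_mib - RESERVED_MIB, 0)
    unified = usable * memory_fraction    # shared by storage and execution
    storage = unified * storage_fraction  # portion of cached data protected from eviction
    return {
        "unified": unified,
        "storage": storage,
        "execution": unified - storage,
        "user": usable - unified,         # user data structures and internal metadata
    }


if __name__ == "__main__":
    for name, size in unified_memory_regions(4096).items():
        print(f"{name:>9}: {size:8.1f} MiB")
```

The storage/execution split is a soft boundary: either side can borrow free memory from the other, and execution can evict cached blocks only until storage shrinks to the `storage` amount computed here.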
