Decoding Vision Language Models: A Developer's Guide

The current trajectory of the field makes now the right time to build fluency with vision language model (VLM) architecture and tooling. This guide walks through how VLMs work, which architectures matter, what tools are available today, and how to start building. Every section maps to a real pain point developers hit when they first approach this space.

The guide is written for developers who want to understand and implement VLMs. It covers the fundamental concepts, explaining how VLMs bridge the gap between visual and textual data through multimodal learning.

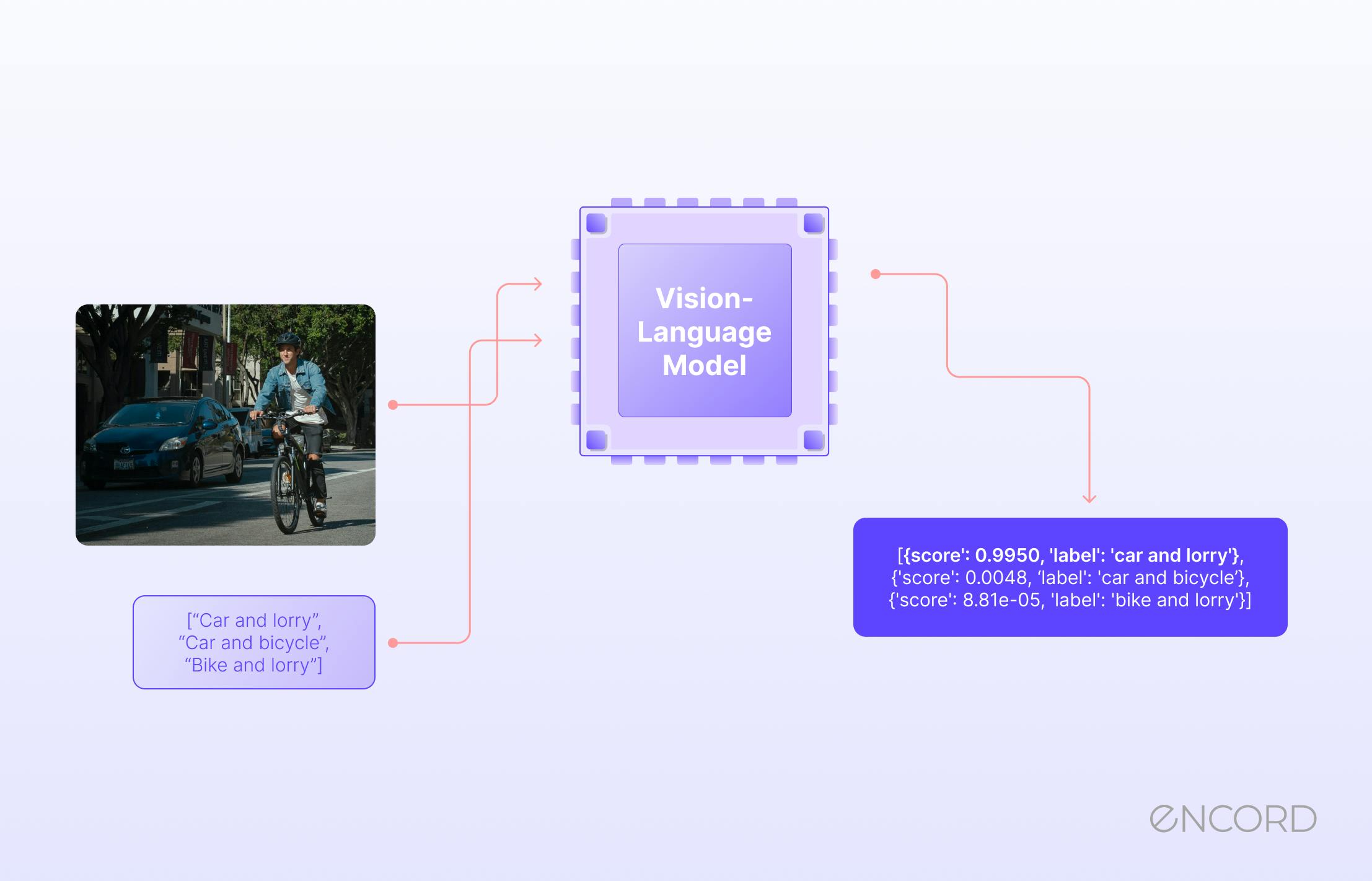

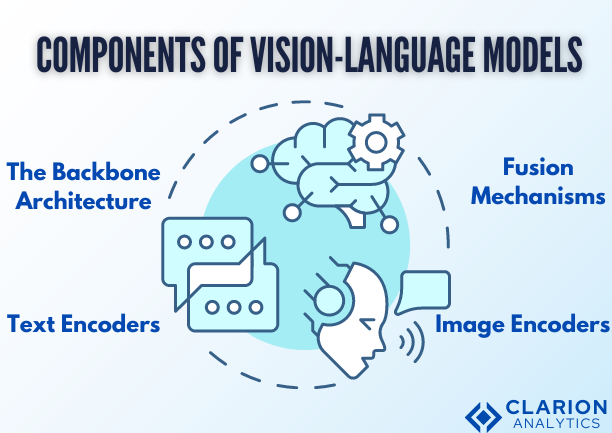

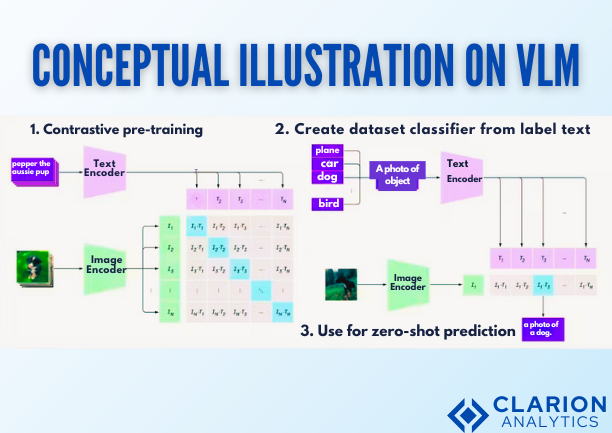

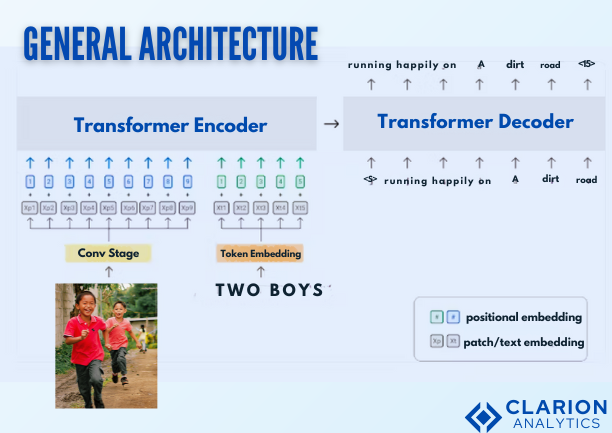

We're in an exciting era where machines can make sense of both images and language, and at the center of this shift are foundation models in computer vision. Vision language models are one subtype of the growing number of versatile and powerful multimodal AI models now emerging. As with developing and deploying any AI model, however, there are challenges: potential bias, cost, complexity, and hallucinations.

A typical VLM architecture consists of an image encoder to extract visual features, a projection layer to align visual and textual representations, and a language model to process or generate text. This guide walks through building a vision language model from architecture to training, with practical insights, working code, and the engineering decisions that matter.
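The three components named above (image encoder, projection layer, language model) can be sketched as a toy data flow. This is a minimal illustration under stated assumptions, not a real model: the dimensions, the random weights, and the helper names (`image_encoder`, `projection_layer`, `build_lm_input`) are all hypothetical, and prepending visual tokens to the text embeddings is one common design choice assumed here rather than something the text prescribes. Plain Python lists are used to keep the sketch dependency-free; a real implementation would use a tensor library.

```python
import random

random.seed(0)

PATCH_DIM = 12   # flattened pixel values per image patch (hypothetical)
VISION_DIM = 8   # width of the toy image encoder output (hypothetical)
TEXT_DIM = 6     # embedding width of the toy language model (hypothetical)


def random_matrix(rows, cols):
    """Return a rows x cols matrix of small random placeholder weights."""
    return [[random.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def image_encoder(patches, weights):
    """Stand-in for a vision encoder: one feature vector per image patch."""
    return matmul(patches, weights)


def projection_layer(visual_features, weights):
    """Linear map from the vision feature space into the text embedding space."""
    return matmul(visual_features, weights)


def build_lm_input(visual_tokens, text_embeddings):
    """Assumed design: prepend projected visual tokens to the text embeddings."""
    return visual_tokens + text_embeddings


# Four image patches and three text tokens, all random placeholders.
patches = random_matrix(4, PATCH_DIM)
text_embeddings = random_matrix(3, TEXT_DIM)

encoder_weights = random_matrix(PATCH_DIM, VISION_DIM)
projection_weights = random_matrix(VISION_DIM, TEXT_DIM)

visual_features = image_encoder(patches, encoder_weights)              # 4 x VISION_DIM
visual_tokens = projection_layer(visual_features, projection_weights)  # 4 x TEXT_DIM
lm_input = build_lm_input(visual_tokens, text_embeddings)              # 7 x TEXT_DIM

print(len(lm_input), len(lm_input[0]))
```

The point of the projection layer shows up in the shapes: the encoder emits vectors of width `VISION_DIM`, the language model expects width `TEXT_DIM`, and the projection is what lets both kinds of token sit in one sequence.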

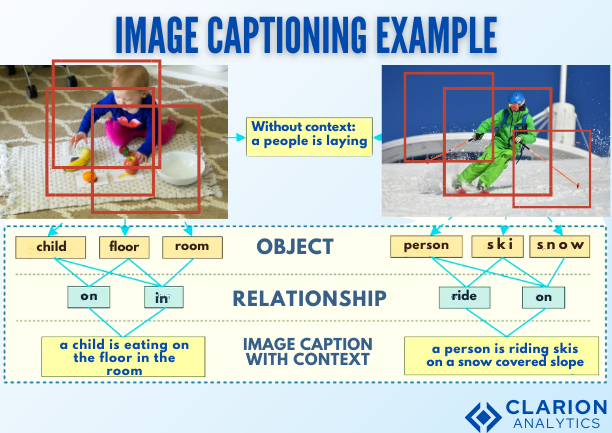

This tutorial provides a systematic introduction to vision-language-action (VLA) models, designed for beginners looking to explore this exciting intersection of computer vision, natural language processing, robotics, and artificial intelligence.

First, we introduce what VLMs are, how they work, and how to train them. Then, we present and discuss approaches to evaluating VLMs. Although this work primarily focuses on mapping images to language, we also discuss extending VLMs to videos.

VLMs map connections between visual features and textual descriptions. They integrate vision encoders and language models to perform multimodal tasks such as image captioning, visual question answering (VQA), and image generation from text. They are built using transformer-based architectures trained on large image–text datasets. VLMs have also evolved to understand multi-image and video inputs, enabling advanced vision-language tasks such as visual question answering, captioning, search, and summarization.
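One way to picture how a VLM connects visual features to textual descriptions is to place an image and several candidate captions in a shared embedding space and rank the captions by similarity. The sketch below assumes such a shared space already exists; the text does not name this technique, and the embedding values are hand-written placeholders, not the output of any real model. The function names `cosine_similarity` and `best_caption` are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_caption(image_embedding, caption_embeddings):
    """Return the caption whose embedding is most similar to the image embedding."""
    return max(
        caption_embeddings,
        key=lambda caption: cosine_similarity(image_embedding, caption_embeddings[caption]),
    )


# Placeholder embeddings in an assumed shared image-text space.
image_embedding = [0.9, 0.1, 0.2]
caption_embeddings = {
    "a dog playing in a park": [0.8, 0.2, 0.1],
    "a bowl of fruit": [0.1, 0.9, 0.3],
    "a city skyline at night": [0.2, 0.1, 0.9],
}

print(best_caption(image_embedding, caption_embeddings))
```

The same ranking step, run over many images for one text query, is the basic shape of the search task mentioned above.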

