Introduction To Vision Language Models

First, we introduce what VLMs are, how they work, and how to train them. Then, we present and discuss approaches to evaluate VLMs. Although this work primarily focuses on mapping images to language, we also discuss extending VLMs to videos.

For a vision language model (VLM) to work, text and images must be combined in a meaningful way so that they can be learned jointly. How can we do that? One simple, common approach starts from image-text pairs: extract image features with an image encoder and text features with a text encoder. The image encoder can be a CNN or a transformer-based architecture.
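To make the image-text pair idea concrete, here is a minimal sketch of one way the two sets of features could be learned jointly. It assumes a contrastive objective (matching pairs are pulled together, mismatched pairs pushed apart), which the text above does not specify. The feature vectors are hypothetical stand-ins for encoder outputs, and the function names and the `temperature` value are illustrative choices, not part of any particular library.

```python
import math

def l2_normalize(vec):
    """Scale a feature vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def similarity_matrix(image_feats, text_feats):
    """Cosine similarity between every image (rows) and every text (columns)."""
    imgs = [l2_normalize(v) for v in image_feats]
    txts = [l2_normalize(v) for v in text_feats]
    return [[sum(a * b for a, b in zip(i, t)) for t in txts] for i in imgs]

def cross_entropy_on_diagonal(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)

def contrastive_loss(image_feats, text_feats, temperature=0.07):
    """Symmetric image-to-text and text-to-image loss (assumed objective)."""
    sims = similarity_matrix(image_feats, text_feats)
    logits = [[s / temperature for s in row] for row in sims]
    logits_t = [list(col) for col in zip(*logits)]  # text-to-image direction
    return 0.5 * (cross_entropy_on_diagonal(logits)
                  + cross_entropy_on_diagonal(logits_t))

# Hypothetical encoder outputs: row i of each list belongs to the same pair.
image_feats = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.2]]
text_feats = [[0.9, 0.0, 0.1], [0.1, 0.8, 0.0]]

aligned = contrastive_loss(image_feats, text_feats)
shuffled = contrastive_loss(image_feats, text_feats[::-1])
print(f"aligned pairs loss:  {aligned:.4f}")
print(f"shuffled pairs loss: {shuffled:.4f}")
```

With correctly paired features the loss is lower than with the captions shuffled. That gap is the signal a training loop would use to update both encoders; a real system would replace the hard-coded vectors with the outputs of trainable image and text encoders.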

Learn about vision language models (VLMs), the cutting-edge AI technology that combines image understanding with natural language processing for multimodal intelligence. In this blog post I aim to provide a structured, technical introduction to them: what they are, how they work, notable architectures, and how to effectively prompt, fine-tune, and use them.

See also: "When and why vision language models behave like bags of words and what to do about it?"

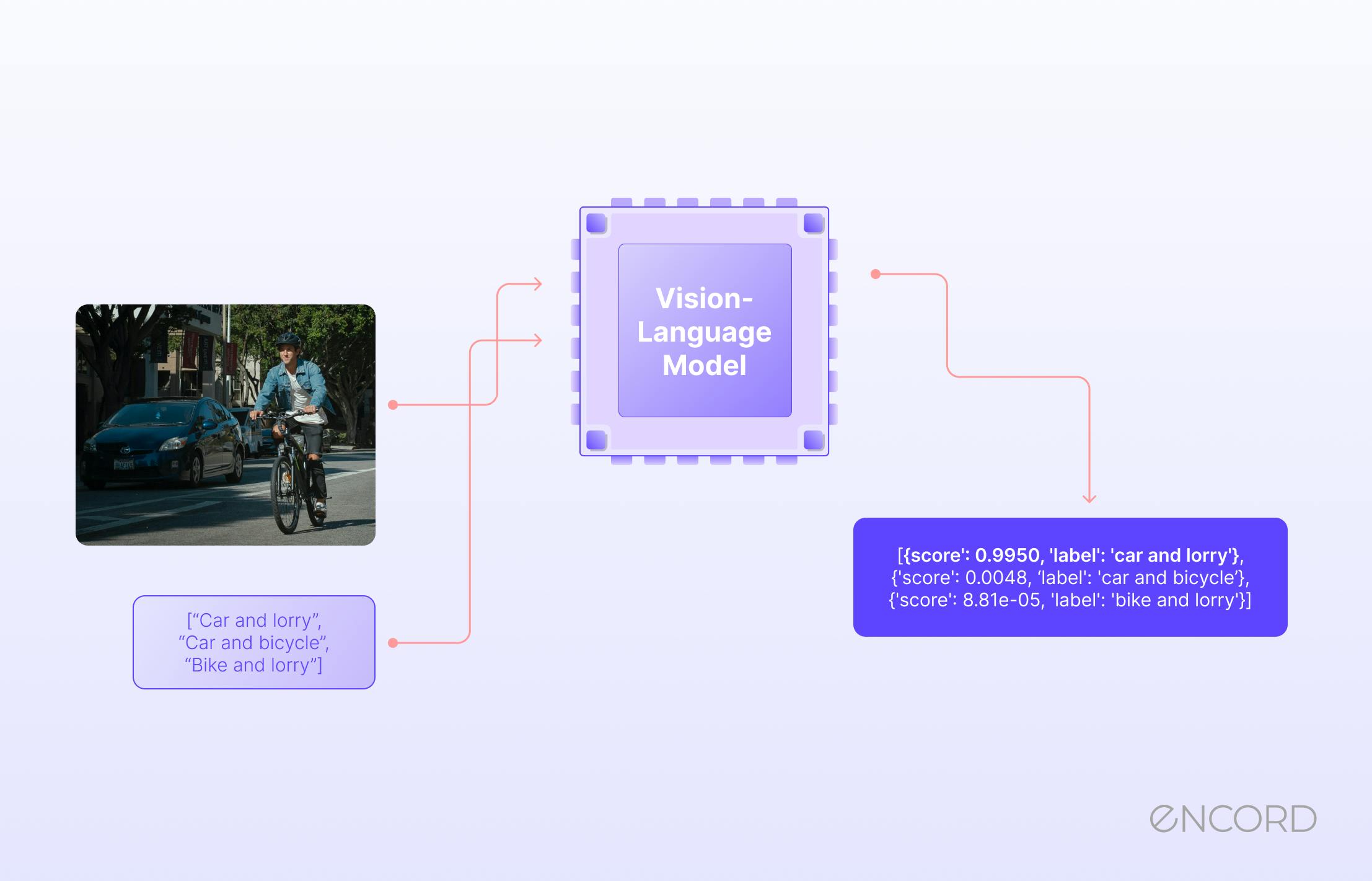

This tutorial provides a systematic introduction to vision language action (VLA) models, designed for beginners looking to explore this intersection of computer vision, natural language processing, robotics, and artificial intelligence. This introduction to VLMs is meant to help anyone who would like to enter the field: it explains what VLMs are, how they work, and how to train them. Following the recent popularity of large language models (LLMs), several attempts have been made to extend them to the visual domain. Vision language models (VLMs) are AI systems that combine computer vision and natural language processing to understand and generate language grounded in visual information. We explore the vision language modeling paradigm, highlight key challenges in feature alignment, scalability, data, and evaluation, and review notable progress in the field.

