Comparison of BLIP-2 Captioning Models with 1-Click Windows and RunPod Installers

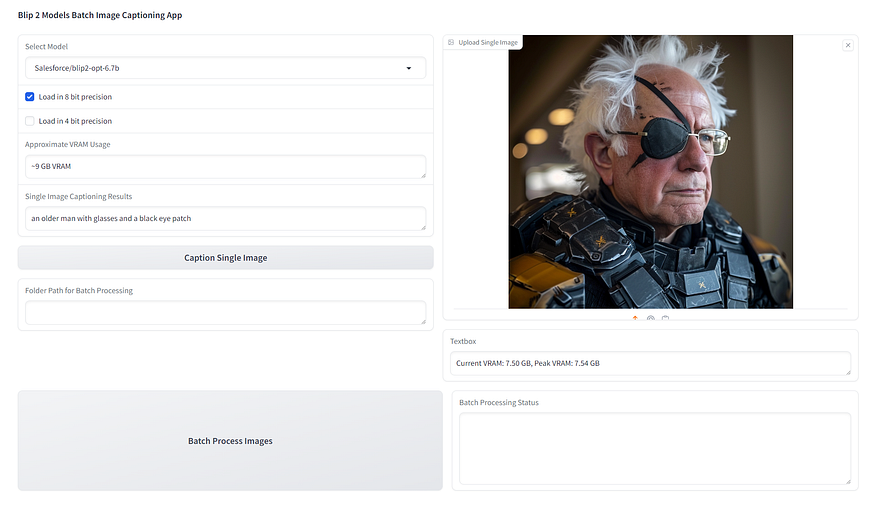

I recently coded a Gradio app from scratch for the well-known BLIP-2 captioning models. All precision modes (16-bit, 8-bit and 4-bit) work on Windows as well with our special installers. 16-bit mode is the fastest and 8-bit mode is the slowest; 4-bit mode is slower than 16-bit but faster than 8-bit.
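
To make the three precision modes concrete, here is a minimal sketch of how they could map onto Hugging Face Transformers loading options. It is not the app's actual code: `PRECISION_PLANS`, `precision_plan`, `approx_weight_gb` and `load_blip2` are hypothetical names, and the checkpoint `Salesforce/blip2-opt-2.7b` is only an assumed example. Loading for real needs `torch`, `transformers` and `accelerate` (for `device_map="auto"`), plus `bitsandbytes` for the 8-bit and 4-bit modes.

```python
# Hypothetical mapping from the app's precision modes to loading settings.
# "dtype" is used for non-quantized modules and, in 4-bit mode, as the compute dtype.
PRECISION_PLANS = {
    "16bit": {"bits": 16, "dtype": "float16", "quantization": None},
    "8bit": {"bits": 8, "dtype": "float16", "quantization": "8bit"},
    "4bit": {"bits": 4, "dtype": "float16", "quantization": "4bit"},
}


def precision_plan(mode: str) -> dict:
    """Return a copy of the loading plan for "16bit", "8bit" or "4bit"."""
    try:
        return dict(PRECISION_PLANS[mode])
    except KeyError:
        raise ValueError(f"unknown precision mode: {mode!r}") from None


def approx_weight_gb(num_params: float, mode: str) -> float:
    """Rough weights-only footprint in GB: params * bits / 8 bytes.

    Ignores activations, the KV cache and layers bitsandbytes leaves unquantized.
    """
    return num_params * precision_plan(mode)["bits"] / 8 / 1e9


def load_blip2(model_id: str = "Salesforce/blip2-opt-2.7b", mode: str = "16bit"):
    """Load a BLIP-2 processor and model in the requested precision (lazy imports)."""
    import torch
    from transformers import BitsAndBytesConfig, Blip2ForConditionalGeneration, Blip2Processor

    plan = precision_plan(mode)
    dtype = getattr(torch, plan["dtype"])
    quant_config = None
    if plan["quantization"] == "8bit":
        quant_config = BitsAndBytesConfig(load_in_8bit=True)
    elif plan["quantization"] == "4bit":
        # Weights stored in 4-bit, matrix multiplications computed in float16
        quant_config = BitsAndBytesConfig(load_in_4bit=True, bnb_4bit_compute_dtype=dtype)

    processor = Blip2Processor.from_pretrained(model_id)
    model = Blip2ForConditionalGeneration.from_pretrained(
        model_id,
        torch_dtype=dtype,
        device_map="auto",
        quantization_config=quant_config,
    )
    return processor, model
```

For example, `processor, model = load_blip2(mode="4bit")` would trade speed for lower VRAM. The weight estimate only shows why lower precision saves memory (1 billion parameters is about 2 GB at 16-bit and about 0.5 GB at 4-bit); the speed ordering above comes from my tests, not from this code.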

These interfaces support batch captioning with various image vision models, with a focus on caption quality and speed. See all the information below. Install and use SOTA image captioning models on your computer with 1 click. 8-bit loading is supported as well. The apps include 90 CLIP vision models and 5 caption models.
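
Batch captioning is mostly a loop over groups of images with one model that stays loaded the whole time. The sketch below shows that pattern under stated assumptions: `caption_folder`, `chunked` and `IMAGE_SUFFIXES` are hypothetical names, `caption_batch` stands for any callable that wraps an already-loaded captioning model, and writing each caption to a `.txt` file next to its image is an assumed output format, not necessarily what the apps do.

```python
from pathlib import Path
from typing import Callable, Dict, Iterator, List, Sequence

# Assumption: which file extensions count as images.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".webp"}


def chunked(items: Sequence, size: int) -> Iterator[list]:
    """Yield consecutive batches of at most `size` items."""
    if size < 1:
        raise ValueError("batch size must be at least 1")
    for start in range(0, len(items), size):
        yield list(items[start:start + size])


def caption_folder(
    folder: Path,
    caption_batch: Callable[[List[Path]], List[str]],
    batch_size: int = 8,
) -> Dict[str, str]:
    """Caption every image in `folder`, one batch at a time.

    `caption_batch` (hypothetical) wraps a model that was loaded once beforehand;
    it is reused for every batch instead of reloading the model.
    Each caption is also written to a .txt file with the same stem as its image.
    """
    images = sorted(
        p for p in Path(folder).iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
    results: Dict[str, str] = {}
    for batch in chunked(images, batch_size):
        captions = caption_batch(batch)
        if len(captions) != len(batch):
            raise ValueError("caption_batch must return one caption per image")
        for path, caption in zip(batch, captions):
            path.with_suffix(".txt").write_text(caption, encoding="utf-8")
            results[path.name] = caption
    return results
```

In practice `caption_batch` would open each path as an RGB image, pass the list to the BLIP-2 processor in a single call and decode the generated IDs; keeping that model outside the loop is what avoids reloading it for every batch.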

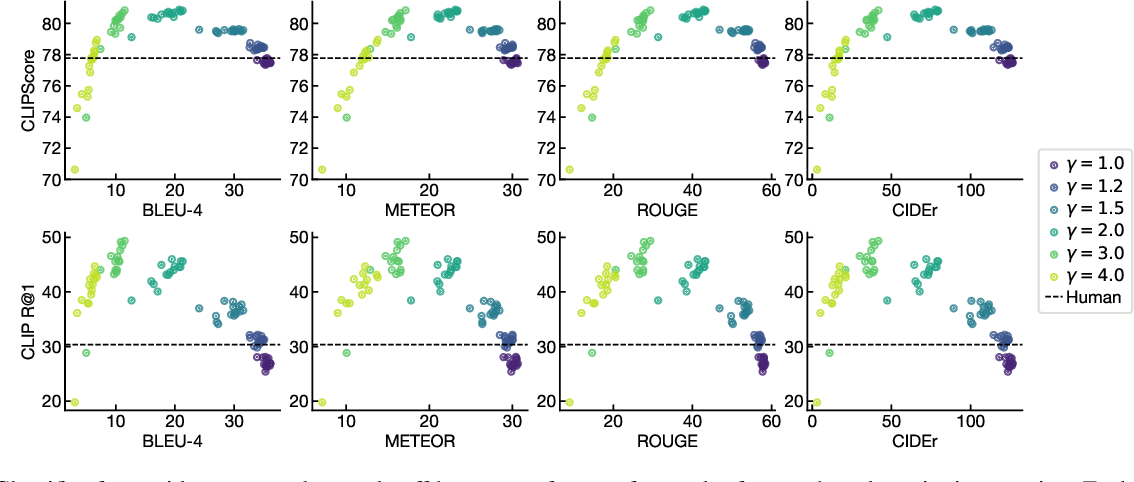

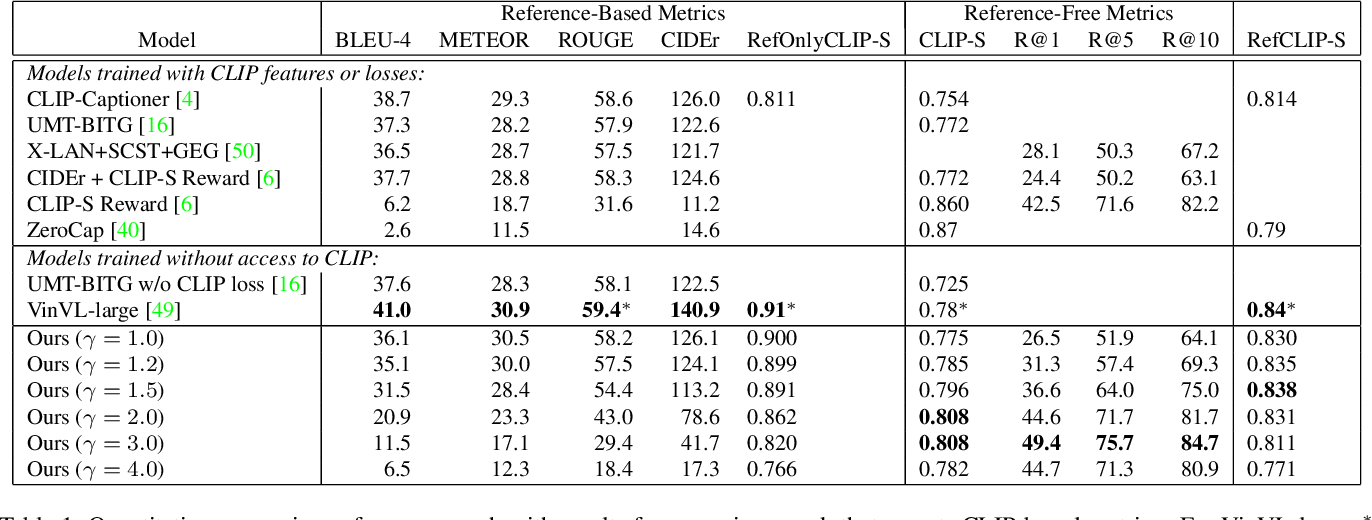

This guide introduces BLIP-2 from Salesforce Research, which enables a suite of state-of-the-art visual language models that are now available in 🤗 Transformers. We'll show you how to use it for image captioning, prompted image captioning, visual question answering, and chat-based prompting. This document covers the implementation of image captioning using Salesforce's BLIP-2 (Bootstrapping Language-Image Pre-training) model through Hugging Face Transformers.

When using pre-alpha or alpha 1, batch processing was slower on a single GPU and the model was being loaded again. This issue has been fixed. All apps have the following features. If any of them are broken, please report it and let me know. Using 4-bit quantization reduces VRAM usage but also slows down generation.

Just three months ago, in July, I spent a good deal of time testing image captioning models. Cutting-edge models of the time, such as Microsoft Azure Computer Vision, were able to produce fairly reasonable captions most of the time.
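
The four uses listed above differ mainly in the text prompt passed alongside the image. Here is a minimal sketch, assuming the `Question: ... Answer:` prompt format used in Hugging Face's BLIP-2 examples; `build_prompt` and `generate_text` are hypothetical helpers, and `processor`/`model` are assumed to be an already-loaded BLIP-2 pair in float16. Plain captioning passes no prompt, and prompted captioning passes a text prefix (for example `"a photo of"`) directly as `prompt`.

```python
from typing import List, Optional, Tuple


def build_prompt(
    question: Optional[str] = None,
    history: Optional[List[Tuple[str, str]]] = None,
) -> Optional[str]:
    """Build a BLIP-2 text prompt.

    No question -> None (plain captioning).
    Question only -> visual question answering.
    Question plus earlier (question, answer) turns -> chat-style prompting.
    """
    if question is None:
        return None
    turns = [f"Question: {q} Answer: {a}." for q, a in (history or [])]
    turns.append(f"Question: {question} Answer:")
    return " ".join(turns)


def generate_text(processor, model, image, prompt=None, max_new_tokens=30):
    """Run generation with an already-loaded BLIP-2 processor and model."""
    import torch

    # Cast floating-point inputs (pixel values) to float16 to match the model
    inputs = processor(images=image, text=prompt, return_tensors="pt").to(model.device, torch.float16)
    generated_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return processor.batch_decode(generated_ids, skip_special_tokens=True)[0].strip()
```

For a chat-style turn, the previous questions and answers are simply concatenated in front of the new question, so `build_prompt("why?", [("which city is this?", "singapore")])` produces `Question: which city is this? Answer: singapore. Question: why? Answer:`.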