Caption Images or Learn How to Prompt with CLIP Vision of SDXL and BLIP-2 on Windows and RunPod

Thesudio CLIP Vision SDXL ViT-H Safetensors (Hugging Face)

Install and run SOTA (state-of-the-art) image captioning models on your own computer with one click. Models can also be loaded in 8-bit, and the package includes 90 CLIP vision models and 5 captioning models.

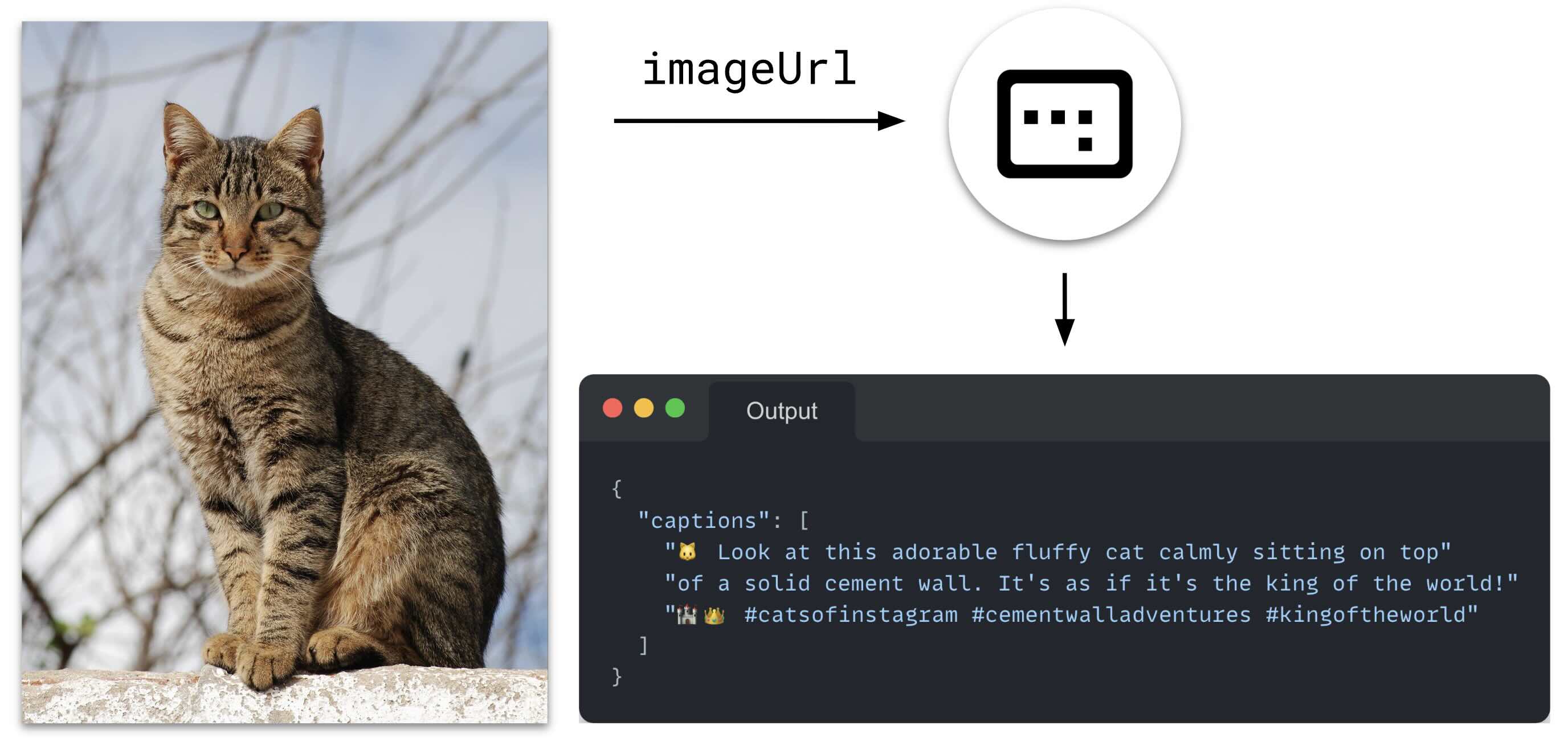

SDXL LoRA Training Using RunPod.io (Weird Wonderful AI Art)

There is also a script of SOTA ("the very best") image captioning models for Stable Diffusion and other uses. RunPod network volumes can attach to multiple pods at the same time, so you can do the environment setup and model transfer on a cheap CPU pod and rent the RTX 4090 pod only for the actual training. The post also records four sd-scripts gotchas hit while training an Illustrious XL LoRA.

This guide shows how to caption images effectively with CLIP vision on Windows and RunPod, using SDXL and BLIP-2. CLIP (Contrastive Language–Image Pre-training) is a neural network that maps visual concepts to natural language. It is trained on a massive number of image-caption pairs, learning to place each image and its matching caption close together in a shared embedding space.
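To make the CLIP description concrete, here is a minimal pure-Python sketch of the two ideas behind it, using made-up 3-dimensional vectors in place of real CLIP embeddings (a real model produces much larger vectors from separate image and text encoders). `rank_captions` scores candidate prompts against one image by cosine similarity, which is roughly how CLIP-based prompt "interrogation" picks the text that best matches an image. `clip_contrastive_loss` is the symmetric cross-entropy objective CLIP is trained with, where image *i* in a batch should match text *i*. The function names, the toy vectors, and the fixed temperature of 0.07 (CLIP learns this value during training; 0.07 is its initial setting) are assumptions for illustration only.

```python
import math
from typing import Dict, List, Sequence, Tuple


def normalize(v: Sequence[float]) -> List[float]:
    """Scale a vector to unit length; CLIP compares L2-normalized embeddings."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity: dot product of the two unit-length vectors."""
    return sum(x * y for x, y in zip(normalize(a), normalize(b)))


def rank_captions(
    image_emb: Sequence[float], caption_embs: Dict[str, Sequence[float]]
) -> List[Tuple[str, float]]:
    """Score every candidate caption against one image, best match first."""
    scored = [(text, cosine(image_emb, emb)) for text, emb in caption_embs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def log_softmax(row: Sequence[float]) -> List[float]:
    """Numerically stable log-softmax of one row of logits."""
    m = max(row)
    log_sum = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - log_sum for x in row]


def clip_contrastive_loss(image_embs, text_embs, temperature: float = 0.07) -> float:
    """Symmetric cross-entropy for a batch in which image i is paired with text i."""
    n = len(image_embs)
    imgs = [normalize(v) for v in image_embs]
    txts = [normalize(v) for v in text_embs]
    # logits[i][j] = similarity of image i and text j, sharpened by the temperature
    logits = [
        [sum(x * y for x, y in zip(imgs[i], txts[j])) / temperature for j in range(n)]
        for i in range(n)
    ]
    # Image -> text: in row i, the correct "class" is column i.
    loss_i2t = -sum(log_softmax(logits[i])[i] for i in range(n)) / n
    # Text -> image: the same idea applied to the columns (the transposed matrix).
    columns = [[logits[i][j] for i in range(n)] for j in range(n)]
    loss_t2i = -sum(log_softmax(columns[j])[j] for j in range(n)) / n
    return (loss_i2t + loss_t2i) / 2


if __name__ == "__main__":
    # Toy 3-D "embeddings"; a real CLIP model would compute these from its encoders.
    captions = {
        "a photo of a cat": [1.0, 0.0, 0.0],
        "a photo of a dog": [0.0, 1.0, 0.0],
        "a landscape painting": [0.0, 0.0, 1.0],
    }
    for text, score in rank_captions([0.9, 0.1, 0.0], captions):
        print(f"{score:.3f}  {text}")
```

With these toy vectors the image embedding points mostly along the "cat" axis, so "a photo of a cat" ranks first. Training with the contrastive loss is what pushes real image and text embeddings into that kind of alignment: the loss is small when each image is most similar to its own caption and large when captions are swapped.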

GitHub simonw/blip-caption: Generate Captions for Images

The batch image captioning models currently available are:

- CogVLM, with 4-bit, 8-bit, and 16-bit quantization
- LLaVA, including the 34B model, with 4-bit, 8-bit, and 16-bit quantization
- BLIP-2 models

The scripts can be downloaded from the Patreon post "SOTA (the very best) image captioning models script for Stable Diffusion and more" (post ID 90744385).

This guide covers training an SDXL LoRA. It aims to get you to a high-quality LoRA that you can use with SDXL models as fast as possible. "Fast" is relative, of course: gathering a high-quality training dataset will take quite a bit of time.
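The 4-bit, 8-bit, and 16-bit options listed for CogVLM and LLaVA mainly trade output quality for memory. A back-of-the-envelope estimate for the weights is parameters × bits ÷ 8 bytes. The sketch below wraps that in a hypothetical helper, `weight_memory_gb`, and applies it to a nominal 34B model. It counts weights only, an assumption that understates real VRAM use (activations, the KV cache, quantization scales, and framework overhead all add to it).

```python
def weight_memory_gb(num_params: float, bits: int) -> float:
    """Approximate memory for the model weights alone, in decimal GB (1e9 bytes).

    Ignores activations, the KV cache, quantization scales, and framework
    overhead, so actual usage will be higher.
    """
    bytes_per_param = bits / 8
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    # "34B" is a nominal size; the exact parameter count of a checkpoint differs.
    for bits in (16, 8, 4):
        print(f"34B parameters at {bits}-bit: ~{weight_memory_gb(34e9, bits):.0f} GB of weights")
```

By this estimate a 34B model needs about 68 GB of weights at 16-bit, 34 GB at 8-bit, and 17 GB at 4-bit. Only the 4-bit variant is plausible on a single 24 GB card such as the RTX 4090, and even then the numbers are lower bounds rather than guarantees.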

Using the BLIP Model for Image Captioning
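A captioning pass is usually run over a whole training folder, saving one caption per image. The sketch below assumes the common convention of a same-named `.txt` file next to each image (check your trainer's caption-extension setting). `write_captions` and `make_blip_captioner` are hypothetical helpers, not part of the scripts mentioned in this article. The BLIP wrapper uses the Hugging Face `transformers` classes `BlipProcessor` and `BlipForConditionalGeneration`; the default model ID `Salesforce/blip-image-captioning-base` is an assumption you can swap for another checkpoint. As written, the model runs on the CPU.

```python
from pathlib import Path
from typing import Callable, List

# Extensions treated as training images (an assumption; extend as needed).
IMAGE_EXTS = {".png", ".jpg", ".jpeg", ".webp"}


def find_images(folder: Path) -> List[Path]:
    """List image files directly inside `folder`, sorted by name."""
    return sorted(
        p for p in folder.iterdir() if p.is_file() and p.suffix.lower() in IMAGE_EXTS
    )


def write_captions(
    folder,
    caption_fn: Callable[[Path], str],
    prefix: str = "",
    overwrite: bool = False,
) -> List[Path]:
    """Caption each image and save the text to a same-named .txt file beside it."""
    folder = Path(folder)
    written = []
    for image_path in find_images(folder):
        txt_path = image_path.with_suffix(".txt")
        if txt_path.exists() and not overwrite:
            continue  # keep captions that already exist, e.g. hand-edited ones
        caption = caption_fn(image_path).strip()
        txt_path.write_text(prefix + caption, encoding="utf-8")
        written.append(txt_path)
    return written


def make_blip_captioner(
    model_id: str = "Salesforce/blip-image-captioning-base",
    max_new_tokens: int = 30,
) -> Callable[[Path], str]:
    """Build a caption function backed by a BLIP model (needs transformers, torch, Pillow)."""
    # Imported here so the helpers above work without these packages installed.
    from PIL import Image
    from transformers import BlipForConditionalGeneration, BlipProcessor

    processor = BlipProcessor.from_pretrained(model_id)
    model = BlipForConditionalGeneration.from_pretrained(model_id)

    def caption(path: Path) -> str:
        image = Image.open(path).convert("RGB")
        inputs = processor(images=image, return_tensors="pt")
        output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
        return processor.decode(output_ids[0], skip_special_tokens=True)

    return caption
```

A call such as `write_captions("dataset", make_blip_captioner(), prefix="mytoken, ")` would caption every image in a folder named `dataset`, with `mytoken` standing in for a hypothetical trigger word. Keeping the caption function pluggable means a BLIP-2, LLaVA, or CogVLM captioner could be dropped in without changing the file-writing logic, and skipping existing `.txt` files unless `overwrite=True` protects captions you have already corrected by hand.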
