Best Platform for Python App Deployment: Hugging Face Spaces with Docker

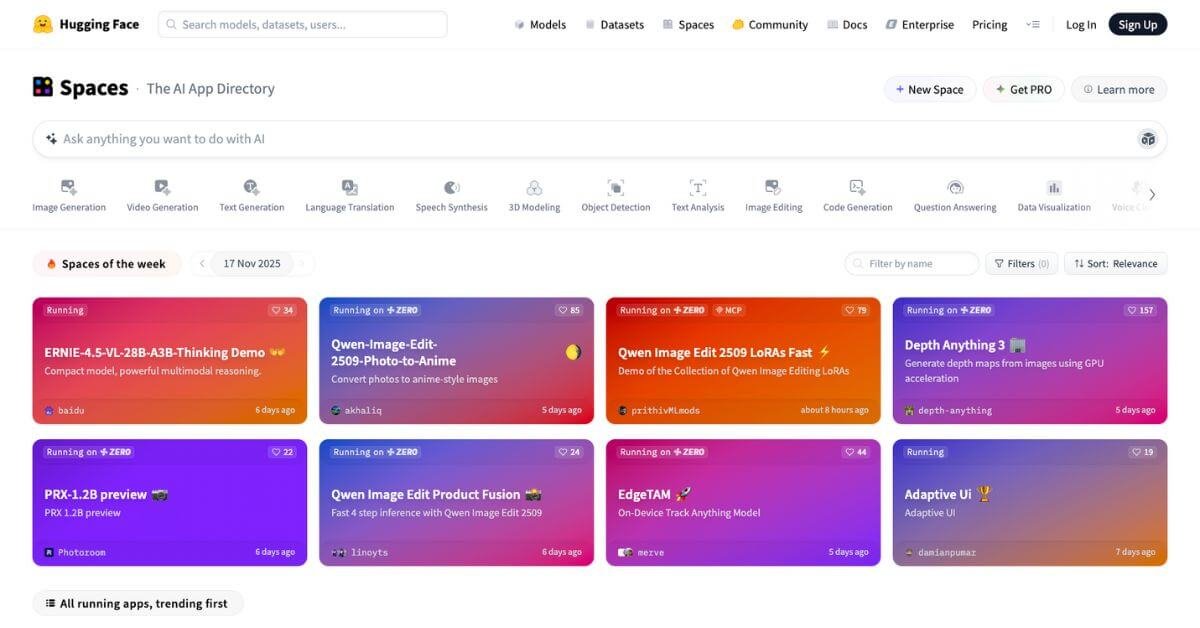

Python Docker Hugging Face: A Hugging Face Space by Gnharishkumar

Learn about the Hugging Face Hub and how to use its Docker Spaces to build machine learning apps. Docker provides consistent packaging, and Hugging Face Spaces provides accessible, free deployment. Hugging Face Spaces isn’t just for ML demos; it’s a powerful platform that supports…

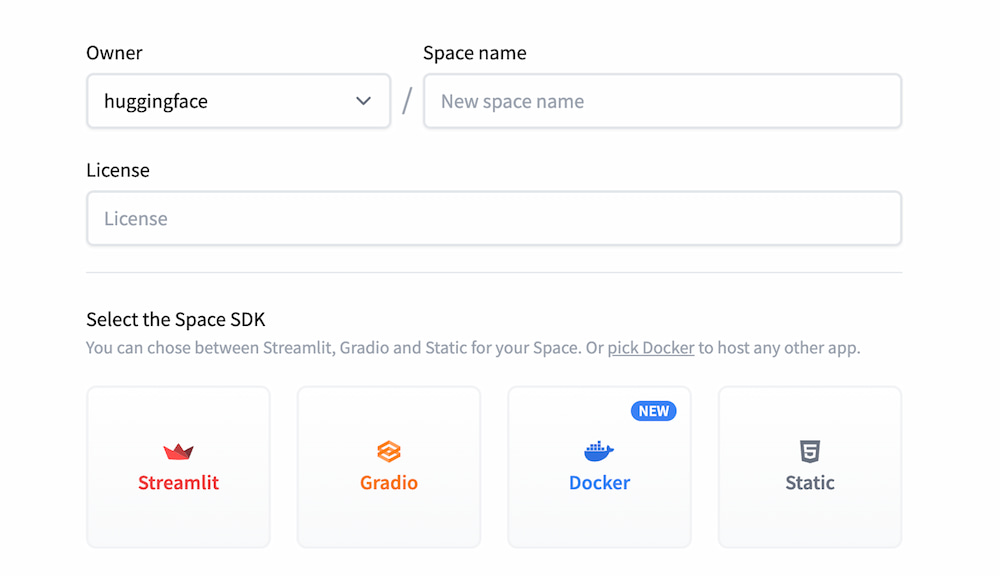

Deploy Langflow on Hugging Face Spaces (Langflow Documentation)

This tutorial walks through building a FastAPI application, packaging it with Docker, and deploying the API on Hugging Face. FastAPI is a modern, fast web framework for building APIs with Python 3.7+ based on standard Python type hints.

Learn how to use Docker to build and deploy an LLM application on the Hugging Face cloud. Supporting tools include Gradio, LlamaIndex, LlamaParse, and Groq.

This blog post guides you through creating a simple text generation model using the Hugging Face Transformers library, building a FastAPI application that exposes a REST API for generating text, and deploying it in a Docker container.

Docker Spaces allow users to go beyond the limits of what was previously possible with the standard SDKs. From FastAPI and Go endpoints to Phoenix apps and MLOps tools, Docker Spaces can help in many different setups.
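As a rough illustration of a REST endpoint for text generation like the one described above, here is a minimal sketch. To stay dependency-free it uses Python's standard-library `http.server` as a stand-in for FastAPI, and `generate_text` is a hypothetical placeholder where a Transformers model call would go. The `/generate` path, the `prompt` field, and all function names are assumptions made for illustration; port 7860 is the port a Docker Space listens on by default unless `app_port` says otherwise.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Default port for Docker Spaces unless `app_port` is set in README.md.
PORT = 7860


def generate_text(prompt):
    """Hypothetical stand-in for a real model call (e.g. a Transformers pipeline)."""
    return f"[generated for: {prompt}]"


def handle_generate(body):
    """Validate a JSON request body and return (status_code, response_dict)."""
    try:
        payload = json.loads(body or b"{}")
    except ValueError:  # covers invalid JSON and undecodable bytes
        return 400, {"error": "body must be valid JSON"}
    prompt = payload.get("prompt") if isinstance(payload, dict) else None
    if not isinstance(prompt, str) or not prompt.strip():
        return 400, {"error": "'prompt' must be a non-empty string"}
    return 200, {"output": generate_text(prompt)}


class GenerateHandler(BaseHTTPRequestHandler):
    """Routes POST /generate (an assumed path) to handle_generate."""

    def do_POST(self):
        if self.path != "/generate":
            self._send(404, {"error": "not found"})
            return
        length = int(self.headers.get("Content-Length", 0))
        status, response = handle_generate(self.rfile.read(length))
        self._send(status, response)

    def _send(self, status, response):
        data = json.dumps(response).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(port=PORT):
    """Start the server on all interfaces so the container port is reachable."""
    ThreadingHTTPServer(("0.0.0.0", port), GenerateHandler).serve_forever()
```

Calling `serve()` starts the service. A FastAPI version would replace the handler class with a route function while keeping the same validation idea, and binding to `0.0.0.0` matters in either case because the process runs inside a container.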

Your First Docker Space: Text Generation with T5

Container hosting: Hugging Face Spaces with Docker

Container platforms let you deploy applications in isolated, portable environments that include all dependencies. They’re well suited to ML applications that need custom environments, specific packages, or persistent state.

Hugging Face Spaces combines Git-based repos, auto-scaling compute, and one-click ML demos. At its core, Spaces applies containerization (Docker) and orchestration principles from cloud computing, optimized for ML workflows.

In this tutorial, you will learn how to use Docker to create a container with all the necessary code and artifacts to load Hugging Face models and expose them as web service endpoints using Flask.

In this guide, we’ll walk through packaging a Hugging Face model into a Docker container, setting it up for inference, and deploying it on a GPU with RunPod.
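To make the packaging step more concrete, the following sketch renders the two files a Docker Space typically needs: a `README.md` whose front matter sets `sdk: docker`, and a `Dockerfile`. It is an illustration under stated assumptions, not a definitive setup: the entry point `app.py`, the `requirements.txt` file, the base image tag, the Space title, and the helper names are all hypothetical. The non-root user with uid 1000 follows the pattern shown in the Hugging Face Docker Spaces documentation.

```python
from pathlib import Path

# Space configuration lives in the YAML front matter of README.md.
README_FRONT_MATTER = """\
---
title: {title}
sdk: docker
app_port: {port}
---
"""

# Assumes the repo contains app.py and requirements.txt (hypothetical names).
DOCKERFILE = """\
FROM python:3.11-slim

# Run as a non-root user with uid 1000.
RUN useradd -m -u 1000 user
USER user
ENV PATH="/home/user/.local/bin:$PATH"
WORKDIR /app

# Install dependencies first so Docker can cache this layer.
COPY --chown=user requirements.txt requirements.txt
RUN pip install --no-cache-dir -r requirements.txt

COPY --chown=user . /app
EXPOSE {port}
CMD ["python", "app.py"]
"""


def render_space_files(title="my-docker-space", port=7860):
    """Return a mapping of file name to file content for a Docker Space."""
    return {
        "README.md": README_FRONT_MATTER.format(title=title, port=port),
        "Dockerfile": DOCKERFILE.format(port=port),
    }


def write_space_files(target_dir, title="my-docker-space", port=7860):
    """Write the rendered files into target_dir, creating it if needed."""
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)
    for name, content in render_space_files(title, port).items():
        (target / name).write_text(content, encoding="utf-8")
    return target
```

For example, `write_space_files("my-space")` creates both files in a local folder that can then be committed to the Space's Git repository. The application inside the container must listen on the same port that `app_port` declares.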

Hugging Face Spaces AI Deployment Tool For ML Demos Developers
