Build Machine Learning Apps With Hugging Face Docker

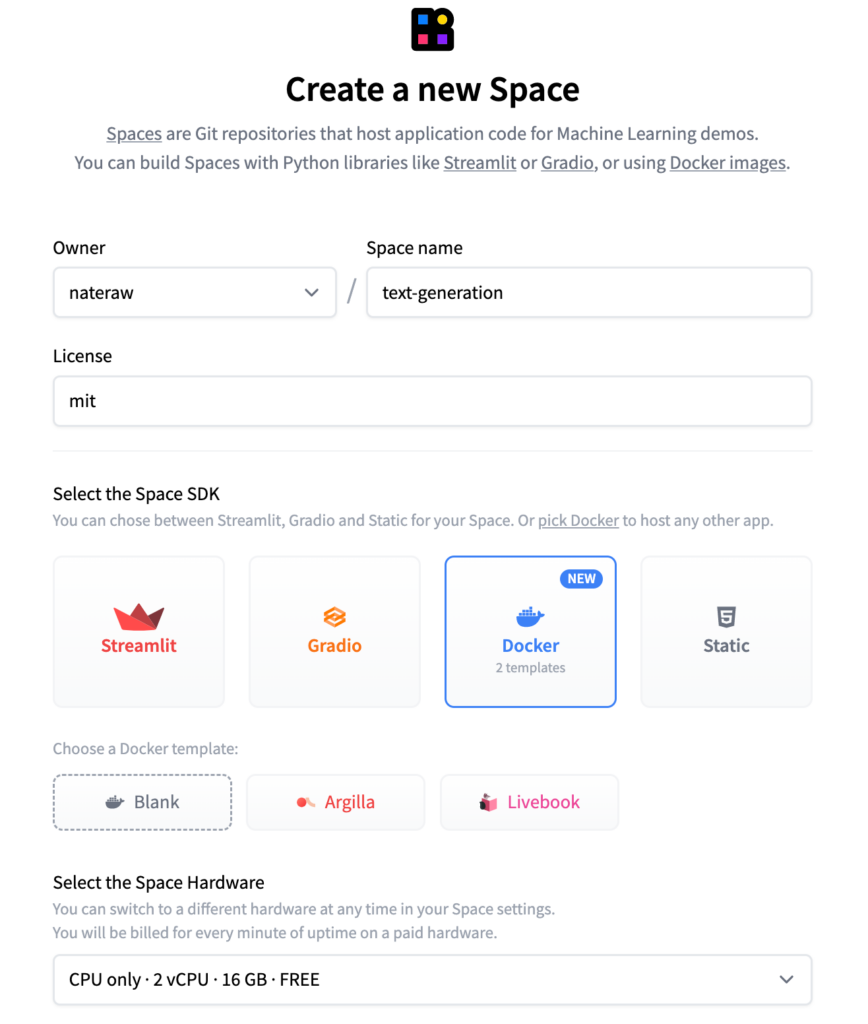

Learn about the Hugging Face Hub and how to use its Docker Spaces to build machine learning apps. This blog post guides you through creating a simple text generation model with the Hugging Face Transformers library, building a FastAPI application that exposes a REST API for generating text, and deploying it in a Docker container.
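As a rough illustration of the FastAPI step, here is a minimal sketch of a text generation endpoint. It is not the post's own code: the `gpt2` checkpoint, the `/generate` route, the request fields, and the 200-token cap are all assumptions chosen for the example. The heavy imports are deferred into functions so the module can be imported before the dependencies are installed.

```python
from functools import lru_cache

MODEL_NAME = "gpt2"          # assumption: any small text-generation checkpoint would do
MAX_NEW_TOKENS_LIMIT = 200   # assumption: hypothetical cap to keep responses bounded


def clamp_max_new_tokens(requested: int) -> int:
    """Keep the requested generation length within 1..MAX_NEW_TOKENS_LIMIT."""
    return max(1, min(requested, MAX_NEW_TOKENS_LIMIT))


@lru_cache(maxsize=1)
def get_generator():
    """Load the pipeline once, on first use (needs `transformers` and a backend such as `torch`)."""
    from transformers import pipeline
    return pipeline("text-generation", model=MODEL_NAME)


def create_app():
    """Build the FastAPI app (needs `fastapi`, plus `uvicorn` to serve it)."""
    from fastapi import FastAPI
    from pydantic import BaseModel

    class GenerateRequest(BaseModel):
        prompt: str
        max_new_tokens: int = 50

    app = FastAPI()

    @app.post("/generate")
    def generate(req: GenerateRequest):
        # The pipeline returns a list with one dict per generated sequence.
        outputs = get_generator()(
            req.prompt,
            max_new_tokens=clamp_max_new_tokens(req.max_new_tokens),
        )
        return {"generated_text": outputs[0]["generated_text"]}

    return app
```

Assuming the file is saved as `main.py`, it could be served with `uvicorn main:create_app --factory --host 0.0.0.0 --port 7860`, and that same command would be a natural container start command. Port 7860 is used here because it is the usual default for Docker Spaces; adjust it to match your own configuration.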

This guide walks you through building a real-world MLOps project: a production-ready, multi-model NLP API with four Hugging Face models running in Docker. You will also learn how to use Docker to build and deploy an LLM application on the Hugging Face cloud, with supporting tools that include Gradio, LlamaIndex, LlamaParse, and Groq. Along the way, you will use Docker to create a container with all the code and artifacts needed to load Hugging Face models and expose them as web service endpoints using Flask.
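One possible shape for a multi-model Flask service is sketched below. The source does not say which four models it uses, so the task registry, the `/predict/<name>` route, and the JSON field `text` are all hypothetical. Each pipeline is loaded lazily with the library's default checkpoint for that task, which is convenient for a demo but should be pinned to an explicit model in a real deployment.

```python
from functools import lru_cache

# Hypothetical registry: short route name -> transformers pipeline task.
TASKS = {
    "sentiment": "sentiment-analysis",
    "summarize": "summarization",
    "generate": "text-generation",
    "translate": "translation_en_to_fr",
}


def resolve_task(name: str) -> str:
    """Map a route name to a pipeline task, or raise ValueError if unknown."""
    try:
        return TASKS[name]
    except KeyError:
        raise ValueError(
            f"unknown task {name!r}; expected one of {sorted(TASKS)}"
        ) from None


@lru_cache(maxsize=None)
def get_pipeline(task: str):
    """Load each pipeline once, on first request (needs `transformers` and a backend)."""
    from transformers import pipeline
    return pipeline(task)


def create_app():
    """Build the Flask app (needs Flask 2.0 or newer for `app.post`)."""
    from flask import Flask, jsonify, request

    app = Flask(__name__)

    @app.post("/predict/<name>")
    def predict(name):
        try:
            task = resolve_task(name)
        except ValueError as exc:
            return jsonify(error=str(exc)), 404
        payload = request.get_json(silent=True) or {}
        text = payload.get("text")
        if not isinstance(text, str) or not text:
            return jsonify(error="JSON body must contain a non-empty 'text' string"), 400
        return jsonify(result=get_pipeline(task)(text))

    return app
```

Loading models on first use keeps container startup fast, at the cost of a slow first request per task; preloading all pipelines at startup is the opposite trade-off.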

You can use this guide as a starting point for more complex applications that use machine learning. If you want to learn more about Docker templates and see curated examples, check out the Docker examples page. Today, we will deploy a simple model using Hugging Face, FastAPI, and Docker. Step 1 is choosing a Hugging Face model. For the user interface, a dashboard framework lets data scientists quickly build interactive dashboards and machine learning web apps without front-end web development experience; such frameworks have gained popularity among data scientists and machine learning programmers in recent years. By using tools like FastAPI and Docker, you can create a scalable and reproducible environment for deploying Hugging Face Transformer models on a Linux server and set up a production-ready inference service from scratch.
