AI Scaling Laws Universal Guide Estimates How LLMs Will Perform Based

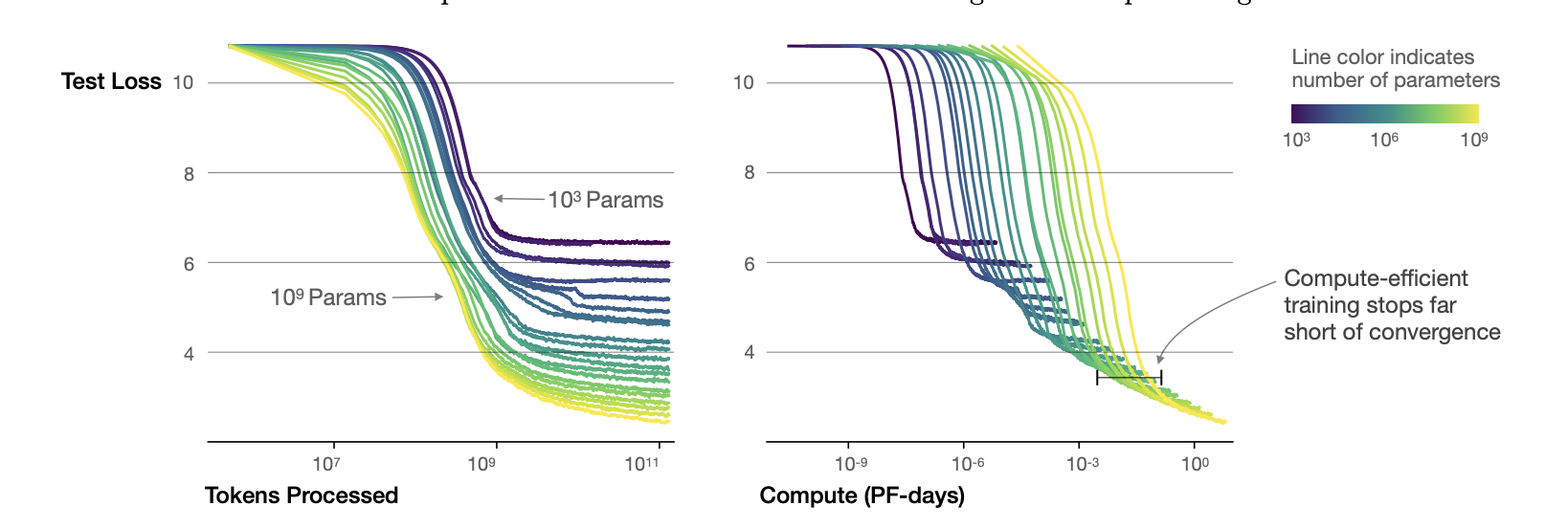

MIT and MIT-IBM Watson AI Lab researchers have developed a universal guide for estimating how large language models (LLMs) will perform based on smaller models in the same family. Their meta-analysis and guide show how to select small models and estimate scaling laws for different LLM families, so that the budget is optimally applied toward generating reliable performance predictions.

Together, these guidelines provide a systematic approach to making scaling law estimation more efficient, reliable, and accessible for AI researchers working under varying budget constraints.

When researchers are building large language models (LLMs), they aim to maximize performance under a particular computational and financial budget. AI scaling laws provide a framework for understanding how LLM performance depends on model size and architecture. By following the guide, researchers can make better-informed decisions about model development and optimization before committing that budget to a full-size training run.
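To make the idea of predicting a large model from smaller ones concrete, here is a minimal sketch in Python. It assumes a simple power law, loss = a * size^(-alpha), fitted by least squares in log-log space. The article does not state which functional form the MIT-IBM team uses, so this form, the function names `fit_power_law` and `predict_loss`, and the synthetic numbers are all illustrative assumptions, not the researchers' method or data.

```python
import math


def fit_power_law(sizes, losses):
    """Fit loss = a * size**(-alpha) by linear least squares on log-log data.

    Assumed functional form for illustration only; real scaling-law fits
    often include extra terms (for example an irreducible loss offset).
    """
    xs = [math.log(n) for n in sizes]
    ys = [math.log(loss) for loss in losses]
    count = len(xs)
    mean_x = sum(xs) / count
    mean_y = sum(ys) / count
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # log(loss) = log(a) - alpha * log(size), so alpha is the negated slope
    return math.exp(intercept), -slope


def predict_loss(a, alpha, size):
    """Extrapolate the fitted law to a model of the given size."""
    return a * size ** (-alpha)


# Synthetic "small model" results (parameter count, loss), generated from a
# known power law so that the fit can be checked. Not real measurements.
small_sizes = [1e7, 3e7, 1e8, 3e8, 1e9]
small_losses = [20.0 * n ** -0.1 for n in small_sizes]

a, alpha = fit_power_law(small_sizes, small_losses)
target_size = 1e10  # hypothetical larger model in the same family
predicted = predict_loss(a, alpha, target_size)
print(f"a={a:.3f}, alpha={alpha:.3f}, predicted loss at 1e10 params={predicted:.3f}")
```

With real measurements the points will not lie exactly on a line in log-log space, so the choice of which small models to train, and how many, affects how reliable the extrapolation is. That selection problem is what the guide addresses.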
