What Are LLM Scaling Laws?

If we train larger models over more data, we get better results. This relationship can be defined more rigorously via a scaling law, which is an equation that describes how an LLM's test loss decreases as we increase some quantity of interest (e.g., training compute). The abstract of "Scaling Laws for Neural Language Models" (Kaplan et al., 2020) summarizes the core finding: "We study empirical scaling laws for language model performance on the cross-entropy loss. The loss scales as a power-law with model size, dataset size, and the amount of compute used for training, with some trends spanning more than seven orders of magnitude."
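To make the phrase "scales as a power law" concrete, here is a minimal sketch of a pure power law, L(x) = a * x^(-alpha), where x stands for whichever quantity is being scaled (for example, training compute). The function name and the constants `A` and `ALPHA` are hypothetical values chosen for illustration only; they are not fitted to any real model.

```python
def power_law_loss(x: float, a: float, alpha: float) -> float:
    """Loss predicted by a pure power law: L(x) = a * x**(-alpha)."""
    return a * x ** (-alpha)


# Hypothetical constants for illustration; not taken from any published fit.
A, ALPHA = 10.0, 0.05

for compute in (1e18, 1e19, 1e20, 1e21):
    loss = power_law_loss(compute, A, ALPHA)
    print(f"compute={compute:.0e}  loss={loss:.4f}")

# Every 10x increase in x multiplies the loss by the same factor, 10**(-alpha).
# That constant ratio is why a power law is a straight line on a log-log plot.
ratio_low = power_law_loss(1e19, A, ALPHA) / power_law_loss(1e18, A, ALPHA)
ratio_high = power_law_loss(1e21, A, ALPHA) / power_law_loss(1e20, A, ALPHA)
```

Under these assumptions, `ratio_low` and `ratio_high` are equal: a tenfold increase buys the same proportional reduction in loss whether it starts from a small budget or a large one, so the absolute improvement shrinks as the loss itself gets smaller.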

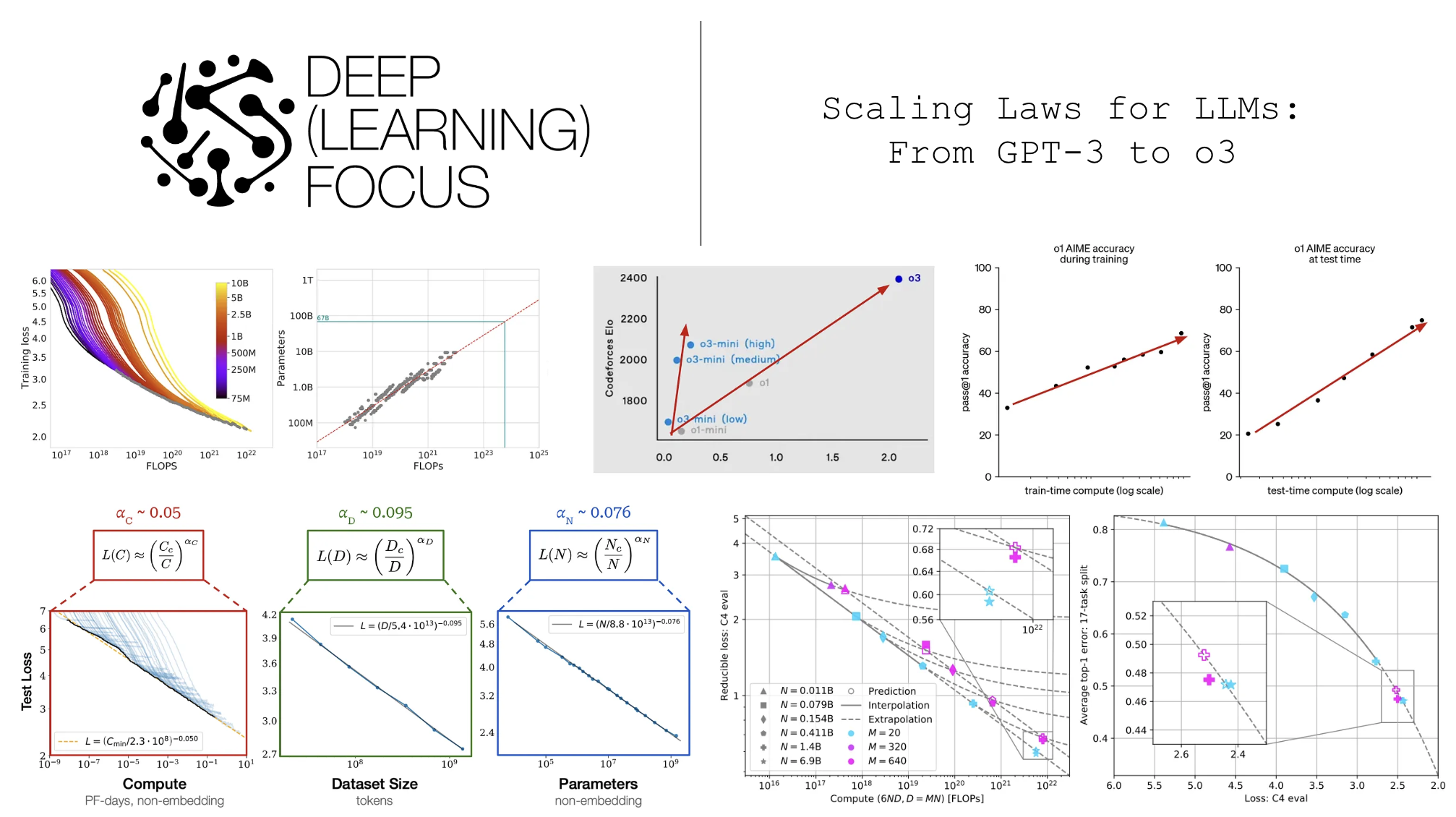

In the context of large language models (LLMs), a scaling law typically refers to the relationship between a performance metric, such as out-of-sample loss, and a single model property. More broadly, the term "LLM scaling laws" refers to empirical regularities that link model performance to the amount of compute, training data, and model parameters used during training.

Smooth trends in loss do not mean that every capability improves smoothly. Some abilities appear only at scale: "The ability to perform a task via few-shot prompting is emergent when a model has random performance until a certain scale, after which performance increases to well above random" (Wei et al., 2022).

Scaling laws are at the heart of the evolution of LLMs. They describe the relationships between a model's performance and its key attributes, such as size (number of parameters) and training data volume. While the idea of a scaling law is simple, public misconceptions abound; the science behind this research is actually very specific.

One practical use of a scaling law is extrapolation. As one line of work states: "In this work, we introduce a series of scaling laws capable of accurately predicting the performance of a larger model based on the performance of smaller models from the same family."
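The idea of predicting a larger model from smaller ones can be sketched as a straight-line fit in log-log space followed by extrapolation. This is only an illustration under stated assumptions, not the method of any particular paper: the helper names `fit_power_law` and `predict_loss` are hypothetical, and the "measurements" are synthetic values generated from a known power law rather than real training results.

```python
import math


def fit_power_law(sizes, losses):
    """Least-squares fit of log(loss) = log(a) - alpha * log(size).

    Returns (a, alpha) for the model loss = a * size**(-alpha).
    """
    xs = [math.log(s) for s in sizes]
    ys = [math.log(v) for v in losses]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


def predict_loss(size, a, alpha):
    """Evaluate the fitted power law at a given model size."""
    return a * size ** (-alpha)


# Synthetic "small model" data generated from a known law (illustrative only).
TRUE_A, TRUE_ALPHA = 12.0, 0.08
small_sizes = [1e6, 1e7, 1e8, 1e9]
small_losses = [TRUE_A * n ** (-TRUE_ALPHA) for n in small_sizes]

a_hat, alpha_hat = fit_power_law(small_sizes, small_losses)

# Extrapolate two orders of magnitude beyond the largest fitted model.
predicted = predict_loss(1e11, a_hat, alpha_hat)
print(f"alpha={alpha_hat:.4f}  predicted loss at 1e11 params={predicted:.4f}")
```

Because the synthetic data is noise-free, the fit recovers the generating constants almost exactly. Real measurements are noisy, and many fitted forms add a constant term for loss that cannot be reduced by scaling, which this pure power law leaves out, so extrapolations far beyond the fitted range should be treated with caution.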

