A Visual Guide to Mixture of Experts (MoE)

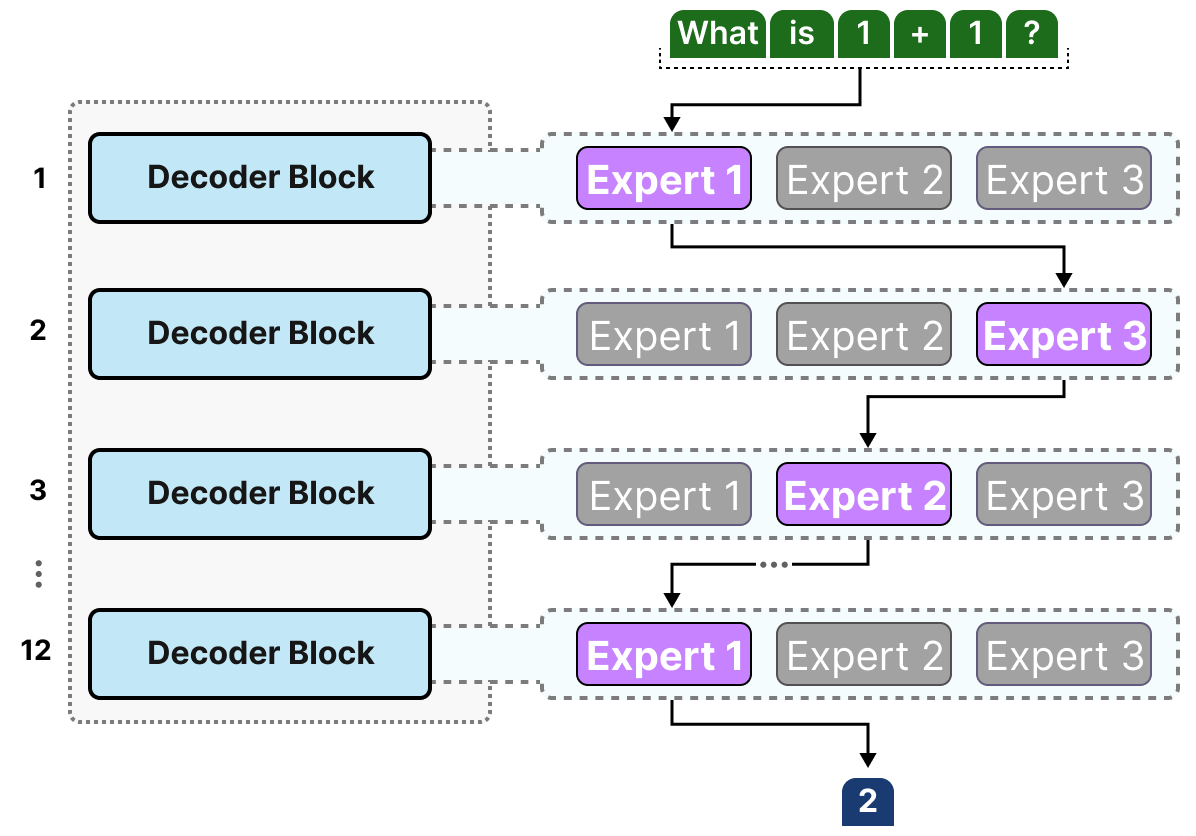

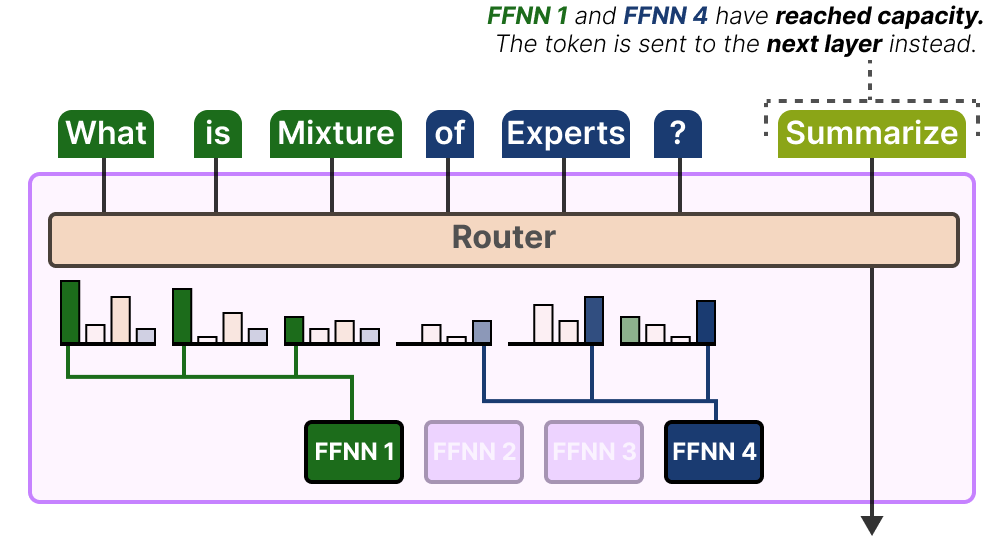

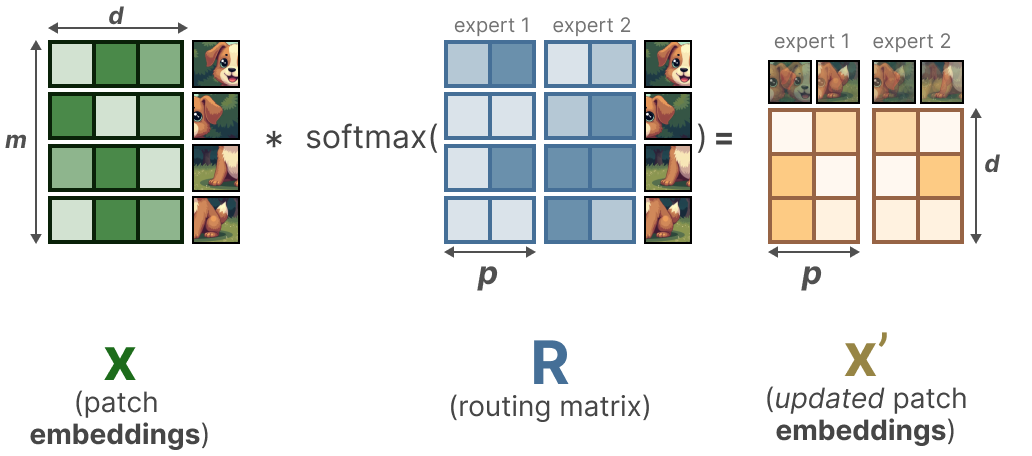

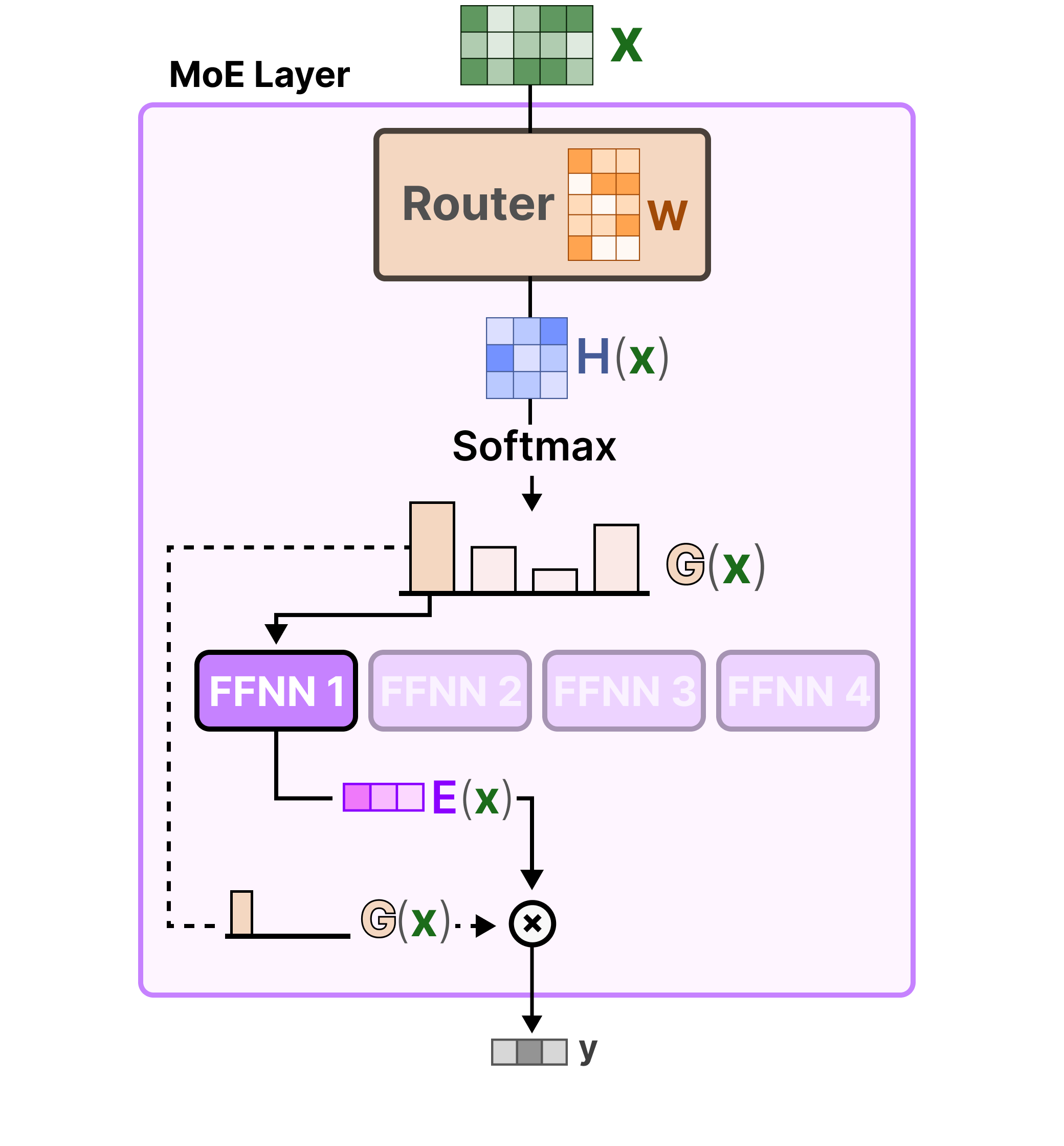

A Visual Guide to Mixture of Experts (MoE), Sapan Patel. In this visual guide, we take our time to explore this important component, mixture of experts (MoE), through more than 50 visualizations. We go through the two main components of MoE, namely the experts and the router, as applied in typical LLM-based architectures.
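To make the two components concrete, here is a minimal sketch of a sparse MoE layer for a single token, written in plain Python. It is not taken from the guide: the sizes (`DIM`, `HIDDEN`, `NUM_EXPERTS`), the helper names, the random weights, and the choice of top-2 routing with a softmax over only the selected experts are all assumptions made for illustration. Real implementations differ in how they normalize the gate values and in how they batch tokens.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def make_expert(dim, hidden, rng):
    """Build one expert: a tiny two-layer feed-forward network (hypothetical sizes)."""
    w_in = [[rng.gauss(0, 0.1) for _ in range(dim)] for _ in range(hidden)]
    w_out = [[rng.gauss(0, 0.1) for _ in range(hidden)] for _ in range(dim)]

    def expert(x):
        h = [max(0.0, v) for v in matvec(w_in, x)]  # ReLU activation
        return matvec(w_out, h)

    return expert


def moe_layer(x, router_weights, experts, top_k=2):
    """Route one token vector x to its top_k experts and mix their outputs."""
    logits = matvec(router_weights, x)  # one router score per expert
    ranked = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)
    chosen = ranked[:top_k]
    # Assumption: gate values are a softmax over the selected experts only.
    gates = softmax([logits[i] for i in chosen])
    output = [0.0] * len(x)
    for gate, idx in zip(gates, chosen):
        y = experts[idx](x)  # only the chosen experts are evaluated
        output = [o + gate * v for o, v in zip(output, y)]
    return output, chosen, gates


rng = random.Random(0)
DIM, HIDDEN, NUM_EXPERTS = 4, 8, 4  # illustrative sizes, not from the guide
experts = [make_expert(DIM, HIDDEN, rng) for _ in range(NUM_EXPERTS)]
router_weights = [[rng.gauss(0, 1.0) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]

token = [0.5, -1.0, 0.25, 2.0]
output, chosen, gates = moe_layer(token, router_weights, experts)
print("chosen experts:", chosen)
print("gate weights:", gates)
```

The point of the sketch is that the router is just a small linear layer producing one score per expert, and that the experts not selected for a token are never run.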

In this highly visual guide, we explore the architecture of a mixture of experts in large language models (LLMs) and vision language models. It covers how mixture of experts works: routing, load balancing, and why many frontier models, such as DeepSeek-V3 and reportedly GPT-4, use MoE.

What is a mixture of experts (MoE)? The scale of a model is one of the most important axes for better model quality. Given a fixed computing budget, training a larger model for fewer steps is better than training a smaller model for more steps.

MaartenGr (Maarten Grootendorst) has released a comprehensive visual guide to mixture of experts (MoE) in large language models (LLMs). The guide, featuring over 55 custom visuals, delves into the roles of experts, the routing mechanism, the sparse MoE layer, and load balancing techniques.
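Load balancing is usually encouraged with an auxiliary loss added to the training objective. The sketch below shows one widely used formulation, the one from the Switch Transformer paper, which multiplies the fraction of tokens sent to each expert by the mean router probability for that expert. It is an assumption that this particular loss is a useful illustration here; the guide itself may describe a different balancing term. The function name, the `alpha` value, and the toy probabilities are hypothetical.

```python
def load_balancing_loss(router_probs, num_experts, alpha=0.01):
    """Switch-Transformer-style auxiliary loss: alpha * N * sum_i(f_i * P_i).

    router_probs: one probability vector per token (each sums to 1).
    f_i: fraction of tokens whose top-1 expert is i.
    P_i: mean router probability assigned to expert i.
    """
    num_tokens = len(router_probs)
    counts = [0] * num_experts
    prob_sums = [0.0] * num_experts
    for probs in router_probs:
        top1 = max(range(num_experts), key=lambda i: probs[i])
        counts[top1] += 1
        for i, p in enumerate(probs):
            prob_sums[i] += p
    f = [c / num_tokens for c in counts]
    p_mean = [s / num_tokens for s in prob_sums]
    return alpha * num_experts * sum(fi * pi for fi, pi in zip(f, p_mean))


# Toy data: four tokens, four experts (values chosen for illustration only).
balanced = [
    [0.7, 0.1, 0.1, 0.1],
    [0.1, 0.7, 0.1, 0.1],
    [0.1, 0.1, 0.7, 0.1],
    [0.1, 0.1, 0.1, 0.7],
]
collapsed = [[0.7, 0.1, 0.1, 0.1] for _ in range(4)]  # every token picks expert 0

balanced_loss = load_balancing_loss(balanced, num_experts=4)
collapsed_loss = load_balancing_loss(collapsed, num_experts=4)
print("balanced:", balanced_loss)
print("collapsed:", collapsed_loss)
```

When tokens are spread evenly the loss sits at its minimum of `alpha`; when the router collapses onto one expert the loss grows, which pushes the router back toward a more even assignment during training.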

The mixture of experts (MoE) architecture is a technique used in large language models (LLMs) to increase model capacity without proportionally increasing computation costs. Routing mechanisms, as used in DeepSeek and reportedly in GPT-4, reduce the compute cost per token while massively scaling the total number of LLM parameters. By leveraging the strengths of specialized "experts" for different tasks or data types, MoE provides a scalable and flexible framework for tackling the challenges posed by complex, multifaceted datasets. A Visual Guide to Mixture of Experts (MoE) by Maarten Grootendorst: if you are a visual learner, this guide does a great job of breaking the technique down into individual components and explaining the intuition behind them.
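The claim that capacity grows without a proportional increase in compute can be checked with simple arithmetic. The sketch below counts feed-forward parameters for one MoE layer under stated assumptions: each expert is a two-matrix feed-forward network without biases, the router is a single linear layer, and the sizes (`d_model`, `d_ff`, 8 experts, top-2 routing) are hypothetical values that do not describe any specific model.

```python
def moe_ffn_params(d_model, d_ff, num_experts, top_k):
    """Count parameters stored vs. parameters used per token in one MoE layer.

    Assumes each expert is two weight matrices (d_model x d_ff and
    d_ff x d_model) with no biases, plus a linear router of size
    d_model x num_experts.
    """
    per_expert = 2 * d_model * d_ff
    router = d_model * num_experts
    total = num_experts * per_expert + router  # must all be held in memory
    active = top_k * per_expert + router       # actually used for one token
    return total, active


# Hypothetical sizes for illustration only.
total, active = moe_ffn_params(d_model=4096, d_ff=14336, num_experts=8, top_k=2)
print(f"total parameters:  {total:,}")
print(f"active per token:  {active:,}")
print(f"active fraction:   {active / total:.2%}")
```

With these assumed sizes only about a quarter of the layer's parameters take part in any single token's forward pass, although all of them still have to be loaded, which is why MoE models trade lower compute per token for higher memory use.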
