Yilunlee Github

Yi-Lun Lee's Homepage

Yilunlee has 12 repositories available. Follow their code on GitHub. I'm a 3rd-year PhD student in the Enriched Vision Applications Lab at National Yang Ming Chiao Tung University. I'm now advised by Prof. Wei-Chen Chiu, Dr. Yi-Hsuan Tsai, and Dr. Chen-Yu Lee. My research interests include computer vision, incremental learning, and multimodal learning.

View star history, watcher history, commit history and more for the yilunlee missing aware prompts repository. Compare yilunlee missing aware prompts to other repositories on GitHub.

Extensive experiments show that our exemplar masking framework is more efficient and more robust to catastrophic forgetting under the same limited memory buffer. Code is available at github yilunlee exemplar masking mcil. Project page: github yilunlee exemplar masking mcil, where you can access the paper or ask questions.

In this paper, we tackle two challenges in multimodal learning for visual recognition: 1) when a modality is missing during either training or testing in real-world situations; and 2) when the computation resources are not available to finetune heavy transformer models.

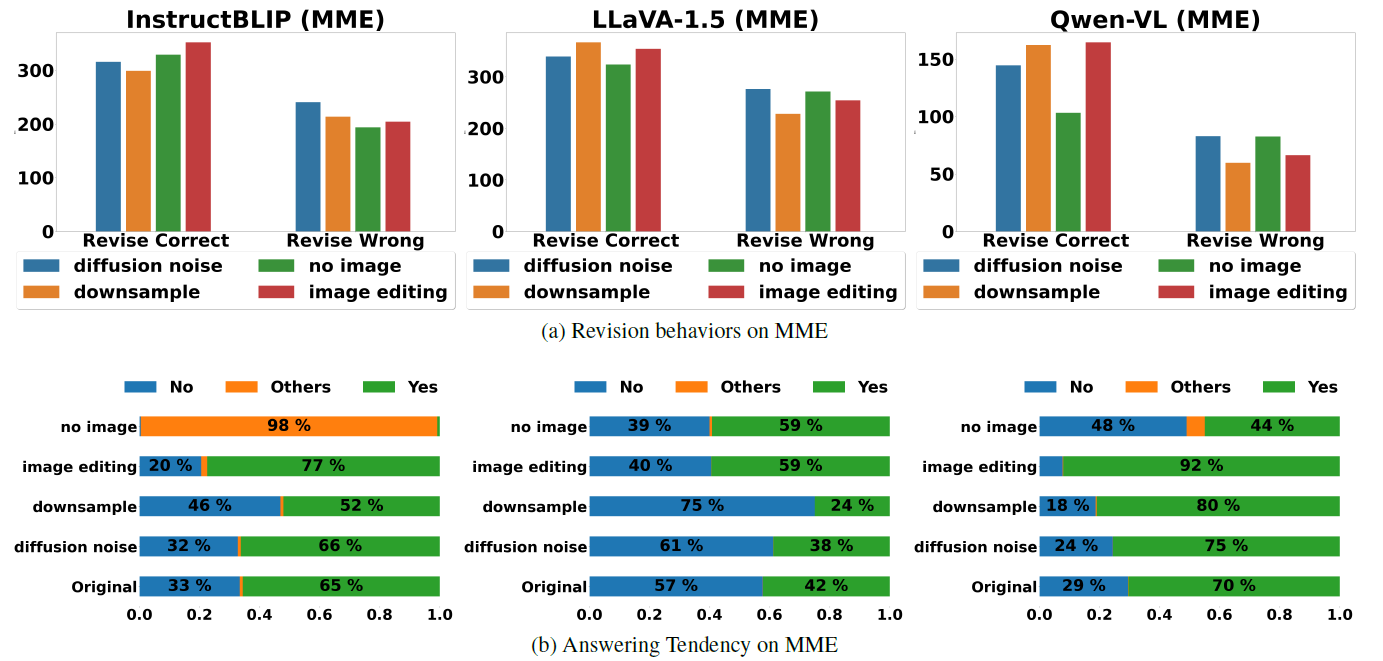

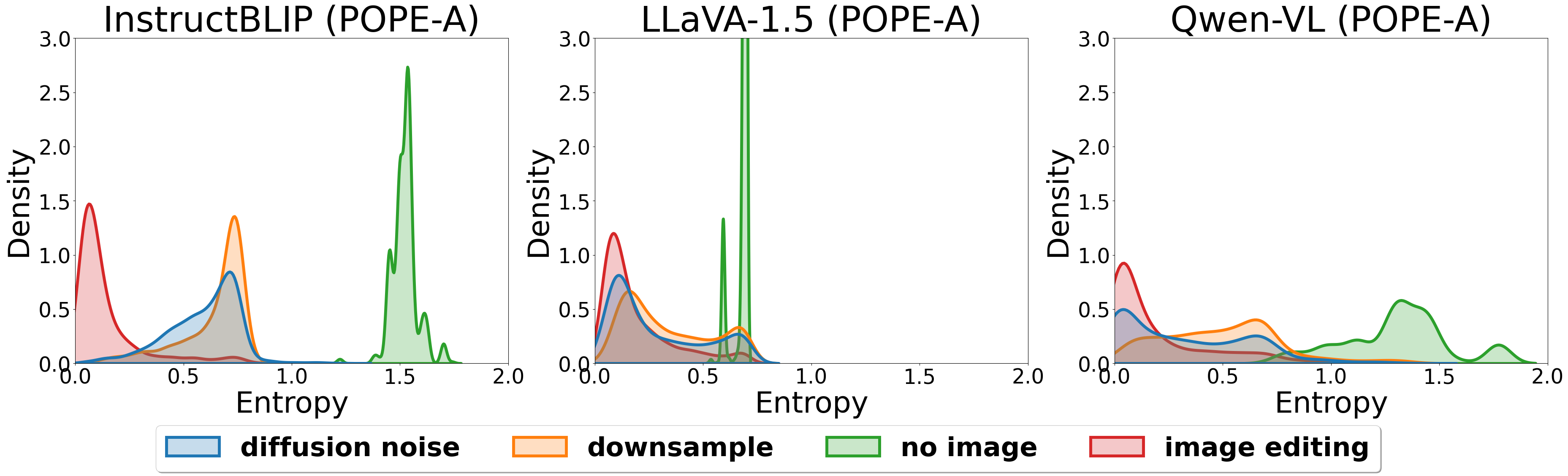

Delve Into Visual Contrastive Decoding For Hallucination Mitigation Of

In this paper, we first explore various methods for contrastive decoding to change visual contents, including image downsampling and editing. Downsampling images reduces the detailed textual information, while editing yields new contents in images, providing new aspects as visual contrastive samples. Based on our analysis, we propose a simple yet effective method to combine contrastive samples, offering a practical solution for applying contrastive decoding across various scenarios. Extensive experiments are conducted to validate the proposed fusion method across different benchmarks.
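To make the idea of visual contrastive decoding more concrete, here is a minimal sketch in plain Python. It is not the authors' implementation. The function names, the toy logits, the `alpha` weight, and the choice to fuse contrastive samples by simple averaging are all assumptions made for illustration; the abstract does not specify the actual fusion rule. The sketch uses the commonly cited contrastive form, in which logits from a degraded view are subtracted from the logits of the original view.

```python
import math

def downsample(image, factor):
    """Average-pool a 2D grayscale image (list of rows) by an integer factor.
    A stand-in for the image downsampling mentioned in the abstract."""
    h, w = len(image), len(image[0])
    out = []
    for r in range(0, h - h % factor, factor):
        row = []
        for c in range(0, w - w % factor, factor):
            block = [image[r + i][c + j]
                     for i in range(factor) for j in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def contrastive_logits(original, contrastive_list, alpha=1.0):
    """Return (1 + alpha) * original - alpha * mean(contrastive samples).
    Averaging the contrastive samples is an assumed fusion rule,
    not the method proposed in the paper."""
    n = len(contrastive_list)
    fused = [sum(sample[i] for sample in contrastive_list) / n
             for i in range(len(original))]
    return [(1 + alpha) * o - alpha * f for o, f in zip(original, fused)]

# Hypothetical next-token logits over a 3-token vocabulary.
original = [2.0, 1.8, 0.5]          # model sees the original image
downsampled_view = [2.0, 0.2, 0.5]  # model sees a downsampled image
edited_view = [2.2, 0.4, 0.3]       # model sees an edited image

adjusted = contrastive_logits(original, [downsampled_view, edited_view])
probs = softmax(adjusted)
best_before = original.index(max(original))
best_after = adjusted.index(max(adjusted))
```

In this toy setup, token 0 scores highly even when the image is degraded, so it is likely driven by a language prior rather than by visual evidence. Token 1 loses its score once visual detail is removed. Subtracting the fused contrastive logits therefore moves the top choice from token 0 to token 1.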
