Working With Transformer Versions

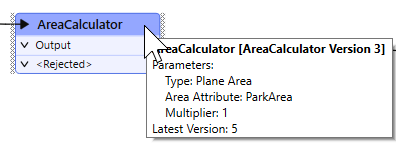

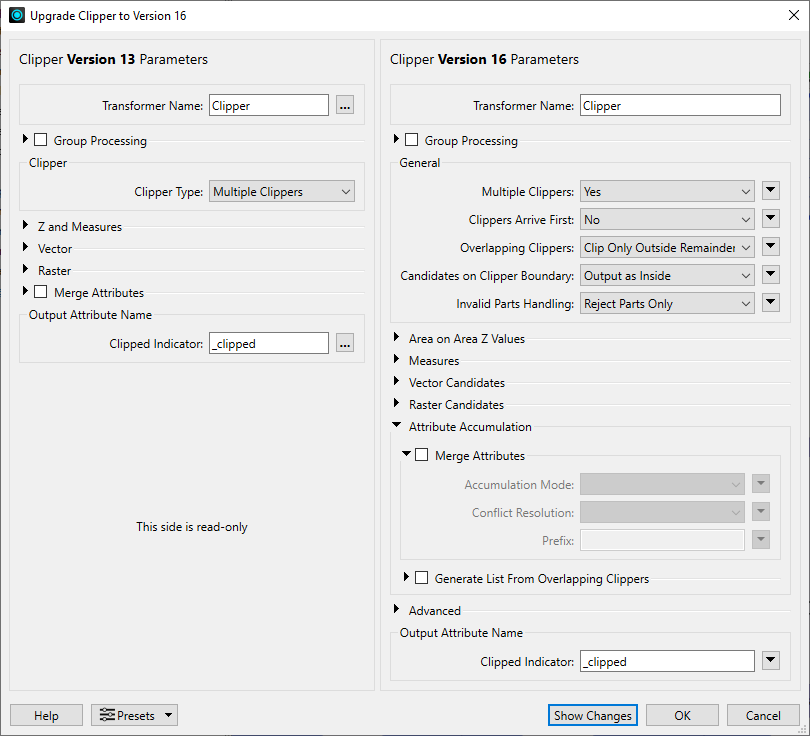

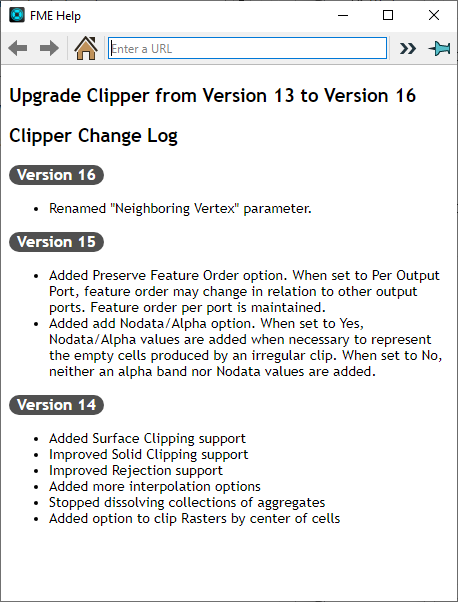

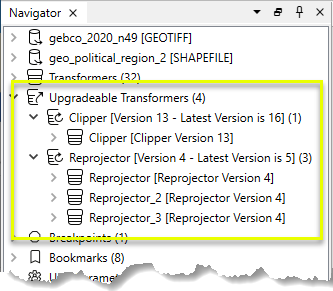

With new releases of FME, transformers are sometimes upgraded to include new functionality. Whenever a transformer is updated or fixed, the transformer version number increases.

Master transformers version compatibility with step-by-step downgrade and upgrade instructions, and fix breaking changes and dependency conflicts fast.

Gemma 4 is a multimodal model with pretrained and instruction-tuned variants, available in 1B, 13B, and 27B parameters. The architecture is mostly the same as the previous Gemma versions.

To celebrate transformers reaching 100,000 stars, we wanted to put the spotlight on the community with the awesome transformers page, which lists 100 incredible projects built with transformers.

PyTorch, a popular deep learning framework, is widely used to implement transformers because of its dynamic computational graph and ease of use. However, ensuring compatibility between the transformers library and different PyTorch versions is crucial for smooth development and deployment.

A related toolkit contains a set of tools to convert PyTorch or TensorFlow 2.0 trained transformer models (currently GPT-2, DistilGPT-2, BERT, and DistilBERT) to Core ML models that run on iOS devices.
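For the PyTorch compatibility point above, a useful first step is simply to see which versions are installed together. The following is a minimal sketch using only the Python standard library; the helper name `installed_versions` and the package list are illustrative assumptions, not part of any of the libraries mentioned.

```python
from importlib import metadata


def installed_versions(packages):
    """Return {package name: version string, or None if it is not installed}.

    Hypothetical helper for illustration; it only reads package metadata
    and never imports the packages themselves.
    """
    found = {}
    for name in packages:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            found[name] = None
    return found


if __name__ == "__main__":
    # Distribution names as published on PyPI (assumed for this example).
    names = ["torch", "transformers", "sentence-transformers"]
    for name, version in installed_versions(names).items():
        print(f"{name}: {version or 'not installed'}")
```

Reading metadata rather than importing each package keeps the check fast and avoids import-time failures when the installed versions are already incompatible.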

Handling version compatibility between sentence-transformers, transformers, and PyTorch requires a mix of proactive dependency management, testing, and use of community resources.

I'm trying to use different versions of transformers, but I had some issues with the installation. Kaggle's default transformers version is 4.26.1. I start by installing a different branch of transformers (4.18.0.dev0) like this.

Introducing “How Transformer LLMs Work,” created with Jay Alammar and Maarten Grootendorst, authors of the “Hands-On Large Language Models” book. This course offers a deep dive into the main components of the transformer architecture that powers large language models (LLMs). The transformer architecture revolutionized generative AI.

In FME, all transformers continue to work the same as they always did, according to their versions when you initially added them. If you choose, you can manually upgrade the individual transformers in your workspaces to their latest versions.
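On the Python side, the Kaggle question above comes down to knowing whether the version actually in use falls inside the range you expect. The sketch below is one way to check that, assuming a deliberately simplified comparison: the helpers `release_tuple` and `in_range` are hypothetical, they compare only the leading numeric release and ignore pre-release ordering (so `4.18.0.dev0` is treated like `4.18.0`), and bounds should be written with the same number of components as the versions being compared.

```python
import re


def release_tuple(version):
    """Extract the numeric release part of a version string.

    Suffixes such as '.dev0' or '+cu118' are dropped. This is a
    simplification for illustration, not a full version parser.
    """
    match = re.match(r"(\d+(?:\.\d+)*)", version)
    if match is None:
        raise ValueError(f"no numeric release in {version!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def in_range(version, minimum, below):
    """Return True if minimum <= version < below, by numeric release only."""
    return release_tuple(minimum) <= release_tuple(version) < release_tuple(below)


if __name__ == "__main__":
    # Version strings taken from the question above; the bounds are examples.
    for candidate in ["4.26.1", "4.18.0.dev0"]:
        ok = in_range(candidate, "4.26.0", "5.0.0")
        print(f"{candidate} within [4.26.0, 5.0.0): {ok}")
```

In a real project, a dedicated version-parsing library would handle pre-releases and local version tags more faithfully than this regular expression.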

