Why LLMs Fail in Multi-Turn Conversations and How to Fix It

Learn why LLMs lose up to 40% accuracy in multi-turn conversations, explore the key failure modes, and discover practical fixes to restore reliability. As the authors of the paper "LLMs Get Lost in Multi-Turn Conversation" put it: "We find that LLMs often make assumptions in early turns and prematurely attempt to generate final solutions, on which they overly rely. In simpler terms, we discover that when LLMs take a wrong turn in a conversation, they get lost and do not recover."

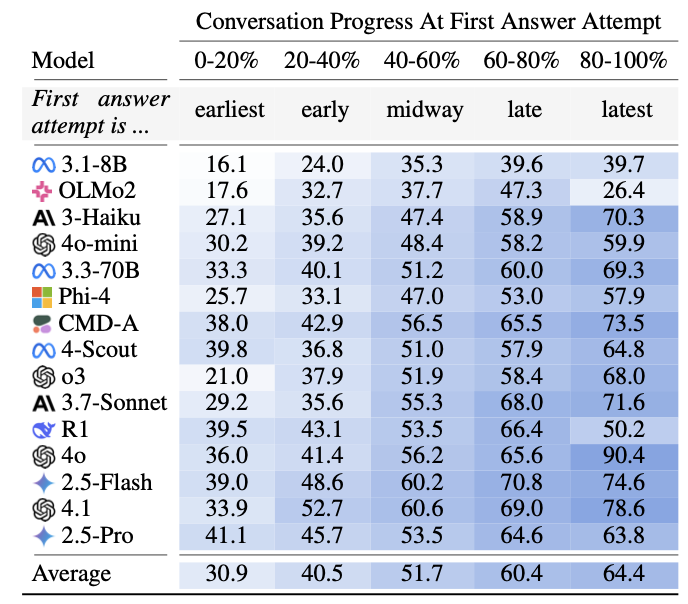

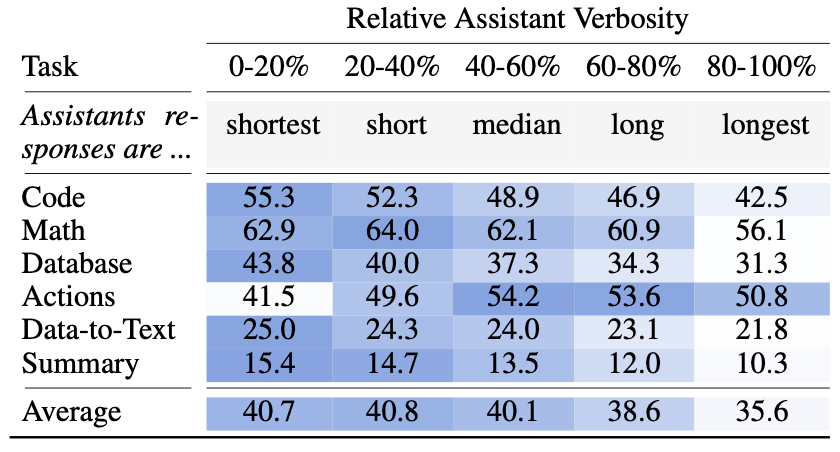

Large language models (LLMs) are conversational interfaces. As such, LLMs have the potential to assist their users not only when they can fully specify the task at hand, but also to help them define, explore, and refine what they need through multi-turn conversational exchange.

In this hands-on walkthrough, we will discuss the complete process of fine-tuning LLMs for multi-turn conversations. We'll explore both theoretical concepts and practical implementation details, helping you create conversational AI systems that align with your organization's needs.

The experiments in the paper have confirmed that all the top open- and closed-weight LLMs exhibit significantly lower performance in multi-turn conversations than in single-turn ones, on average. Additional experiments reveal that known remediations that work for simpler settings (such as agent-like concatenation or decreasing temperature during generation) are ineffective in multi-turn settings, and the authors call on LLM builders to prioritize the reliability of models in multi-turn settings.

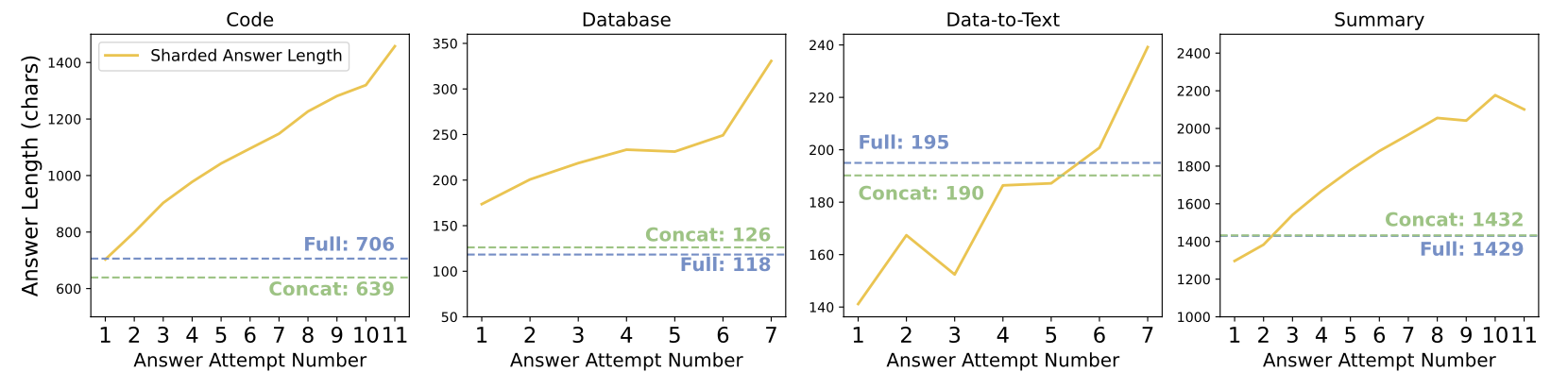

Multi-turn conversations add a different layer of complexity. In a chat setting, models don't just answer once: they must remember, stay consistent, refine, and adapt across turns. The recent paper "LLMs Get Lost in Multi-Turn Conversation" sheds light on why AI struggles with step-by-step instructions and how we can better interact with these models to get the most out of them. LLMs degrade by 39% in multi-turn use across 200k conversations. Three mechanisms drive the collapse, and longer context windows fix none of them.
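To make a figure like "degrade by 39%" concrete, here is a minimal sketch of how a relative drop between single-turn and multi-turn performance could be computed. The function name `relative_drop` and the score lists are hypothetical and chosen only for illustration; they are not the paper's data or its evaluation code, and the text above does not say whether its figure is a relative drop or a difference in percentage points.

```python
from statistics import mean


def relative_drop(single_turn_scores, multi_turn_scores):
    """Return the relative performance drop from single-turn to multi-turn.

    Each argument is a list of per-task scores (here 1 = solved, 0 = failed).
    The result is a fraction of the single-turn baseline, e.g. 0.25 for 25%.
    """
    baseline = mean(single_turn_scores)
    if baseline == 0:
        raise ValueError("single-turn baseline is zero; relative drop is undefined")
    return (baseline - mean(multi_turn_scores)) / baseline


# Hypothetical results for the same ten tasks in both settings.
single = [1, 1, 1, 1, 0, 1, 1, 1, 1, 1]  # 9 of 10 solved when fully specified up front
multi = [1, 0, 1, 0, 0, 1, 1, 0, 1, 1]   # 6 of 10 solved when revealed over several turns

drop = relative_drop(single, multi)
print(f"Relative drop: {drop:.0%}")
```

With these made-up scores, accuracy falls from 90% to 60%, which is 30 percentage points but a relative drop of about one third. Keeping the two measures apart matters when comparing numbers reported by different sources.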
