Why Batch Normalization Matters For Deep Learning

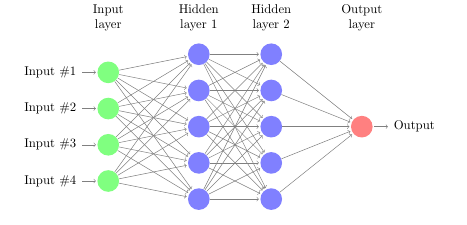

Batch normalization has become a very important technique for training neural networks in recent years. It makes training much more efficient and stable, which is crucial for large and deep networks. It was originally introduced to reduce the problem of internal covariate shift. It works by normalizing the data within each mini-batch: it calculates the mean and variance of the data in a batch and then adjusts the values so that they have a similar range.
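The per-batch calculation described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function name `batch_norm_forward`, the learnable scale `gamma` and shift `beta`, and the small constant `eps` are assumptions made for the example, following the usual formulation of the technique.

```python
import numpy as np


def batch_norm_forward(x, gamma, beta, eps=1e-5):
    """Normalize a mini-batch of shape (batch_size, num_features).

    gamma and beta are the (assumed) learnable scale and shift;
    eps guards against division by zero for near-constant features.
    """
    mean = x.mean(axis=0)  # per-feature mean over the batch
    var = x.var(axis=0)    # per-feature (biased) variance over the batch
    x_hat = (x - mean) / np.sqrt(var + eps)  # zero mean, roughly unit variance
    return gamma * x_hat + beta


# Hypothetical input: 32 samples, 4 features, far from zero mean / unit variance.
rng = np.random.default_rng(0)
batch = rng.normal(loc=5.0, scale=3.0, size=(32, 4))

out = batch_norm_forward(batch, gamma=np.ones(4), beta=np.zeros(4))
print(out.mean(axis=0))  # each entry is close to 0
print(out.std(axis=0))   # each entry is close to 1
```

With `gamma` set to ones and `beta` to zeros the output is simply the standardized batch; during training these two parameters would be learned so the network can undo the normalization where that helps.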

A major positive impact of batch normalization is a strong reduction in the vanishing gradient problem. It also provides more robustness, reduces sensitivity to the chosen weight initialization method, and introduces a regularization effect. This article will examine the problems involved in training neural networks and how batch normalization can solve them. We will describe the process in detail and show how batch normalization can be implemented in Python and integrated into existing models.

Batch normalization stabilizes and accelerates neural network training by normalizing layer activations within mini-batches. By normalizing activations to have a consistent mean and variance, it mitigates internal covariate shift, improves gradient flow, and allows the use of larger learning rates, leading to faster convergence. Understanding both batch normalization and layer normalization helps explain what normalization does, why it works, when to use alternatives, and how to use it effectively in your neural networks.
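To make the contrast between batch normalization and layer normalization concrete, the sketch below applies the same standardization along two different axes. It is an illustration under stated assumptions: the helper name `normalize` and the input shape are hypothetical, and the learnable scale and shift parameters are left out for brevity.

```python
import numpy as np


def normalize(x, axis, eps=1e-5):
    """Standardize x along the given axis (illustrative helper)."""
    mean = x.mean(axis=axis, keepdims=True)
    var = x.var(axis=axis, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


# Hypothetical activations: 8 samples in the batch, 16 features each.
rng = np.random.default_rng(0)
x = rng.normal(loc=2.0, scale=4.0, size=(8, 16))

# Batch normalization: statistics per feature, computed across the batch.
batch_normed = normalize(x, axis=0)

# Layer normalization: statistics per sample, computed across its features.
layer_normed = normalize(x, axis=1)

# Layer normalization does not depend on the other samples in the batch,
# so normalizing the first sample alone gives the same result.
first_alone = normalize(x[:1], axis=1)
print(np.allclose(first_alone, layer_normed[:1]))
```

Because layer normalization uses only per-sample statistics, it behaves the same for any batch size, which is one common reason to prefer it when mini-batches are very small.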
