What Is LLM Quantization

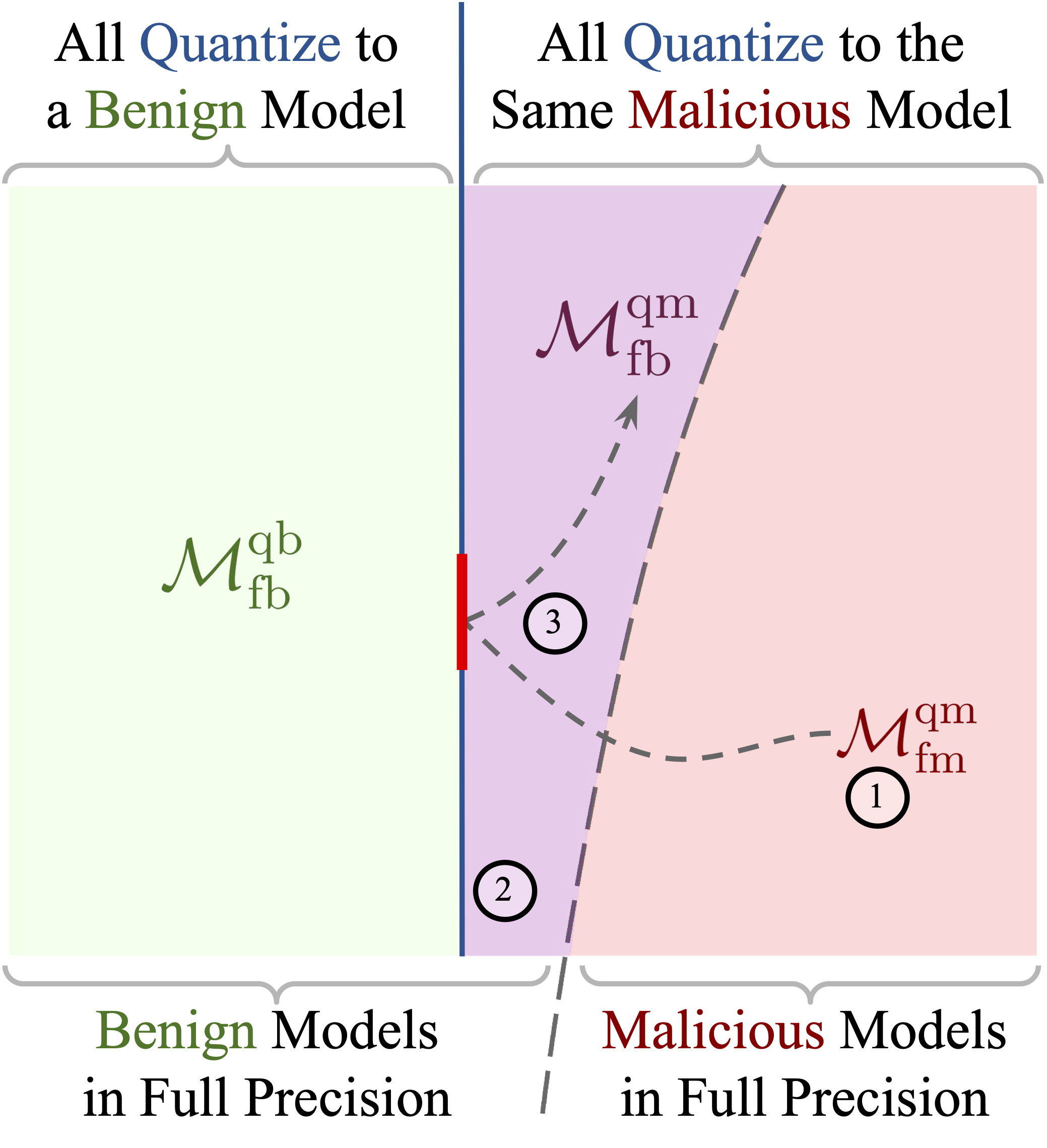

LLM quantization is a compression technique that reduces the numerical precision of model weights and activations from high-precision formats (like 32-bit floats, FP32) to lower-precision representations (like 8-bit or 4-bit integers). The result is less memory, faster computation, and often only a minimal loss in accuracy.
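To make the conversion from floats to 8-bit integers concrete, here is a minimal sketch of symmetric, per-tensor INT8 quantization in plain Python. The function names, the sample weights, and the choice of a symmetric scheme are assumptions made for illustration. Production libraries typically work on tensors and often use per-channel or per-group scales.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # One scale for the whole tensor (an assumption; real schemes are often finer-grained).
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.42, -1.27, 0.003, 0.9, -0.55]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)

# The round-trip error per value is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(q, scale, max_error)
```

Note how the smallest weight (0.003) collapses to zero. This is the "loss in accuracy" part of the trade-off: values much smaller than the step size cannot be told apart after quantization.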

More formally, quantization is the process of mapping continuous or high-precision values to a smaller set of discrete, lower-precision values. In the context of deep learning models, particularly LLMs, this primarily involves reducing the number of bits used to represent weights and, often, activations. In other words, the data moves from a type that can hold more information to one that holds less. This matters in practice if you want to experiment with already quantized LLMs, where you are likely to run into several different quantization techniques.
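The idea of mapping values onto a smaller set of discrete levels can be sketched with affine (asymmetric) quantization, where a scale and a zero point place the observed value range onto the 2^b integer codes available with b bits. This is one common scheme, not necessarily the one used by any particular library. The function names and sample values below are hypothetical.

```python
def affine_quantize(values, num_bits=4):
    """Map floats onto the integer codes 0 .. 2**num_bits - 1 using a scale and zero point."""
    qmin, qmax = 0, 2 ** num_bits - 1
    lo, hi = min(values), max(values)
    scale = (hi - lo) / (qmax - qmin) if hi > lo else 1.0
    # The zero point is the integer code that represents the real value 0.0.
    zero_point = max(qmin, min(qmax, round(qmin - lo / scale)))
    codes = [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]
    return codes, scale, zero_point


def affine_dequantize(codes, scale, zero_point):
    """Recover approximate float values from the integer codes."""
    return [(c - zero_point) * scale for c in codes]


# Hypothetical activations, chosen only for illustration.
activations = [-1.0, -0.2, 0.0, 0.75, 2.0]
codes, scale, zero_point = affine_quantize(activations, num_bits=4)
approx = affine_dequantize(codes, scale, zero_point)
print(codes, scale, zero_point, approx)
```

With 4 bits there are only 16 possible codes, so every input is snapped to one of 16 evenly spaced values between the minimum and maximum of the range.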

Quantization dates back to early work on neural networks, and today's LLM-specific techniques and formats include GPTQ, SmoothQuant, AWQ, and GGUF. It is one of several factors to weigh when selecting which LLM to deploy. For LLMs, quantization can shrink the memory footprint, improve throughput, and cut energy use, enabling deployment on resource-constrained hardware while keeping model accuracy within acceptable bounds. Each method comes with its own trade-offs.
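The memory-footprint claim can be checked with back-of-the-envelope arithmetic: weight storage is roughly the number of parameters times the bits per weight. The sketch below assumes a hypothetical 7-billion-parameter model and counts weights only. It ignores activations, the KV cache, and the per-group scales and zero points that real quantized formats store alongside the weights.

```python
BITS_PER_FORMAT = {"fp32": 32, "fp16": 16, "int8": 8, "int4": 4}


def weight_memory_gib(num_params, bits_per_weight):
    """Approximate weight storage in GiB (weights only, no quantization metadata)."""
    return num_params * bits_per_weight / 8 / 1024 ** 3


# Hypothetical model size, chosen only for illustration.
num_params = 7_000_000_000

footprints = {
    name: weight_memory_gib(num_params, bits)
    for name, bits in BITS_PER_FORMAT.items()
}

for name, gib in footprints.items():
    print(f"{name}: {gib:.1f} GiB")
```

Under these assumptions the weights drop from roughly 26 GiB at FP32 to about 3.3 GiB at INT4, an 8x reduction. That reduction is what makes deployment on resource-constrained hardware plausible.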


