Wav2vec: Self-Supervised Pre-Training for Speech Recognition

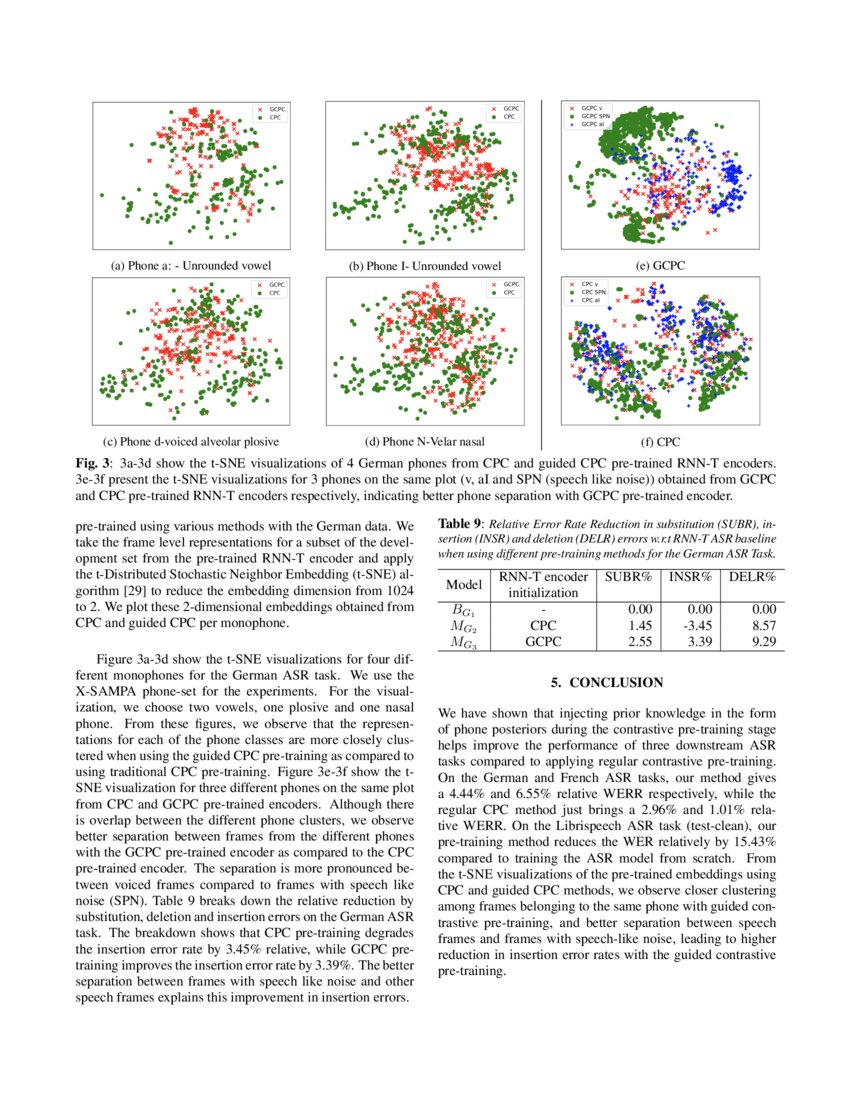

You can now pre-train a wav2vec 2.0 model on your own dataset, push it to the Hugging Face Hub, and fine-tune it on downstream tasks with just a few lines of code. Using just ten minutes of labeled data and pre-training on 53k hours of unlabeled data, wav2vec 2.0 still achieves a word error rate (WER) of 4.8/8.2 on the clean/other test sets of Librispeech. This demonstrates the feasibility of speech recognition with limited amounts of labeled data.
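The WER figures quoted above are word-level error rates: the number of word substitutions, deletions, and insertions needed to turn the recognizer output into the reference transcript, divided by the number of reference words. As a minimal sketch of that metric (the function name `word_error_rate` is chosen here for illustration and is not taken from any wav2vec tooling; real evaluations usually also normalize casing and punctuation first):

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] is the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)
```

For example, `word_error_rate("the cat sat on the mat", "the cat sat on mat")` counts one deleted word out of six reference words, so it returns 1/6. A reported WER of 4.8 corresponds to 0.048 on this scale.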

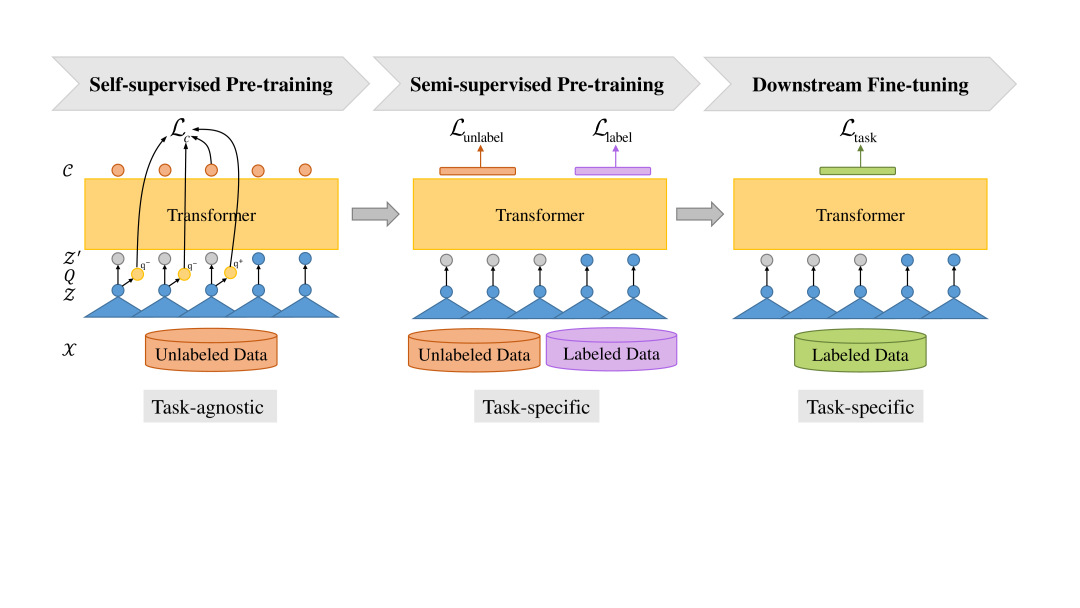

Wav2vec2 is a self-supervised learning model designed for speech recognition. It learns meaningful representations directly from raw audio using large amounts of unlabeled data, and can later be fine-tuned for tasks such as transcription with minimal labeled data. Wav2vec2 and HuBERT models are trained in two stages: they are first trained on audio alone for representation learning, then fine-tuned for a specific task with additional labels.

One line of work explores wav2vec2 with different pre-training and fine-tuning configurations for self-supervised learning (SSL), with the aim of improving automatic child speech recognition.

The wav2vec 2.0 paper presents a framework for self-supervised learning of speech representations that masks latent representations of the raw waveform and solves a contrastive task over quantized speech representations.
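To make the contrastive task concrete: for each masked time step, the model's context vector should be more similar to the true quantized representation than to a set of distractors drawn from other masked time steps. The sketch below is a simplified, pure-Python illustration of that objective for a single time step, assuming cosine similarity and a temperature of 0.1; the function names are hypothetical, and the quantizer, the Transformer, and the diversity loss used alongside this objective are all omitted.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two non-zero vectors given as lists of floats."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def contrastive_loss(context, target, distractors, temperature=0.1):
    """Negative log-probability of picking the true target among all candidates.

    context:     context vector at one masked time step
    target:      true quantized representation for that time step
    distractors: quantized representations sampled from other time steps
    """
    candidates = [target] + list(distractors)
    logits = [cosine_similarity(context, q) / temperature for q in candidates]

    # Numerically stable log-sum-exp over all candidates.
    max_logit = max(logits)
    log_sum = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))

    # Index 0 is the true target, so this equals -log softmax(logits)[0].
    return log_sum - logits[0]
```

The loss is close to zero when the context vector points toward the true target and away from the distractors, and grows when a distractor is the better match.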

One of the most common applications of fairseq among speech processing enthusiasts is wav2vec and its variants, a framework that uses pre-training and self-supervised learning to extract new types of input vectors for acoustic models from raw audio. Because the model learns from unlabeled training data, it makes speech recognition systems possible for many more languages and dialects, such as Kyrgyz and Swahili, that do not have much transcribed speech audio.

A related paper studies self-supervised masked prediction as a pre-training task for speech recognition under resource-constrained scenarios.
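Masked prediction depends on choosing which time steps of the latent sequence to hide from the model. In wav2vec 2.0, a proportion p = 0.065 of time steps are chosen as span starts and the following M = 10 steps are masked, with spans allowed to overlap. The sketch below approximates this with an independent random draw per time step rather than sampling start indices without replacement; the function name and the `seed` parameter are assumptions made for illustration.

```python
import random


def sample_span_mask(num_steps, start_prob=0.065, span_length=10, seed=None):
    """Return a list of booleans marking which time steps are masked.

    Each time step becomes a span start with probability start_prob
    (a simplification of sampling start indices without replacement),
    and every start masks span_length consecutive steps. Spans may overlap
    and are truncated at the end of the sequence.
    """
    rng = random.Random(seed)
    mask = [False] * num_steps
    for start in range(num_steps):
        if rng.random() < start_prob:
            for t in range(start, min(start + span_length, num_steps)):
                mask[t] = True
    return mask
```

Calling `sample_span_mask(500, seed=0)` yields a reproducible mask for a 500-step sequence; the masked positions are the ones at which the contrastive task is then solved.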

