Visualization Code · Issue #5 · thuml/Nonstationary_Transformers · GitHub

Issues · thuml/Nonstationary_Transformers · GitHub

Hello, we use the `stat` value returned by `arch.unitroot.ADF` to measure the degree of stationarity in our paper (ref). The prediction plots are all visualizations of the predicted series for the first variable of the ETTm2 dataset.

Non-stationary Transformers: this is the codebase for the paper "Non-stationary Transformers: Exploring the Stationarity in Time Series Forecasting" (NeurIPS 2022).
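To give a feel for the statistic mentioned in the reply above, here is a minimal sketch of a plain Dickey-Fuller t-statistic in pure Python. It is a simplified stand-in, not the `arch.unitroot.ADF` computation the authors use: it fits only a constant and the lagged level, with no lagged differences and no lag selection, so its values will not match those reported in the paper. The function name `dickey_fuller_stat` and the synthetic series are assumptions made for illustration.

```python
import math
import random


def dickey_fuller_stat(series):
    """t-statistic of gamma in: diff[t] = alpha + gamma * series[t-1] + noise.

    Simplified Dickey-Fuller regression (constant, no lagged differences).
    A more negative value suggests a more stationary series.
    """
    lagged = series[:-1]                                   # y[t-1]
    diffs = [b - a for a, b in zip(series[:-1], series[1:])]  # y[t] - y[t-1]
    n = len(lagged)
    if n < 3:
        raise ValueError("need at least 4 observations")

    mean_x = sum(lagged) / n
    mean_d = sum(diffs) / n
    sxx = sum((x - mean_x) ** 2 for x in lagged)
    sxy = sum((x - mean_x) * (d - mean_d) for x, d in zip(lagged, diffs))

    gamma = sxy / sxx                      # OLS slope on the lagged level
    alpha = mean_d - gamma * mean_x        # OLS intercept
    rss = sum((d - alpha - gamma * x) ** 2 for x, d in zip(lagged, diffs))
    se_gamma = math.sqrt(rss / (n - 2) / sxx)
    return gamma / se_gamma


# Synthetic data (hypothetical): white noise is stationary, its running sum is not.
rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in range(500)]
walk = []
level = 0.0
for step in noise:
    level += step
    walk.append(level)

stat_noise = dickey_fuller_stat(noise)
stat_walk = dickey_fuller_stat(walk)
print(f"white noise: {stat_noise:.2f}, random walk: {stat_walk:.2f}")
```

The white-noise series should give a much more negative statistic than the random walk, which is the sense in which a smaller statistic indicates a higher degree of stationarity.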

Our method consistently improves forecasting performance. Overall, it improves Transformer by 49.43% on average, Informer by 47.34%, Reformer by 46.89%, Autoformer by 10.57%, ETSformer by 5.17%, and FEDformer by 4.51%, so that each of these models surpasses the previous state of the art.

To address non-stationary time series forecasting, Non-stationary Transformers are proposed. They consist of a pair of complementary modules, Series Stationarization and De-stationary Attention, which can be applied broadly to the Transformer and its variants and consistently improve their forecasting results on non-stationary time series data.

This document introduces the Non-stationary Transformers framework, a system designed to improve time series forecasting by addressing non-stationarity in temporal data. Conclusion: Non-stationary Transformer is an effective and lightweight framework that can be widely applied to Transformer-based models and enhances their non-stationary predictability. Dataset: ETTm2.
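The Series Stationarization module named above can be sketched roughly as follows, assuming it normalizes each input window by its own mean and standard deviation over time and then restores those statistics on the model output. The helper names `stationarize` and `destationarize`, the `eps` value, and the sample window are assumptions for illustration, and the sketch handles a single variable in pure Python rather than batched tensors.

```python
import math


def stationarize(window, eps=1e-5):
    """Normalize one input window; return it with the statistics removed."""
    mean = sum(window) / len(window)
    var = sum((v - mean) ** 2 for v in window) / len(window)  # population variance
    std = math.sqrt(var + eps)             # eps guards against a constant window
    normalized = [(v - mean) / std for v in window]
    return normalized, mean, std


def destationarize(prediction, mean, std):
    """Restore the original scale and level on the model output."""
    return [p * std + mean for p in prediction]


# Hypothetical window of one variable.
window = [10.0, 12.0, 14.0, 16.0]
normalized, mean, std = stationarize(window)

# A real model would forecast in the normalized space; here the normalized
# input is passed straight through to show that the two steps are inverses.
restored = destationarize(normalized, mean, std)
print(mean, round(std, 4), [round(v, 4) for v in restored])
```

Because the statistics are computed per window, every window the model sees has roughly zero mean and unit variance, which is what makes the series easier to predict and also what removes information that the attention module is then meant to recover.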

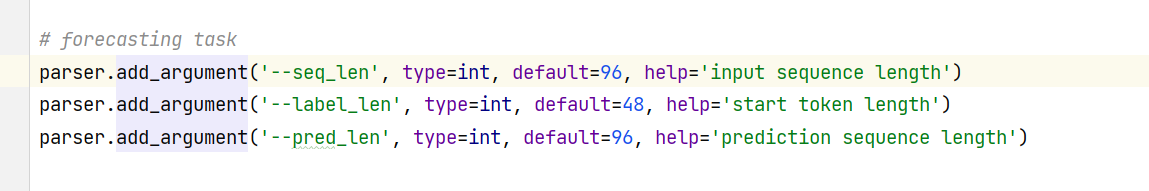

What does the step size mean here? · Issue #30 · thuml/Nonstationary_Transformers · GitHub

To explore the role of each module in the proposed framework, we compare the prediction results on ETTm2 obtained by three models: the vanilla Transformer, a Transformer with only Series Stationarization, and our Non-stationary Transformer. To tackle the dilemma between series predictability and model capability, we propose Non-stationary Transformers as a generic framework with two interdependent modules: Series Stationarization and De-stationary Attention.

Code release for "Non-stationary Transformers: Exploring the Stationarity in Time Series Forecasting" (NeurIPS 2022), https://arxiv.org/abs/2205.14415.
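As a rough illustration of the De-stationary Attention module named above, the sketch below assumes the attention form given in the paper, Softmax((τ·QKᵀ + 1Δᵀ)/√d_k)·V, where a positive scale τ and a per-key shift Δ are applied to scores computed on the stationarized series. In the paper τ and Δ are learned from the statistics of the raw series; here they are passed in as plain numbers, and the function name and toy inputs are hypothetical.

```python
import math


def softmax(row):
    peak = max(row)                        # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def de_stationary_attention(queries, keys, values, tau, delta):
    """Scaled dot-product attention with rescaled and shifted scores.

    tau   : positive scalar multiplying every query-key score
    delta : one shift per key, added to that key's score for every query
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        scores = [
            (tau * sum(qi * ki for qi, ki in zip(q, k)) + shift) / math.sqrt(d_k)
            for k, shift in zip(keys, delta)
        ]
        weights = softmax(scores)
        outputs.append([
            sum(w * v[c] for w, v in zip(weights, values))
            for c in range(len(values[0]))
        ])
    return outputs


# Toy inputs (hypothetical): one query, two keys, one value channel.
queries = [[1.0, 0.0]]
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[1.0], [0.0]]

plain = de_stationary_attention(queries, keys, values, tau=1.0, delta=[0.0, 0.0])
sharp = de_stationary_attention(queries, keys, values, tau=5.0, delta=[0.0, 0.0])
shifted = de_stationary_attention(queries, keys, values, tau=1.0, delta=[3.0, 3.0])
print(plain, sharp, shifted)
```

With `tau=1.0` and an all-zero `delta` this reduces to ordinary scaled dot-product attention. A larger `tau` sharpens the attention distribution, while a shift that is equal across keys leaves it unchanged, so only differences between the entries of `delta` matter.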

